eks-lambda-drainer is an Amazon EKS node drainer built with AWS Lambda. If you provision Spot Instances or a Spot Fleet in your Amazon EKS nodegroup, you can listen for the spot termination signal from CloudWatch Events 120 seconds prior to the final termination process. With this Lambda function configured as the CloudWatch Events target, eks-lambda-drainer performs taint-based eviction on the terminating node: all pods without a matching toleration are evicted and rescheduled to another node, so the spot instance termination has minimal impact on your workload.
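To make "pods without a matching toleration are evicted" concrete, here is a minimal sketch of the matching rule Kubernetes documents for taints and tolerations. It is not taken from the eks-lambda-drainer source: the `Taint` and `Toleration` types are simplified stand-ins for the Kubernetes ones, the taint key and value are hypothetical, and the `NoExecute` effect is assumed because that is the effect that evicts running pods. `tolerationSeconds` is ignored.

```go
package main

import "fmt"

// Taint and Toleration are simplified stand-ins for the Kubernetes core/v1
// types; only the fields needed for matching are kept.
type Taint struct {
	Key, Value, Effect string
}

type Toleration struct {
	Key      string
	Operator string // "Equal" (also the default when empty) or "Exists"
	Value    string
	Effect   string // an empty effect matches every taint effect
}

// tolerates reports whether one toleration matches the given taint.
func tolerates(tol Toleration, t Taint) bool {
	if tol.Effect != "" && tol.Effect != t.Effect {
		return false
	}
	if tol.Key != "" && tol.Key != t.Key {
		return false
	}
	switch tol.Operator {
	case "Exists":
		return true
	case "", "Equal":
		return tol.Value == t.Value
	default:
		return false
	}
}

// wouldBeEvicted reports whether a pod with these tolerations would be
// evicted from a node that carries the given taint. Only NoExecute taints
// evict pods that are already running.
func wouldBeEvicted(tols []Toleration, t Taint) bool {
	if t.Effect != "NoExecute" {
		return false
	}
	for _, tol := range tols {
		if tolerates(tol, t) {
			return false
		}
	}
	return true
}

func main() {
	// Hypothetical taint: the key and value the drainer applies are assumptions.
	taint := Taint{Key: "example.com/spot-terminating", Value: "true", Effect: "NoExecute"}

	plainPod := []Toleration{}
	tolerantPod := []Toleration{{Key: "example.com/spot-terminating", Operator: "Exists"}}

	fmt.Println("plain pod evicted:", wouldBeEvicted(plainPod, taint))
	fmt.Println("tolerant pod evicted:", wouldBeEvicted(tolerantPod, taint))
}
```

A pod that must stay on the node until the very end (a logging DaemonSet, for example) would need a toleration like the second one.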

- `git clone` to check out the repository locally and `cd` to the directory

- run `dep ensure -v` to install the required Go packages (you might need to install go dep first)

- edit `Makefile` and update the `S3TMPBUCKET` variable:

      # modify this to your private S3 bucket you have read/write access to
      S3TMPBUCKET ?= pahud-temp

- type `make world` to build, pack, package and deploy to Lambda

      pahud:~/go/src/eks-lambda-drainer (master) $ make world
      Checking dependencies...
      Building...
      Packing binary...
      updating: main (deflated 73%)
      sam packaging...
      Uploading to a33bb95c227378e21102db1274f5dffd 8423458 / 8423458.0 (100.00%)
      Successfully packaged artifacts and wrote output template to file sam-packaged.yaml.
      Execute the following command to deploy the packaged template
      aws cloudformation deploy --template-file /home/ec2-user/go/src/eks-lambda-drainer/sam-packaged.yaml --stack-name <YOUR STACK NAME>
      sam deploying...
      Waiting for changeset to be created..
      Waiting for stack create/update to complete
      Successfully created/updated stack - eks-lambda-drainer

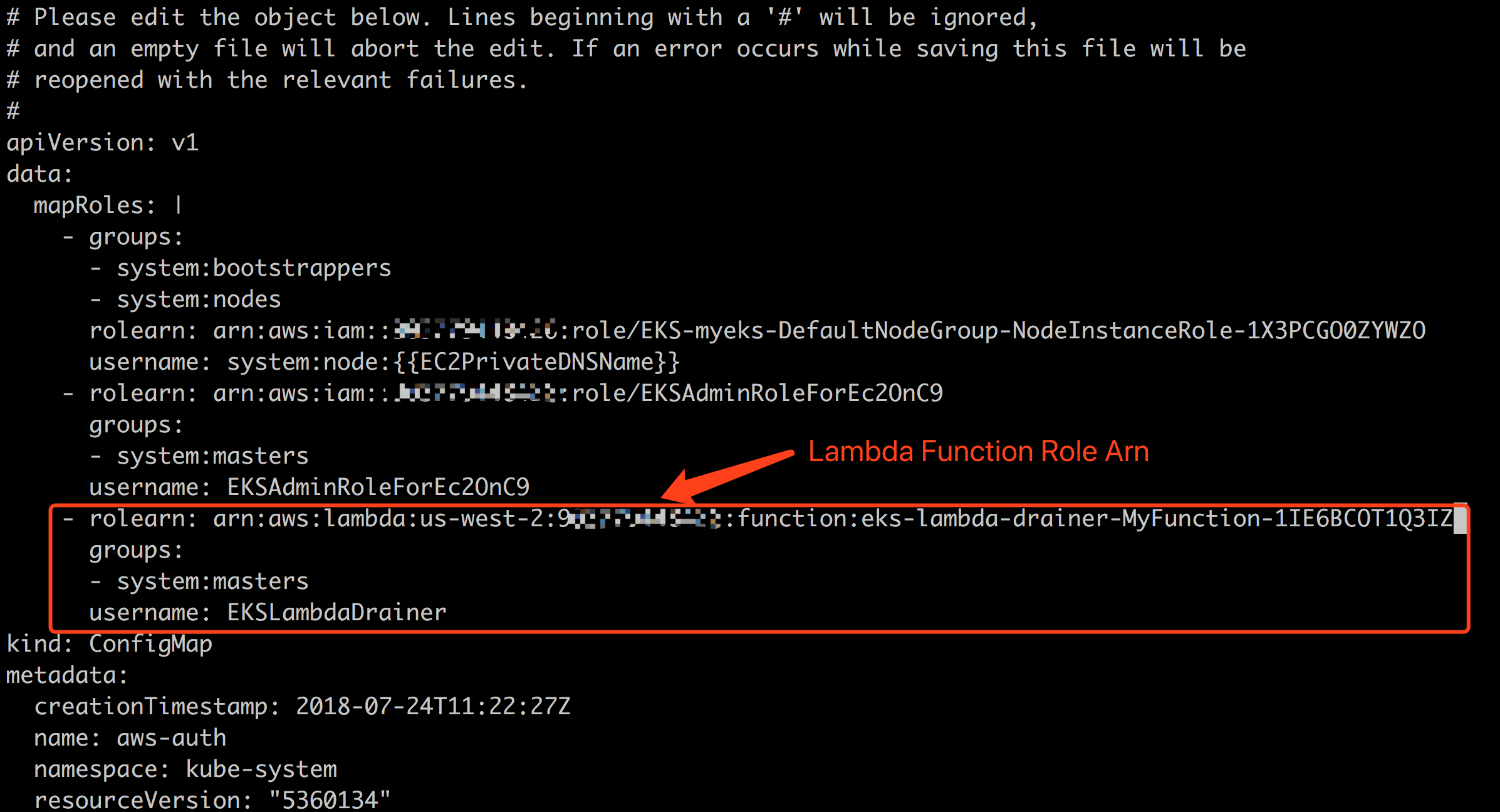

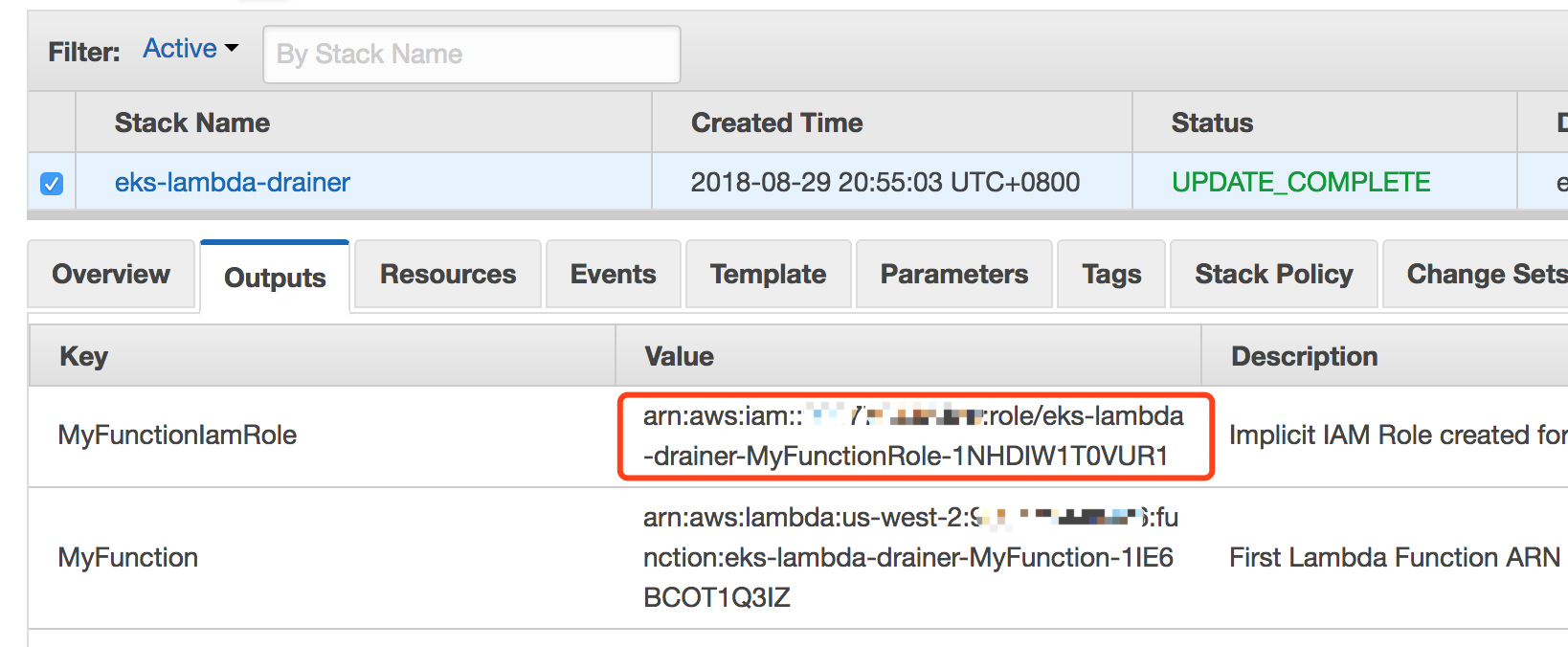

Read the Amazon EKS documentation on how to add an IAM role to the `aws-auth` ConfigMap.

Edit the `aws-auth` ConfigMap with:

    kubectl edit -n kube-system configmap/aws-auth

Then insert `rolearn`, `groups` and `username` into `mapRoles`, and make sure `groups` contains `system:masters`.

You can get the `rolearn` value from the Outputs tab of the CloudFormation console.

By creating your nodegroup with this CloudFormation template, your Auto Scaling group will have a LifecycleHook that publishes to a specific SNS topic, which in turn invokes eks-lambda-drainer to drain the pods from the terminating node. Your node first enters the `Terminating:Wait` state. After a pre-defined grace period (default: 10 seconds), eks-lambda-drainer sends `CompleteLifecycleAction` back to the hook, and the Auto Scaling group then moves on to the `Terminating:Proceed` phase to execute the real termination process. The pods on the terminating node are rescheduled to other node(s) within a few seconds, so the impact on your service should be close to zero.
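As an illustration of the first step in that flow, the sketch below decodes the JSON body that an Auto Scaling lifecycle hook publishes to SNS and checks whether it announces a terminating instance. It is a sketch under assumptions, not the project's handler: the field names follow the notification format documented for lifecycle hooks, while `lifecycleMessage`, `parseTerminating` and the sample values are hypothetical. Calling `CompleteLifecycleAction` through the AWS SDK is left out.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// lifecycleMessage holds the fields of a lifecycle hook notification that a
// drainer would need in order to find the node and complete the hook later.
type lifecycleMessage struct {
	LifecycleHookName    string `json:"LifecycleHookName"`
	AutoScalingGroupName string `json:"AutoScalingGroupName"`
	EC2InstanceID        string `json:"EC2InstanceId"`
	LifecycleActionToken string `json:"LifecycleActionToken"`
	LifecycleTransition  string `json:"LifecycleTransition"`
}

const terminatingTransition = "autoscaling:EC2_INSTANCE_TERMINATING"

// parseTerminating decodes the SNS message body and reports whether it
// describes an instance entering the Terminating:Wait state.
func parseTerminating(body string) (lifecycleMessage, bool, error) {
	var m lifecycleMessage
	if err := json.Unmarshal([]byte(body), &m); err != nil {
		return m, false, err
	}
	return m, m.LifecycleTransition == terminatingTransition, nil
}

func main() {
	// Sample body with placeholder values (all values are made up).
	body := `{
		"LifecycleHookName": "example-hook",
		"AutoScalingGroupName": "example-asg",
		"EC2InstanceId": "i-0123456789abcdef0",
		"LifecycleActionToken": "example-token",
		"LifecycleTransition": "autoscaling:EC2_INSTANCE_TERMINATING"
	}`

	msg, terminating, err := parseTerminating(body)
	if err != nil {
		fmt.Println("cannot decode message:", err)
		return
	}
	if terminating {
		// A real handler would drain the node here, wait for the grace
		// period, and then call CompleteLifecycleAction with the hook name,
		// group name and token carried in msg.
		fmt.Println("drain node for instance", msg.EC2InstanceID)
	}
}
```

Messages that are not termination events, such as the test notification sent when a hook is first created, simply return `false` and can be ignored.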

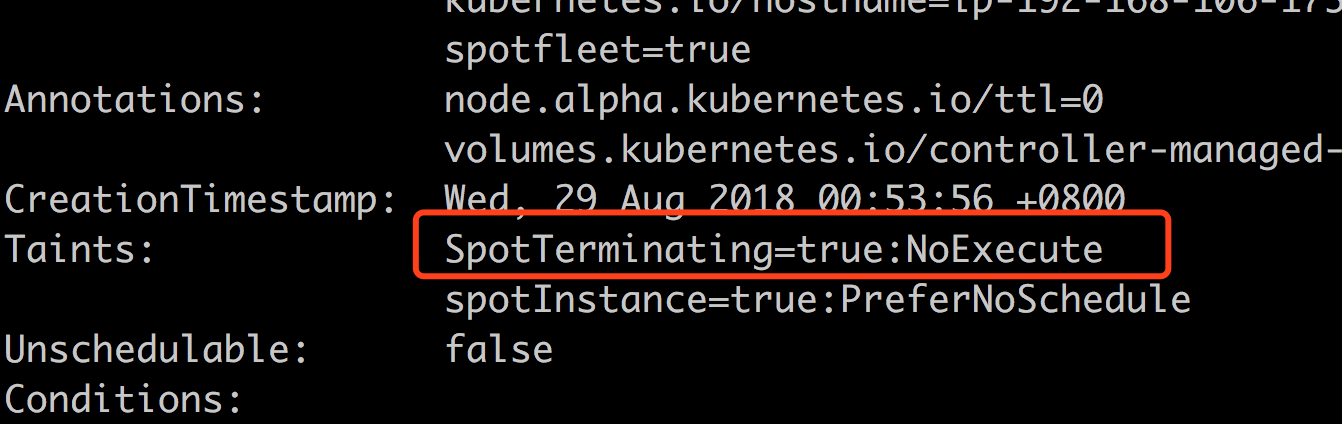

Try `kubectl describe` on this node to see the taints on it.

- package the Lambda function in AWS SAM format

- publish to AWS Serverless Application Repository

- ASG/LifeCycle integration #2

- add more samples

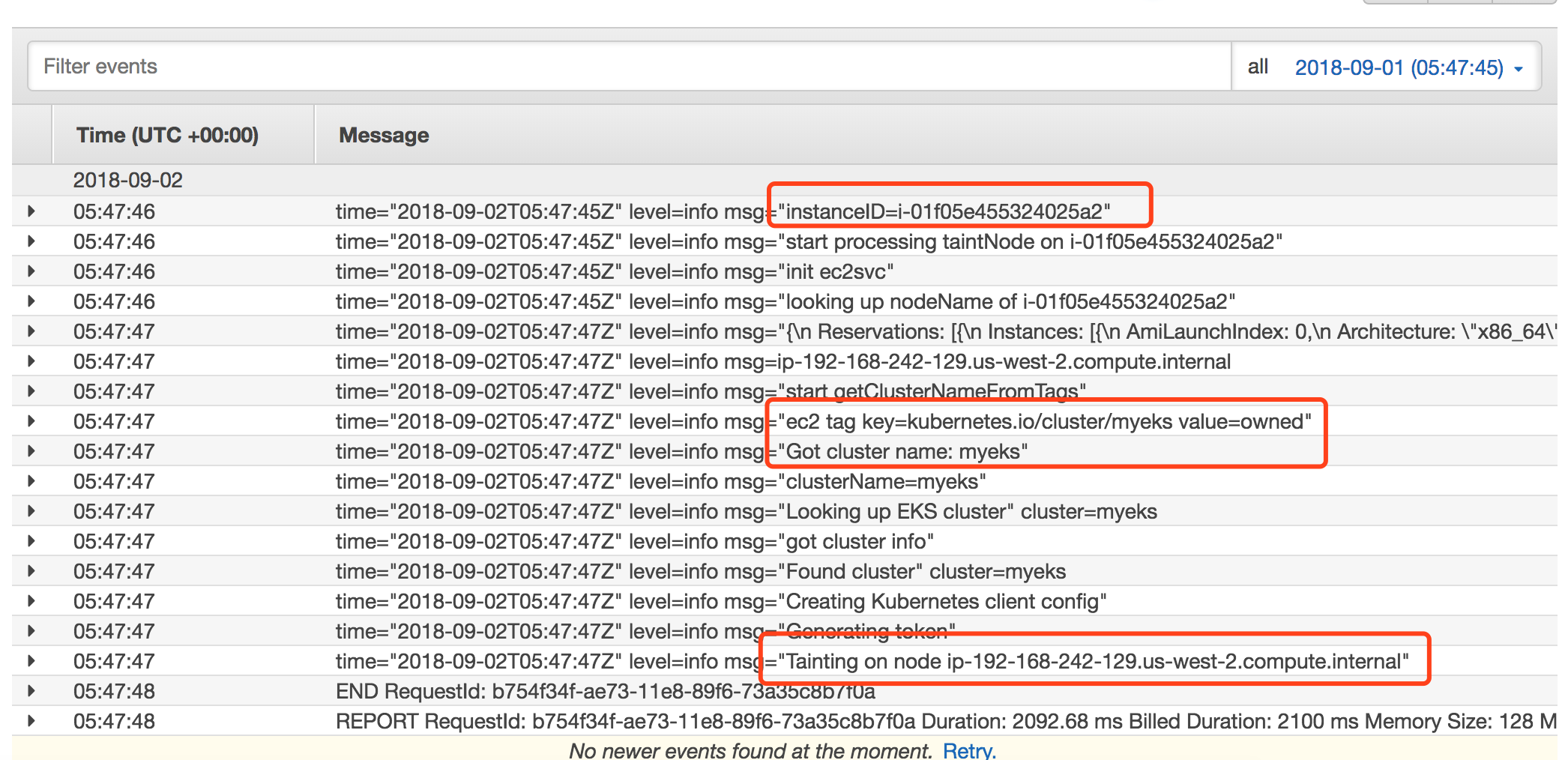

ANS: No, eks-lambda-drainer determines the Amazon EKS cluster name from the EC2 tags (key=`kubernetes.io/cluster/{CLUSTER_NAME}` with value=`owned`). You need only a single Lambda function to handle all spot instances from different nodegroups in different Amazon EKS clusters.
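The tag lookup described in the answer can be sketched as follows. This is an illustration, not the project's code: `clusterNameFromTags` is a hypothetical helper, and the tags are passed as a plain map rather than fetched from the EC2 API.

```go
package main

import (
	"fmt"
	"strings"
)

// clusterTagPrefix is the tag key prefix described above; the rest of the
// key is the cluster name.
const clusterTagPrefix = "kubernetes.io/cluster/"

// clusterNameFromTags returns the cluster name encoded in a tag of the form
// kubernetes.io/cluster/{CLUSTER_NAME}=owned, or false if no such tag exists.
func clusterNameFromTags(tags map[string]string) (string, bool) {
	for key, value := range tags {
		if !strings.HasPrefix(key, clusterTagPrefix) || value != "owned" {
			continue
		}
		if name := strings.TrimPrefix(key, clusterTagPrefix); name != "" {
			return name, true
		}
	}
	return "", false
}

func main() {
	// Example tags with made-up values.
	tags := map[string]string{
		"Name":                             "example-node",
		"kubernetes.io/cluster/my-cluster": "owned",
	}
	if name, ok := clusterNameFromTags(tags); ok {
		fmt.Println("cluster:", name)
	}
}
```

Because the cluster name travels with each instance, the same function can serve nodes from any number of clusters without per-cluster configuration.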