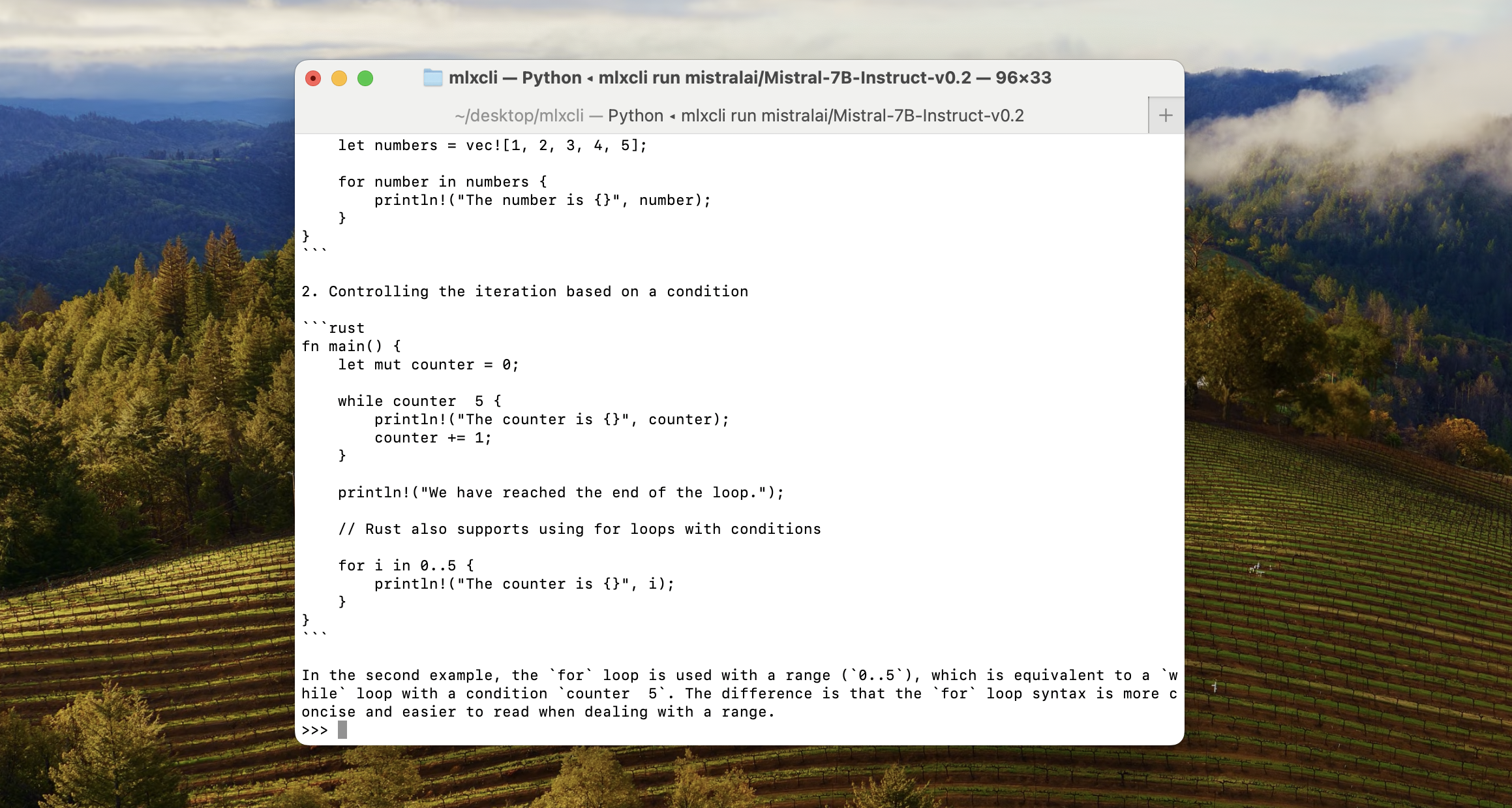

Run large language models locally from the terminal using MLX.

Features:

- Run language models directly from the terminal.

- Optimized for Apple Silicon using MLX.

- Verbose output that reports tokens per second (TPS) and elapsed time.

- Chat with context of your previous messages in the terminal.
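As a rough illustration of what "chat with context" means, here is a minimal sketch that keeps a running message history and passes all of it to the model on every turn. `ChatSession`, `generate_fn`, and `echo_model` are hypothetical names made up for this example. They are not part of mlxcli or mlx_lm, and the real tool may manage context differently.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class ChatSession:
    """Keeps the running conversation so each reply can see earlier turns."""

    def __init__(self, generate_fn: Callable[[List[Message]], str]) -> None:
        # generate_fn is a hypothetical stand-in for the actual model call.
        self.generate_fn = generate_fn
        self.history: List[Message] = []

    def send(self, user_text: str) -> str:
        # Record the user turn, then hand the full history to the model.
        self.history.append({"role": "user", "content": user_text})
        reply = self.generate_fn(self.history)
        # Record the reply so the next turn includes it as context.
        self.history.append({"role": "assistant", "content": reply})
        return reply


def echo_model(messages: List[Message]) -> str:
    """Toy model: reports how many user turns it can see."""
    user_turns = sum(1 for m in messages if m["role"] == "user")
    return f"I can see {user_turns} user message(s)."


if __name__ == "__main__":
    session = ChatSession(echo_model)
    print(session.send("Hello"))
    print(session.send("What did I just say?"))
```

Swapping `echo_model` for a function that calls a real model would leave the history handling unchanged.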

Install using pip:

`pip install mlxcli`

Select the model you want to use. You can browse all models on the Hugging Face mlx-community 🤗 page.

`mlxcli run mistralai/Mistral-7B-Instruct-v0.2`

Popular Models:

- Mistral 7B Instruct:

  `mlxcli run mistralai/Mistral-7B-Instruct-v0.2`

- Nous Research Mistral 7B 4-bit quant:

  `mlxcli run mlx-community/Nous-Hermes-2-Mistral-7B-DPO-4bit-MLX`

- Hermes 2 Pro Mistral 7B 8-bit quant:

  `mlxcli run mlx-community/Hermes-2-Pro-Mistral-7B-8bit`

Requirements:

- Using an M series chip (Apple silicon)

- Using a native Python >= 3.8

- macOS >= 13.5
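If you are unsure whether your machine meets these requirements, a small standard-library script along these lines can check them. This is only a sketch: `check_requirements` and `parse_version` are names invented for this example, and the check assumes that a native Python on Apple silicon reports `arm64` from `platform.machine()`, while one running under Rosetta reports `x86_64`.

```python
import platform
import sys


def parse_version(text: str) -> tuple:
    """Turn '13.5.1' into (13, 5, 1); non-numeric parts are ignored."""
    return tuple(int(p) for p in text.split(".") if p.isdigit())


def check_requirements(system, machine, mac_version, python_version):
    """Return a list of problems; an empty list means every check passed."""
    problems = []
    if system != "Darwin":
        problems.append("not running macOS")
    if machine != "arm64":
        problems.append("Python is not running natively on Apple silicon")
    if parse_version(mac_version) < (13, 5):
        problems.append("macOS is older than 13.5")
    if tuple(python_version[:2]) < (3, 8):
        problems.append("Python is older than 3.8")
    return problems


if __name__ == "__main__":
    issues = check_requirements(
        platform.system(),
        platform.machine(),
        platform.mac_ver()[0],  # empty string on non-macOS systems
        sys.version_info,
    )
    print("OK" if not issues else "\n".join(issues))
```

Run it with the same `python` you plan to install mlxcli into, since the architecture check describes that interpreter and not just the hardware.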

- Verbose: Include the tokens per second and time elapsed for each prompt using `/verbose`. You can also disable it by running the command again!
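For reference, tokens per second is the number of generated tokens divided by the wall-clock time the generation took. The sketch below shows that arithmetic on a fake token stream. `timed_generation`, `tokens_per_second`, and `fake_stream` are hypothetical helpers for illustration, not mlxcli internals, and mlxcli may measure its numbers differently.

```python
import time
from typing import Iterable, Tuple


def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Generated tokens divided by elapsed time; 0.0 if no time was measured."""
    if elapsed_seconds <= 0:
        return 0.0
    return token_count / elapsed_seconds


def timed_generation(token_stream: Iterable[str]) -> Tuple[str, int, float]:
    """Consume a token stream and return (text, token count, elapsed seconds)."""
    start = time.perf_counter()
    tokens = list(token_stream)  # pulls every token from the generator
    elapsed = time.perf_counter() - start
    return "".join(tokens), len(tokens), elapsed


def fake_stream():
    """Stand-in for a model that yields tokens one at a time."""
    for tok in ["Hello", ",", " world"]:
        yield tok


if __name__ == "__main__":
    text, count, elapsed = timed_generation(fake_stream())
    tps = tokens_per_second(count, elapsed)
    print(text)
    print(f"{count} tokens in {elapsed:.4f}s ({tps:.1f} TPS)")
```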

If `mlxcli run` fails with `zsh: command not found: mlxcli`:

- Locate the path using

  `pip show mlxcli`

- Open up zshrc using

  `vim ~/.zshrc`

- Add the path to zshrc

  `export PATH="{path_from_pip_show}:$PATH"`

- Reload and apply changes:

  `source ~/.zshrc`
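To help with the steps above, the following sketch checks whether the shell can already find `mlxcli` and, if not, prints the directory where the current interpreter's pip normally places command-line scripts. It uses only the standard library. `scripts_dir` and `is_on_path` are names chosen for this example, and the script assumes a regular (non `--user`) install into the interpreter you run it with. A `--user` install uses a different directory.

```python
import os
import shutil
import sysconfig


def scripts_dir() -> str:
    """Directory where this interpreter's pip places console scripts."""
    return sysconfig.get_path("scripts")


def is_on_path(directory: str, path_value: str) -> bool:
    """True if directory appears as an entry of a PATH-style string."""
    entries = [os.path.normpath(p) for p in path_value.split(os.pathsep) if p]
    return os.path.normpath(directory) in entries


if __name__ == "__main__":
    found = shutil.which("mlxcli")
    if found:
        print(f"mlxcli found at {found}")
    else:
        target = scripts_dir()
        print(f"mlxcli not found; scripts directory is {target}")
        if not is_on_path(target, os.environ.get("PATH", "")):
            # Suggest the line to add to ~/.zshrc.
            print(f'export PATH="{target}:$PATH"')
```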

This library was created by Mustafa Aljadery & Siddharth Sharma. Contact us if you would like to contribute!

This was created using Hugging Face mlx_lm, shoutout to 🤗, and Apple MLX, shoutout to Awni Hannun.