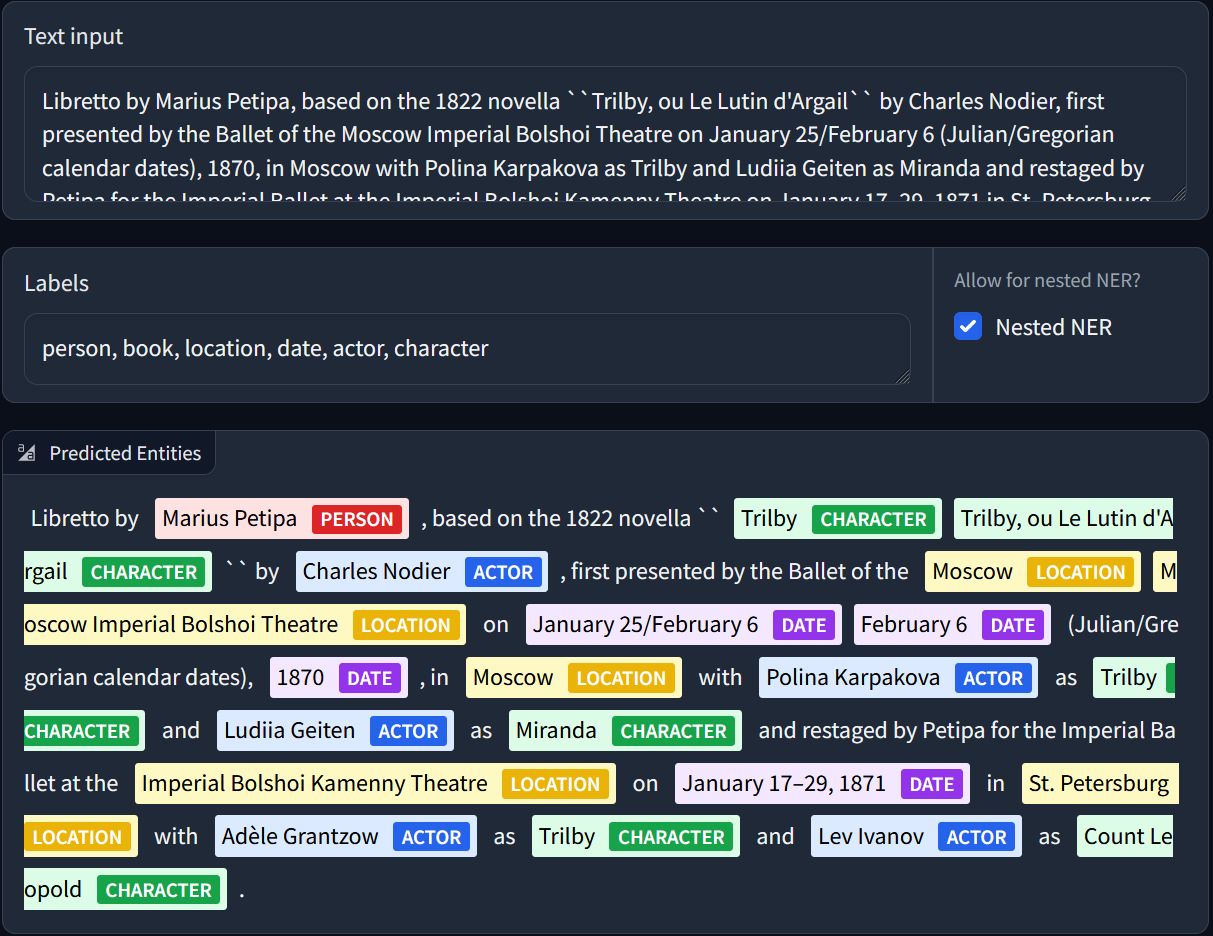

GLiNER is a Named Entity Recognition (NER) model capable of identifying any entity type using a bidirectional transformer encoder (BERT-like). It provides a practical alternative to traditional NER models, which are limited to predefined entity types, and to Large Language Models (LLMs), which are flexible but too large and costly for resource-constrained scenarios.

Paper: https://arxiv.org/abs/2311.08526 (by Urchade Zaratiana, Nadi Tomeh, Pierre Holat, Thierry Charnois)

Colab: https://colab.research.google.com/drive/1mhalKWzmfSTqMnR0wQBZvt9-ktTsATHB?usp=sharing

    conda create -n glirel python=3.10 -y && conda activate glirel
    cd GLiNER && pip install -e .

    # few_rel
    cd data
    python process_few_rel.py
    cd ..
    # adjust config
    python train.py --config config_few_rel.yaml --log_dir logs-few-rel --relation_extraction

    # wiki_zsl
    cd data
    curl -L -o wiki_all.json 'https://drive.google.com/uc?export=download&id=1ELFGUIYDClmh9GrEHjFYoE_VI1t2a5nK'
    python process_wiki_zsl.py
    cd ..
    # adjust config
    python train.py --config config_wiki_zsl.yaml --log_dir logs-wiki-zsl --relation_extraction

JSONL file:

    {
      "ner": [
        [7, 8, "Q4914513", "Binsey"],
        [11, 13, "Q19686", "River Thames"]
      ],
      "relations": [
        {
          "head": {"mention": "Binsey", "position": [7, 8], "type": "Q4914513"},
          "tail": {"mention": "River Thames", "position": [11, 13], "type": "Q19686"},
          "relation_id": "P206",
          "relation_text": "located in or next to body of water"
        }
      ],
      "tokenized_text": ["The", "race", "took", "place", "between", "Godstow", "and", "Binsey", "along", "the", "Upper", "River", "Thames", "."]
    },
    {
      "ner": [
        [9, 11, "Q4386693", "Legislative Assembly"],
        [1, 4, "Q1848835", "Parliament of Victoria"]
      ],
      "relations": [
        {
          "head": {"mention": "Legislative Assembly", "position": [9, 11], "type": "Q4386693"},
          "tail": {"mention": "Parliament of Victoria", "position": [1, 4], "type": "Q1848835"},
          "relation_id": "P361",
          "relation_text": "part of"
        }
      ],
      "tokenized_text": ["The", "Parliament", "of", "Victoria", "consists", "of", "the", "lower", "house", "Legislative", "Assembly", ",", "the", "upper", "house", "Legislative", "Council", "and", "the", "Queen", "of", "Australia", "."]
    }
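In both sample records the span positions appear to be token indices with an exclusive end: `[7, 8]` selects `tokenized_text[7:8]`, which is "Binsey". A minimal sketch for sanity-checking a record before training, assuming that convention holds for every record (`check_record` is a hypothetical helper, not part of this repository):

```python
import json


def check_record(record):
    """Return (annotated mention, covered tokens) pairs that do not match."""
    tokens = record["tokenized_text"]
    problems = []
    # Each "ner" entry is [start, end, type_id, mention]; end is assumed exclusive.
    for start, end, _type_id, mention in record["ner"]:
        covered = " ".join(tokens[start:end])
        if covered != mention:
            problems.append((mention, covered))
    # Relation heads and tails carry their own copy of the span.
    for relation in record["relations"]:
        for role in ("head", "tail"):
            start, end = relation[role]["position"]
            covered = " ".join(tokens[start:end])
            if covered != relation[role]["mention"]:
                problems.append((relation[role]["mention"], covered))
    return problems


# One JSONL line, copied from the first sample record.
line = (
    '{"ner": [[7, 8, "Q4914513", "Binsey"], [11, 13, "Q19686", "River Thames"]], '
    '"relations": [{"head": {"mention": "Binsey", "position": [7, 8], "type": "Q4914513"}, '
    '"tail": {"mention": "River Thames", "position": [11, 13], "type": "Q19686"}, '
    '"relation_id": "P206", "relation_text": "located in or next to body of water"}], '
    '"tokenized_text": ["The", "race", "took", "place", "between", "Godstow", "and", '
    '"Binsey", "along", "the", "Upper", "River", "Thames", "."]}'
)
record = json.loads(line)
print(check_record(record))  # an empty list means every span matches its mention
```

Running the same check over every line of a processed file is a cheap way to catch off-by-one span errors early.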

- `gliner_mediumv2.1` is available under the Apache 2.0 license. It should be better/on par with `gliner_base` and `gliner_medium`.

- 📝 Finetuning notebook is available: examples/finetune.ipynb

- 🗂 Training dataset preprocessing scripts are now available in the `data/` directory, covering both Pile-NER 📚 and NuNER 📘 datasets.

- GLiNER-Base (CC BY NC 4.0)

- GLiNER-Multi (CC BY NC 4.0)

- GLiNER-small (CC BY NC 4.0)

- GLiNER-small-v2 (Apache)

- GLiNER-medium (CC BY NC 4.0)

- GLiNER-medium-v2 (Apache)

- GLiNER-large (CC BY NC 4.0)

- GLiNER-large-v2 (Apache)

- ⏳ GLiNER-Multiv2

- ⏳ GLiNER-Sup (trained on mixture of NER datasets)

- Allow longer context (e.g. train with long-context transformers such as Longformer, LED, etc.)

- Use a bi-encoder (entity encoder and span encoder) so that entity embeddings can be precomputed

- Filtering mechanism to reduce the number of spans before final classification, to save memory and computation when the number of entity types is large

- Improve understanding of more detailed prompts/instructions, e.g. "Find the first name of the person in the text"

- Better loss function: for instance, use `Focal Loss` (see this paper) instead of `BCE` to handle class imbalance, as some entity types are more frequent than others

- Improve multilingual capabilities: train on more languages, and use multilingual training data

- Decoding: allow a span to have multiple labels, e.g. "Cristiano Ronaldo" is both a "person" and a "football player"

- Dynamic thresholding (in `model.predict_entities(text, labels, threshold=0.5)`): allow the model to predict more or fewer entities depending on the context. Currently, the model tends to predict fewer entities when the entity type or the domain is not well represented in the training data.

- Train with EMAs (Exponential Moving Averages) or merge multiple checkpoints to improve model robustness (see this paper)

- Extend the model to relation extraction, which requires a dataset with relation annotations. Our preliminary work: ATG.
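To illustrate the loss-function item above, here is a minimal sketch comparing binary cross-entropy with the standard binary focal loss, which scales each term by `(1 - p_t) ** gamma` so that confidently correct predictions contribute less. This is not code from this repository, and the `gamma` and `alpha` values are commonly used defaults chosen for illustration, not settings used by GLiNER.

```python
import math


def bce(p, y):
    """Binary cross-entropy for a predicted probability p and a label y in {0, 1}."""
    p_t = p if y == 1 else 1.0 - p  # probability assigned to the true class
    return -math.log(p_t)


def focal_loss(p, y, gamma=2.0, alpha=0.25):
    """Binary focal loss: BCE down-weighted by (1 - p_t) ** gamma and class weight alpha_t."""
    p_t = p if y == 1 else 1.0 - p
    alpha_t = alpha if y == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


# An easy negative (frequent case) versus a hard positive (rare entity type).
examples = [("easy negative", 0.05, 0), ("hard positive", 0.2, 1)]
for name, p, y in examples:
    print(f"{name}: bce={bce(p, y):.4f} focal={focal_loss(p, y):.6f}")
```

With `gamma=0` and `alpha=0.5` the focal loss reduces to half the BCE value, so the two can be compared directly by varying `gamma`.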

To use this model, you must install the GLiNER Python library:

    !pip install gliner

Once you've installed the GLiNER library, you can import the `GLiNER` class. You can then load this model using `GLiNER.from_pretrained` and predict entities with `predict_entities`.

    from gliner import GLiNER

    model = GLiNER.from_pretrained("urchade/gliner_base")

    text = """
    Cristiano Ronaldo dos Santos Aveiro (Portuguese pronunciation: [kɾiʃˈtjɐnu ʁɔˈnaldu]; born 5 February 1985) is a Portuguese professional footballer who plays as a forward for and captains both Saudi Pro League club Al Nassr and the Portugal national team. Widely regarded as one of the greatest players of all time, Ronaldo has won five Ballon d'Or awards,[note 3] a record three UEFA Men's Player of the Year Awards, and four European Golden Shoes, the most by a European player. He has won 33 trophies in his career, including seven league titles, five UEFA Champions Leagues, the UEFA European Championship and the UEFA Nations League. Ronaldo holds the records for most appearances (183), goals (140) and assists (42) in the Champions League, goals in the European Championship (14), international goals (128) and international appearances (205). He is one of the few players to have made over 1,200 professional career appearances, the most by an outfield player, and has scored over 850 official senior career goals for club and country, making him the top goalscorer of all time.
    """

    labels = ["person", "award", "date", "competitions", "teams"]

    entities = model.predict_entities(text, labels, threshold=0.5)

    for entity in entities:
        print(entity["text"], "=>", entity["label"])

Output:

    Cristiano Ronaldo dos Santos Aveiro => person
    5 February 1985 => date
    Al Nassr => teams
    Portugal national team => teams
    Ballon d'Or => award
    UEFA Men's Player of the Year Awards => award
    European Golden Shoes => award
    UEFA Champions Leagues => competitions
    UEFA European Championship => competitions
    UEFA Nations League => competitions
    Champions League => competitions
    European Championship => competitions
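The example above reads the `"text"` and `"label"` keys of each returned entity. As a small follow-up sketch, the entities can be grouped by label for easier inspection. The `group_by_label` helper is hypothetical (not part of the GLiNER library), and the sample list is hand-written from the output above so the snippet runs without loading a model.

```python
from collections import defaultdict


def group_by_label(entities):
    """Group entity texts by label, keeping first-seen order and dropping duplicates."""
    grouped = defaultdict(list)
    for entity in entities:
        mentions = grouped[entity["label"]]
        if entity["text"] not in mentions:
            mentions.append(entity["text"])
    return dict(grouped)


# Hand-written stand-in for the list returned by model.predict_entities(...).
sample = [
    {"text": "Cristiano Ronaldo dos Santos Aveiro", "label": "person"},
    {"text": "Al Nassr", "label": "teams"},
    {"text": "Portugal national team", "label": "teams"},
    {"text": "Ballon d'Or", "label": "award"},
]

for label, mentions in group_by_label(sample).items():
    print(f"{label}: {', '.join(mentions)}")
```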

You can also use GLiNER with spaCy through the gliner-spacy library. To install it, use pip:

    pip install gliner-spacy

Once installed, you can load GLiNER into a regular NLP pipeline. Here's an example using a blank English pipeline, but you can use any spaCy model.

    import spacy
    from gliner_spacy.pipeline import GlinerSpacy

    nlp = spacy.blank("en")
    nlp.add_pipe("gliner_spacy")

    text = "This is a text about Bill Gates and Microsoft."
    doc = nlp(text)

    for ent in doc.ents:
        print(ent.text, ent.label_)

Output:

    Bill Gates person
    Microsoft organization

The model authors are:

- Urchade Zaratiana

- Nadi Tomeh

- Pierre Holat

- Thierry Charnois

@misc{zaratiana2023gliner,
  title={GLiNER: Generalist Model for Named Entity Recognition using Bidirectional Transformer},
  author={Urchade Zaratiana and Nadi Tomeh and Pierre Holat and Thierry Charnois},
  year={2023},
  eprint={2311.08526},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}