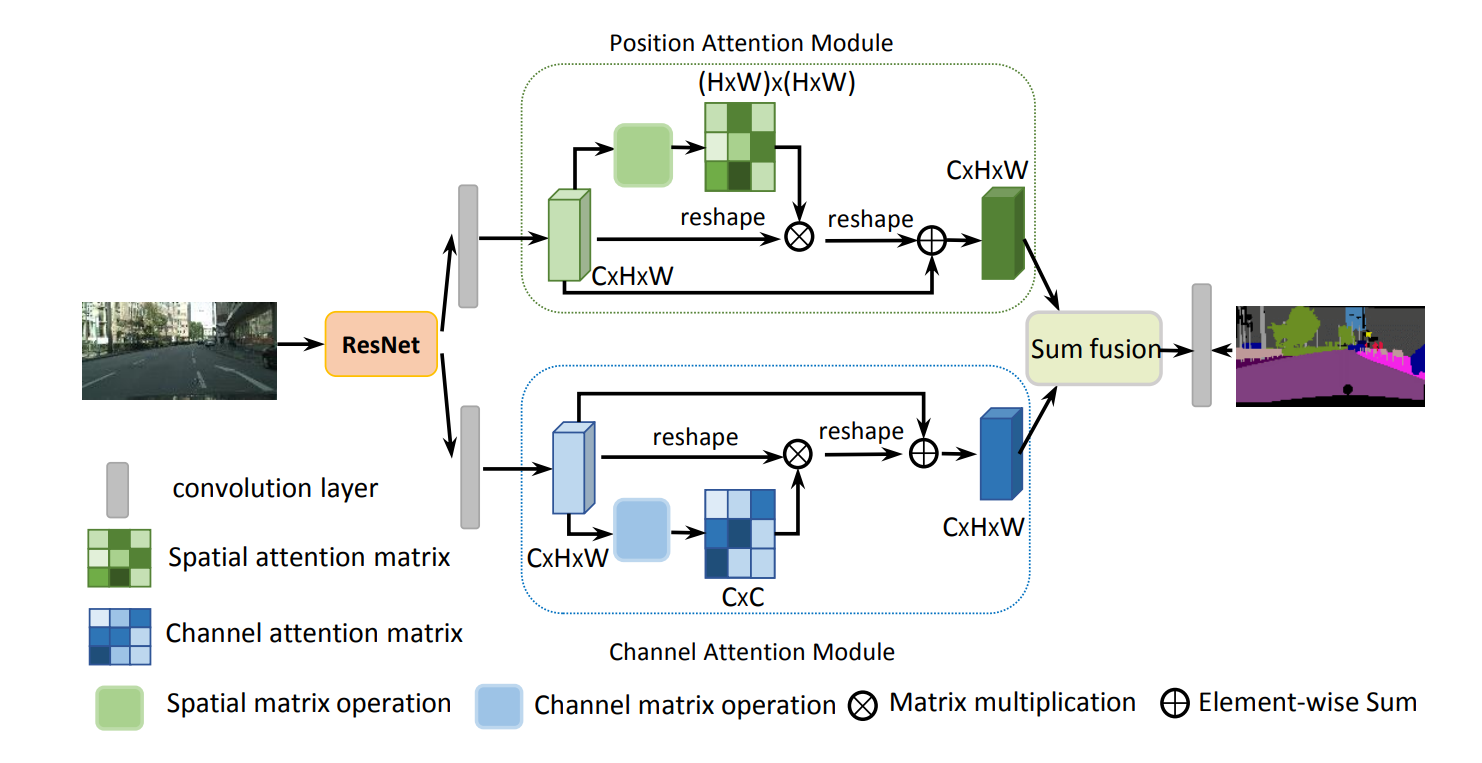

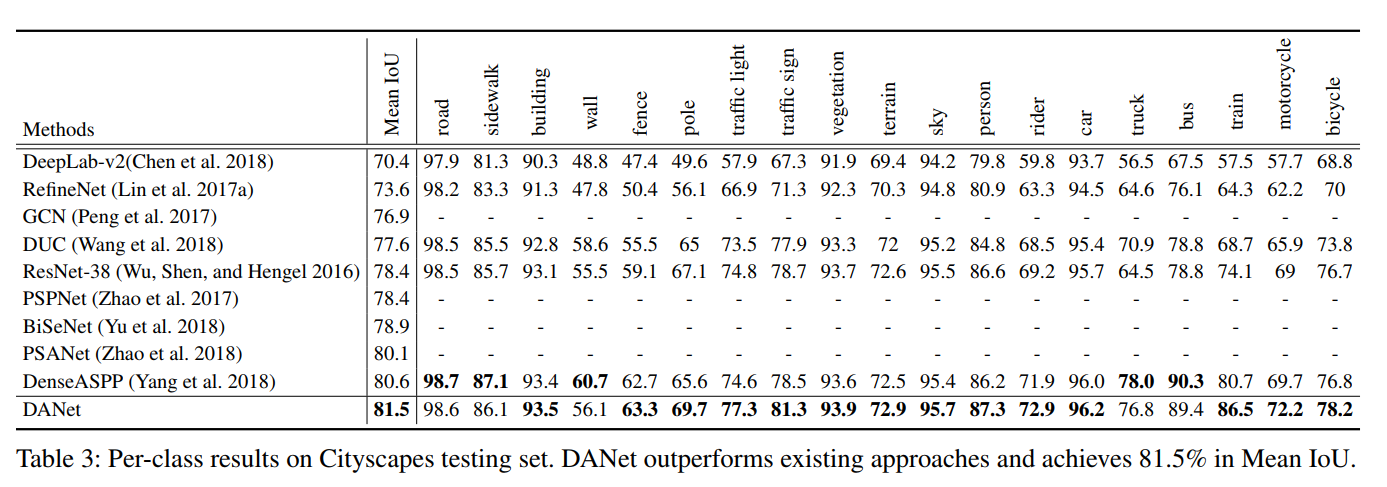

We propose a Dual Attention Network (DANet) that uses the self-attention mechanism to adaptively integrate local features with their global dependencies. DANet achieves new state-of-the-art segmentation performance on three challenging scene segmentation datasets: Cityscapes, PASCAL Context, and COCO Stuff-10k.
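To make the idea of combining local features with global dependencies concrete, here is a minimal, dependency-free sketch of generic self-attention over the spatial positions of a feature map. It is an illustration only, not the DANet implementation: the function names, the `gamma` weighting parameter, and the use of raw dot products in place of learned projections are all assumptions made for this example.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def spatial_self_attention(features, gamma=1.0):
    """Mix every position's feature vector with all other positions.

    features: list of N positions, each a list of C floats
              (a flattened H x W feature map).
    gamma:    hypothetical scalar that weights the attended (global) term.
    """
    out = []
    for query in features:
        # Similarity of this position to every position (dot product).
        scores = [sum(q * k for q, k in zip(query, key)) for key in features]
        weights = softmax(scores)
        # Global context: attention-weighted sum over all positions.
        context = [
            sum(w * value[c] for w, value in zip(weights, features))
            for c in range(len(query))
        ]
        # Residual sum keeps the local feature and adds the global context.
        out.append([gamma * g + x for g, x in zip(context, query)])
    return out


# A flattened 2x2 feature map with 2 channels per position (made-up values).
feature_map = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
fused = spatial_self_attention(feature_map, gamma=0.5)
```

With `gamma=0` the output equals the input, so the attended term can be read as a global correction added on top of each local feature.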

We train DANet-101 using only the finely annotated data and submit our test results to the official evaluation server.

@article{fu2018dual,
  title={Dual Attention Network for Scene Segmentation},
  author={Fu, Jun and Liu, Jing and Tian, Haijie and Fang, Zhiwei and Lu, Hanqing},
  journal={arXiv preprint arXiv:1809.02983},
  year={2018}
}