This is a (re-)implementation of DeepLab-ResNet in TensorFlow for semantic image segmentation on the PASCAL VOC dataset.

The DeepLab-ResNet is built on a fully convolutional variant of ResNet-101 with atrous (dilated) convolutions to increase the field-of-view, atrous spatial pyramid pooling, and multi-scale inputs (not implemented here).
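To illustrate how atrous convolution enlarges the field-of-view without adding weights, here is a minimal pure-Python sketch of a 1-D dilated convolution. The names `effective_kernel_size` and `atrous_conv1d` are illustrative and not part of this repository; the actual model applies the 2-D equivalent inside TensorFlow.

```python
def effective_kernel_size(kernel_size, rate):
    """Number of input positions spanned by a kernel dilated by `rate`."""
    return kernel_size + (kernel_size - 1) * (rate - 1)


def atrous_conv1d(signal, kernel, rate=1):
    """'Valid' 1-D atrous correlation: kernel taps are `rate` samples apart."""
    span = effective_kernel_size(len(kernel), rate)
    out = []
    for start in range(len(signal) - span + 1):
        out.append(sum(w * signal[start + i * rate] for i, w in enumerate(kernel)))
    return out


signal = [1, 2, 3, 4, 5, 6, 7]
kernel = [1, 0, -1]  # same three weights for every rate

print(effective_kernel_size(3, 1))            # 3
print(effective_kernel_size(3, 2))            # 5
print(atrous_conv1d(signal, kernel, rate=1))  # [-2, -2, -2, -2, -2]
print(atrous_conv1d(signal, kernel, rate=2))  # [-4, -4, -4]
```

With `rate=2` the same three weights cover five input samples, which is the effect the dilated layers rely on.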

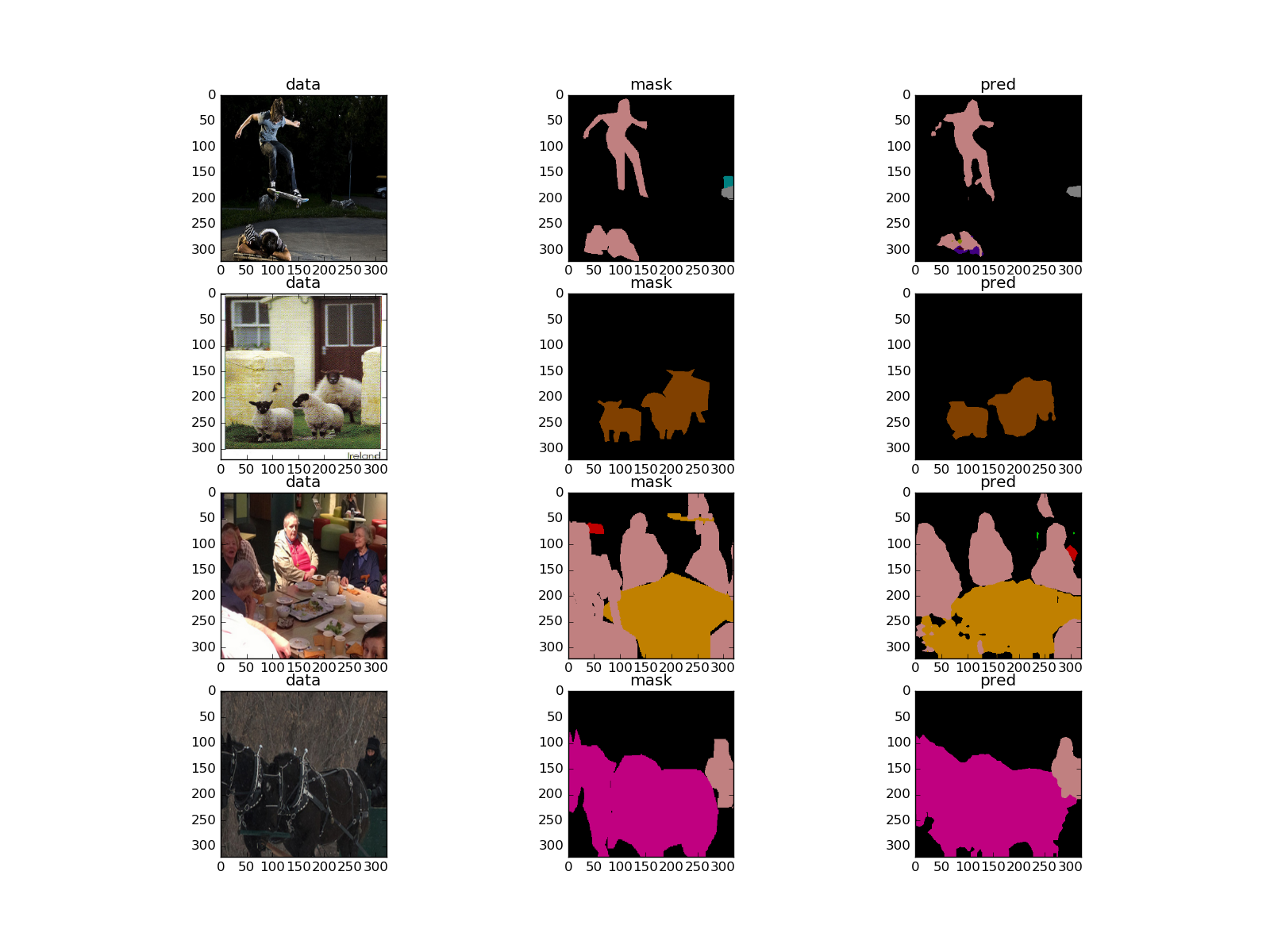

The model is trained on mini-batches of images and their corresponding ground truth masks, with a softmax classifier on top. During training, the masks are downsampled to match the size of the network output; during inference, bilinear upsampling is applied so that the output has the same size as the input. The final segmentation mask is obtained by taking the argmax over the unnormalised log scores from the network.
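The mask handling described above can be sketched in plain Python. This is a sketch under assumptions: nearest-neighbour sampling is assumed for shrinking the ground truth (so that labels stay valid class indices rather than being blended), and `downsample_mask`, `argmax_mask` and `softmax` are hypothetical helper names, not functions from this repository. Bilinear upsampling is omitted for brevity.

```python
import math


def downsample_mask(mask, out_h, out_w):
    """Nearest-neighbour resize of a 2-D label mask given as a list of rows."""
    in_h, in_w = len(mask), len(mask[0])
    return [
        [mask[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]


def argmax_mask(scores):
    """Per-pixel argmax; scores[r][c] is a list with one score per class."""
    return [[max(range(len(px)), key=px.__getitem__) for px in row] for row in scores]


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


mask = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [2, 2, 3, 3],
    [2, 2, 3, 3],
]
print(downsample_mask(mask, 2, 2))  # [[0, 1], [2, 3]]

scores = [[[2.0, -1.0, 0.5], [0.1, 0.3, 0.2]]]  # 1 x 2 pixels, 3 classes
print(argmax_mask(scores))  # [[0, 1]]

# Softmax preserves the ordering of the scores, so the argmax over raw
# scores matches the argmax over the normalised probabilities.
probs = [[softmax(px) for px in row] for row in scores]
print(argmax_mask(probs) == argmax_mask(scores))  # True
```

The last check is why the final mask can be read directly from the unnormalised scores without applying the softmax at inference time.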

Optionally, a fully-connected probabilistic graphical model, namely a conditional random field (CRF), can be applied to refine the final predictions.

On the PASCAL VOC test set, the model achieves 79.7% mean intersection-over-union (mIoU).
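For reference, mean intersection-over-union is commonly computed from a confusion matrix: for each class, IoU = TP / (TP + FP + FN), and the per-class values are then averaged. The sketch below is a plain-Python illustration with hypothetical helper names (`confusion_matrix`, `mean_iou`). It skips classes that appear in neither the prediction nor the ground truth, which is one common convention and an assumption here, not necessarily what the evaluation script in this repository does.

```python
def confusion_matrix(truth, pred, num_classes):
    """cm[t][p] counts pixels with true label t that were predicted as p."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(truth, pred):
        cm[t][p] += 1
    return cm


def mean_iou(cm):
    """Average of per-class TP / (TP + FP + FN), skipping absent classes."""
    n = len(cm)
    ious = []
    for k in range(n):
        tp = cm[k][k]
        fn = sum(cm[k]) - tp                       # true k, predicted other
        fp = sum(cm[r][k] for r in range(n)) - tp  # predicted k, true other
        denom = tp + fp + fn
        if denom > 0:
            ious.append(tp / denom)
    return sum(ious) / len(ious) if ious else 0.0


# Flattened toy masks with three classes.
truth = [0, 0, 1, 1, 2, 2]
pred = [0, 1, 1, 1, 2, 0]

cm = confusion_matrix(truth, pred, 3)
print(cm)                      # [[1, 1, 0], [0, 2, 0], [1, 0, 1]]
print(round(mean_iou(cm), 3))  # 0.5
```

In the toy example the per-class IoUs are 1/3, 2/3 and 1/2, which average to 0.5.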

For more details on the underlying model please refer to the following paper:

    @article{CP2016Deeplab,
      title={DeepLab: Semantic Image Segmentation with Deep Convolutional Nets, Atrous Convolution, and Fully Connected CRFs},
      author={Liang-Chieh Chen and George Papandreou and Iasonas Kokkinos and Kevin Murphy and Alan L Yuille},
      journal={arXiv:1606.00915},
      year={2016}
    }

TensorFlow needs to be installed before running the scripts. TensorFlow>=0.11 is supported.

To install the required Python packages (except TensorFlow), run

    pip install -r requirements.txt

or, for a local installation,

    pip install --user -r requirements.txt

To imitate the structure of the model, we have used the .caffemodel files provided by the authors. The conversion was performed using Caffe to TensorFlow with an additional configuration for atrous convolution.

There is no need to perform the conversion yourself as you can download the already converted model here.

To train the network, one can use the augmented PASCAL VOC 2012 dataset with 10582 images for training and 1449 images for validation.

To see the documentation on each of the training settings run the following:

    python train.py --help

The single-scale model achieves 76.5% mIoU on the PASCAL VOC 2012 validation set. No CRF post-processing step is used.

To see the documentation on each of the evaluation settings run the following:

    python evaluate.py --help

To perform inference on your own images, use the following command:

    python inference.py /path/to/your/image /path/to/ckpt/file

This will run the forward pass and save the resulting mask with this colour map:

At the moment, the post-processing step with CRF is not implemented, and multi-scale inputs are missing as well.