A multi-node Kubernetes cluster for developers who work on Kubernetes itself or on projects that extend it. Based on kubeadm and Docker in Docker.

Docker 1.12+ is recommended. If you're not using one of the preconfigured scripts (see below) and not building from source, it's better to have a kubectl executable in your path that matches the version of the k8s binaries you're using (for example, avoid using kubectl 1.9.x with hyperkube 1.8.x).
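
As a rough illustration of the version-matching advice above, the following sketch compares only the major.minor part of two version strings. The helper names `minor_of` and `same_minor` and the sample version strings are assumptions made for this example; they are not part of the project's scripts.

```shell
# Extract "major.minor" from a version string such as v1.9.9 or 1.8.4.
minor_of() {
  printf '%s\n' "$1" | sed -E 's/^v?([0-9]+)\.([0-9]+).*/\1.\2/'
}

# Succeed only when both versions share the same major.minor.
same_minor() {
  [ "$(minor_of "$1")" = "$(minor_of "$2")" ]
}

# Sample version strings, hard-coded for illustration only.
if same_minor v1.9.9 v1.8.4; then
  echo "versions match"
else
  echo "version mismatch: $(minor_of v1.9.9) vs $(minor_of v1.8.4)"
fi
```

In practice you would feed in the versions reported by your own kubectl and by the cluster binaries instead of the hard-coded samples.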

kubernetes-docker-in-docker-cluster supports k8s versions 1.8.x and 1.9.x.

Running kubernetes-docker-in-docker-cluster on Docker with the btrfs storage driver is not supported.

By default kubernetes-docker-in-docker-cluster uses dockerized builds, so no Go installation is necessary even if you're building Kubernetes from source. If you want, you can override this behavior by setting KUBEADM_DIND_LOCAL to a non-empty value in config.sh.

Make sure md5sha1sum is installed. If it is missing, you can install it with brew install md5sha1sum.

kubernetes-docker-in-docker-cluster currently provides preconfigured scripts for Kubernetes 1.8 and 1.9. This may be convenient for use with projects that extend or use Kubernetes. For example, you can start Kubernetes 1.9 like this:

# Downloading kubectl (1.9.9 - for linux)

$ curl -O https://storage.googleapis.com/kubernetes-release/release/v1.9.9/bin/linux/amd64/kubectl

$ chmod +x kubectl

$ sudo mv kubectl /usr/local/bin/

$ wget https://raw.githubusercontent.com/richardsonlima/kubernetes-docker-in-docker-cluster/master/kubernetes-docker-in-docker-cluster-v1.9.sh

$ chmod +x kubernetes-docker-in-docker-cluster-v1.9.sh

$ # start the cluster

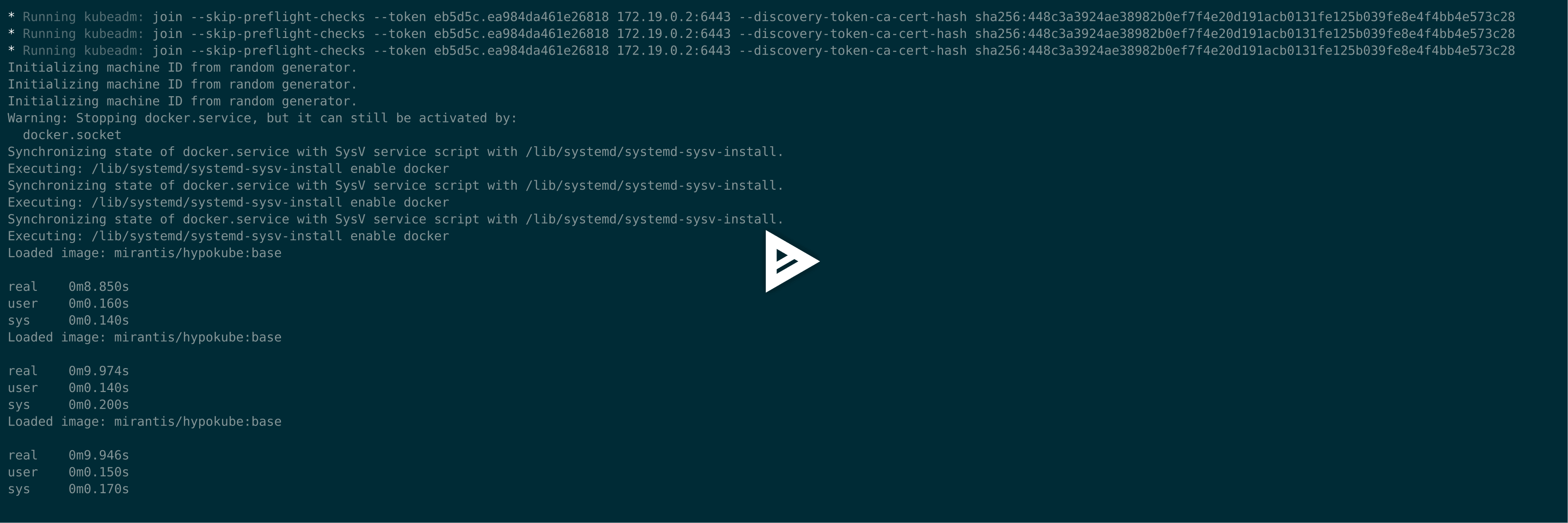

$ ./kubernetes-docker-in-docker-cluster-v1.9.sh up

$ # also you can start the cluster with 3 nodes

$ NUM_NODES=3 ./kubernetes-docker-in-docker-cluster-v1.9.sh up

$ ./kubernetes-docker-in-docker-cluster-v1.9.sh initial-config

$ kubectl --kubeconfig ~/.kube/config get pods --all-namespaces

# Creating a namespace

$ kubectl --kubeconfig ~/.kube/config create namespace test-docker-in-docker

$ kubectl --kubeconfig ~/.kube/config get nodes

NAME STATUS ROLES AGE VERSION

kube-master Ready master 57m v1.9.9

kube-node-1 Ready <none> 56m v1.9.9

kube-node-2 Ready <none> 56m v1.9.9

kube-node-3 Ready <none> 56m v1.9.9

$ # k8s dashboard available at http://localhost:8080/api/v1/namespaces/kube-system/services/kubernetes-dashboard:/proxy

$ # restart the cluster, this should happen much quicker than initial startup

$ ./kubernetes-docker-in-docker-cluster-v1.9.sh up

$ # stop the cluster

$ ./kubernetes-docker-in-docker-cluster-v1.9.sh down

$ # remove containers and volumes

$ ./kubernetes-docker-in-docker-cluster-v1.9.sh clean

Replace 1.9 with 1.8 in the script name to use the other supported Kubernetes version.

Important note: you need to run ./kubernetes-docker-in-docker-cluster-....sh clean when you switch between Kubernetes versions (but there is no need to do this between rebuilds if you use BUILD_HYPERKUBE=y as described below).
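
When scripting around the up command, it can help to check how many nodes report Ready, as in the kubectl get nodes listing shown earlier. The sketch below is one possible way to do that; `count_ready_nodes` is a hypothetical helper name, and the sample data is copied from that listing so the example runs without a live cluster.

```shell
# Count nodes whose STATUS column is exactly "Ready" in `kubectl get nodes`
# output read from stdin (the header line is skipped).
count_ready_nodes() {
  awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }'
}

# Sample output copied from the listing above, used instead of a live cluster.
sample_nodes='NAME STATUS ROLES AGE VERSION
kube-master Ready master 57m v1.9.9
kube-node-1 Ready <none> 56m v1.9.9
kube-node-2 Ready <none> 56m v1.9.9
kube-node-3 Ready <none> 56m v1.9.9'

printf '%s\n' "$sample_nodes" | count_ready_nodes
```

Against a running cluster, the same function could be fed from kubectl --kubeconfig ~/.kube/config get nodes. With NUM_NODES=3 you would expect the master plus three worker nodes.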

# Get jx (https://jenkins-x.io/)

curl -L https://github.com/jenkins-x/jx/releases/download/v1.3.168/jx-darwin-amd64.tar.gz | tar xzv

sudo mv jx /usr/local/bin

# Install JenkinsX on DIND K8S Cluster

jx install --provider=kubernetes --on-premise # or --local-cloud-environment in place of --on-premise

This example shows how to build a simple multi-tier web application using Kubernetes and Docker. The application consists of a web front end, a Redis master for storage, and a replicated set of Redis slaves, for all of which we will create Kubernetes replication controllers, pods, and services.

- Step Zero: Prerequisites

- Step One: Create the Redis master pod

- Step Two: Create the Redis master service

- Step Three: Create the Redis slave pods

- Step Four: Create the Redis slave service

- Step Five: Create the guestbook pods

- Step Six: Create the guestbook service

- Step Seven: View the guestbook

- Step Eight: Cleanup

This example assumes that you have a working cluster.

Tip: View all the kubectl commands, including their options and descriptions in the kubectl CLI reference.

Use the examples/guestbook-go/redis-master-controller.json file to create a replication controller and Redis master pod. The pod runs a Redis key-value server in a container. Using a replication controller is the preferred way to launch long-running pods, even for 1 replica, so that the pod benefits from the self-healing mechanism in Kubernetes (keeps the pods alive).

- Use the redis-master-controller.json file to create the Redis master replication controller in your Kubernetes cluster by running the kubectl create -f filename command:

$ kubectl create -f examples/guestbook-go/redis-master-controller.json

replicationcontrollers/redis-master

- To verify that the redis-master controller is up, list the replication controllers you created in the cluster with the kubectl get rc command (if you don't specify a --namespace, the default namespace is used; the same applies below):

$ kubectl get rc

CONTROLLER CONTAINER(S) IMAGE(S) SELECTOR REPLICAS

redis-master redis-master gurpartap/redis app=redis,role=master 1

...

Result: The replication controller then creates the single Redis master pod.

- To verify that the redis-master pod is running, list the pods you created in the cluster with the kubectl get pods command:

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

redis-master-xx4uv 1/1 Running 0 1m

...

Result: You'll see a single Redis master pod and the machine where the pod is running after the pod gets placed (may take up to thirty seconds).

A Kubernetes service is a named load balancer that proxies traffic to one or more pods. The services in a Kubernetes cluster are discoverable inside other pods via environment variables or DNS.

Services find the pods to load balance based on pod labels. The pod that you created in Step One has the label app=redis and role=master. The selector field of the service determines which pods will receive the traffic sent to the service.
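
To make the selector rule concrete, here is a small sketch of equality-based matching: a pod is selected only if every key=value pair in the selector appears among its labels. `selector_matches` is a hypothetical helper written for this illustration; it mimics the idea and is not how Kubernetes implements it.

```shell
# Return success when every key=value pair in the selector ($1)
# also appears in the pod's label list ($2). Both are comma-separated.
selector_matches() {
  selector=$1
  labels=",$2,"
  old_ifs=$IFS
  IFS=,
  for pair in $selector; do
    case "$labels" in
      *",$pair,"*) ;;                     # this pair is present, keep checking
      *) IFS=$old_ifs; return 1 ;;        # a required pair is missing
    esac
  done
  IFS=$old_ifs
  return 0
}

# The master pod carries both labels, so the redis-master selector picks it.
selector_matches "app=redis,role=master" "app=redis,role=master" \
  && echo "master pod selected"

# A slave pod shares app=redis but not role=master, so it is skipped.
selector_matches "app=redis,role=master" "app=redis,role=slave" \
  || echo "slave pod not selected"
```

On a live cluster, kubectl get pods -l app=redis,role=master lists the pods that the same selector would match.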

- Use the redis-master-service.json file to create the service in your Kubernetes cluster by running the kubectl create -f filename command:

$ kubectl create -f examples/guestbook-go/redis-master-service.json

services/redis-master

- To verify that the redis-master service is up, list the services you created in the cluster with the kubectl get services command:

$ kubectl get services

NAME CLUSTER_IP EXTERNAL_IP PORT(S) SELECTOR AGE

redis-master 10.0.136.3 <none> 6379/TCP app=redis,role=master 1h

...

Result: All new pods will see the redis-master service running on the host ($REDIS_MASTER_SERVICE_HOST environment variable) at port 6379, or running on redis-master:6379. After the service is created, the service proxy on each node is configured to set up a proxy on the specified port (in our example, that's port 6379).
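
The environment variable name follows from the service name: Kubernetes upper-cases it, turns dashes into underscores, and appends _SERVICE_HOST or _SERVICE_PORT. The sketch below reproduces that naming rule; `service_env_name` is a hypothetical helper introduced only for this illustration.

```shell
# Build the discovery variable name for a service.
#   $1: service name (for example redis-master)
#   $2: suffix, either HOST or PORT
service_env_name() {
  prefix=$(printf '%s' "$1" | tr 'a-z' 'A-Z' | tr '-' '_')
  printf '%s_SERVICE_%s\n' "$prefix" "$2"
}

service_env_name redis-master HOST
service_env_name redis-master PORT
```

Inside a pod created after the service exists, those variables hold the address and port of the redis-master service.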

The Redis master we created earlier is a single pod (REPLICAS = 1), while the Redis read slaves we are creating here are 'replicated' pods. In Kubernetes, a replication controller is responsible for managing the multiple instances of a replicated pod.

- Use the file redis-slave-controller.json to create the replication controller by running the kubectl create -f filename command:

$ kubectl create -f examples/guestbook-go/redis-slave-controller.json

replicationcontrollers/redis-slave

- To verify that the redis-slave controller is running, run the kubectl get rc command:

$ kubectl get rc

CONTROLLER CONTAINER(S) IMAGE(S) SELECTOR REPLICAS

redis-master redis-master redis app=redis,role=master 1

redis-slave redis-slave kubernetes/redis-slave:v2 app=redis,role=slave 2

...

Result: The replication controller creates and configures the Redis slave pods through the redis-master service (name:port pair, in our example that's redis-master:6379).

- To verify that the Redis master and slave pods are running, run the kubectl get pods command:

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

redis-master-xx4uv 1/1 Running 0 18m

redis-slave-b6wj4 1/1 Running 0 1m

redis-slave-iai40 1/1 Running 0 1m

...

Result: You see the single Redis master and two Redis slave pods.

Just like the master, we want to have a service to proxy connections to the read slaves. In this case, in addition to discovery, the Redis slave service provides transparent load balancing to clients.

- Use the redis-slave-service.json file to create the Redis slave service by running the kubectl create -f filename command:

$ kubectl create -f examples/guestbook-go/redis-slave-service.json

services/redis-slave

- To verify that the redis-slave service is up, list the services you created in the cluster with the kubectl get services command:

$ kubectl get services

NAME CLUSTER_IP EXTERNAL_IP PORT(S) SELECTOR AGE

redis-master 10.0.136.3 <none> 6379/TCP app=redis,role=master 1h

redis-slave 10.0.21.92 <none> 6379/TCP app=redis,role=slave 1h

...

Result: The service is created with labels app=redis and role=slave to identify that the pods are running the Redis slaves.

Tip: It is helpful to set labels on your services themselves--as we've done here--to make it easy to locate them later.

This is a simple Go net/http (negroni based) server that is configured to talk to either the slave or master services depending on whether the request is a read or a write. The pods we are creating expose a simple JSON interface and serve a jQuery-Ajax based UI. Like the Redis slave pods, these pods are also managed by a replication controller.

- Use the guestbook-controller.json file to create the guestbook replication controller by running the kubectl create -f filename command:

$ kubectl create -f examples/guestbook-go/guestbook-controller.json

replicationcontrollers/guestbook

Tip: If you want to modify the guestbook code, open the _src directory of this example and read the README.md and the Makefile. If you have pushed your custom image, be sure to update the image accordingly in guestbook-controller.json.

- To verify that the guestbook replication controller is running, run the kubectl get rc command:

$ kubectl get rc

CONTROLLER CONTAINER(S) IMAGE(S) SELECTOR REPLICAS

guestbook guestbook k8s.gcr.io/guestbook:v3 app=guestbook 3

redis-master redis-master redis app=redis,role=master 1

redis-slave redis-slave kubernetes/redis-slave:v2 app=redis,role=slave 2

...

- To verify that the guestbook pods are running (it might take up to thirty seconds to create the pods), list the pods you created in the cluster with the kubectl get pods command:

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

guestbook-3crgn 1/1 Running 0 2m

guestbook-gv7i6 1/1 Running 0 2m

guestbook-x405a 1/1 Running 0 2m

redis-master-xx4uv 1/1 Running 0 23m

redis-slave-b6wj4 1/1 Running 0 6m

redis-slave-iai40 1/1 Running 0 6m

...

Result: You see a single Redis master, two Redis slaves, and three guestbook pods.

Just like the others, we create a service to group the guestbook pods but this time, to make the guestbook front end externally visible, we specify "type": "LoadBalancer".

- Use the guestbook-service.json file to create the guestbook service by running the kubectl create -f filename command:

$ kubectl create -f examples/guestbook-go/guestbook-service.json

- To verify that the guestbook service is up, list the services you created in the cluster with the kubectl get services command:

$ kubectl get services

NAME CLUSTER_IP EXTERNAL_IP PORT(S) SELECTOR AGE

guestbook 10.0.217.218 146.148.81.8 3000/TCP app=guestbook 1h

redis-master 10.0.136.3 <none> 6379/TCP app=redis,role=master 1h

redis-slave 10.0.21.92 <none> 6379/TCP app=redis,role=slave 1h

...

Result: The service is created with label app=guestbook.
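
Steps One through Six create six manifests in a fixed order. As a convenience, they could be driven from a single loop like the sketch below. The file paths are the ones used in the steps above; the function name `guestbook_manifests` and the `APPLY` switch are hypothetical and exist only in this example.

```shell
# Print the guestbook manifests in the order the steps create them.
guestbook_manifests() {
  for name in redis-master-controller redis-master-service \
              redis-slave-controller redis-slave-service \
              guestbook-controller guestbook-service; do
    printf 'examples/guestbook-go/%s.json\n' "$name"
  done
}

# Dry run by default: only print the commands.
# Set APPLY=1 (a switch made up for this sketch) to actually run them.
guestbook_manifests | while read -r manifest; do
  if [ "${APPLY:-0}" = "1" ]; then
    kubectl create -f "$manifest"
  else
    echo "kubectl create -f $manifest"
  fi
done
```

Creating the services after their controllers mirrors the walkthrough; the dry-run default lets you review the commands before touching the cluster.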

You can now play with the guestbook that you just created by opening it in a browser (it might take a few moments for the guestbook to come up).

- Service port forward: a handy trick for connecting to the guestbook running in a Kubernetes cluster

$ kubectl port-forward svc/guestbook 3000:3000

Forwarding from 127.0.0.1:3000 -> 3000

Forwarding from [::1]:3000 -> 3000

More info: https://kubernetes.io/docs/tasks/access-application-cluster/port-forward-access-application-cluster/

To view the guestbook, navigate to http://localhost:3000 in your browser.

$ curl -fsSL https://raw.githubusercontent.com/fishworks/gofish/master/scripts/install.sh | bash

$ gofish init

$ gofish install helm

$ helm init

$ helm repo add bitnami https://charts.bitnami.com/bitnami

$ helm install --name kubeapps --namespace kubeapps bitnami/kubeapps

$ kubectl create serviceaccount kubeapps-operator

$ kubectl create clusterrolebinding kubeapps-operator --clusterrole=cluster-admin --serviceaccount=default:kubeapps-operator

$ kubectl get secret $(kubectl get serviceaccount kubeapps-operator -o jsonpath='{.secrets[].name}') -o jsonpath='{.data.token}' | base64 --decode

- Service port forward: a handy trick for connecting to KubeApps running in a Kubernetes cluster

$ kubectl port-forward --namespace kubeapps svc/kubeapps 8080:80

Forwarding from 127.0.0.1:8080 -> 8080

Forwarding from [::1]:8080 -> 8080

To view KubeApps, navigate to http://localhost:8080 in your browser.
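
The kubectl get secret command above ends in base64 --decode because Kubernetes stores secret data base64-encoded. The round trip below illustrates this with a made-up placeholder value (example-token), not a real service account token.

```shell
# Encode a placeholder value the way it would appear under .data in a secret.
encoded=$(printf '%s' 'example-token' | base64)
echo "stored form:  $encoded"

# Decode it back, as the pipeline above does with the real token.
decoded=$(printf '%s' "$encoded" | base64 --decode)
echo "decoded form: $decoded"
```

The decoded value is what you would use as the login token.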