___ ___ _ __ ___ ___ _ __ _ __ (_)_ __ ___

/ __|/ __| '__/ _ \/ _ \ '_ \| '_ \| | '_ \ / _ \

\__ \ (__| | | __/ __/ | | | |_) | | |_) | __/

|___/\___|_| \___|\___|_| |_| .__/|_| .__/ \___|

|_| |_|

Latest News 🔥

- [2024/08] Anyone can now create, share, and install pipes (plugins) from the app interface, based on a GitHub repo/dir

- [2024/08] We're running bounties! Contribute to screenpipe & make money, check issues

- [2024/08] Audio input & output now works perfectly on Windows, Linux, and MacOS (<15.0). We also support multi-monitor capture and default STT to Whisper Distil large v3

- [2024/08] We released video embedding. AI gives you links to your video recording in the chat!

- [2024/08] We released the pipe store! Create, share, use plugins that get you the most out of your data in less than 30s, even if you are not technical.

- [2024/08] We released Apple & Windows Native OCR.

- [2024/08] The Linux desktop app is here!

- [2024/07] The Windows desktop app is here! Get it now!

- [2024/07] 🎁 Screenpipe won Friends (the AI necklace) hackathon at AGI House (integrations soon)

- [2024/07] We just launched the desktop app! Download now!

Library to build personalized AI powered by what you've seen, said, or heard. Works with Ollama. Alternative to Rewind.ai. Open. Secure. You own your data. Rust.

We are shipping daily. Make suggestions, post bugs, give feedback.

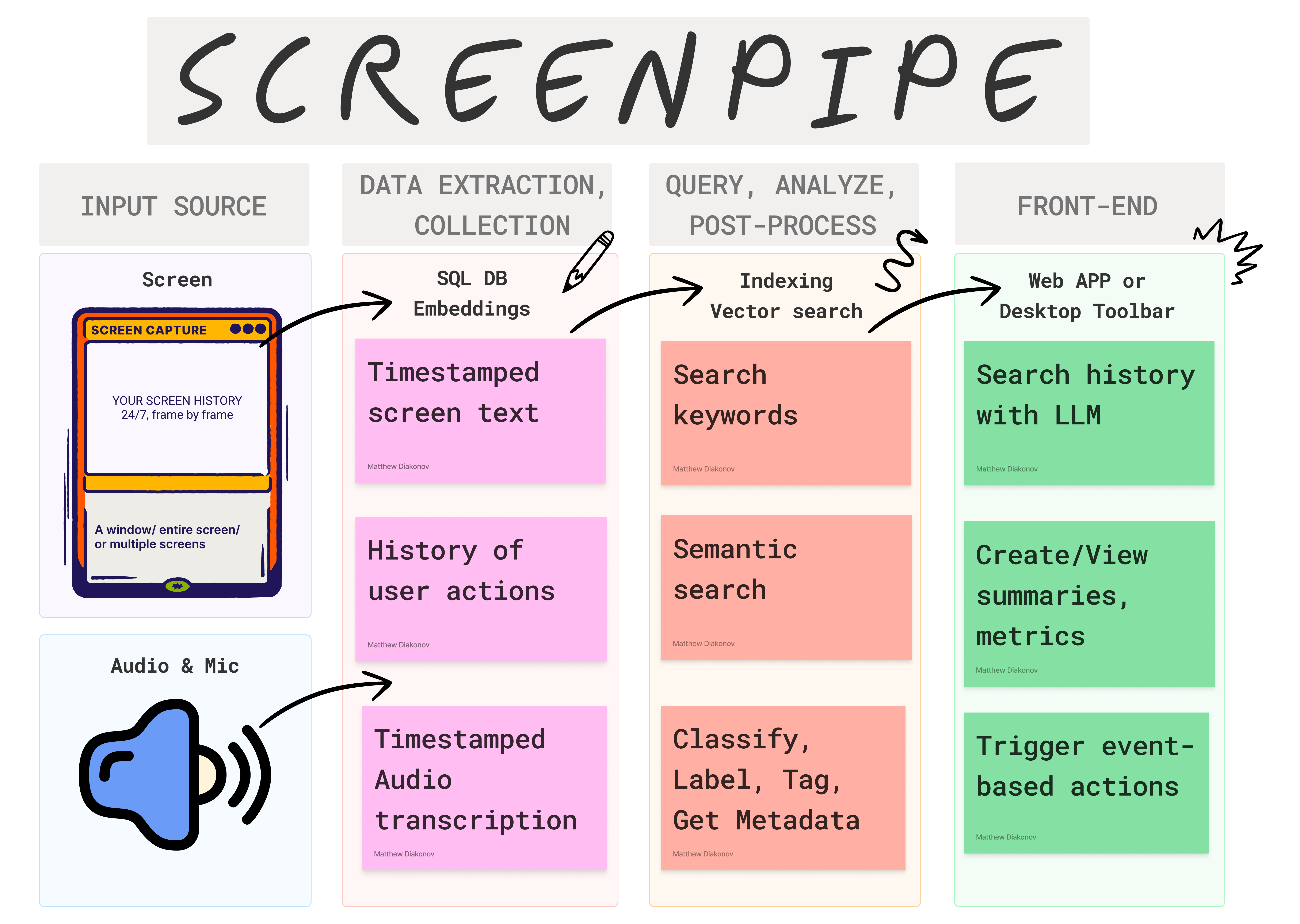

Building a reliable stream of audio and screenshot data, where a user simply clicks a button and the script runs in the background 24/7, collecting and extracting data from screen and audio input/output, can be frustrating.

There are numerous use cases that can be built on top of this layer. To simplify life for other developers, we decided to solve this non-trivial problem. It's still in its early stages, but it works end-to-end. We're working on this full-time and would love to hear your feedback and suggestions.

There are multiple ways to install screenpipe:

- as a CLI (continue reading), for rather technical users

- as a paid desktop app with 1 year updates, priority support, and priority features

- as a free forever desktop app (but you need to build it yourself). We're 100% OSS.

- as a free forever desktop app - by sending a PR (example) (or offer free app to a friend)

- as a Rust or WASM library (documentation WIP)

- as a business - check use cases and DM louis

PS: we invest 80% of the paid app revenue in bounties, send PR, make money!

These are the instructions to install the command line interface.

Struggle to get it running? I'll install it with you in a 15 min call.

CLI installation

MacOS

Option I: brew

- Install CLI

brew tap mediar-ai/screenpipe https://github.com/mediar-ai/screenpipe.git

brew install screenpipe

- Run it:

screenpipe

We just released experimental Apple native OCR. To use it:

screenpipe --ocr-engine apple-native

Or, if you don't want audio to be recorded:

screenpipe --disable-audio

If you want to save OCR data to text files in the text_json folder in the root of your project (good for testing):

screenpipe --save-text-files

If you want to run screenpipe in debug mode to show more logs in the terminal:

screenpipe --debug

By default, screenpipe uses whisper-tiny, which runs LOCALLY. To get better quality or lower compute, you can use a cloud model (we use Deepgram) via the cloud API:

screenpipe --audio-transcription-engine deepgram

By default, screenpipe uses a local model for screen capture OCR processing. To use the cloud (through unstructured.io) for better performance, use this flag:

screenpipe --ocr-engine unstructured

Friend wearable integration: to link your wearable, you need to pass the user ID from the Friend app:

screenpipe --friend-wearable-uid AC...........................F3

You can combine multiple flags if needed.
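As an illustration of combining flags, here is a minimal Python sketch that assembles a screenpipe command line from a few of the options listed above. The helper name `build_screenpipe_command` and its parameters are hypothetical (they are not part of screenpipe); only the flag strings themselves come from this README.

```python
import shlex


def build_screenpipe_command(
    ocr_engine=None,
    disable_audio=False,
    save_text_files=False,
    debug=False,
):
    """Assemble a screenpipe invocation from flags documented in this README.

    Hypothetical helper for illustration; it only builds the argument list.
    """
    args = ["screenpipe"]
    if ocr_engine:
        args += ["--ocr-engine", ocr_engine]
    if disable_audio:
        args.append("--disable-audio")
    if save_text_files:
        args.append("--save-text-files")
    if debug:
        args.append("--debug")
    return args


# Combine Apple native OCR, no audio recording, and debug logs.
command = build_screenpipe_command(
    ocr_engine="apple-native", disable_audio=True, debug=True
)
print(shlex.join(command))
```

The resulting list can be passed to `subprocess.run(command)` if you want to start screenpipe from a script rather than from the terminal.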

Option II: Install from the source

- Install dependencies:

curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh # takes 5 minutes

brew install pkg-config ffmpeg jq tesseract

- Clone the repo:

git clone https://github.com/mediar-ai/screenpipe

This runs a local SQLite DB + an API + screenshot, OCR, mic, STT, mp4 encoding

cd screenpipe # enter cloned repo

Build the project (takes 5-10 minutes depending on your hardware):

# necessary to use apple native OCR

export RUSTFLAGS="-C link-arg=-Wl,-rpath,@executable_path/../../screenpipe-vision/bin -C link-arg=-Wl,-rpath,@loader_path/../../screenpipe-vision/lib"

cargo build --release --features metal # takes 3 minutes

Then run it:

./target/release/screenpipe # add --ocr-engine apple-native to use apple native OCR

# add "--disable-audio" if you don't want audio to be recorded

# "--save-text-files" if you want to save OCR data to text file in text_json folder in the root of your project (good for testing)

# "--debug" if you want to run screenpipe in debug mode to show more logs in terminal

Windows

[!note] This is experimental support for the Windows build. It assumes you already have the CUDA Toolkit installed and CUDA_PATH set to your CUDA folder (v12.6 in my case). Replace V:\projects and V:\packages with your own folders.

If this does not work for you, please open an issue or get the pre-built desktop app

- Install chocolatey

- Install git

- Install CUDA Toolkit (if using NVIDIA and building with cuda)

- Install MS Visual Studio Build Tools (below are the components I have installed)

  - Desktop development with C++

    - MSVC v143

    - Windows 11 SDK

    - C++ Cmake tools for Windows

    - Testing tools core features - Build tools

    - C++ AddressSanitizer

    - C++ ATL for latest v143

  - Individual components

    - C++ ATL for latest v143 build tools (x86 & x64)

    - MSBuild support for LLVM (clang-c) toolset

    - C++ Clang Compiler for Windows

- Desktop development with C++

choco install pkgconfiglite rust

cd V:\projects

git clone https://github.com/mediar-ai/screenpipe

cd V:\packages

git clone https://github.com/microsoft/vcpkg.git

cd vcpkg

bootstrap-vcpkg.bat -disableMetrics

vcpkg.exe integrate install --disable-metrics

vcpkg.exe install ffmpeg

SET PKG_CONFIG_PATH=V:\packages\vcpkg\packages\ffmpeg_x64-windows\lib\pkgconfig

SET VCPKG_ROOT=V:\packages\vcpkg

SET LIBCLANG_PATH=C:\Program Files (x86)\Microsoft Visual Studio\2022\BuildTools\VC\Tools\Llvm\x64\bin

cd V:\projects\screenpipe

cargo build --release --features cuda

Linux

Option I: Install from source

- Install dependencies:

sudo apt-get update

sudo apt-get install -y libavformat-dev libavfilter-dev libavdevice-dev ffmpeg libasound2-dev tesseract-ocr libtesseract-dev

# Install Rust programming language

curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

- Clone the repo:

git clone https://github.com/mediar-ai/screenpipe

cd screenpipe

- Build and run:

cargo build --release --features cuda # remove "--features cuda" if you do not have an NVIDIA GPU

# then run it

./target/release/screenpipe

Option II: Install through Nix

Choose one of the following methods:

a. Using nix-env:

nix-env -iA nixpkgs.screen-pipe

b. In your configuration.nix (for NixOS users):

Add the following to your configuration.nix:

environment.systemPackages = with pkgs; [

screen-pipe

];

Then rebuild your system with sudo nixos-rebuild switch.

c. In a Nix shell:

nix-shell -p screen-pipe

d. Using nix run (for ad-hoc usage):

nix run nixpkgs#screen-pipe

Note: Make sure you're using a recent version of nixpkgs that includes the screen-pipe package.

By default, the data is stored in $HOME/.screenpipe (C:\AppData\Users\<user>\.screenpipe on Windows). You can change this using --data-dir <mydir>

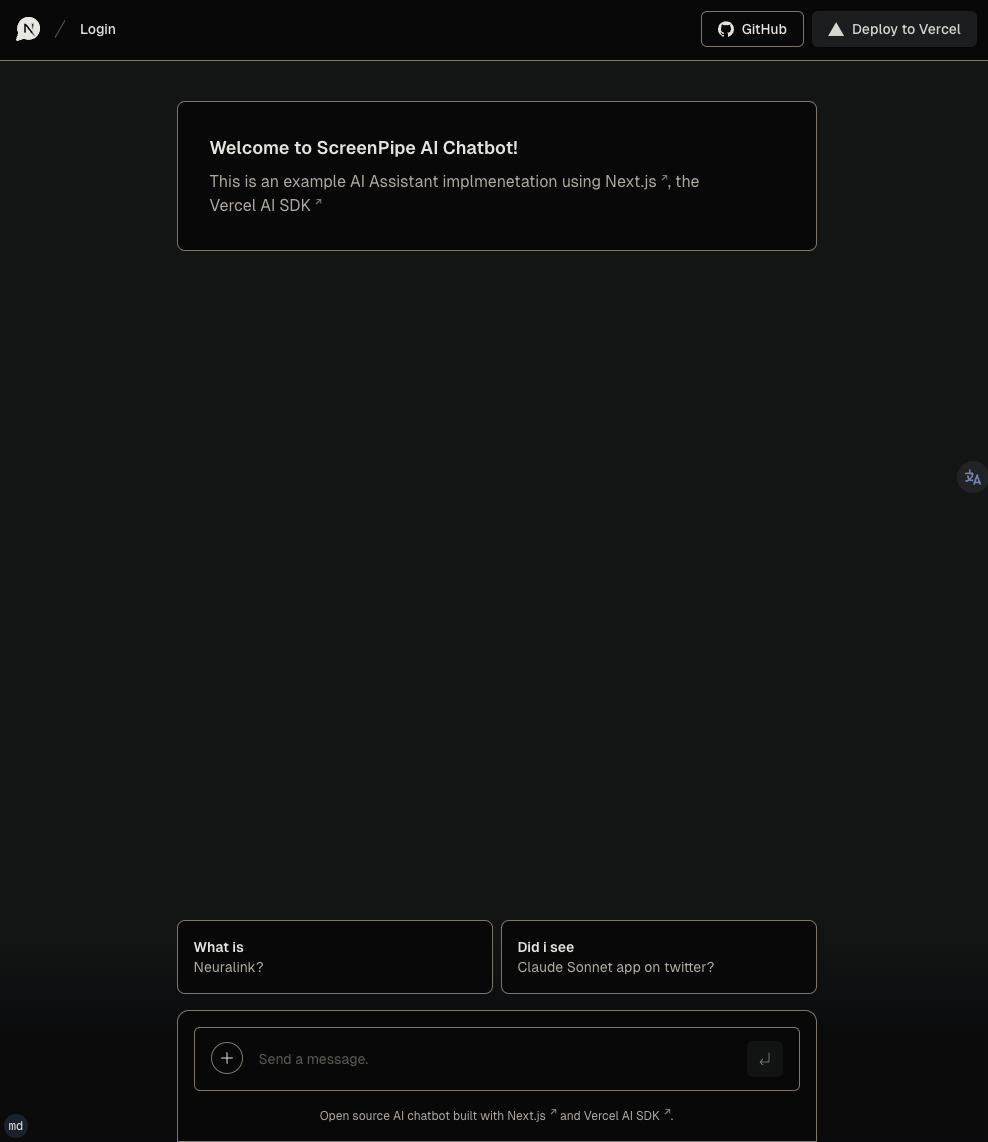

Run the example vercel/ai chatbot web interface

This example uses OpenAI. If you're looking for an Ollama example, check the examples folder.

The desktop app fully supports OpenAI & Ollama by default.

To run Vercel chatbot, try this:

git clone https://github.com/mediar-ai/screenpipe

Navigate to the app directory

cd screenpipe/examples/typescript/vercel-ai-chatbot

Set up your OPENAI API KEY in .env

echo "OPENAI_API_KEY=XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX" > .env

Install dependencies and run the local web server

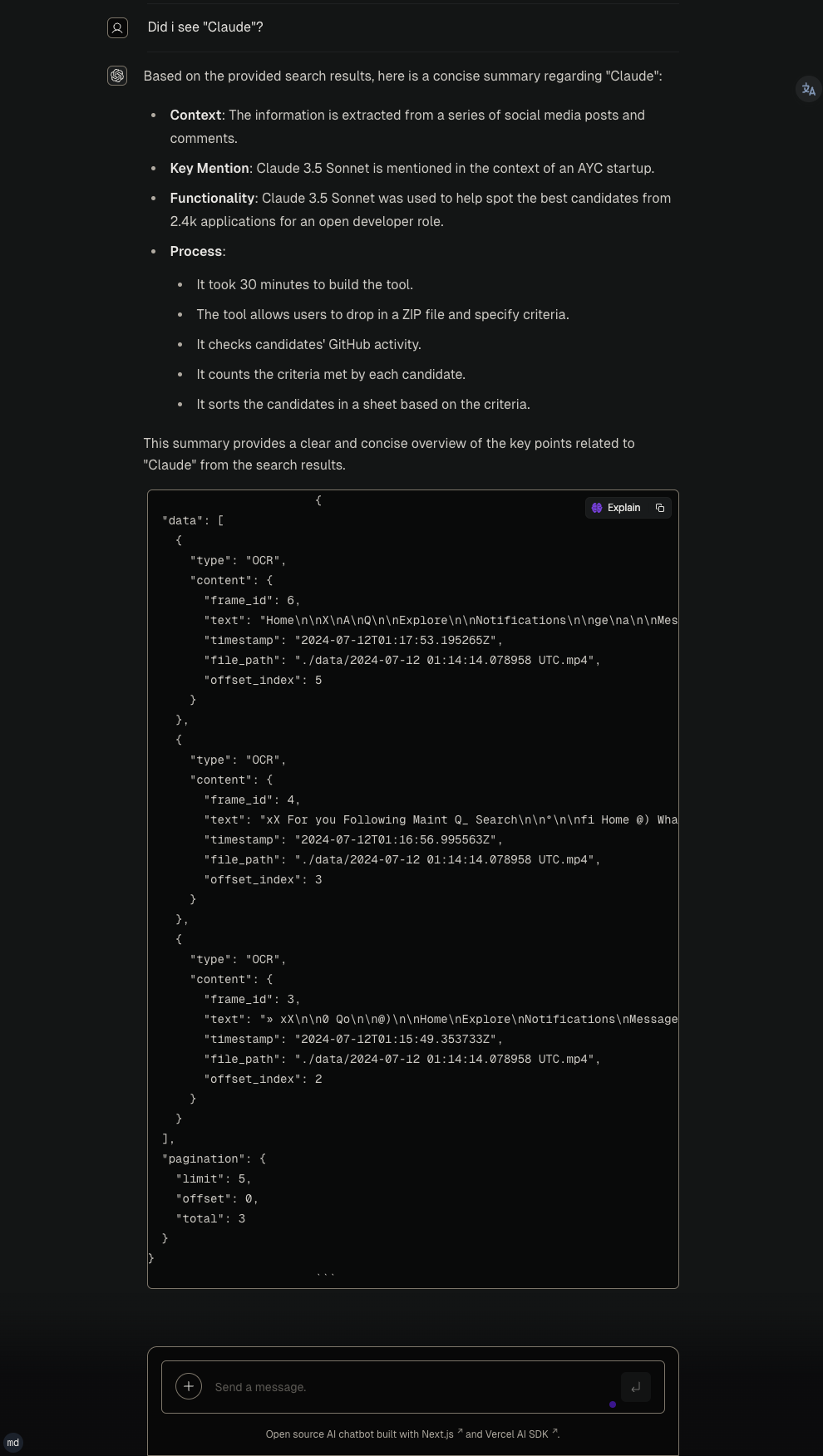

npm install

npm run dev

You can use terminal commands to query and view your data, as shown below. We also recommend Tableplus.com to view the database; it has a free tier.
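Besides the terminal and a GUI viewer, the same database can be read from a script. The following is a minimal sketch using Python's standard sqlite3 module. It assumes the default database path and the table and column names that appear in the sqlite3 commands in this README (audio_transcriptions with an id column, ocr_text with frame_id and text columns); the helper names are hypothetical, and the schema may change since the project is still experimental.

```python
import sqlite3
from pathlib import Path

# Default location from this README; pass another path if you use --data-dir.
DEFAULT_DB = Path.home() / ".screenpipe" / "db.sqlite"


def open_db(path=DEFAULT_DB):
    """Open the database read-only so the script cannot modify recordings."""
    uri = Path(path).expanduser().resolve().as_uri() + "?mode=ro"
    conn = sqlite3.connect(uri, uri=True)
    conn.row_factory = sqlite3.Row  # rows become addressable by column name
    return conn


def list_tables(conn):
    """List table names, roughly what the sqlite3 ".tables" command shows."""
    rows = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    return [row[0] for row in rows]


def latest_audio_transcription(conn):
    """Most recent audio_transcriptions row as a dict, or None if empty.

    Expects conn.row_factory to be sqlite3.Row (open_db sets it).
    """
    row = conn.execute(
        "SELECT * FROM audio_transcriptions ORDER BY id DESC LIMIT 1"
    ).fetchone()
    return dict(row) if row is not None else None


def latest_ocr_text(conn):
    """Text of the most recent OCR frame, or None if the table is empty."""
    row = conn.execute(
        "SELECT text FROM ocr_text ORDER BY frame_id DESC LIMIT 1"
    ).fetchone()
    return row[0] if row is not None else None
```

With screenpipe having recorded some data, `conn = open_db()` followed by `print(list_tables(conn))` or `print(latest_ocr_text(conn))` should show what is stored. Opening the file read-only is a design choice to avoid interfering with the running recorder.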

Here's some pseudo code illustrating how to use screenpipe, for example after a meeting (automatically, with our webhooks):

// 1h ago

const startDate = "<some time 1h ago..>"

// 10m ago

const endDate = "<some time 10m ago..>"

// get all the screen & mic data from roughly last hour

const results = fetchScreenpipe(startDate, endDate)

// send it to an LLM and ask for a summary

const summary = fetchOllama(`${JSON.stringify(results)} create a summary from these transcriptions`)

// or const summary = fetchOpenai(results)

// add the meeting summary to your notes

addToNotion(summary)

// or your favourite note taking app

Or thousands of other usages of all your screen & mic data!
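The pseudo code above can be made a little more concrete. This Python sketch computes the "1h ago" to "10m ago" window and builds the summary prompt, while leaving the three integrations as functions you pass in. All names here (`meeting_window`, `build_summary_prompt`, `summarize_meeting`, and the callback parameters) are hypothetical; the sketch deliberately does not assume how screenpipe, Ollama, or your notes app are called.

```python
from datetime import datetime, timedelta, timezone


def meeting_window(now, start_minutes_ago=60, end_minutes_ago=10):
    """Return (start, end) ISO 8601 timestamps for the recent meeting window."""
    start = now - timedelta(minutes=start_minutes_ago)
    end = now - timedelta(minutes=end_minutes_ago)
    return start.isoformat(), end.isoformat()


def build_summary_prompt(transcriptions):
    """Join transcription snippets and append the summary instruction."""
    joined = "\n".join(transcriptions)
    return f"{joined}\n\nCreate a summary from these transcriptions"


def summarize_meeting(fetch_screenpipe, ask_llm, add_to_notes, now=None):
    """Fetch data for the window, summarize it, and store the summary.

    fetch_screenpipe(start, end) -> list of strings   (your screenpipe query)
    ask_llm(prompt) -> string                         (Ollama, OpenAI, ...)
    add_to_notes(summary) -> None                     (Notion, Obsidian, ...)
    """
    now = now or datetime.now(timezone.utc)
    start, end = meeting_window(now)
    transcriptions = fetch_screenpipe(start, end)
    summary = ask_llm(build_summary_prompt(transcriptions))
    add_to_notes(summary)
    return summary
```

Passing the integrations in as functions keeps the flow testable without a running screenpipe instance or an LLM.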

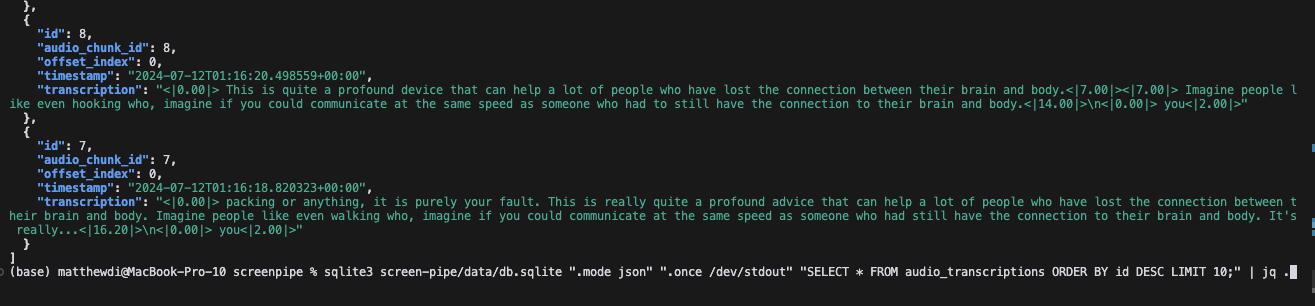

Check which tables you have in the local database

sqlite3 ~/.screenpipe/db.sqlite ".tables"

Print a sample audio_transcriptions from the database

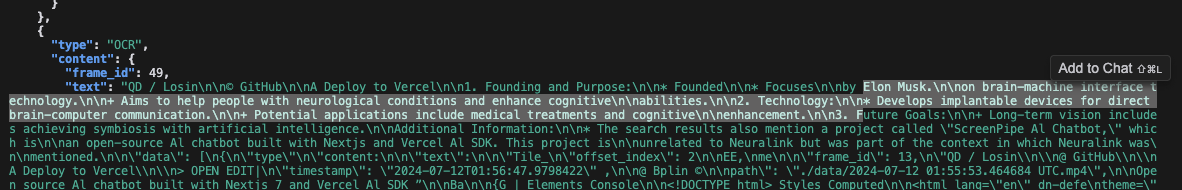

sqlite3 ~/.screenpipe/db.sqlite ".mode json" ".once /dev/stdout" "SELECT * FROM audio_transcriptions ORDER BY id DESC LIMIT 1;" | jq .

Print a sample frame_OCR_text from the database

sqlite3 ~/.screenpipe/db.sqlite ".mode json" ".once /dev/stdout" "SELECT * FROM ocr_text ORDER BY frame_id DESC LIMIT 1;" | jq -r '.[0].text'

Play a sample frame_recording from the database

ffplay "data/2024-07-12_01-14-14.mp4"

Play a sample audio_recording from the database

ffplay "data/Display 1 (output)_2024-07-12_01-14-11.mp4"

Example to query the API

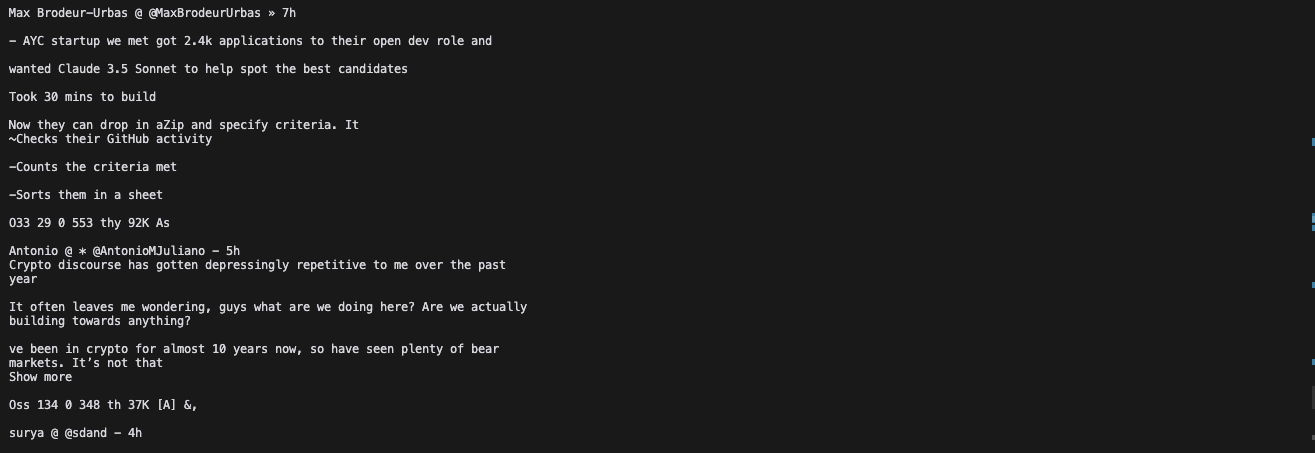

- Basic search query

curl "http://localhost:3030/search?q=Neuralink&limit=5&offset=0&content_type=ocr" | jq

Other examples to query the API

# 2. Search with content type filter (OCR)

curl "http://localhost:3030/search?q=QUERY_HERE&limit=5&offset=0&content_type=ocr"

# 3. Search with content type filter (Audio)

curl "http://localhost:3030/search?q=QUERY_HERE&limit=5&offset=0&content_type=audio"

# 4. Search with pagination

curl "http://localhost:3030/search?q=QUERY_HERE&limit=10&offset=20"

# 6. Search with no query (should return all results)

curl "http://localhost:3030/search?limit=5&offset=0"

# filter by app (will only return OCR results)

curl "http://localhost:3030/search?app_name=cursor"

Keep in mind that it's still experimental.
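The curl examples above can also be expressed in a script. Here is a minimal Python sketch using only the standard library and only the query parameters shown above (q, limit, offset, content_type, app_name). The function names are hypothetical, and the shape of the JSON response is not assumed, so the result is returned as parsed JSON without further processing.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

BASE_URL = "http://localhost:3030"  # local API address used in the curl examples


def build_search_url(q=None, limit=5, offset=0, content_type=None,
                     app_name=None, base_url=BASE_URL):
    """Build a /search URL, leaving out parameters that were not provided."""
    params = {
        "q": q,
        "limit": limit,
        "offset": offset,
        "content_type": content_type,  # "ocr" or "audio" in the examples above
        "app_name": app_name,
    }
    query = urlencode({k: v for k, v in params.items() if v is not None})
    return f"{base_url}/search?{query}"


def search(**kwargs):
    """Call the local API and return the parsed JSON response."""
    with urlopen(build_search_url(**kwargs)) as response:
        return json.load(response)


# Same request as the basic search query above.
print(build_search_url(q="Neuralink", content_type="ocr"))
```

With screenpipe running, `search(q="Neuralink", content_type="ocr")` performs the request. To page through results, increase `offset` by `limit` on each call, as in the pagination example.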


- Search

  - Semantic and keyword search. Find information you've forgotten or misplaced

  - Playback history of your desktop when searching for a specific info

- Automation:

  - Automatically generate documentation

  - Populate CRM systems with relevant data

  - Synchronize company knowledge across platforms

  - Automate repetitive tasks based on screen content

- Analytics:

  - Track personal productivity metrics

  - Organize and analyze educational materials

  - Gain insights into areas for personal improvement

  - Analyze work patterns and optimize workflows

- Personal assistant:

  - Summarize lengthy documents or videos

  - Provide context-aware reminders and suggestions

  - Assist with research by aggregating relevant information

  - Live captions, translation support

- Collaboration:

  - Share and annotate screen captures with team members

  - Create searchable archives of meetings and presentations

- Compliance and security:

  - Track what your employees are really up to

  - Monitor and log system activities for audit purposes

  - Detect potential security threats based on screen content

Alpha: runs 24/7 on my computer (MacBook Pro M3, 32 GB RAM) and on a $400 Windows laptop.

- Integrations

- ollama

- openai

- Friend wearable

- Fileorganizer2000

- mem0

- Brilliant Frames

- Vercel AI SDK

- supermemory

- deepgram

- unstructured

- excalidraw

- Obsidian

- Apple shortcut

- multion

- iPhone

- Android

- Camera

- Keyboard

- Browser

- Pipe Store (a list of "pipes" you can build, share & easily install to get more value out of your screen & mic data without effort). It runs in a Deno TypeScript engine within screenpipe on your computer

- screenshots + OCR with different engines to optimise privacy, quality, or energy consumption

- tesseract

- Windows native OCR

- Apple native OCR

- unstructured.io

- screenpipe screen/audio specialised LLM

- audio + STT (works with multi input devices, like your iPhone + mac mic, many STT engines)

- Linux, MacOS, Windows input & output devices

- iPhone microphone

- remote capture (run screenpipe on your cloud and it captures your local machine, only tested on Linux), for example when you have a low-compute laptop

- optimised screen & audio recording (mp4 encoding, estimated at 30 GB/month with default settings)

- sqlite local db

- local api

- Cross platform CLI, desktop app (MacOS, Windows, Linux)

- Metal, CUDA

- TS SDK

- multimodal embeddings

- cloud storage options (s3, pgsql, etc.)

- cloud computing options (deepgram for audio, unstructured for OCR)

- custom storage settings: customizable capture settings (fps, resolution)

- security

- window specific capture (e.g. can decide to only capture specific tab of cursor, chrome, obsidian, or only specific app)

- encryption

- PII removal

- fast, optimised, energy-efficient modes

- webhooks/events (for automations)

- abstractions for multiplayer usage (e.g. aggregate sales team data, company team data, partner, etc.)
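To put the storage estimate from the list above in perspective, here is a small back-of-the-envelope calculation in Python. It assumes that the "30 gb/m" figure means 30 GB per month, that a month has 30 days, and that usage is constant; real usage will vary with your capture settings, and the function names are hypothetical.

```python
GB_PER_MONTH = 30  # figure quoted in this README; "m" is assumed to mean month


def storage_estimate(gb_per_month=GB_PER_MONTH, days_per_month=30):
    """Convert the monthly estimate into daily and yearly figures."""
    return {
        "gb_per_day": gb_per_month / days_per_month,
        "gb_per_year": gb_per_month * 12,
    }


def months_until_full(free_gb, gb_per_month=GB_PER_MONTH):
    """How many months of recording fit into the given free disk space."""
    return free_gb / gb_per_month


print(storage_estimate())
print(months_until_full(300))
```

Under these assumptions, recording around the clock uses about 1 GB per day, so 300 GB of free disk space would last roughly 10 months.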

Recent breakthroughs in AI have shown that context is the final frontier. AI will soon be able to incorporate the context of an entire human life into its 'prompt', and the technologies that enable this kind of personalisation should be available to all developers to accelerate access to the next stage of our evolution.

Contributions are welcome! If you'd like to contribute, please read CONTRIBUTING.md.

What's the difference with adept.ai and rewind.ai?

- adept.ai is a closed product, focused on automation while we are open and focused on enabling tooling & infra for a wide range of applications like adept

- rewind.ai is a closed product, focused on a single use case (they only focus on meetings now), not customisable, your data is owned by them, and not extendable by developers

Where is the data stored?

- 100% of the data stays local in a SQLite database and mp4/mp3 files. You own your data

Do you encrypt the data?

- Not yet but we're working on it. We want to provide you the highest level of security.

How can I customize capture settings to reduce storage and energy usage?

- You can adjust frame rates and resolution in the configuration. Lower values will reduce storage and energy consumption. We're working on making this more user-friendly in future updates.

What are some practical use cases for screenpipe?

- RAG & question answering

- Automation (write code somewhere else while watching you coding, write docs, fill your CRM, sync company's knowledge, etc.)

- Analytics (track human performance, education, become aware of how you can improve, etc.)

- etc.

- We're constantly exploring new use cases and welcome community input!