Doing fast searching of nearest neighbors in high dimensional spaces is an increasingly important problem with notably few empirical attempts at comparing approaches in an objective way, despite a clear need for such to drive optimization forward.

This project contains tools to benchmark various implementations of approximate nearest neighbor (ANN) search for selected metrics. We have pre-generated datasets (in HDF5 format) and prepared Docker containers for each algorithm, as well as a test suite to verify function integrity.

- Annoy

- FLANN

- scikit-learn: LSHForest, KDTree, BallTree

- Weaviate

- PANNS

- NearPy

- KGraph

- NMSLIB (Non-Metric Space Library)

: SWGraph, HNSW, BallTree, MPLSH

- hnswlib (a part of nmslib project)

- RPForest

- FAISS

- DolphinnPy

- Datasketch

- nndescent

- PyNNDescent

- MRPT

- NGT

: ONNG, PANNG, QG

- SPTAG

- PUFFINN

- N2

- ScaNN

- Vearch

- Elasticsearch

: HNSW

- Elastiknn

- OpenSearch KNN

- DiskANN

: Vamana, Vamana-PQ

- Vespa

- scipy: cKDTree

- vald

- Qdrant

- HUAWEI(qsgngt)

- Milvus

: Knowhere

- Zilliz(Glass)

- pgvector

- pgvecto.rs

- RediSearch

- Descartes(01AI)

We have a number of precomputed data sets in HDF5 format. All data sets have been pre-split into train/test and include ground truth data for the top-100 nearest neighbors.

| Dataset | Dimensions | Train size | Test size | Neighbors | Distance | Download |

|---|---|---|---|---|---|---|

| DEEP1B | 96 | 9,990,000 | 10,000 | 100 | Angular | HDF5 (3.6GB) |

| Fashion-MNIST | 784 | 60,000 | 10,000 | 100 | Euclidean | HDF5 (217MB) |

| GIST | 960 | 1,000,000 | 1,000 | 100 | Euclidean | HDF5 (3.6GB) |

| GloVe | 25 | 1,183,514 | 10,000 | 100 | Angular | HDF5 (121MB) |

| GloVe | 50 | 1,183,514 | 10,000 | 100 | Angular | HDF5 (235MB) |

| GloVe | 100 | 1,183,514 | 10,000 | 100 | Angular | HDF5 (463MB) |

| GloVe | 200 | 1,183,514 | 10,000 | 100 | Angular | HDF5 (918MB) |

| Kosarak | 27,983 | 74,962 | 500 | 100 | Jaccard | HDF5 (33MB) |

| MNIST | 784 | 60,000 | 10,000 | 100 | Euclidean | HDF5 (217MB) |

| MovieLens-10M | 65,134 | 69,363 | 500 | 100 | Jaccard | HDF5 (63MB) |

| NYTimes | 256 | 290,000 | 10,000 | 100 | Angular | HDF5 (301MB) |

| SIFT | 128 | 1,000,000 | 10,000 | 100 | Euclidean | HDF5 (501MB) |

| Last.fm | 65 | 292,385 | 50,000 | 100 | Angular | HDF5 (135MB) |

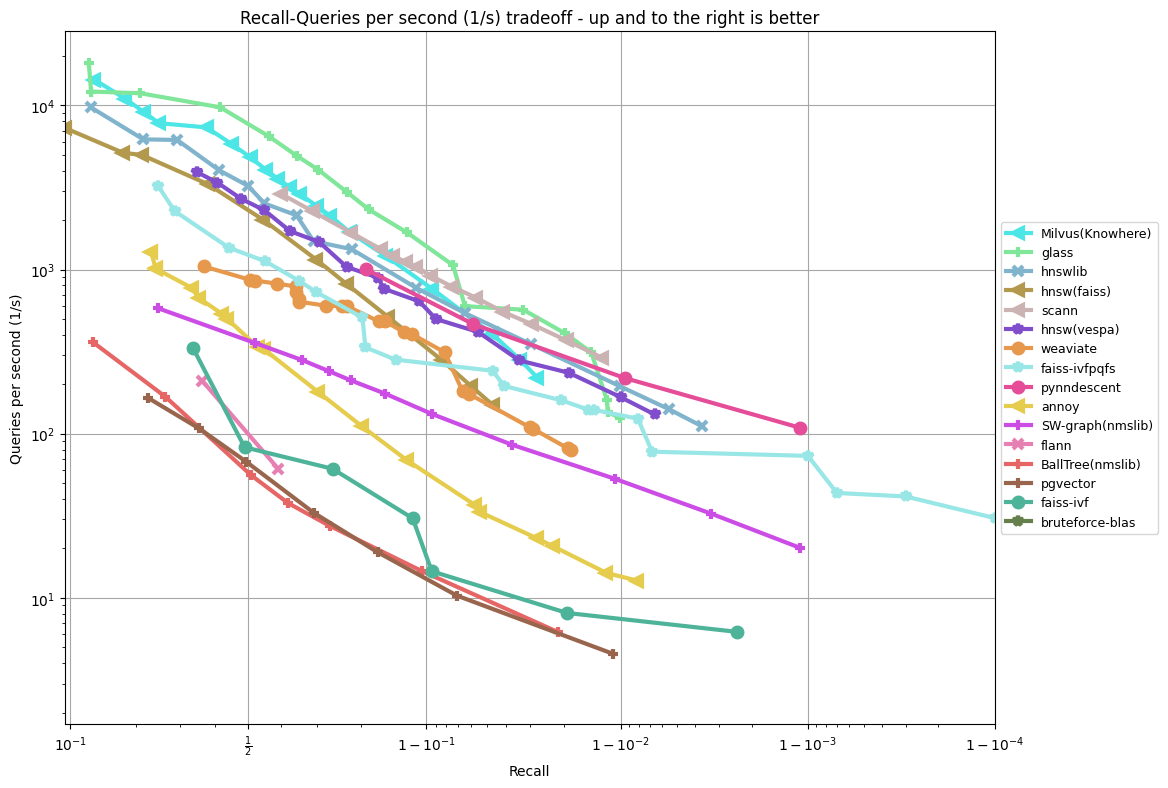

These are all as of April 2023, running all benchmarks on a r6i.16xlarge machine on AWS with --parallelism 31 and hyperthreading disabled. All benchmarks are single-CPU.

TODO: update plots on http://ann-benchmarks.com.

The only prerequisite is Python (tested with 3.10.6) and Docker.

- Clone the repo.

- Run

pip install -r requirements.txt. - Run

python install.pyto build all the libraries inside Docker containers (this can take a while, like 10-30 minutes).

- Run

python run.py(this can take an extremely long time, potentially days) - Run

python plot.pyorpython create_website.pyto plot results. - Run

python data_export.py --out res.csvto export all results into a csv file for additional post-processing.

You can customize the algorithms and datasets as follows:

- Check that

ann_benchmarks/algorithms/{YOUR_IMPLEMENTATION}/config.ymlcontains the parameter settings that you want to test - To run experiments on SIFT, invoke

python run.py --dataset glove-100-angular. Seepython run.py --helpfor more information on possible settings. Note that experiments can take a long time. - To process the results, either use

python plot.py --dataset glove-100-angularorpython create_website.py. An example call:python create_website.py --plottype recall/time --latex --scatter --outputdir website/.

Add your algorithm in the folder ann_benchmarks/algorithms/{YOUR_IMPLEMENTATION}/ by providing

- A small Python wrapper in

module.py - A Dockerfile named

Dockerfile - A set of hyper-parameters in

config.yml - A CI test run by adding your implementation to

.github/workflows/benchmarks.yml

Check the available implementations for inspiration.

- Everyone is welcome to submit pull requests with tweaks and changes to how each library is being used.

- In particular: if you are the author of any of these libraries, and you think the benchmark can be improved, consider making the improvement and submitting a pull request.

- This is meant to be an ongoing project and represent the current state.

- Make everything easy to replicate, including installing and preparing the datasets.

- Try many different values of parameters for each library and ignore the points that are not on the precision-performance frontier.

- High-dimensional datasets with approximately 100-1000 dimensions. This is challenging but also realistic. Not more than 1000 dimensions because those problems should probably be solved by doing dimensionality reduction separately.

- Single queries are used by default. ANN-Benchmarks enforces that only one CPU is saturated during experimentation, i.e., no multi-threading. A batch mode is available that provides all queries to the implementations at once. Add the flag

--batchtorun.pyandplot.pyto enable batch mode. - Avoid extremely costly index building (more than several hours).

- Focus on datasets that fit in RAM. For billion-scale benchmarks, see the related big-ann-benchmarks project.

- We mainly support CPU-based ANN algorithms. GPU support exists for FAISS, but it has to be compiled with GPU support locally and experiments must be run using the flags

--local --batch. - Do proper train/test set of index data and query points.

- Note that we consider that set similarity datasets are sparse and thus we pass a sorted array of integers to algorithms to represent the set of each user.

Built by Erik Bernhardsson with significant contributions from Martin Aumüller and Alexander Faithfull.

Design principles behind the benchmarking framework are described in the following publications:

- M. Aumüller, E. Bernhardsson, A. Faithfull: ANN-Benchmarks: A Benchmarking Tool for Approximate Nearest Neighbor Algorithms. Information Systems 2019. DOI: 10.1016/j.is.2019.02.006

- M. Aumüller, E. Bernhardsson, A. Faithfull: Reproducibility protocol for ANN-Benchmarks: A benchmarking tool for approximate nearest neighbor search algorithms, Artifacts.

- big-ann-benchmarks is a benchmarking effort for billion-scale approximate nearest neighbor search as part of the NeurIPS'21 Competition track.