Han Shu, Wenshuo Li, Yehui Tang, Yiman Zhang, Yihao Chen, Houqiang Li, Yunhe Wang, Xinghao Chen

arXiv 2023

- 2024/01/06: A demo of TinySAM is now available on OpenXLab. Thanks for the GPU grant.
- 2023/12/27: Models and a demo of TinySAM are now available on Hugging Face. Thanks to merveenoyan.
- 2023/12/27: Pre-trained models and code for Q-TinySAM (the quantized variant) are released.
- 2023/12/27: Evaluation code for zero-shot instance segmentation on COCO is released.
- 2023/12/22: Pre-trained models and code for TinySAM are released in both PyTorch and MindSpore.

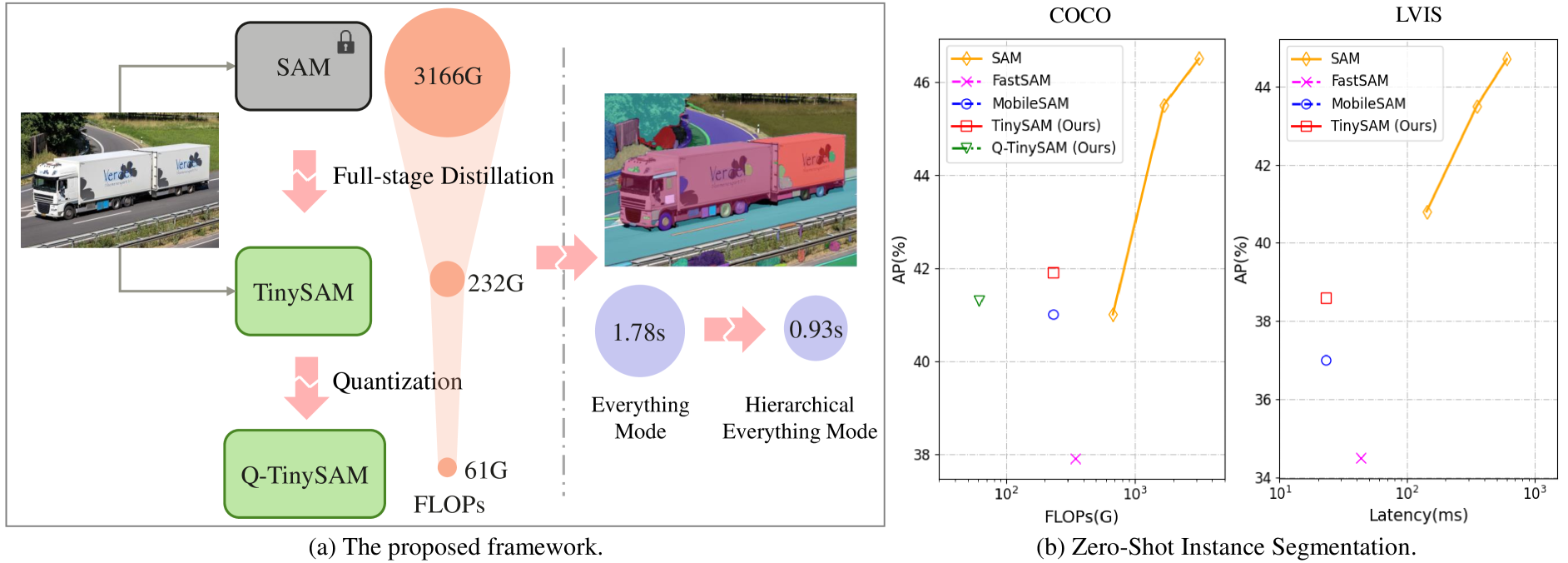

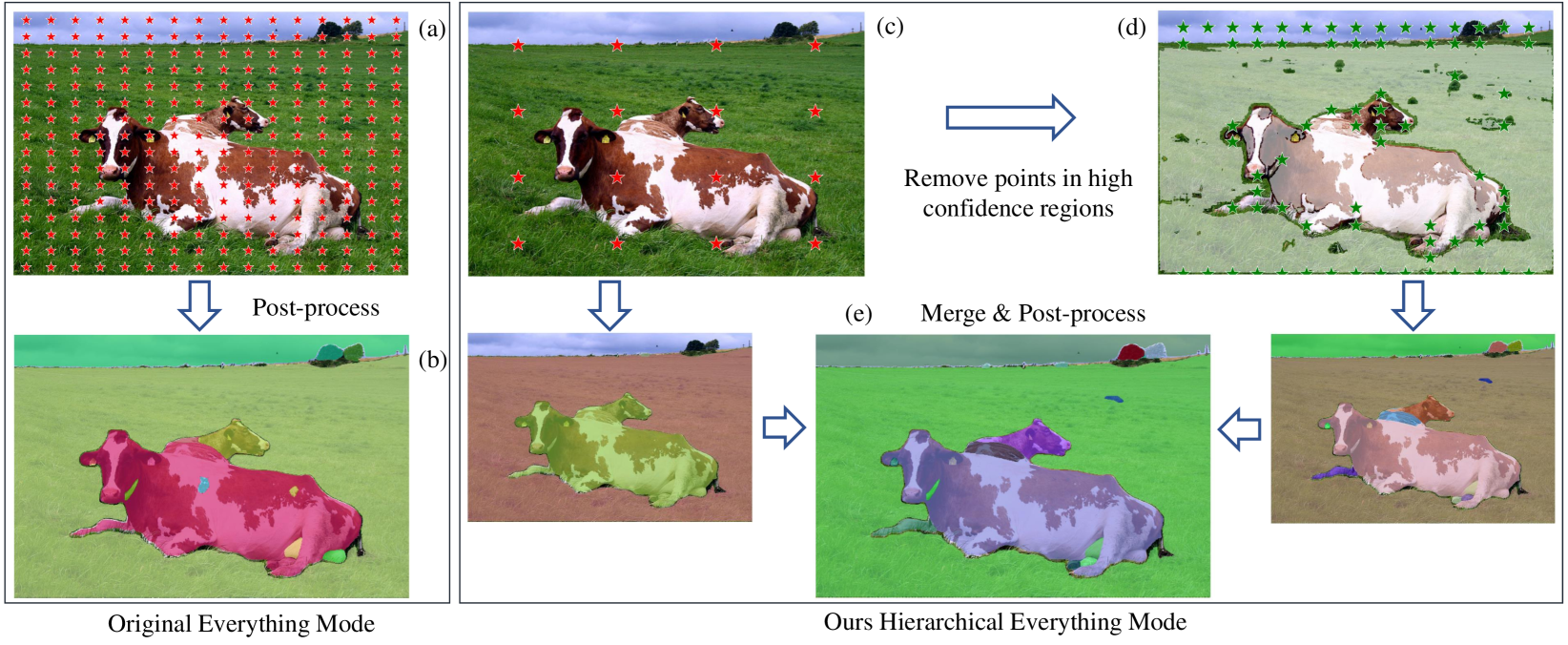

We propose a framework to obtain a tiny segment anything model (TinySAM) while maintaining strong zero-shot performance. We first propose a full-stage knowledge distillation method with an online hard prompt sampling strategy to distill a lightweight student model. We also adapt post-training quantization to the promptable segmentation task, further reducing the computational cost. Moreover, we propose a hierarchical segmenting everything strategy that accelerates everything-mode inference with almost no performance degradation. With all these methods, TinySAM reduces computation by orders of magnitude and pushes the envelope for the efficient segment anything task. Extensive experiments on various zero-shot transfer tasks demonstrate that TinySAM significantly outperforms counterpart methods.
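As a rough illustration of the hard prompt sampling idea, the sketch below draws the next point prompt from pixels where the student's mask disagrees with the teacher's. It is a simplified stand-in written for this README, not the authors' training code: the nested-list mask format and the function name `sample_hard_point` are hypothetical, and the 1/0 foreground/background label convention is borrowed from SAM's point prompts.

```python
import random
from typing import List, Optional, Tuple

Mask = List[List[int]]  # hypothetical format: binary mask as rows of 0/1


def sample_hard_point(
    teacher: Mask, student: Mask, rng: random.Random
) -> Optional[Tuple[int, int, int]]:
    """Sample one (x, y, label) prompt from pixels where the two masks disagree.

    The label is the teacher's value at that pixel: 1 (foreground) if the
    teacher marks it as object, 0 (background) otherwise.
    Returns None when the student already matches the teacher everywhere.
    """
    disagree = [
        (x, y)
        for y, (t_row, s_row) in enumerate(zip(teacher, student))
        for x, (t, s) in enumerate(zip(t_row, s_row))
        if t != s
    ]
    if not disagree:
        return None
    x, y = rng.choice(disagree)
    return x, y, teacher[y][x]


# The student misses one foreground pixel at (x=2, y=0), so that is the only candidate.
teacher = [[0, 1, 1], [0, 1, 1], [0, 0, 0]]
student = [[0, 1, 0], [0, 1, 1], [0, 0, 0]]
print(sample_hard_point(teacher, student, random.Random(0)))  # (2, 0, 1)
```

Sampling from the disagreement region focuses each extra prompt on the parts of the object the student still gets wrong, which is the intuition behind calling these prompts "hard".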

The code requires python>=3.7; we use torch==1.10.2 and torchvision==0.11.3. Visualizing the results additionally requires matplotlib>=3.5.1. Tested environment:

- python 3.7
- pytorch == 1.10.2
- torchvision == 0.11.3
- matplotlib == 3.5.1
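A small helper like the following (hypothetical, not part of the repository) can confirm that an environment meets these minimums. Versions are compared as integer tuples because plain string comparison ranks "1.9.0" above "1.10.2". It assumes purely numeric versions, optionally followed by a local suffix such as `+cu113`; pre-release tags like `rc1` are not handled.

```python
import sys
from typing import Tuple


def parse_version(v: str) -> Tuple[int, ...]:
    """Turn '1.10.2+cu113' into (1, 10, 2), dropping any local '+...' suffix."""
    core = v.split("+", 1)[0]
    return tuple(int(part) for part in core.split("."))


def meets_minimum(installed: str, required: str) -> bool:
    # Compare numerically: as strings, "1.9.0" > "1.10.2", which is wrong.
    return parse_version(installed) >= parse_version(required)


if __name__ == "__main__":
    py = ".".join(str(n) for n in sys.version_info[:3])
    print("python", py, "ok" if meets_minimum(py, "3.7") else "too old")
```

To check PyTorch the same way, pass `torch.__version__` as the installed version.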

- Download the checkpoints into the `weights` directory.
- Run the demo code for a single point or box prompt:

```bash
python demo.py
```
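When preparing your own prompts, it can help to see how point and box coordinates relate to the resized model input. The sketch below assumes TinySAM keeps SAM's preprocessing, where the image is scaled so that its longer side is 1024 pixels; the helper names are hypothetical and are not taken from demo.py. A box (x0, y0, x1, y1) is treated as two corner points.

```python
from typing import List, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]


def resized_shape(h: int, w: int, long_side: int = 1024) -> Tuple[int, int]:
    """(height, width) after scaling so the longer side equals long_side (rounded)."""
    scale = long_side / max(h, w)
    return int(h * scale + 0.5), int(w * scale + 0.5)


def transform_points(points: List[Point], h: int, w: int, long_side: int = 1024) -> List[Point]:
    """Map (x, y) pixel coordinates from the original image to the resized input."""
    new_h, new_w = resized_shape(h, w, long_side)
    return [(x * new_w / w, y * new_h / h) for x, y in points]


def transform_box(box: Box, h: int, w: int, long_side: int = 1024) -> Box:
    """Transform a box (x0, y0, x1, y1) by transforming its two corners."""
    (x0, y0), (x1, y1) = transform_points([box[:2], box[2:]], h, w, long_side)
    return x0, y0, x1, y1


# A 640x480 (w x h) image with one point prompt at its center.
print(transform_points([(320, 240)], h=480, w=640))  # [(512.0, 384.0)]
```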

- Run the demo code for the hierarchical segment everything strategy:

```bash
python demo_hierachical_everything.py
```
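The hierarchical strategy can be pictured roughly as follows, under the assumption (not spelled out in this README) that a sparse first-stage point grid is run first and that dense second-stage points falling inside confident first-stage masks are skipped. The cell-centered grid mimics SAM's everything-mode grid; the 1/4 ratio, the `covered` callback and all function names are illustrative assumptions, and running the model between the two stages is left out.

```python
from typing import Callable, List, Tuple

Point = Tuple[float, float]  # normalized (x, y) in [0, 1]


def point_grid(n_per_side: int) -> List[Point]:
    """n x n points at cell centers, in normalized coordinates."""
    offset = 1 / (2 * n_per_side)
    coords = [offset + i / n_per_side for i in range(n_per_side)]
    return [(x, y) for y in coords for x in coords]


def sparse_points(dense_per_side: int, ratio: int = 4) -> List[Point]:
    """Stage 1 (assumed): a coarser grid with 1/ratio as many points per side."""
    return point_grid(max(1, dense_per_side // ratio))


def remaining_dense_points(dense_per_side: int, covered: Callable[[Point], bool]) -> List[Point]:
    """Stage 2 (assumed): dense grid points not already inside a confident stage-1 mask.

    `covered` is a hypothetical callback built from the stage-1 model outputs.
    """
    return [p for p in point_grid(dense_per_side) if not covered(p)]


# Suppose stage 1 produced a confident mask covering the left half of the image.
stage1 = sparse_points(32)
stage2 = remaining_dense_points(32, covered=lambda p: p[0] < 0.5)
print(len(stage1), len(stage2))  # 64 512
```

In this toy case, 64 sparse prompts plus 512 remaining dense prompts replace the 1,024 prompts of a single dense pass, which is where the speed-up of such a scheme would come from.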

- Run the demo code for quantized inference:

```bash
python demo_quant.py
```
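The basic arithmetic behind post-training quantization is shown below using plain symmetric, per-tensor min-max scaling to 8-bit integers. This generic scheme is chosen only for illustration and is not the Q-TinySAM quantizer, whose choice of scaling factors this README does not describe; the function names are hypothetical.

```python
from typing import List, Tuple


def quantize_symmetric(values: List[float], n_bits: int = 8) -> Tuple[List[int], float]:
    """Min-max symmetric quantization: q = clamp(round(x / s), -qmax, qmax)."""
    qmax = 2 ** (n_bits - 1) - 1  # 127 for 8 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # avoid dividing by zero
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Map integers back to approximate real values."""
    return [x * scale for x in q]


weights = [-1.0, 0.0, 0.25, 1.0]
q, s = quantize_symmetric(weights)
print(q)  # [-127, 0, 32, 127]
```

Each reconstructed value differs from the original by at most half a quantization step (`scale / 2`); how well a quantizer chooses `scale` largely determines how much accuracy is lost.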

We follow the setting of the original SAM paper and evaluate zero-shot instance segmentation on the COCO and LVIS datasets. The results are as follows.

| Model | FLOPs (G) | COCO AP (%) | LVIS AP (%) |
|---|---|---|---|
| SAM-H | 3166 | 46.6/46.5* | 44.7 |
| SAM-L | 1681 | 46.2/45.5* | 43.5 |
| SAM-B | 677 | 43.4/41.0* | 40.8 |
| FastSAM | 344 | 37.9 | 34.5 |
| MobileSAM | 232 | 41.0 | 37.0 |
| TinySAM [ckpt] | 232 | 41.9 | 38.6 |
| Q-TinySAM [ckpt] | 61 | 41.3 | 37.2 |

\* Results with a single output mask (multimask_output=False).
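The relative savings in the table can be checked with a few lines of arithmetic. The FLOPs values below are copied from the table, and `reduction` is a hypothetical helper.

```python
# GFLOPs per model, copied from the table.
FLOPS_G = {
    "SAM-H": 3166, "SAM-L": 1681, "SAM-B": 677, "FastSAM": 344,
    "MobileSAM": 232, "TinySAM": 232, "Q-TinySAM": 61,
}


def reduction(model: str, baseline: str = "SAM-H") -> float:
    """How many times fewer FLOPs `model` needs than `baseline`."""
    return FLOPS_G[baseline] / FLOPS_G[model]


for name in ("TinySAM", "Q-TinySAM"):
    print(f"{name}: {reduction(name):.1f}x fewer FLOPs than SAM-H")
# TinySAM: 13.6x fewer FLOPs than SAM-H
# Q-TinySAM: 51.9x fewer FLOPs than SAM-H
```

At the same 232 GFLOPs as MobileSAM, TinySAM scores 0.9 points higher COCO AP and 1.6 points higher LVIS AP according to the table.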

First, download the detection boxes produced by the ViTDet model (coco_instances_results_vitdet.json) and the ground-truth instance segmentation labels (instances_val2017.json), and put them into eval/json_files.

The corresponding JSON files for the LVIS dataset are lvis_instances_results_vitdet.json and lvis_v1_val.json.
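Assuming the ViTDet file follows the standard COCO results format (a JSON list of records with `image_id`, `category_id`, `bbox` and `score`), the boxes can be grouped per image and converted into corner-format box prompts as sketched below. COCO stores `bbox` as [x, y, width, height]. This is an illustrative helper, not the code behind eval_coco.sh, and the function names are hypothetical.

```python
import json
from collections import defaultdict
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]


def xywh_to_xyxy(b: List[float]) -> Box:
    """COCO [x, y, width, height] -> corner format (x0, y0, x1, y1)."""
    x, y, w, h = b
    return x, y, x + w, y + h


def boxes_by_image(detections: List[dict]) -> Dict[int, List[Box]]:
    """Group COCO-format detection results per image_id, keeping file order."""
    grouped: Dict[int, List[Box]] = defaultdict(list)
    for det in detections:
        grouped[det["image_id"]].append(xywh_to_xyxy(det["bbox"]))
    return dict(grouped)


def load_boxes(path: str = "eval/json_files/coco_instances_results_vitdet.json") -> Dict[int, List[Box]]:
    """Read the detection file and return corner-format boxes per image."""
    with open(path) as f:
        return boxes_by_image(json.load(f))
```

Each grouped box then serves as one box prompt, yielding one predicted mask per detection.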

Run the following command to evaluate zero-shot instance segmentation on COCO:

```bash
cd eval; sh eval_coco.sh
```

The results should be:

```
Average Precision (AP) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.419
Average Precision (AP) @[ IoU=0.50 | area= all | maxDets=100 ] = 0.683
Average Precision (AP) @[ IoU=0.75 | area= all | maxDets=100 ] = 0.436
Average Precision (AP) @[ IoU=0.50:0.95 | area= small | maxDets=100 ] = 0.260
Average Precision (AP) @[ IoU=0.50:0.95 | area=medium | maxDets=100 ] = 0.456
Average Precision (AP) @[ IoU=0.50:0.95 | area= large | maxDets=100 ] = 0.583
Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 1 ] = 0.325
Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 10 ] = 0.511
Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.532
Average Recall (AR) @[ IoU=0.50:0.95 | area= small | maxDets=100 ] = 0.390
Average Recall (AR) @[ IoU=0.50:0.95 | area=medium | maxDets=100 ] = 0.577
Average Recall (AR) @[ IoU=0.50:0.95 | area= large | maxDets=100 ] = 0.671
```
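The first line is COCO's primary metric and matches the 41.9 COCO AP reported for TinySAM in the table: AP averaged over ten IoU thresholds from 0.50 to 0.95 in steps of 0.05. The toy sketch below shows how a single matched mask fares against those thresholds. The summary format above is that of pycocotools' COCOeval, which works on run-length-encoded masks; the pixel-set representation and function names here are simplifications for illustration only.

```python
from typing import Set, Tuple

Pixel = Tuple[int, int]

# The ten IoU thresholds behind "IoU=0.50:0.95": 0.50, 0.55, ..., 0.95.
IOU_THRESHOLDS = [round(0.50 + 0.05 * i, 2) for i in range(10)]


def mask_iou(a: Set[Pixel], b: Set[Pixel]) -> float:
    """Intersection over union of two masks given as sets of (x, y) pixels."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def thresholds_passed(iou: float) -> int:
    """How many of the ten thresholds count this prediction as a true positive."""
    return sum(iou >= t for t in IOU_THRESHOLDS)


# A 10x10 ground-truth square and a prediction shifted one pixel to the right.
gt = {(x, y) for x in range(10) for y in range(10)}
pred = {(x + 1, y) for x, y in gt}
print(round(mask_iou(gt, pred), 3), thresholds_passed(mask_iou(gt, pred)))  # 0.818 7
```

A one-pixel shift already drops this mask below the three strictest thresholds, which is why AP@[0.50:0.95] (0.419) is much lower than AP at IoU=0.50 (0.683).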

We thank the following projects: SAM, MobileSAM, TinyViT.

```bibtex
@article{tinysam,
  title={TinySAM: Pushing the Envelope for Efficient Segment Anything Model},
  author={Shu, Han and Li, Wenshuo and Tang, Yehui and Zhang, Yiman and Chen, Yihao and Li, Houqiang and Wang, Yunhe and Chen, Xinghao},
  journal={arXiv preprint arXiv:2312.13789},
  year={2023}
}
```

This project is licensed under the Apache License 2.0. Redistribution and use should follow this license.