Official implementation of a neural operator as described in Involution: Inverting the Inherence of Convolution for Visual Recognition (CVPR'21)

By Duo Li, Jie Hu, Changhu Wang, Xiangtai Li, Qi She, Lei Zhu, Tong Zhang, and Qifeng Chen

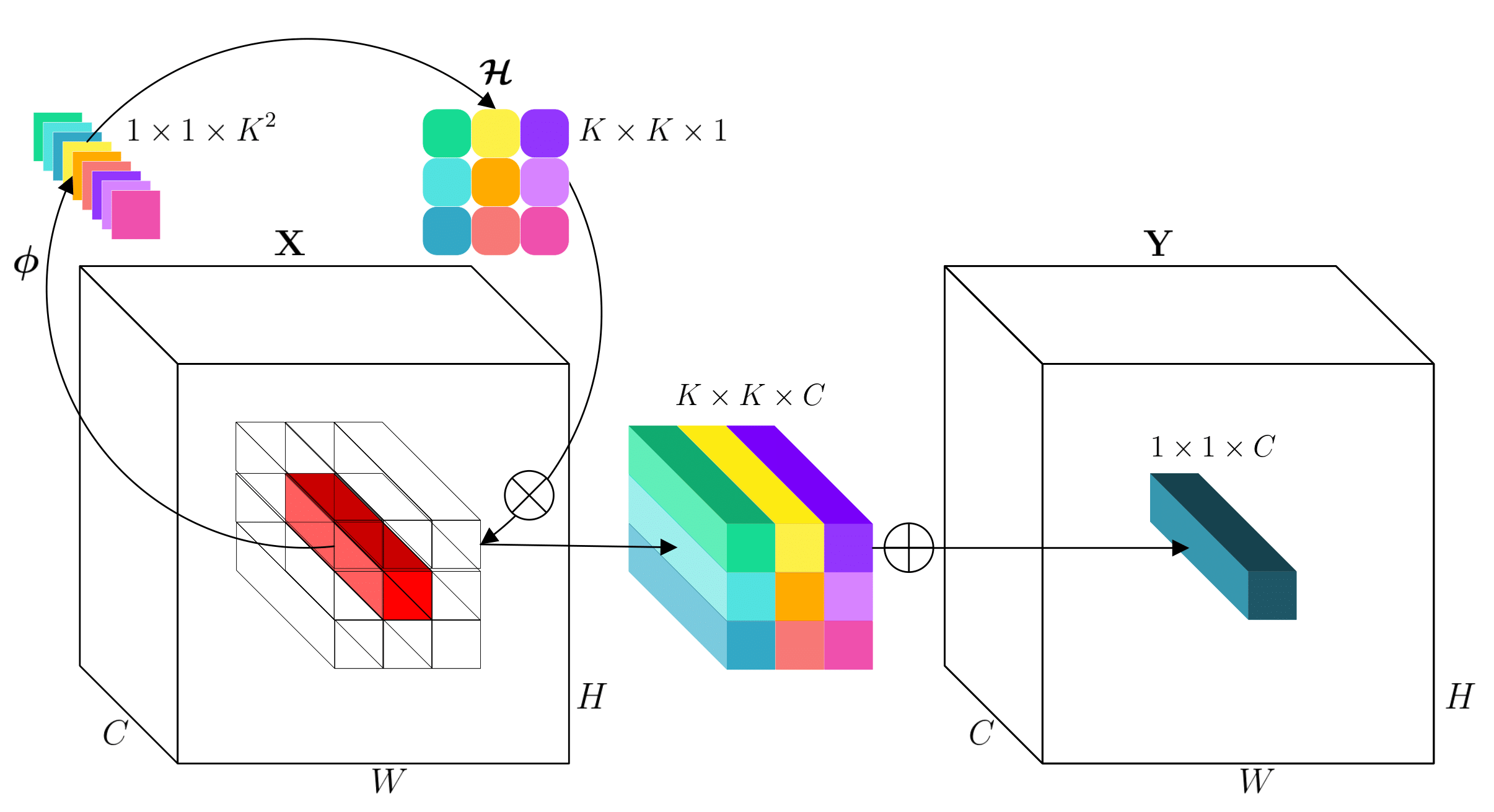

TL;DR: involution is a general-purpose neural primitive that is versatile for a spectrum of deep learning models on different vision tasks. involution bridges convolution and self-attention in design, while being more efficient and effective than convolution and simpler in form than self-attention.
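To make the idea concrete, here is a minimal NumPy sketch of an involution layer following the paper's description. It is not the repository's CuPy implementation. The per-pixel kernel is generated from that pixel's own features by a two-layer bottleneck (`w0`, `w1`, named after the paper's W0/W1). That kernel is applied over a fixed K×K window and shared by all channels in a group. Stride 1, zero padding, an odd kernel size, and a plain ReLU (no BatchNorm) are simplifying assumptions.

```python
import numpy as np


def involution(x, w0, w1, kernel_size=3, groups=1):
    """Minimal NumPy sketch of involution with stride 1 and zero padding.

    x:  input feature map, shape (C, H, W)
    w0: first kernel-generation weights, shape (C_hidden, C)
    w1: second kernel-generation weights, shape (K*K*G, C_hidden)
    Returns an output feature map of shape (C, H, W).
    """
    c, h, w = x.shape
    k = kernel_size
    assert k % 2 == 1, "this sketch assumes an odd kernel size"
    assert c % groups == 0, "channels must be divisible by groups"
    assert w1.shape[0] == k * k * groups, "w1 must produce K*K*G kernel values"

    # 1) Kernel generation: each pixel's feature vector goes through a
    #    two-layer bottleneck (equivalent to two 1x1 convs) with ReLU in between.
    pixels = x.reshape(c, h * w)                           # (C, H*W)
    hidden = np.maximum(w0 @ pixels, 0.0)                  # (C_hidden, H*W)
    kernels = (w1 @ hidden).reshape(groups, k * k, h, w)   # one KxK kernel per group per pixel

    # 2) Unfold: collect the KxK neighbourhood of every pixel from a zero-padded input.
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    patches = np.stack(
        [xp[:, u:u + h, v:v + w] for u in range(k) for v in range(k)], axis=1
    )                                                      # (C, K*K, H, W)

    # 3) Multiply-add: all channels in a group reuse that group's per-pixel kernel.
    patches = patches.reshape(groups, c // groups, k * k, h, w)
    out = (kernels[:, None] * patches).sum(axis=2)         # (G, C/G, H, W)
    return out.reshape(c, h, w)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.standard_normal((16, 8, 8))
    y = involution(x, rng.standard_normal((4, 16)), rng.standard_normal((9 * 4, 4)),
                   kernel_size=3, groups=4)
    print(y.shape)  # (16, 8, 8)
```

The contrast with convolution lies in where the weights live. Here the kernel varies from pixel to pixel and is computed from the input, much like attention weights. However, it still covers a fixed local window and is shared across the channels of a group, the inverse of convolution's spatial-agnostic, channel-specific design.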

This repository is fully built upon the OpenMMLab toolkits. For each individual task, the config and model files follow the same directory organization as mmcls, mmdet, and mmseg, respectively, so just copy them into the corresponding locations to get started.

For example, to evaluate the detectors:

```bash
git clone https://github.com/open-mmlab/mmdetection # and install

# copy model files
cp det/mmdet/models/backbones/* mmdetection/mmdet/models/backbones
cp det/mmdet/models/necks/* mmdetection/mmdet/models/necks
cp det/mmdet/models/utils/* mmdetection/mmdet/models/utils

# copy config files
cp det/configs/_base_/models/* mmdetection/configs/_base_/models
cp det/configs/_base_/schedules/* mmdetection/configs/_base_/schedules
cp -r det/configs/involution mmdetection/configs

# evaluate checkpoints
cd mmdetection
bash tools/dist_test.sh ${CONFIG_FILE} ${CHECKPOINT_FILE} ${GPU_NUM} [--out ${RESULT_FILE}] [--eval ${EVAL_METRICS}]
```

For more detailed guidance, please refer to the original mmcls, mmdet, and mmseg tutorials.

Currently, we provide a memory-efficient implementation of the involution operator based on CuPy, so please install CuPy beforehand. A customized CUDA kernel could bring further acceleration on the hardware. Contributions from the community on this are welcome!
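Because the operator depends on CuPy, a quick environment check before launching training or evaluation can save a failed job. The helper below is a hypothetical convenience function, not part of this repository. It only verifies that the `cupy` package is importable and that CuPy can see at least one CUDA device. The correct CuPy package to install depends on your CUDA version, so consult the CuPy installation guide.

```python
import importlib.util


def cupy_ready() -> bool:
    """Hypothetical helper: True if CuPy is installed and can see a CUDA device."""
    if importlib.util.find_spec("cupy") is None:
        return False  # CuPy is not installed at all
    try:
        import cupy
        return cupy.cuda.runtime.getDeviceCount() > 0
    except Exception:  # e.g. no visible GPU or a CUDA driver/runtime mismatch
        return False


if __name__ == "__main__":
    print("CuPy ready:", cupy_ready())
```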

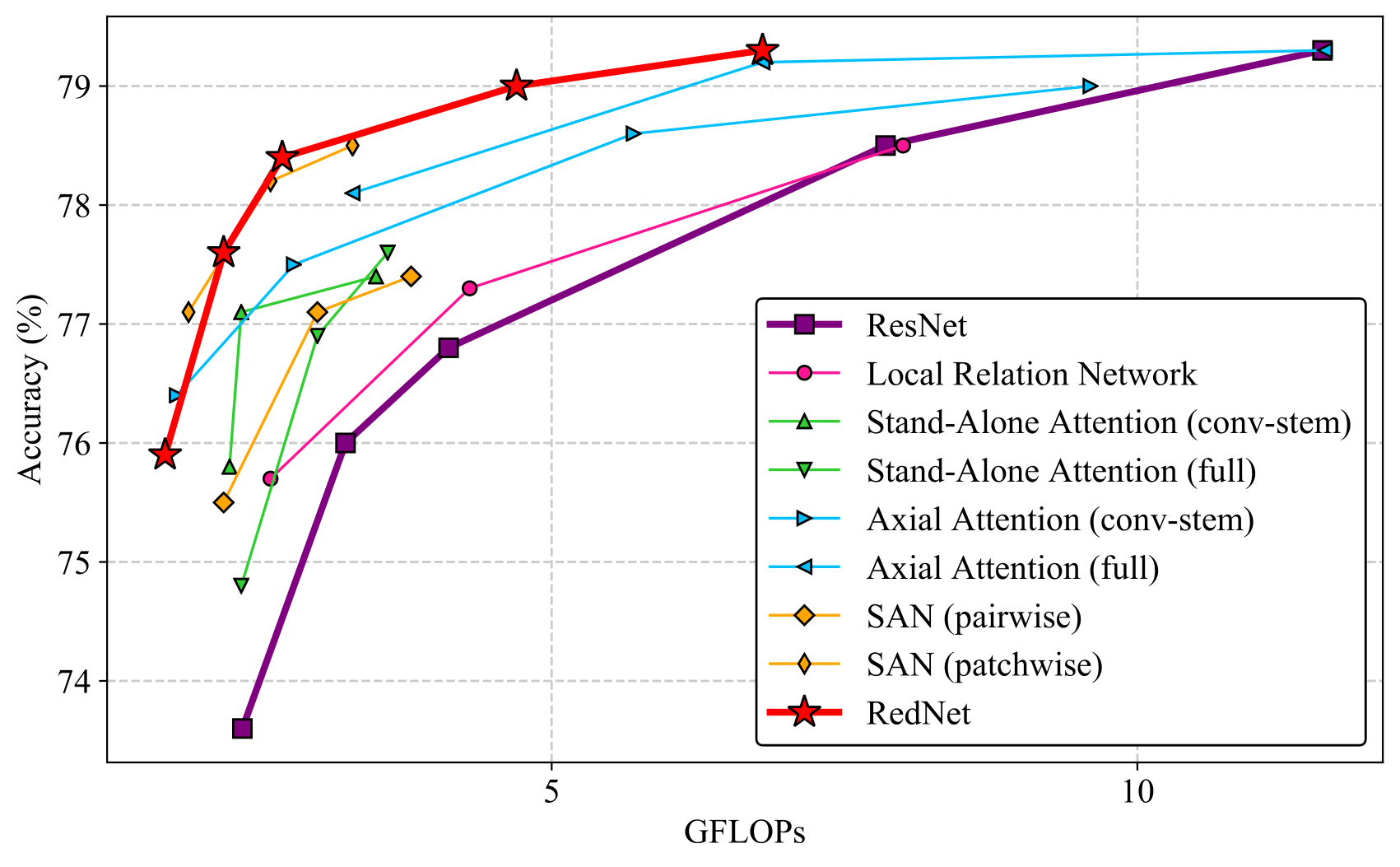

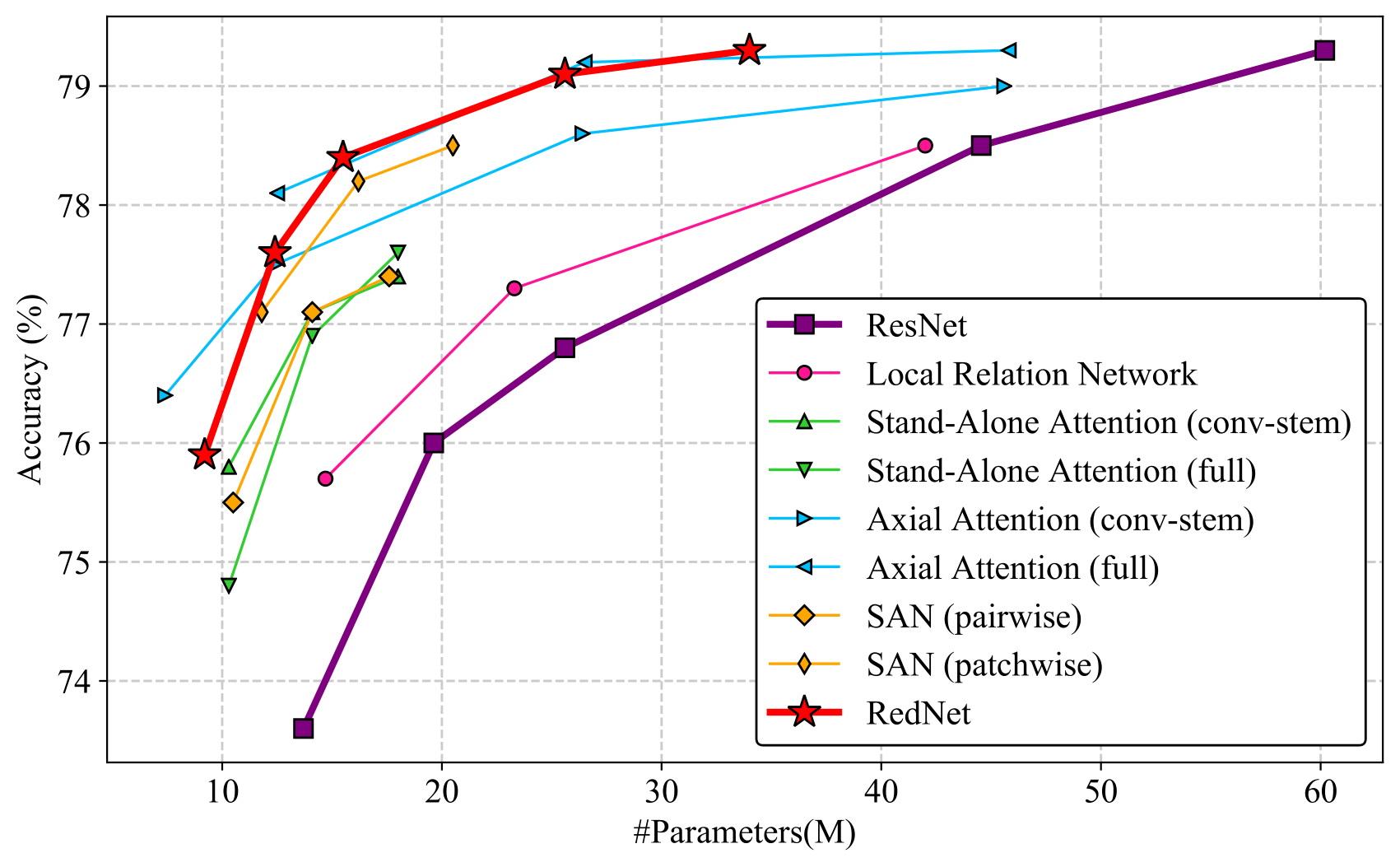

Reductions in parameters/FLOPs (↓, relative %) and performance gains (↑, absolute points) compared to the convolution baselines are given in parentheses. Some of these checkpoints come from our reimplementation runs, so their performance may differ slightly from that reported in our paper. Models are trained with 64 GPUs on ImageNet, 8 GPUs on COCO, and 4 GPUs on Cityscapes.

| Model | Params(M) | FLOPs(G) | Top-1 (%) | Top-5 (%) | Config | Download |
|---|---|---|---|---|---|---|
| RedNet-26 | 9.23(32.8%↓) | 1.73(29.2%↓) | 75.96 | 93.19 | config | model \| log |
| RedNet-38 | 12.39(36.7%↓) | 2.22(31.3%↓) | 77.48 | 93.57 | config | model \| log |
| RedNet-50 | 15.54(39.5%↓) | 2.71(34.1%↓) | 78.35 | 94.13 | config | model \| log |
| RedNet-101 | 25.65(42.6%↓) | 4.74(40.5%↓) | 78.92 | 94.35 | config | model \| log |
| RedNet-152 | 33.99(43.5%↓) | 6.79(41.4%↓) | 79.12 | 94.38 | config | model \| log |

Before fine-tuning on the following downstream tasks, download the ImageNet-pretrained RedNet-50 weights and set the `pretrained` argument in `det/configs/_base_/models/*.py` or `seg/configs/_base_/models/*.py` to your local path.
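Since several base config files may need the same change, the edit can be scripted. The sketch below is a hypothetical helper, not part of the repository. It assumes each config writes the argument literally as `pretrained='...'`, `pretrained="..."`, or `pretrained=None`, and rewrites every such occurrence to point at a local checkpoint.

```python
import re
from pathlib import Path

# Matches a `pretrained=` keyword (not e.g. `use_pretrained=`) set to a string literal or None.
_PRETRAINED_RE = re.compile(r"\bpretrained\s*=\s*(?:'[^']*'|\"[^\"]*\"|None)")


def set_pretrained(config_text: str, checkpoint_path: str) -> str:
    """Return config_text with every `pretrained=...` value replaced by checkpoint_path."""
    # A function replacement avoids backslash escaping issues with Windows paths.
    return _PRETRAINED_RE.sub(lambda m: f"pretrained={checkpoint_path!r}", config_text)


def patch_configs(root: str, pattern: str, checkpoint_path: str) -> list:
    """Rewrite all files under root matching pattern in place; return the changed paths."""
    changed = []
    for path in sorted(Path(root).glob(pattern)):
        text = path.read_text()
        new_text = set_pretrained(text, checkpoint_path)
        if new_text != text:
            path.write_text(new_text)
            changed.append(str(path))
    return changed


if __name__ == "__main__":
    # Hypothetical checkpoint location; replace with your own download path.
    print(patch_configs(".", "det/configs/_base_/models/*.py", "/path/to/rednet50.pth"))
```

Review the diff afterwards: a regex rewrite cannot tell whether a given `pretrained` entry belongs to the backbone you intend to initialize.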

| Backbone | Neck | Style | Lr schd | Params(M) | FLOPs(G) | box AP | Config | Download |
|---|---|---|---|---|---|---|---|---|
| RedNet-50-FPN | convolution | pytorch | 1x | 31.6(23.9%↓) | 177.9(14.1%↓) | 39.5(1.8↑) | config | model \| log |
| RedNet-50-FPN | involution | pytorch | 1x | 29.5(28.9%↓) | 135.0(34.8%↓) | 40.2(2.5↑) | config | model \| log |

| Backbone | Neck | Style | Lr schd | Params(M) | FLOPs(G) | box AP | mask AP | Config | Download |
|---|---|---|---|---|---|---|---|---|---|
| RedNet-50-FPN | convolution | pytorch | 1x | 34.2(22.6%↓) | 224.2(11.5%↓) | 39.9(1.5↑) | 35.7(0.8↑) | config | model \| log |
| RedNet-50-FPN | involution | pytorch | 1x | 32.2(27.1%↓) | 181.3(28.5%↓) | 40.8(2.4↑) | 36.4(1.3↑) | config | model \| log |

| Backbone | Neck | Style | Lr schd | Params(M) | FLOPs(G) | box AP | Config | Download |
|---|---|---|---|---|---|---|---|---|
| RedNet-50-FPN | convolution | pytorch | 1x | 27.8(26.3%↓) | 210.1(12.2%↓) | 38.2(1.6↑) | config | model \| log |
| RedNet-50-FPN | involution | pytorch | 1x | 26.3(30.2%↓) | 199.9(16.5%↓) | 38.2(1.6↑) | config | model \| log |

| Method | Backbone | Neck | Crop Size | Lr schd | Params(M) | FLOPs(G) | mIoU | Config | Download |
|---|---|---|---|---|---|---|---|---|---|
| FPN | RedNet-50 | convolution | 512x1024 | 80000 | 18.5(35.1%↓) | 293.9(19.0%↓) | 78.0(3.6↑) | config | model \| log |
| FPN | RedNet-50 | involution | 512x1024 | 80000 | 16.4(42.5%↓) | 205.2(43.4%↓) | 79.1(4.7↑) | config | model \| log |
| UPerNet | RedNet-50 | convolution | 512x1024 | 80000 | 56.4(15.1%↓) | 1825.6(3.6%↓) | 80.6(2.4↑) | config | model \| log |
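The parenthesized numbers in these tables can be turned back into the convolution-baseline values. This assumes, as the notation suggests, that parameter/FLOPs changes are relative percentages and accuracy changes (Top-1, AP, mIoU) are absolute points. The two helpers below are illustrative only. Because the printed percentages are rounded, the recovered baselines are approximate.

```python
def baseline_from_reduction(value: float, reduction_pct: float) -> float:
    """Undo a relative reduction: value = baseline * (1 - reduction_pct / 100)."""
    return value / (1.0 - reduction_pct / 100.0)


def baseline_from_gain(value: float, gain_points: float) -> float:
    """Undo an absolute gain in metric points: value = baseline + gain_points."""
    return value - gain_points


# RedNet-26 row: 9.23M params (32.8% fewer) and 1.73 GFLOPs (29.2% fewer).
print(round(baseline_from_reduction(9.23, 32.8), 2))  # 13.74
print(round(baseline_from_reduction(1.73, 29.2), 2))  # 2.44
# First COCO table, involution neck: 40.2 box AP (+2.5 points).
print(round(baseline_from_gain(40.2, 2.5), 1))  # 37.7
```

As a consistency check, both rows of the first COCO table imply the same baseline of about 37.7 box AP (39.5 - 1.8 and 40.2 - 2.5). Both parameter counts in that table also point to a baseline of roughly 41.5M parameters.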

If you find our work useful in your research, please cite:

```bibtex
@InProceedings{Li_2021_CVPR,
    author = {Li, Duo and Hu, Jie and Wang, Changhu and Li, Xiangtai and She, Qi and Zhu, Lei and Zhang, Tong and Chen, Qifeng},
    title = {Involution: Inverting the Inherence of Convolution for Visual Recognition},
    booktitle = {IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
    month = {June},
    year = {2021}
}
```