This repository contains code to instantiate and deploy an object detection model. This model recognizes the objects present in an image from the 80 different high-level classes of objects in the COCO Dataset. The model consists of a deep convolutional net base model for image feature extraction, together with additional convolutional layers specialized for the task of object detection, and was trained on the COCO Dataset. The input to the model is an image, and the output is a list of the objects detected in the image, each with an estimated class probability and a bounding box.

The model is based on the SSD MobileNet V1 and Faster R-CNN ResNet101 object detection models for TensorFlow. The model files are hosted on IBM Cloud Object Storage: ssd_mobilenet_v1.tar.gz and faster_rcnn_resnet101.tar.gz. The code in this repository deploys the model as a web service in a Docker container. This repository was developed as part of the IBM Developer Model Asset Exchange, and the public API is powered by IBM Cloud.

| Domain | Application | Industry | Framework | Training Data | Input Data Format |
|---|---|---|---|---|---|
| Vision | Object Detection | General | TensorFlow | COCO Dataset | Image (RGB/HWC) |

- J. Huang, V. Rathod, C. Sun, M. Zhu, A. Korattikara, A. Fathi, I. Fischer, Z. Wojna, Y. Song, S. Guadarrama, K. Murphy, "Speed/accuracy trade-offs for modern convolutional object detectors", CVPR 2017
- T.-Y. Lin, M. Maire, S. Belongie, L. Bourdev, R. Girshick, J. Hays, P. Perona, D. Ramanan, C. L. Zitnick, P. Dollár, "Microsoft COCO: Common Objects in Context", arXiv 2015
- W. Liu, D. Anguelov, D. Erhan, C. Szegedy, S. Reed, C. Fu, A. C. Berg, "SSD: Single Shot MultiBox Detector", CoRR (abs/1512.02325), 2016
- A. G. Howard, M. Zhu, B. Chen, D. Kalenichenko, W. Wang, T. Weyand, M. Andreetto, H. Adam, "MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications", arXiv 2017
- TensorFlow Object Detection GitHub Repo

| Component | License | Link |
|---|---|---|
| This repository | Apache 2.0 | LICENSE |
| Model Weights | Apache 2.0 | TensorFlow Models Repo |
| Model Code (3rd party) | Apache 2.0 | TensorFlow Models Repo |
| Test Samples | Various | Samples README |

- docker: The Docker command-line interface. Follow the installation instructions for your system.
- The minimum recommended resources for this model are 2 GB of memory and 2 CPUs.

To run the docker image, which automatically starts the model serving API, run:

Intel CPUs:

$ docker run -it -p 5000:5000 quay.io/codait/max-object-detector

ARM CPUs (e.g. Raspberry Pi):

$ docker run -it -p 5000:5000 quay.io/codait/max-object-detector:arm-arm32v7-latest

This will pull a pre-built image from the Quay.io container registry (or use an existing image if already cached locally) and run it. If you'd rather check out and build the model locally, you can follow the run locally steps below.

You can deploy the model-serving microservice on Red Hat OpenShift by following the instructions for the OpenShift web console or the OpenShift Container Platform CLI in this tutorial, specifying quay.io/codait/max-object-detector as the image name.

You can also deploy the model on Kubernetes using the latest docker image on Quay.

On your Kubernetes cluster, run the following command:

$ kubectl apply -f https://raw.githubusercontent.com/IBM/MAX-Object-Detector/master/max-object-detector.yaml

The model will be available internally at port 5000, but can also be accessed externally through the NodePort.

A more elaborate tutorial on how to deploy this MAX model to production on IBM Cloud can be found here.

Clone this repository locally. In a terminal, run the following command:

$ git clone https://github.com/IBM/MAX-Object-Detector.git

Change directory into the repository base folder:

$ cd MAX-Object-Detector

To build the docker image locally for Intel CPUs, run:

$ docker build -t max-object-detector .

To select a model, pass in the --build-arg model=<desired-model> switch:

$ docker build --build-arg model=faster_rcnn_resnet101 -t max-object-detector .

Two models are currently supported: ssd_mobilenet_v1 (the default) and faster_rcnn_resnet101.

For ARM CPUs (e.g. Raspberry Pi), run:

$ docker build -f Dockerfile.arm32v7 -t max-object-detector .

All required model assets will be downloaded during the build process. Note that this docker image is currently CPU only (we will add support for GPU images later).

To run the docker image, which automatically starts the model serving API, run:

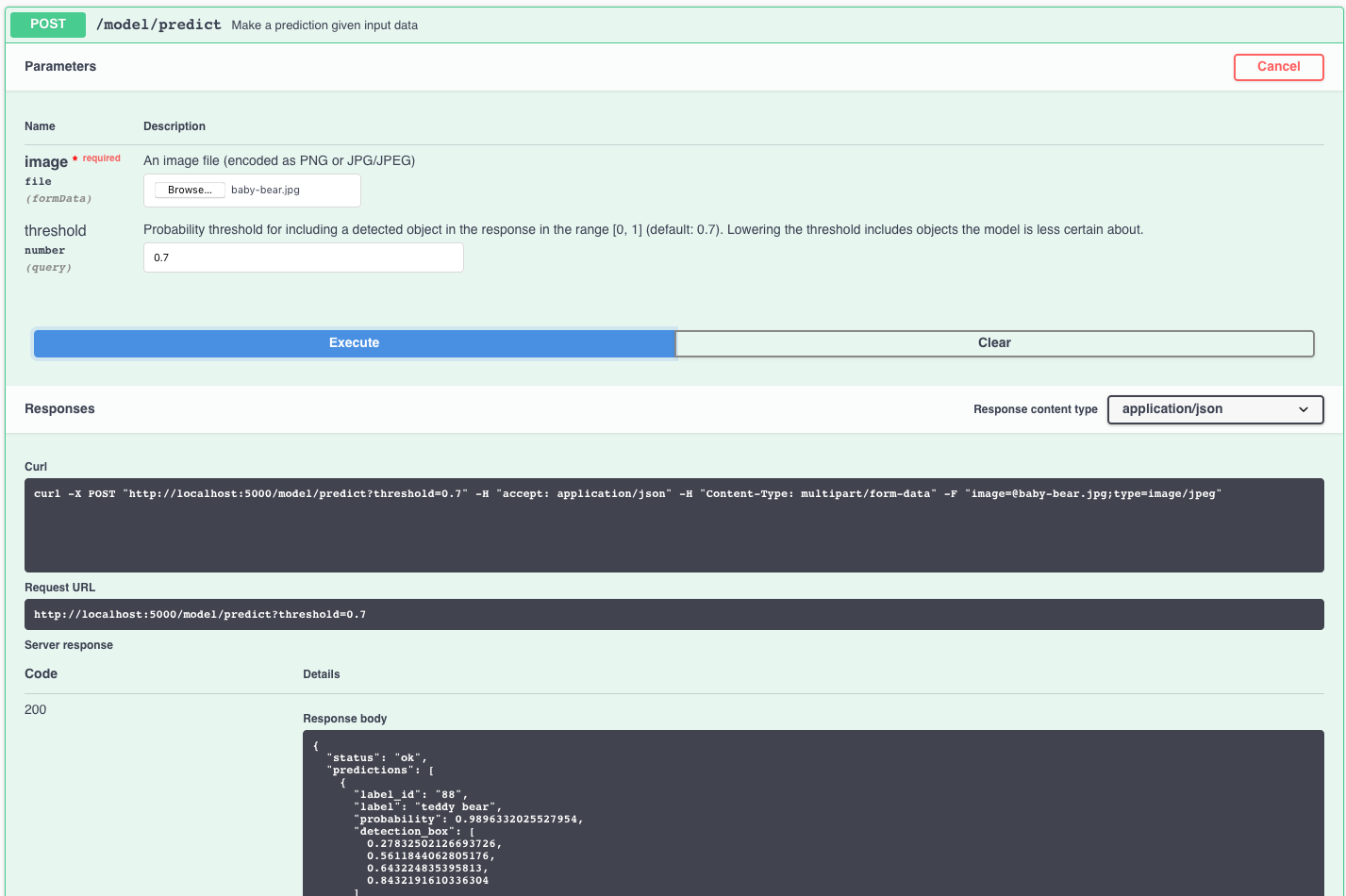

$ docker run -it -p 5000:5000 max-object-detectorThe API server automatically generates an interactive Swagger documentation page. Go to http://localhost:5000 to load it. From there you can explore the API and also create test requests.
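Right after `docker run`, the Swagger page at http://localhost:5000 may not answer until the server has finished starting. If you script against the API, a minimal Python sketch like the one below (standard library only) can poll that URL until it returns HTTP 200 or a timeout expires. `wait_for_api` and its timeout defaults are hypothetical and not part of this repository; it only checks that the page is served, not that a prediction succeeds.

```python
import http.client
import time
import urllib.request


def wait_for_api(base_url: str = "http://localhost:5000",
                 timeout_s: float = 60.0,
                 interval_s: float = 1.0) -> bool:
    """Hypothetical helper: poll base_url until it returns HTTP 200.

    Returns True once the page answers with 200, or False after timeout_s seconds.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with urllib.request.urlopen(base_url, timeout=interval_s) as resp:
                if resp.status == 200:
                    return True
        except (OSError, http.client.HTTPException):
            # Connection refused, socket timeouts and HTTP errors
            # (URLError/HTTPError subclass OSError): server not ready yet.
            pass
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)
```

For example, call `wait_for_api()` at the top of a test script before sending any requests to the model/predict endpoint.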

Use the model/predict endpoint to load a test image (you can use one of the test images from the samples folder) and get predicted labels for the image from the API. The coordinates of each bounding box are returned in the detection_box field as an array of normalized coordinates (ranging from 0 to 1) in the form [ymin, xmin, ymax, xmax].
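Because detection_box is normalized, drawing a box on the original image requires scaling by that image's width and height. The Python sketch below shows one way to do this, assuming the JSON response has already been decoded into a dict with the `predictions` structure shown in the sample response, and that you know the original image size (for example, from Pillow, which the demo notebook already uses). The function names are hypothetical helpers, not part of the MAX API.

```python
from typing import Any, Dict, List, Sequence, Tuple

PixelBox = Tuple[int, int, int, int]


def box_to_pixels(box: Sequence[float], width: int, height: int) -> PixelBox:
    """Convert a normalized [ymin, xmin, ymax, xmax] box into
    (left, top, right, bottom) pixel coordinates, rounded to the nearest pixel."""
    ymin, xmin, ymax, xmax = box
    return (round(xmin * width), round(ymin * height),
            round(xmax * width), round(ymax * height))


def predictions_in_pixels(response: Dict[str, Any], width: int, height: int
                          ) -> List[Tuple[str, float, PixelBox]]:
    """Map each entry of response["predictions"] to (label, probability, pixel box)."""
    return [
        (p["label"], p["probability"],
         box_to_pixels(p["detection_box"], width, height))
        for p in response.get("predictions", [])
    ]
```

The (left, top, right, bottom) order is chosen because many drawing APIs, such as Pillow's `ImageDraw.rectangle`, accept boxes in that order.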

You can also test it on the command line, for example:

$ curl -F "image=@samples/dog-human.jpg" -XPOST http://127.0.0.1:5000/model/predict

You should see a JSON response like the one below:

{
  "status": "ok",
  "predictions": [
    {
      "label_id": "1",
      "label": "person",
      "probability": 0.944034993648529,
      "detection_box": [
        0.1242099404335022,
        0.12507188320159912,
        0.8423267006874084,
        0.5974075794219971
      ]
    },
    {
      "label_id": "18",
      "label": "dog",
      "probability": 0.8645511865615845,
      "detection_box": [
        0.10447660088539124,
        0.17799153923988342,
        0.8422801494598389,
        0.732001781463623
      ]
    }
  ]
}

You can also control the probability threshold for what objects are returned using the threshold argument like below:

$ curl -F "image=@samples/dog-human.jpg" -XPOST 'http://127.0.0.1:5000/model/predict?threshold=0.5'

The optional threshold parameter is the minimum probability value for predicted labels returned by the model. The URL is quoted so that shells such as zsh do not treat `?` as a glob character.

The default value for threshold is 0.7.
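To call the endpoint from Python without curl, the sketch below uses only the standard library to build the same multipart POST as the curl examples. As in those commands, it assumes that the form field is named `image` and that `threshold` is sent as a query parameter. `build_predict_request` is a hypothetical helper, and the part's Content-Type is guessed from the file extension, as curl does. The function only builds the request; sending it is left to `urllib.request.urlopen`.

```python
import mimetypes
import urllib.parse
import urllib.request
import uuid
from typing import Optional


def build_predict_request(image_bytes: bytes, filename: str,
                          base_url: str = "http://127.0.0.1:5000",
                          threshold: Optional[float] = None) -> urllib.request.Request:
    """Build (but do not send) a POST equivalent to
    curl -F "image=@<file>" <base_url>/model/predict[?threshold=...]."""
    url = base_url.rstrip("/") + "/model/predict"
    if threshold is not None:
        url += "?" + urllib.parse.urlencode({"threshold": threshold})
    # Guess the part's content type from the extension, falling back to binary.
    content_type = mimetypes.guess_type(filename)[0] or "application/octet-stream"
    boundary = uuid.uuid4().hex
    body = b"".join([
        f"--{boundary}\r\n".encode(),
        f'Content-Disposition: form-data; name="image"; filename="{filename}"\r\n'.encode(),
        f"Content-Type: {content_type}\r\n\r\n".encode(),
        image_bytes,
        f"\r\n--{boundary}--\r\n".encode(),
    ])
    headers = {"Content-Type": f"multipart/form-data; boundary={boundary}"}
    return urllib.request.Request(url, data=body, headers=headers, method="POST")

# Usage (requires a running server):
#   import json
#   with open("samples/dog-human.jpg", "rb") as f:
#       req = build_predict_request(f.read(), "dog-human.jpg", threshold=0.5)
#   with urllib.request.urlopen(req) as resp:
#       result = json.load(resp)
```

Omitting `threshold` leaves the query string off entirely, so the server applies its default of 0.7.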

The demo notebook walks through how to use the model to detect objects in an image and visualize the results. By default, the notebook uses the hosted demo instance, but you can use a locally running instance (see the comments in Cell 3 for details). Note that the demo requires jupyter, matplotlib, Pillow, and requests.

Run the following command from the model repo base folder, in a new terminal window:

$ jupyter notebook

This will start the notebook server. You can launch the demo notebook by clicking on demo.ipynb.

To run the Flask API app in debug mode, edit config.py to set DEBUG = True under the application settings. You will then need to rebuild the docker image (see the docker build commands above).

To stop the Docker container, type CTRL + C in your terminal.

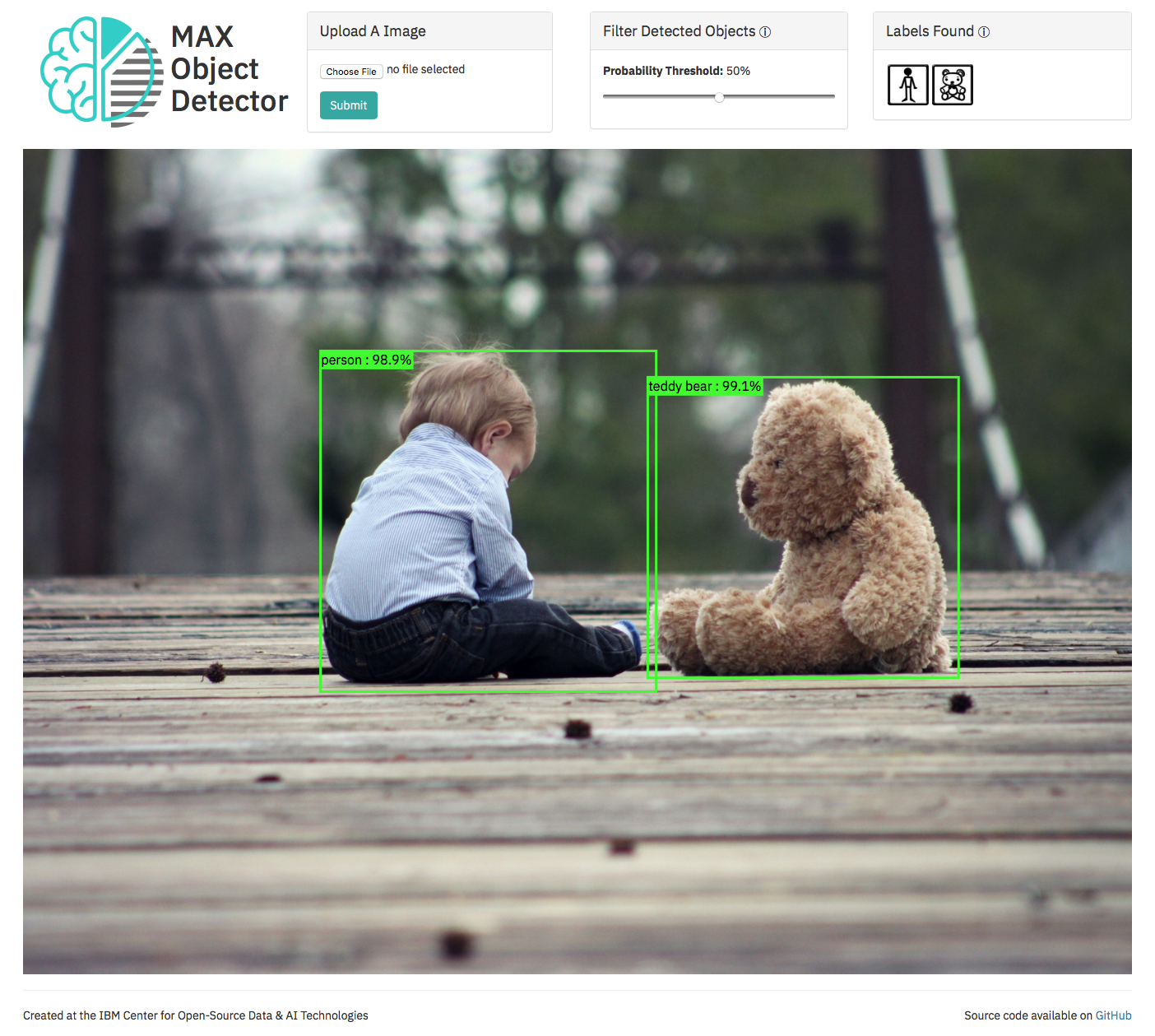

The latest release of the MAX Object Detector Web App is included in the Object Detector docker image.

When the model API server is running, the web app can be accessed at http://localhost:5000/app and provides interactive visualization of the bounding boxes and their related labels returned by the model.

If you wish to disable the web app, start the model serving API by running:

$ docker run -it -p 5000:5000 -e DISABLE_WEB_APP=true quay.io/codait/max-object-detector

This model supports training from scratch on a custom dataset. Please follow the steps listed under the training README to retrain the model on Watson Machine Learning, a deep learning as a service offering of IBM Cloud.

If you are interested in contributing to the Model Asset Exchange project or have any queries, please follow the instructions here.

- Object Detector Web App: A reference application created by the IBM CODAIT team that uses the Object Detector