Adversarial Robustness Toolbox (ART) v1.3

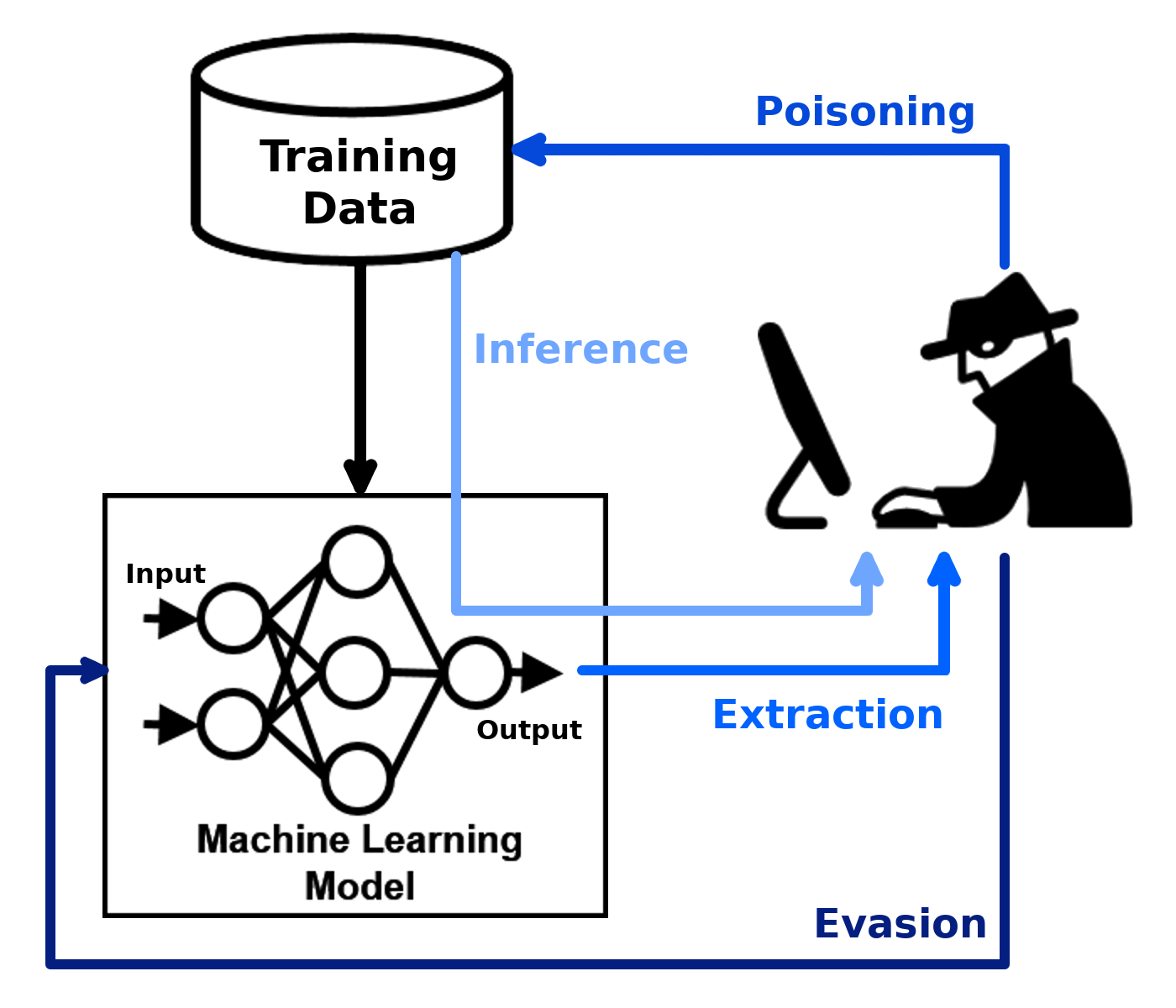

Adversarial Robustness Toolbox (ART) is a Python library for Machine Learning Security. ART provides tools that enable developers and researchers to evaluate, defend, certify and verify Machine Learning models and applications against the adversarial threats of Evasion, Poisoning, Extraction, and Inference. ART supports all popular machine learning frameworks (TensorFlow, Keras, PyTorch, MXNet, scikit-learn, XGBoost, LightGBM, CatBoost, GPy, etc.), all data types (images, tables, audio, video, etc.) and machine learning tasks (classification, object detection, generation, certification, etc.).
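To make the evasion threat concrete, here is a minimal sketch of the Fast Gradient Sign Method (FGSM) against a hand-written logistic regression model. It is library-independent: it uses only the Python standard library and does **not** use ART's API. The functions `predict_proba` and `fgsm`, and the toy weights and input, are hypothetical and exist only for illustration. ART packages attacks of this kind behind one interface that works across the frameworks listed above.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(w, b, x):
    """Probability of class 1 under a toy logistic regression model (hypothetical helper)."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def fgsm(w, b, x, y, eps):
    """Fast Gradient Sign Method (hypothetical helper, not ART's API).

    Moves every feature by +/- eps in the direction that increases the
    binary cross-entropy loss for the true label y.
    """
    p = predict_proba(w, b, x)
    # For logistic regression with BCE loss, d(loss)/d(x_i) = (p - y) * w_i.
    grad = [(p - y) * wi for wi in w]
    sign = lambda g: (g > 0) - (g < 0)
    return [xi + eps * sign(gi) for xi, gi in zip(x, grad)]


# Toy model and a correctly classified input (true label y = 1).
w, b = [2.0, -1.0], 0.0
x, y = [0.5, 0.2], 1

x_adv = fgsm(w, b, x, y, eps=0.3)
print(predict_proba(w, b, x))      # above 0.5: classified as class 1
print(predict_proba(w, b, x_adv))  # below 0.5: the perturbed input evades the model
```

A perturbation of at most 0.3 per feature is enough to flip this toy model's prediction. Measuring and mitigating that kind of sensitivity is what evaluation and defence tools are for.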

Learn more

| Get Started | Documentation | Contributing |
|---|---|---|
| Installation<br>Examples<br>Notebooks | Attacks<br>Defences<br>Estimators<br>Metrics<br>Technical Documentation | Slack, Invitation<br>Contributing<br>Roadmap<br>Citing |

The library is under continuous development. Feedback, bug reports and contributions are very welcome!