A tool to get the full text of a multipage item in Harvard Digital Collections

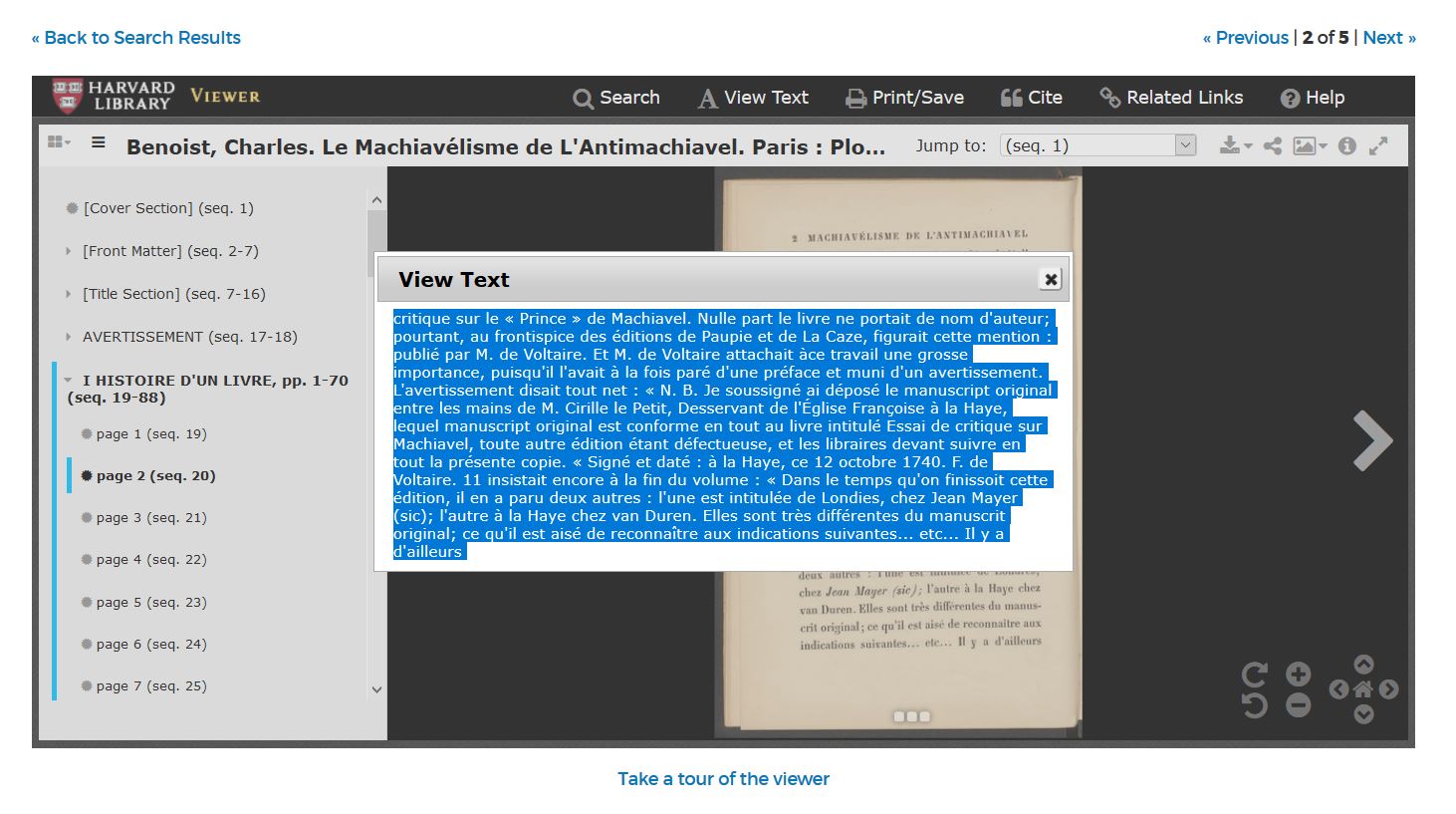

In version 1 of Harvard Digital Collections, users who want the full OCR text of a multi-page item have to copy it from a popup in the Mirador viewer, which takes multiple clicks and is time-consuming for items longer than a few pages.

dlft is a proof of concept for single-click full-text download. The script takes the URL of a Harvard Digital Collections item page as input. After locating the Digital Repository Service (DRS) identifier on the HDC page, the script calls the endpoint https://pds.lib.harvard.edu/pds/get/ to get the text for each page and concatenates them all into a single TXT file, saved in /Results/.
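The fetch-and-concatenate flow described above can be sketched roughly as follows. This is a minimal illustration, not the code in dlft.py: `fetch_page_text` is a hypothetical callable standing in for whatever request the script makes to the page delivery service, and the function names, the `item_id` file name, and the newline separator are assumptions.

```python
import os
from typing import Callable


def concatenate_pages(fetch_page_text: Callable[[int], str],
                      first_page: int, last_page: int) -> str:
    """Fetch the text of each page in order and join it into one string."""
    pages = []
    for page_number in range(first_page, last_page + 1):
        # fetch_page_text is a placeholder for the real per-page request.
        pages.append(fetch_page_text(page_number))
    return "\n".join(pages)


def save_full_text(text: str, item_id: str, results_dir: str = "Results") -> str:
    """Write the combined text to <results_dir>/<item_id>.txt and return the path."""
    os.makedirs(results_dir, exist_ok=True)
    path = os.path.join(results_dir, f"{item_id}.txt")
    with open(path, "w", encoding="utf-8") as handle:
        handle.write(text)
    return path
```

Passing the fetcher in as an argument keeps the loop testable without network access; a dictionary lookup such as `{1: "first page", 2: "second page"}.get` can stand in for the real service.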

The program was made with Python 3.7.3 and needs the following modules installed in the run environment:

- requests
- bs4
- tqdm

To install Python modules, you can use pip with this syntax at a bash console: `pip install <name of module>`
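If you are unsure whether the modules listed above are already present, a quick check along these lines may help. It is a sketch that is not part of dlft.py; the `missing_modules` helper is a hypothetical name, and it relies only on the standard library's `importlib.util.find_spec`.

```python
import importlib.util

# Import names of the external modules the README lists.
REQUIRED_MODULES = ["requests", "bs4", "tqdm"]


def missing_modules(names):
    """Return the names that cannot be found in the current environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED_MODULES)
    if missing:
        print("Missing modules: " + ", ".join(missing))
    else:
        print("All required modules are installed.")
```

Note that `bs4` is the import name of Beautiful Soup, which is published on PyPI as `beautifulsoup4`.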

- Check to make sure you have the above external modules installed.
- Change the value of `HDC_url` at the top of `dlft.py` to the desired URL. Example: `HDC_url = 'https://digitalcollections.library.harvard.edu/catalog/990043816950203941'`
- Run `dlft.py` in a bash console with `python dlft.py`.
- Wait. It will take a while, depending on the length of the book. The page delivery service seems to be able to return about 2-3 pages per second.
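To get a rough sense of the wait, the observed rate of 2-3 pages per second can be turned into an estimate. The 300-page item below is a hypothetical example, and actual throughput will vary with the service and your connection.

```python
def estimate_seconds(page_count, pages_per_second):
    """Return the approximate download time in seconds at a given rate."""
    return page_count / pages_per_second


pages = 300  # hypothetical item length
slowest = estimate_seconds(pages, 2)  # at 2 pages per second
fastest = estimate_seconds(pages, 3)  # at 3 pages per second
print(f"{pages} pages: roughly {fastest:.0f} to {slowest:.0f} seconds")
# prints "300 pages: roughly 100 to 150 seconds"
```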

You can set the page range manually to get only the OCR for a specified range. Change False to True and change the numbers in these lines:

manual_pagination = False

manual_page_start = 1

manual_page_end = 11

Before this tool is added to the interface of Harvard Digital Collections, it will need to be rewritten using JavaScript. More work might also be done to find the best endpoint. https://pds.lib.harvard.edu/pds/get/ may not be as fast as http://fds.lib.harvard.edu/fds/deliver/, but the File Delivery Service requires the exact DRS ID for each txt file, which needs to be scraped from a comprehensive XML page available from the same endpoint.
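Returning to the manual page range settings, the sketch below shows one way those three values could be turned into the list of pages to request. It is an illustration under stated assumptions, not the logic in dlft.py: `resolve_page_range` is a hypothetical helper, page numbers are assumed to start at 1, and both bounds are assumed to be inclusive and clamped to the length of the item.

```python
def resolve_page_range(total_pages, manual_pagination=False,
                       manual_page_start=1, manual_page_end=1):
    """Return the range of page numbers to download.

    With manual_pagination off, every page is returned. With it on,
    the requested bounds are clamped to 1..total_pages (an assumption).
    """
    if not manual_pagination:
        return range(1, total_pages + 1)
    start = max(1, manual_page_start)
    end = min(total_pages, manual_page_end)
    if start > end:
        raise ValueError("manual_page_start must not exceed manual_page_end")
    return range(start, end + 1)


# Mirrors the example settings: pages 1 through 11 of a 20-page item.
selected = list(resolve_page_range(20, True, 1, 11))
print(len(selected))  # prints 11
```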