The ChatWithYourDocs Chat App is a Python application that lets you chat with documents in several formats, including PDFs, web pages, and YouTube videos. You can ask questions about the loaded Docs in natural language, and the application answers based on their content. The app uses a language model to generate the answers to your queries. Please note that the app will only respond to questions related to the loaded Docs.

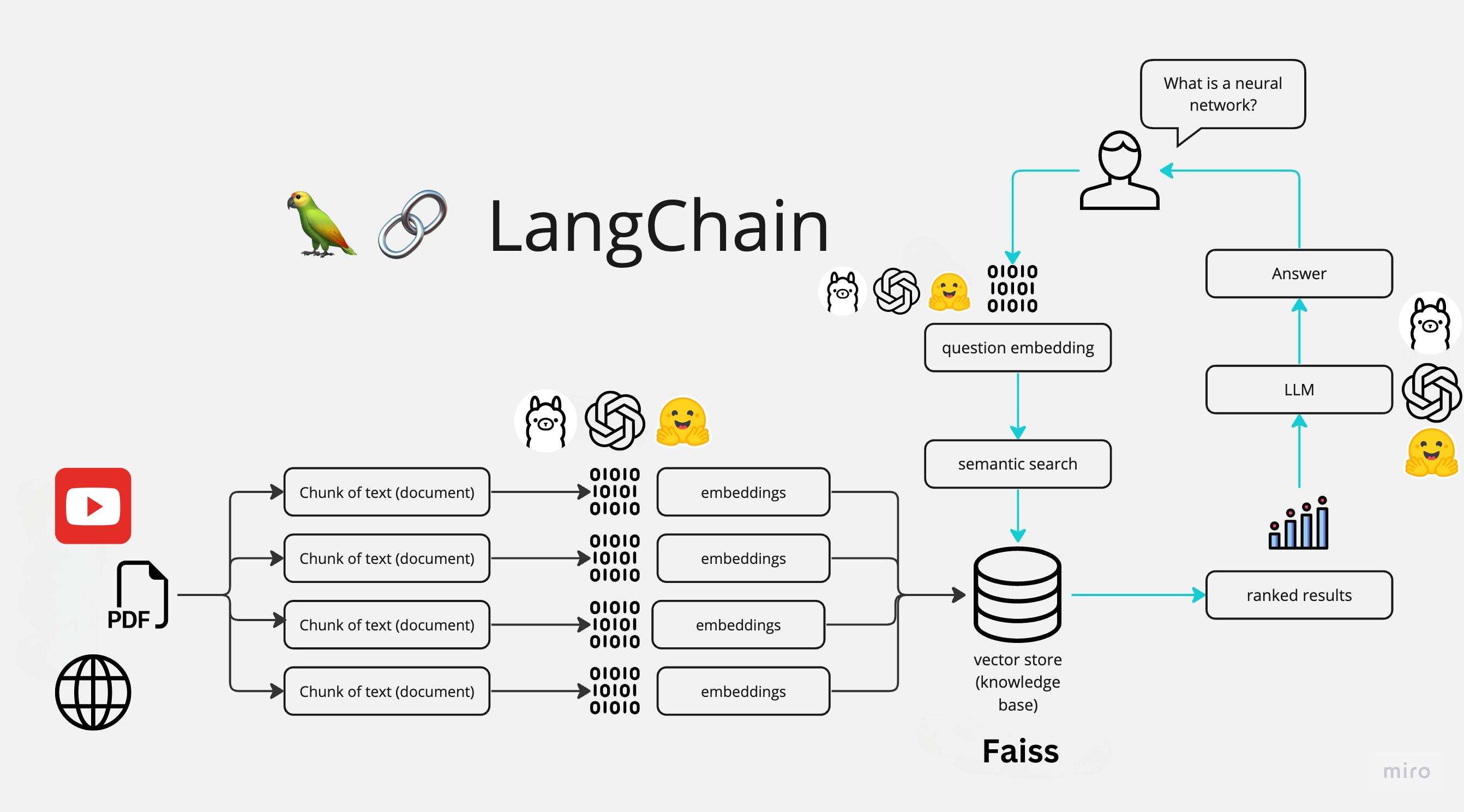

The application follows these steps to provide responses to your questions:

- Doc Loading: The app reads multiple Docs types and extracts their text content.

- Text Chunking: The extracted text is divided into smaller chunks that can be processed effectively.

- Language Model: The application utilizes a language model to generate vector representations (embeddings) of the text chunks.

- Similarity Matching: When you ask a question, the app compares it with the text chunks and identifies the most semantically similar ones.

- Response Generation: The selected chunks are passed to the language model, which generates a response based on the relevant content of the Docs.
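
The chunking and similarity-matching steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not the app's code: the function names (`chunk_text`, `embed`, `cosine`, `top_chunks`) are hypothetical, and `embed` is a toy bag-of-words counter standing in for the language model's embeddings.

```python
import math
import re
from collections import Counter


def chunk_text(text, chunk_size=8, overlap=2):
    """Split text into overlapping chunks of at most `chunk_size` words."""
    words = text.split()
    step = chunk_size - overlap
    return [" ".join(words[i:i + chunk_size]) for i in range(0, len(words), step)]


def embed(text):
    """Toy embedding: word counts. A real app would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_chunks(question, chunks, k=2):
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


text = (
    "Ollama runs language models locally. "
    "The app splits each document into chunks. "
    "Each chunk is embedded and stored for retrieval."
)
chunks = chunk_text(text)
context = top_chunks("How are documents split into chunks?", chunks, k=1)

# The selected chunk would then be placed in the prompt sent to the language model.
prompt = f"Answer using only this context:\n{context[0]}"
```

With real embeddings the ranking reflects meaning and not only shared words, but the flow (chunk, embed, compare, pass the best chunks to the model) stays the same.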

To install the Chat With Your Docs App, please follow these steps:

- Download and install Ollama:

      curl https://ollama.ai/install.sh | sh

  Then pull the chat models we will use, in this case LLAMA2, MISTRAL and GEMMA:

      ollama pull llama2
      ollama pull mistral
      ollama pull gemma

- Create a new environment with Python 3.9 and activate it. In this case we will use conda:

      conda create -n cwd python=3.9
      conda activate cwd

- Clone the repository to your local machine:

      git clone https://github.com/jorge-armando-navarro-flores/chat_with_your_docs.git
      cd chat_with_your_docs

- Install the required dependencies by running the following command:

      pip install -r requirements.txt

- Install ffmpeg for YouTube videos:

      sudo apt-get install ffmpeg
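
Before launching the app, it can help to confirm that the command-line tools installed above are on your `PATH`. The script below is an optional helper and not part of the repository; the function name `check_prerequisites` is hypothetical.

```python
import shutil
import sys


def check_prerequisites(tools=("ollama", "ffmpeg")):
    """Return a dict mapping each tool name to True if it is found on PATH."""
    return {tool: shutil.which(tool) is not None for tool in tools}


if __name__ == "__main__":
    # The README targets Python 3.9; print the running version for comparison.
    print(f"Python {sys.version_info.major}.{sys.version_info.minor}")
    for tool, found in check_prerequisites().items():
        print(f"{tool}: {'found' if found else 'missing'}")
```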

To use the Chat With Your Docs app, follow these steps:

- Run the `main.py` file using the Streamlit CLI. Execute the following command:

      python3 main.py

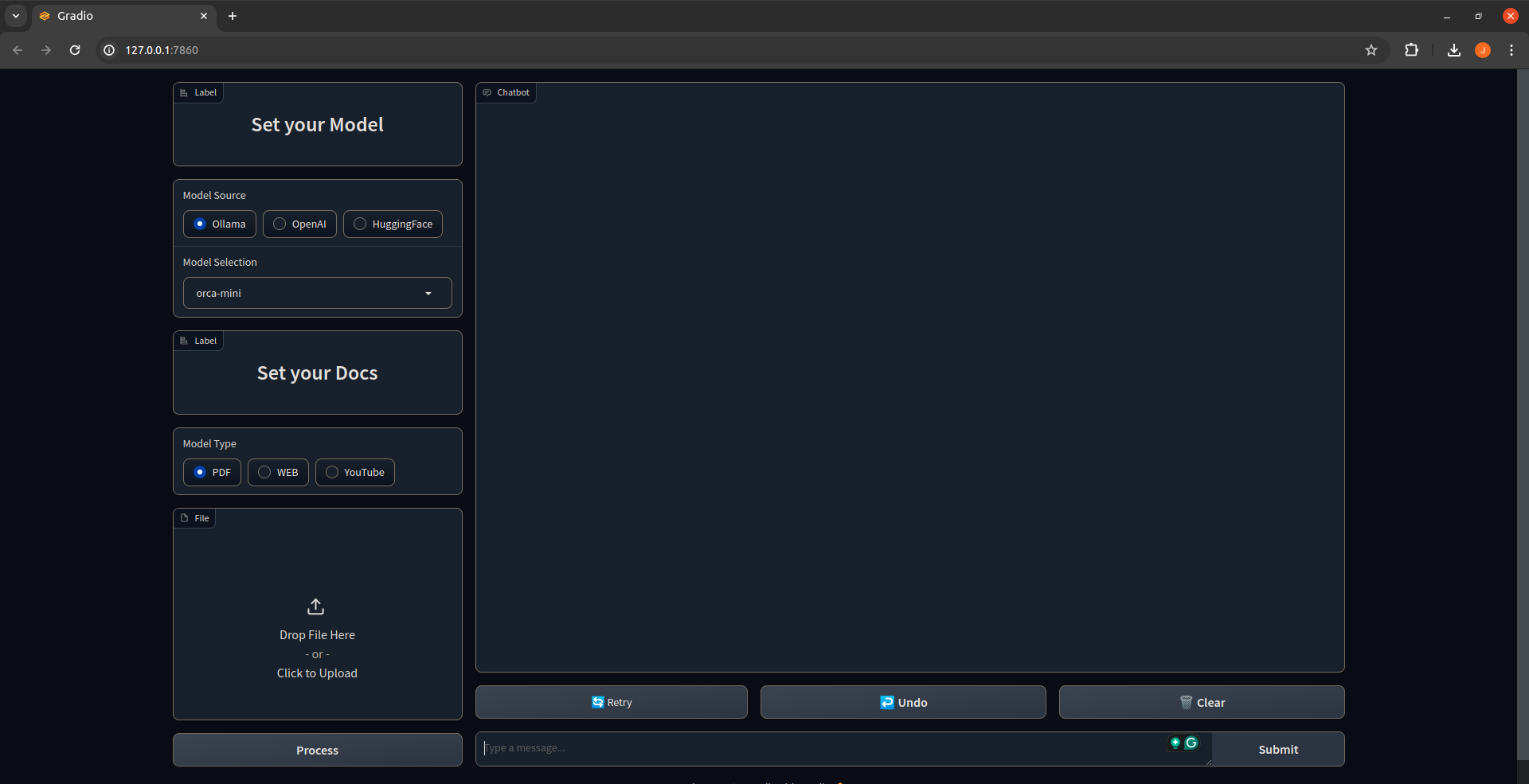

The application will launch in your default web browser, displaying the user interface.

Classes:

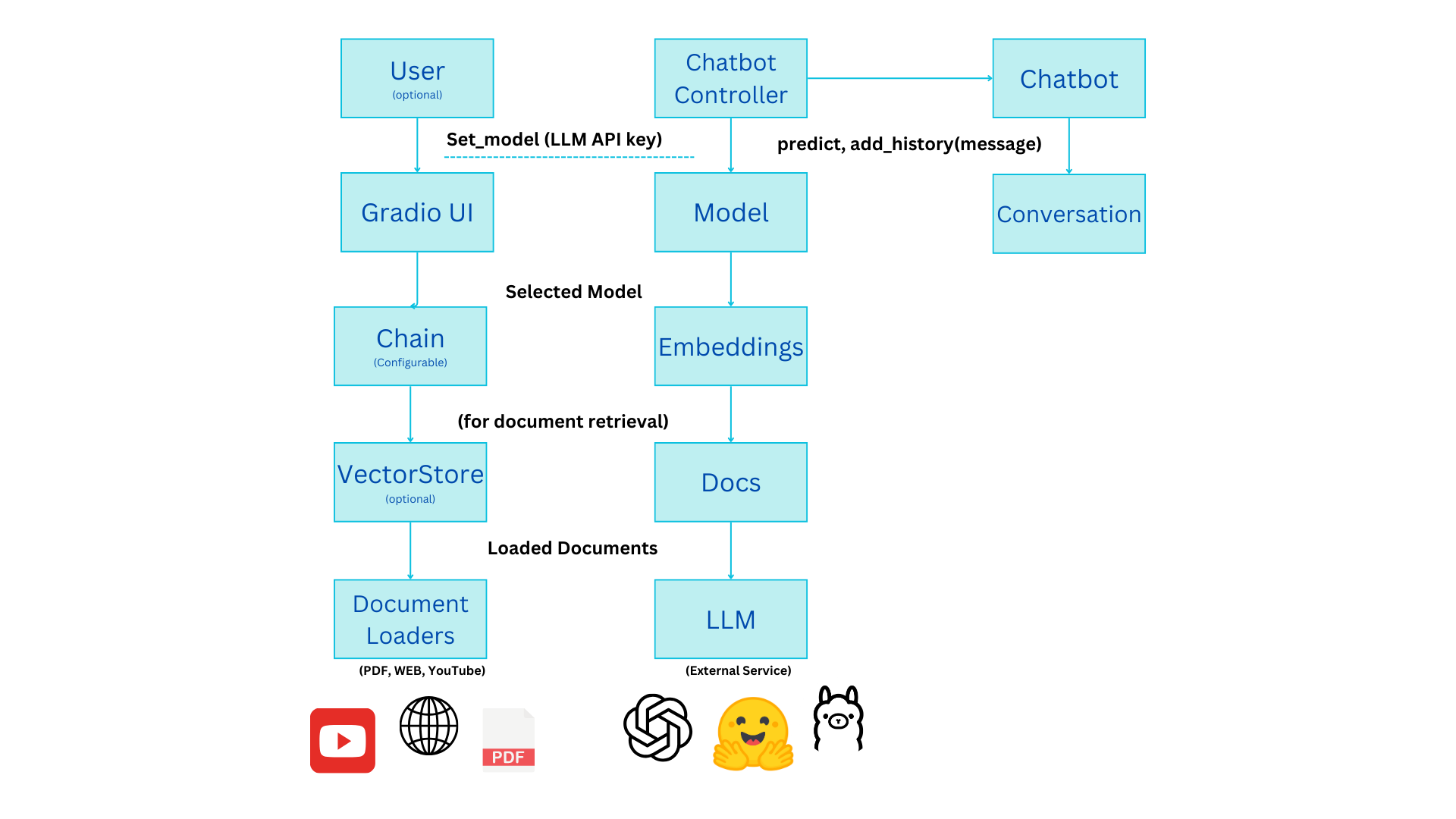

- LLM: This abstract class serves as a blueprint for different LLM implementations. It has subclasses like `OllamaModel`, `OpenAIModel`, and `HFModel` that handle specific LLM providers (Ollama, OpenAI, and Hugging Face).

- Docs: This class manages document loading from various sources like PDFs, web pages, and YouTube videos.

- VectorStore: This class creates a vector representation of the documents using LLM embeddings for efficient retrieval.

- Chain: This class defines the processing pipeline for the chatbot. It can be a simple chain for basic question answering or a retrieval chain that searches documents based on the conversation history.

- ChatBot: This class handles user interaction, maintains conversation history, and calls the appropriate chain method to generate responses.

- ChatBotController: This class is the main interface for the user. It allows setting the LLM model, document source, and retrieval options. It also handles user queries and interacts with the ChatBot instance.
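
The abstract `LLM` class and its provider subclasses could be laid out roughly as follows. Only the class names come from the description above; the methods (`provider`, `describe`), the constructor arguments, and the `make_llm` helper are assumptions made for illustration, and the bodies are placeholders that do not call any provider.

```python
from abc import ABC, abstractmethod


class LLM(ABC):
    """Blueprint shared by all providers (method names are hypothetical)."""

    def __init__(self, model_name, api_key=None):
        self.model_name = model_name
        self.api_key = api_key  # only some providers need a key

    @abstractmethod
    def provider(self):
        """Return the provider label for this implementation."""

    def describe(self):
        return f"{self.provider()}:{self.model_name}"


class OllamaModel(LLM):
    def provider(self):
        return "Ollama"


class OpenAIModel(LLM):
    def provider(self):
        return "OpenAI"


class HFModel(LLM):
    def provider(self):
        return "Hugging Face"


def make_llm(provider, model_name, api_key=None):
    """Pick the subclass for a provider name, as a controller might do."""
    classes = {"Ollama": OllamaModel, "OpenAI": OpenAIModel, "Hugging Face": HFModel}
    return classes[provider](model_name, api_key)


llm = make_llm("Ollama", "llama2")
print(llm.describe())
```

Because `LLM` declares an abstract method, it cannot be instantiated directly; the rest of the code only needs to know the shared interface.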

Functionality:

- Setting Up:

  - The user selects the LLM provider (Ollama, OpenAI, or HuggingFace) and model from a dropdown menu. Optionally, an API key might be needed for certain providers.

  - The user can choose to add documents (PDF, web page URL, or YouTube video URL) for retrieval tasks.

- Processing:

  - Based on the chosen LLM and documents (if any), the controller configures the processing chain.

  - A simple chain is used for basic question answering without document retrieval.

  - A retrieval chain involves:

    - Creating a vector representation of the documents using the LLM's embeddings.

    - Defining prompts to generate search queries based on the conversation history.

    - Retrieving relevant documents based on the generated queries.

    - Using the retrieved documents to answer the user's question.

- Interaction:

  - The user types their questions in the chat interface.

  - The controller calls the `predict` method of the ChatBot, passing the user's query and conversation history (if retrieval is enabled).

  - The ChatBot retrieves the appropriate response based on the chosen chain configuration.
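
The difference between the simple chain and the retrieval chain can be sketched as below. This is a simplified stand-in, not the repository's implementation: `keyword_retriever` and `fake_llm` are hypothetical placeholders for the vector store and the language model, and this sketch keeps the history inside the `ChatBot` instead of receiving it from the controller.

```python
import re


def keyword_retriever(docs, k=1):
    """Toy retriever: rank docs by how many words they share with the query."""
    def tokens(text):
        return set(re.findall(r"[a-z0-9]+", text.lower()))

    def retrieve(query):
        q = tokens(query)
        return sorted(docs, key=lambda d: len(q & tokens(d)), reverse=True)[:k]

    return retrieve


class ChatBot:
    def __init__(self, answer_fn, retriever=None):
        self.answer_fn = answer_fn  # stand-in for the LLM call
        self.retriever = retriever  # None selects the simple chain
        self.history = []           # list of (question, answer) pairs

    def predict(self, question):
        if self.retriever is None:
            context = []            # simple chain: no document retrieval
        else:
            # Retrieval chain: fold earlier questions into the search query
            # so that follow-up questions still find the right documents.
            query = " ".join([q for q, _ in self.history] + [question])
            context = self.retriever(query)
        answer = self.answer_fn(question, context)
        self.history.append((question, answer))
        return answer


def fake_llm(question, context):
    """Placeholder model: echoes the best context chunk, if any."""
    return context[0] if context else "no documents loaded"


docs = [
    "Mistral is one of the models pulled with Ollama.",
    "ffmpeg is needed for YouTube videos.",
]
simple_bot = ChatBot(fake_llm)
retrieval_bot = ChatBot(fake_llm, keyword_retriever(docs))

print(simple_bot.predict("Why is ffmpeg needed?"))
print(retrieval_bot.predict("Why is ffmpeg needed?"))
```

In the real app the search query would be generated by the language model from a prompt over the conversation history; concatenating earlier questions is used here only to keep the example self-contained.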

Additional Features:

- The interface includes buttons for "Undo" and "Clear" conversation history.

Overall, the code separates LLM selection, document loading, vector storage, chain configuration, and conversation handling into distinct classes, so the chatbot can switch between LLM providers and add document retrieval when documents are loaded.