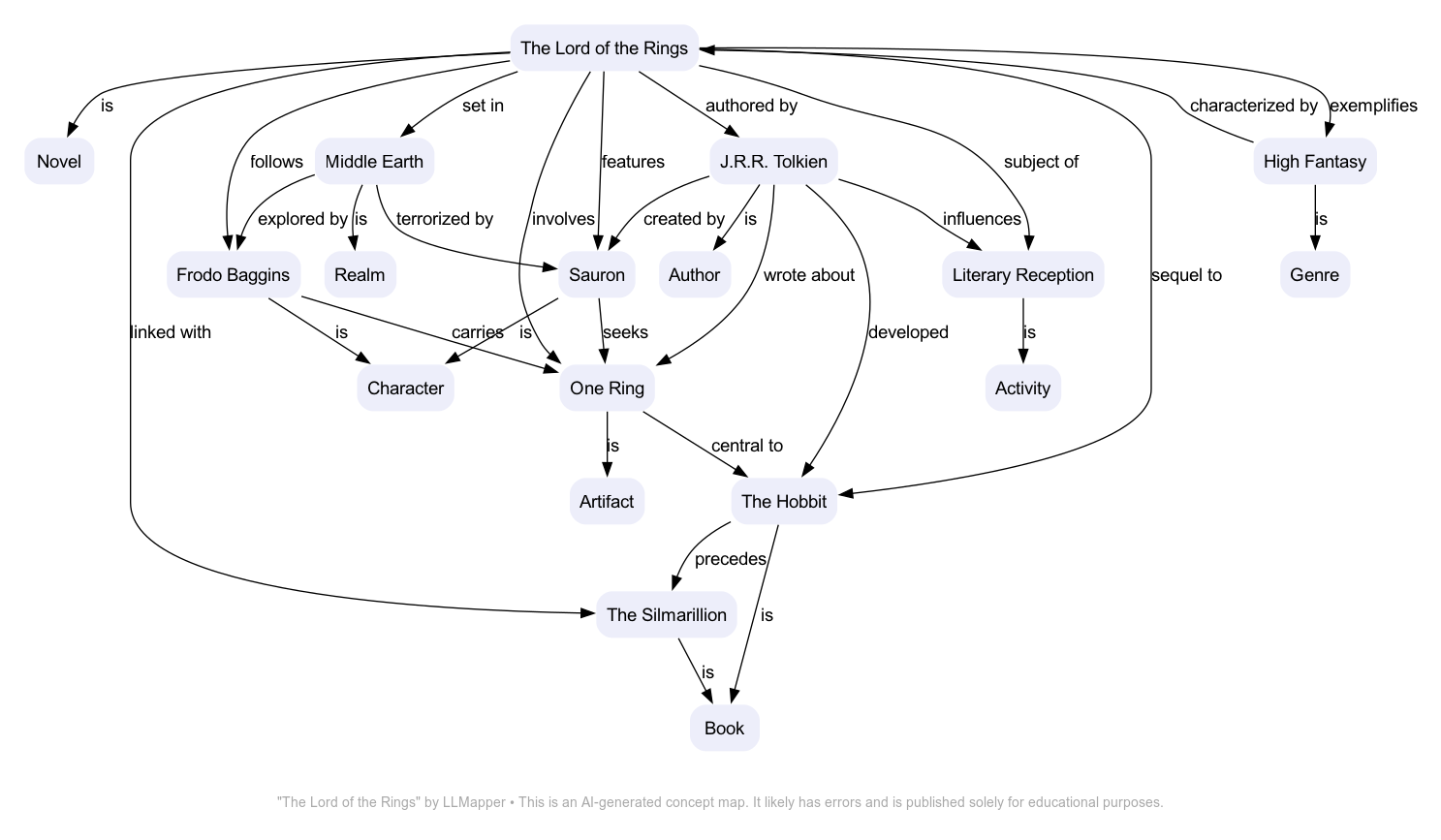

An experiment in using LLMs to draw simple concept maps. Currently, it is a simple bash script that uses Simon Willison's llm, strip-tags, and ttok tools to generate a PNG via Graphviz.

LLMapper is a crude prototype for refining the prompts. In other words, this isn't (yet) a serious tool; it's a toy for learning about generative AI.

It's very early days. Among other things, there's no error detection or graceful failure handling. Use at your own risk.

The script runs in a bash shell. It has only been tested on macOS, but will likely work on Linux with minor changes.

Dependencies:

- curl

- llm

- strip-tags

- ttok

- Graphviz

- ImageMagick

Except where noted, llm uses GPT-4 in this script. If you don't have access to the paid version of OpenAI's API, change the model (-m) option on the llm calls to a model you can use.
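One way to avoid editing every llm call by hand is to read the model name from a variable. This is only a sketch: `LLMAPPER_MODEL`, `MODEL`, the `summarize` function, and the prompt text are hypothetical names introduced here, not part of the actual script. Only the `-m` option itself comes from llm.

```shell
# Hypothetical variable: falls back to gpt-4 when LLMAPPER_MODEL is unset.
MODEL="${LLMAPPER_MODEL:-gpt-4}"

# Illustrative wrapper (not the script's real prompt): reads text on stdin
# and sends it to llm with the chosen model.
summarize() {
  llm -m "$MODEL" -s "Summarize this article in a few paragraphs."
}
```

With something like this in place, you could set `LLMAPPER_MODEL` to any model ID that `llm models` lists on your machine before running the script.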

Pass llmapper a Wikipedia URL. E.g.:

./llmapper https://en.wikipedia.org/wiki/The_Lord_of_the_Rings

Try running it several times. The map will be different each time.

See more samples at modelor.ai.

How it works:

- curl retrieves the URL's content.

- strip-tags filters everything out except the div with the article's body.

- ttok truncates the article to 8,000 tokens.

- llm summarizes the truncated article.

- The summary is cleaned up and piped to an llm prompt that formats it as RDF.

- The RDF is passed to another llm prompt that translates it to DOT code for rendering as a concept map in Graphviz.

- A sequence of calls to ImageMagick tools adds margins and the bottom caption.
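
The steps above can be sketched as a handful of bash functions. This is a rough approximation under stated assumptions, not the script itself: the `#bodyContent` selector, the prompt wording, the function names, the default `map.png` file name, and the margin and caption sizes are all guesses made for illustration. It also skips the cleanup step between the summary and the RDF prompt.

```shell
# Assumed values; the real script may use different ones.
MODEL="${MODEL:-gpt-4}"
SELECTOR="${SELECTOR:-#bodyContent}"   # assumed CSS selector for the article body

# Fetch the page, keep only the article body, truncate to 8,000 tokens.
fetch_text() {
  curl -sL "$1" | strip-tags "$SELECTOR" | ttok -t 8000
}

# Three chained llm calls: article -> summary -> RDF -> DOT.
# The prompts are placeholders, not the ones the script uses.
to_dot() {
  llm -m "$MODEL" -s "Summarize the key concepts and how they relate." \
    | llm -m "$MODEL" -s "Rewrite this summary as RDF triples." \
    | llm -m "$MODEL" -s "Translate this RDF into Graphviz DOT. Output only DOT code."
}

# Render DOT from stdin to a PNG, then add a margin and a bottom caption.
# $1 is the caption text, $2 is the output file.
render() {
  dot -Tpng -o "$2"
  convert "$2" -bordercolor white -border 40 \
    -gravity south -background white -splice 0x60 \
    -fill black -pointsize 18 -annotate +0+20 "$1" "$2"
}

# Glue: URL in, captioned PNG out.
llmapper_sketch() {
  fetch_text "$1" | to_dot | render "Source: $1" "${2:-map.png}"
}
```

Calling `llmapper_sketch https://en.wikipedia.org/wiki/The_Lord_of_the_Rings` would then run the whole chain. Like the script, this sketch does no error checking, so a failed download or malformed DOT output will surface only as a broken or missing image.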