This repo includes some modifications to do transfer learning on Jaco robotic arms:

- Mujoco model for

- Script for running transfer experiments

python mbexp.py -env jaco

python render.py -env jaco -model-dir path/to/model -logdir path/to/log

python transfer.py -env jaco -model-dir path/to/model -logdir path/to/log

python mbexp.py -env jaco -physics

This repo contains a PyTorch implementation of the wonderful model-based reinforcement learning algorithms proposed in Deep Reinforcement Learning in a Handful of Trials using Probabilistic Dynamics Models.

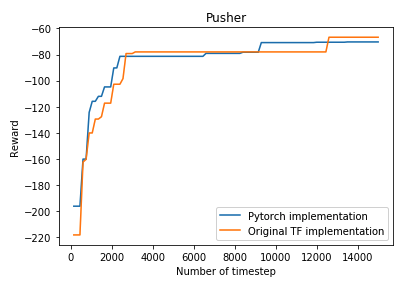

As of now, the repo only supports the highest-performing variant: a probabilistic ensemble for the learned dynamics model, TSinf trajectory sampling, and the Cross-Entropy Method (CEM) for action optimization.
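To make the action-optimization step concrete, here is a minimal, dependency-free sketch of the cross-entropy method. It is not the code used in this repo: the function name `cem_optimize`, its parameters, and the default values are assumptions chosen for illustration, and `cost_fn` stands in for the cost that the learned dynamics model would assign to a candidate action sequence.

```python
import random
import statistics


def cem_optimize(cost_fn, dim, iters=5, popsize=100, num_elites=10,
                 init_mean=0.0, init_std=1.0, lower=-1.0, upper=1.0, seed=0):
    """Minimise cost_fn over a dim-dimensional box with the cross-entropy method.

    All names and defaults are hypothetical; cost_fn maps a list of floats
    (a flattened candidate action sequence) to a scalar cost.
    """
    rng = random.Random(seed)
    mean = [init_mean] * dim
    std = [init_std] * dim
    for _ in range(iters):
        # Sample candidates from the current diagonal Gaussian, clipped to the bounds.
        candidates = [
            [min(upper, max(lower, rng.gauss(m, s))) for m, s in zip(mean, std)]
            for _ in range(popsize)
        ]
        # Keep the lowest-cost candidates as elites.
        elites = sorted(candidates, key=cost_fn)[:num_elites]
        # Refit the sampling distribution to the elites, one dimension at a time.
        columns = list(zip(*elites))
        mean = [statistics.fmean(col) for col in columns]
        std = [statistics.pstdev(col) for col in columns]
    return mean


# Toy usage: the quadratic cost is a placeholder for a model-predicted cost.
best = cem_optimize(lambda a: sum((x - 0.3) ** 2 for x in a), dim=3)
```

In a planning loop of this kind, the optimizer would typically be re-run at every control step and only the first action of the returned sequence executed; that usage is an assumption about the general approach, not a description of this repo's internals.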

The code is structured with the same levels of abstraction as the original TF implementation, with the exception that the TF dynamics model is replaced by a PyTorch dynamics model.

I'm happy to take pull requests if you see ways to improve the repo :).

The y-axis indicates the maximum reward seen so far, as is done in the paper.
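The "maximum reward seen so far" curve is a running maximum over the per-iteration returns. A minimal sketch, assuming the returns are available as a plain sequence of floats (the helper name `best_so_far` and the sample values are made up for illustration):

```python
from itertools import accumulate


def best_so_far(returns):
    """Return the running maximum of a sequence of per-iteration returns."""
    return list(accumulate(returns, max))


print(best_so_far([-3.0, -1.5, -2.0, 0.5, 0.2]))  # [-3.0, -1.5, -1.5, 0.5, 0.5]
```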

- The requirements in the original TF implementation

- PyTorch 1.0.0

For specific requirements, please take a look at the pip dependency file requirements.txt and conda dependency file environments.yml.

- install mujoco 2.0

wget https://www.roboti.us/download/mujoco200_linux.zip

unzip mujoco200_linux.zip

mkdir -p ~/.mujoco && mv mujoco200_linux ~/.mujoco/

- install dm_control

pip install git+https://github.com/deepmind/dm_control.git

- install dm_control2gym

git clone https://github.com/martinseilair/dm_control2gym

cd dm_control2gym

pip install .

- install dependencies

pip install -r requirements.txt

Experiments for a particular environment can be run using:

python mbexp.py -env ENV

-env ENV (required) The name of the environment. Select from [cartpole, reacher, pusher, halfcheetah, jaco, manipulator].

Results will be saved in <logdir>/<date+time of experiment start>/.

Trial data will be contained in logs.mat, with the following contents:

{

"observations": NumPy array of shape [num_train_iters * nrollouts_per_iter + ninit_rollouts, trial_lengths, obs_dim]

"actions": NumPy array of shape [num_train_iters * nrollouts_per_iter + ninit_rollouts, trial_lengths, ac_dim]

"rewards": NumPy array of shape [num_train_iters * nrollouts_per_iter + ninit_rollouts, trial_lengths, 1]

"returns": NumPy array of shape [1, num_train_iters * neval]

}
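As a sanity check on the layout listed above, the sketch below computes the expected array shapes from the experiment sizes and, optionally, compares them with a saved logs.mat. The helper names (`expected_log_shapes`, `check_logs`) and the example sizes are hypothetical; loading relies on `scipy.io.loadmat`, which is imported lazily so the shape helper works without SciPy.

```python
def expected_log_shapes(num_train_iters, nrollouts_per_iter, ninit_rollouts,
                        trial_length, obs_dim, ac_dim, neval):
    """Shapes of the arrays in logs.mat, following the layout listed above."""
    num_trials = num_train_iters * nrollouts_per_iter + ninit_rollouts
    return {
        "observations": (num_trials, trial_length, obs_dim),
        "actions": (num_trials, trial_length, ac_dim),
        "rewards": (num_trials, trial_length, 1),
        "returns": (1, num_train_iters * neval),
    }


def check_logs(path, **sizes):
    """Load logs.mat and report, per key, whether its shape matches the layout."""
    from scipy.io import loadmat  # imported lazily; requires SciPy

    logs = loadmat(path)
    expected = expected_log_shapes(**sizes)
    return {key: logs[key].shape == shape for key, shape in expected.items()}


# Example sizes are made up; substitute the values from your own experiment.
shapes = expected_log_shapes(num_train_iters=50, nrollouts_per_iter=1,
                             ninit_rollouts=1, trial_length=200,
                             obs_dim=4, ac_dim=1, neval=1)
print(shapes["observations"])  # (51, 200, 4)
```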

To visualize the results, please take a look at plotter.ipynb.

python render.py -env ENV -model-dir path/to/model/ -logdir path/to/log

Huge thanks to the authors of the paper for open-sourcing their code. Most of this repo is taken from the official TF implementation.