***** New: StarGAN v2 is available at https://github.com/clovaai/stargan-v2 *****

This repository provides the official PyTorch implementation of the following paper:

StarGAN: Unified Generative Adversarial Networks for Multi-Domain Image-to-Image Translation

Yunjey Choi<sup>1,2</sup>, Minje Choi<sup>1,2</sup>, Munyoung Kim<sup>2,3</sup>, Jung-Woo Ha<sup>2</sup>, Sung Kim<sup>2,4</sup>, Jaegul Choo<sup>1,2</sup>

<sup>1</sup>Korea University, <sup>2</sup>Clova AI Research, NAVER Corp.

<sup>3</sup>The College of New Jersey, <sup>4</sup>Hong Kong University of Science and Technology

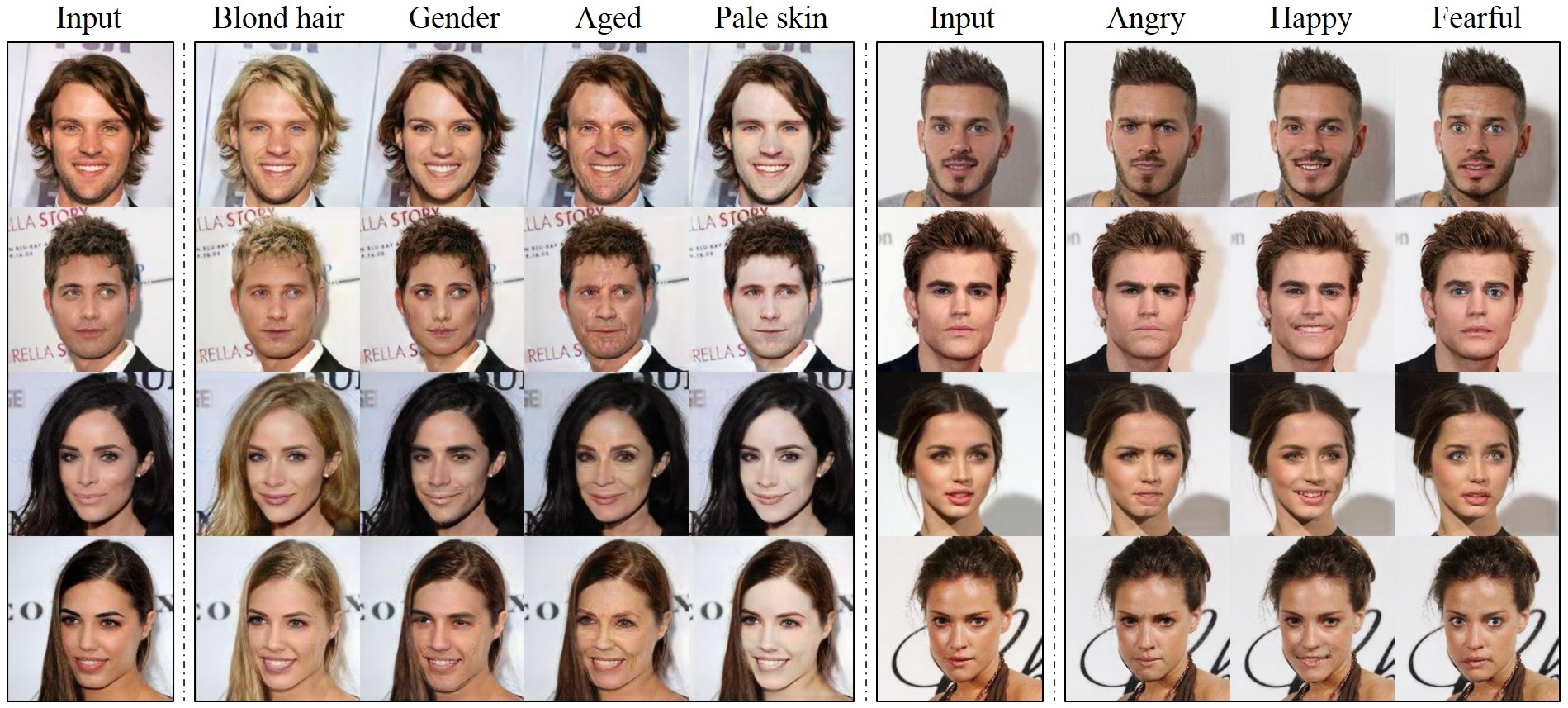

Paper: https://arxiv.org/abs/1711.09020

Abstract: Recent studies have shown remarkable success in image-to-image translation for two domains. However, existing approaches have limited scalability and robustness in handling more than two domains, since different models should be built independently for every pair of image domains. To address this limitation, we propose StarGAN, a novel and scalable approach that can perform image-to-image translations for multiple domains using only a single model. Such a unified model architecture of StarGAN allows simultaneous training of multiple datasets with different domains within a single network. This leads to StarGAN's superior quality of translated images compared to existing models as well as the novel capability of flexibly translating an input image to any desired target domain. We empirically demonstrate the effectiveness of our approach on a facial attribute transfer and a facial expression synthesis tasks.
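The single-model idea rests on feeding the generator both an image and a target-domain label. A minimal sketch of one common way to do that for a convolutional generator, assuming NumPy and a channels-first (C, H, W) image: each label entry is tiled into a constant H×W map and stacked behind the image channels. This is similar in spirit to how the PyTorch generator in this repository combines its inputs, but `condition_on_label` is a hypothetical helper for illustration, not code from the repository.

```python
import numpy as np


def condition_on_label(image, label):
    """Append a domain label to an image as constant feature maps.

    image: array of shape (C, H, W).
    label: 1-D sequence of length c_dim (one entry per target domain attribute).
    Returns an array of shape (C + c_dim, H, W).
    """
    _, height, width = image.shape
    label = np.asarray(label, dtype=image.dtype)
    # Tile every label entry over the full spatial grid: (c_dim, H, W).
    label_maps = np.broadcast_to(label[:, None, None], (label.shape[0], height, width))
    # Stack the label maps behind the image channels.
    return np.concatenate([image, label_maps], axis=0)


# Usage: a 3-channel 4x4 image conditioned on a 5-dim target label.
image = np.zeros((3, 4, 4), dtype=np.float32)
target = [1.0, 0.0, 0.0, 1.0, 1.0]
x = condition_on_label(image, target)
print(x.shape)  # (8, 4, 4)
```

Because the label is just extra input channels, the same network weights serve every target domain; only the label changes.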

Dependencies:

- Python 3.5+

- PyTorch 0.4.0+

- TensorFlow 1.3+ (optional, for TensorBoard logging)

To download the CelebA dataset:

git clone https://github.com/yunjey/StarGAN.git
cd StarGAN/
bash download.sh celeba

To download the RaFD dataset, you must request access to the dataset from the Radboud Faces Database website. Then, you need to create a folder structure as described here.

To train StarGAN on CelebA, run the training script below. See here for a list of selectable attributes in the CelebA dataset. If you change the selected_attrs argument, also change c_dim so that it equals the number of selected attributes.
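Each selected attribute becomes one entry of the domain label, which is why c_dim has to track selected_attrs. A minimal sketch, assuming CelebA's attribute annotations mark each attribute as `1` (present) or `-1` (absent); `celeba_label` and `check_c_dim` are hypothetical helpers, and the six-name header in the usage lines is a toy subset rather than the real annotation file.

```python
def check_c_dim(selected_attrs, c_dim):
    """Raise if c_dim does not match the number of selected attributes."""
    if len(selected_attrs) != c_dim:
        raise ValueError(
            f"c_dim={c_dim}, but {len(selected_attrs)} attributes were selected"
        )


def celeba_label(attr_names, values, selected_attrs):
    """Build a binary label vector for the selected CelebA attributes.

    attr_names: every attribute name, in annotation-file order.
    values: one image's annotations as '1' / '-1' strings, aligned with attr_names.
    selected_attrs: the attributes passed via --selected_attrs.
    """
    column = {name: i for i, name in enumerate(attr_names)}
    # '1' means the attribute is present; anything else ('-1') means absent.
    return [1.0 if values[column[attr]] == '1' else 0.0 for attr in selected_attrs]


# Usage with a toy header (not the full CelebA attribute list).
attr_names = ['Black_Hair', 'Blond_Hair', 'Brown_Hair', 'Male', 'Smiling', 'Young']
values = ['-1', '1', '-1', '-1', '1', '1']
selected = ['Black_Hair', 'Blond_Hair', 'Brown_Hair', 'Male', 'Young']
check_c_dim(selected, c_dim=5)
label = celeba_label(attr_names, values, selected)
print(label)  # [0.0, 1.0, 0.0, 0.0, 1.0]
```

Attributes that are annotated but not selected (here `Smiling`) simply never reach the label.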

# Train StarGAN using the CelebA dataset
python main.py --mode train --dataset CelebA --image_size 128 --c_dim 5 \
--sample_dir stargan_celeba/samples --log_dir stargan_celeba/logs \
--model_save_dir stargan_celeba/models --result_dir stargan_celeba/results \
--selected_attrs Black_Hair Blond_Hair Brown_Hair Male Young
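In test mode, each input image is translated into several target domains. A sketch of one way such target labels can be derived from an image's original CelebA label, assuming hair colors form one mutually exclusive group (selecting one switches the others off) while every other attribute is simply flipped. `target_labels` and `HAIR_COLORS` are hypothetical names, and this is not guaranteed to match the repository's test code exactly.

```python
# Hypothetical set of attributes treated as one mutually exclusive group.
HAIR_COLORS = {'Black_Hair', 'Blond_Hair', 'Brown_Hair', 'Gray_Hair'}


def target_labels(c_org, selected_attrs):
    """Return one target label per selected attribute.

    c_org: the image's original binary label (list of 0.0 / 1.0).
    """
    hair_idx = [i for i, attr in enumerate(selected_attrs) if attr in HAIR_COLORS]
    targets = []
    for i in range(len(selected_attrs)):
        c_trg = list(c_org)  # copy so the original label is left untouched
        if i in hair_idx:
            # Turn on hair color i and switch every other hair color off.
            for j in hair_idx:
                c_trg[j] = 1.0 if j == i else 0.0
        else:
            # Binary attribute (e.g. Male, Young): flip it.
            c_trg[i] = 0.0 if c_org[i] == 1.0 else 1.0
        targets.append(c_trg)
    return targets


# Usage: a blond, young, non-male face under the five attributes above.
selected = ['Black_Hair', 'Blond_Hair', 'Brown_Hair', 'Male', 'Young']
targets = target_labels([0.0, 1.0, 0.0, 0.0, 1.0], selected)
for attr, c_trg in zip(selected, targets):
    print(attr, c_trg)
```

Treating hair color as a group avoids asking the generator for contradictory targets such as "black and blond at once".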

# Test StarGAN using the CelebA dataset
python main.py --mode test --dataset CelebA --image_size 128 --c_dim 5 \
--sample_dir stargan_celeba/samples --log_dir stargan_celeba/logs \
--model_save_dir stargan_celeba/models --result_dir stargan_celeba/results \
--selected_attrs Black_Hair Blond_Hair Brown_Hair Male Young

To train StarGAN on RaFD:

# Train StarGAN using the RaFD dataset
python main.py --mode train --dataset RaFD --image_size 128 \
--c_dim 8 --rafd_image_dir data/RaFD/train \
--sample_dir stargan_rafd/samples --log_dir stargan_rafd/logs \
--model_save_dir stargan_rafd/models --result_dir stargan_rafd/results
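Unlike CelebA's multi-label attributes, each RaFD image carries exactly one of eight facial expressions, which is why `--c_dim 8` is used here and why the label is naturally one-hot. A sketch, assuming the expression sub-folders are named as below and indexed in sorted (alphabetical) order; the folder names and the `one_hot` helper are assumptions for illustration.

```python
# Assumed sub-folder names, one per RaFD expression, in sorted order.
RAFD_EXPRESSIONS = sorted([
    'angry', 'contemptuous', 'disgusted', 'fearful',
    'happy', 'neutral', 'sad', 'surprised',
])


def one_hot(index, c_dim):
    """Return a length-c_dim vector with a single 1.0 at position index."""
    if not 0 <= index < c_dim:
        raise ValueError(f"index {index} out of range for c_dim={c_dim}")
    vec = [0.0] * c_dim
    vec[index] = 1.0
    return vec


c_dim = len(RAFD_EXPRESSIONS)  # 8, matching --c_dim 8
happy = one_hot(RAFD_EXPRESSIONS.index('happy'), c_dim)
print(happy)  # [0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0]
```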

# Test StarGAN using the RaFD dataset
python main.py --mode test --dataset RaFD --image_size 128 \
--c_dim 8 --rafd_image_dir data/RaFD/test \
--sample_dir stargan_rafd/samples --log_dir stargan_rafd/logs \
--model_save_dir stargan_rafd/models --result_dir stargan_rafd/results

To train StarGAN on both CelebA and RaFD:

# Train StarGAN using both CelebA and RaFD datasets
python main.py --mode train --dataset Both --image_size 256 --c_dim 5 --c2_dim 8 \
--sample_dir stargan_both/samples --log_dir stargan_both/logs \
--model_save_dir stargan_both/models --result_dir stargan_both/results
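Training on both datasets at once raises a problem: a CelebA image has no expression label and a RaFD image has no attribute labels. The paper addresses this with a mask vector that tells the model which part of the label is valid. A minimal sketch of such a joint label, assuming the layout [CelebA part (c_dim) | RaFD part (c2_dim) | 2-dim mask] with the unused part zero-filled; the ordering and the mask encoding are illustrative assumptions, and `joint_label` is a hypothetical helper.

```python
def joint_label(celeba=None, rafd=None, c_dim=5, c2_dim=8):
    """Build a label of length c_dim + c2_dim + 2 for joint training.

    Pass exactly one of `celeba` (length c_dim) or `rafd` (length c2_dim).
    The other dataset's slot is zero-filled and the trailing mask marks which
    slot is valid: [1, 0] for CelebA, [0, 1] for RaFD.
    """
    if (celeba is None) == (rafd is None):
        raise ValueError("pass exactly one of celeba or rafd")
    if celeba is not None:
        if len(celeba) != c_dim:
            raise ValueError(f"expected {c_dim} CelebA entries, got {len(celeba)}")
        return list(celeba) + [0.0] * c2_dim + [1.0, 0.0]
    if len(rafd) != c2_dim:
        raise ValueError(f"expected {c2_dim} RaFD entries, got {len(rafd)}")
    return [0.0] * c_dim + list(rafd) + [0.0, 1.0]


# Usage with --c_dim 5 and --c2_dim 8, as in the command above.
from_celeba = joint_label(celeba=[0.0, 1.0, 0.0, 0.0, 1.0])
from_rafd = joint_label(rafd=[0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0])
print(len(from_celeba), from_celeba[-2:])  # 15 [1.0, 0.0]
print(len(from_rafd), from_rafd[-2:])      # 15 [0.0, 1.0]
```

Zero-filling the unknown slot, rather than guessing it, lets the mask tell the model to ignore that part of the label for this image.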

# Test StarGAN using both CelebA and RaFD datasets
python main.py --mode test --dataset Both --image_size 256 --c_dim 5 --c2_dim 8 \
--sample_dir stargan_both/samples --log_dir stargan_both/logs \
--model_save_dir stargan_both/models --result_dir stargan_both/results

To train StarGAN on your own dataset, create a folder structure in the same format as RaFD and run the following commands:

# Train StarGAN on custom datasets
python main.py --mode train --dataset RaFD --rafd_crop_size CROP_SIZE --image_size IMG_SIZE \
--c_dim LABEL_DIM --rafd_image_dir TRAIN_IMG_DIR \
--sample_dir stargan_custom/samples --log_dir stargan_custom/logs \
--model_save_dir stargan_custom/models --result_dir stargan_custom/results
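For a custom dataset in RaFD format, LABEL_DIM is the number of classes, that is, the number of class sub-folders under the image directory (one folder per domain, images inside). A sketch for deriving that value and the folder-to-label mapping, assuming the loader behaves like torchvision's `ImageFolder`, which assigns labels by sorting class-folder names. `infer_class_labels` is a hypothetical helper, and the class names in the usage lines are made up.

```python
import tempfile
from pathlib import Path


def infer_class_labels(image_dir):
    """Map each class sub-folder of image_dir to an integer label.

    Folder names are sorted so the mapping is deterministic; stray files are
    ignored. len(result) is the value to pass as --c_dim.
    """
    classes = sorted(p.name for p in Path(image_dir).iterdir() if p.is_dir())
    if not classes:
        raise ValueError(f"no class sub-folders found in {image_dir}")
    return {name: index for index, name in enumerate(classes)}


# Usage with a throwaway directory containing three made-up classes.
with tempfile.TemporaryDirectory() as root:
    for name in ['summer', 'autumn', 'winter']:
        (Path(root) / name).mkdir()
    (Path(root) / 'notes.txt').write_text('not a class')
    labels = infer_class_labels(root)

print(labels)       # {'autumn': 0, 'summer': 1, 'winter': 2}
print(len(labels))  # 3, the value for LABEL_DIM
```

TRAIN_IMG_DIR and TEST_IMG_DIR should contain the same set of class folders, otherwise the sorted mapping (and therefore the meaning of each label index) would differ between training and testing.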

# Test StarGAN on custom datasets
python main.py --mode test --dataset RaFD --rafd_crop_size CROP_SIZE --image_size IMG_SIZE \
--c_dim LABEL_DIM --rafd_image_dir TEST_IMG_DIR \
--sample_dir stargan_custom/samples --log_dir stargan_custom/logs \
--model_save_dir stargan_custom/models --result_dir stargan_custom/results

To download a pre-trained model checkpoint, run the script below. The checkpoint will be downloaded and saved into the ./stargan_celeba_128/models directory.

bash download.sh pretrained-celeba-128x128

To translate images using the pre-trained model, run the evaluation script below. The translated images will be saved into the ./stargan_celeba_128/results directory.

python main.py --mode test --dataset CelebA --image_size 128 --c_dim 5 \
--selected_attrs Black_Hair Blond_Hair Brown_Hair Male Young \
--model_save_dir='stargan_celeba_128/models' \
--result_dir='stargan_celeba_128/results'

If you find this work useful for your research, please cite our paper:

@inproceedings{choi2018stargan,
author={Yunjey Choi and Minje Choi and Munyoung Kim and Jung-Woo Ha and Sunghun Kim and Jaegul Choo},
title={StarGAN: Unified Generative Adversarial Networks for Multi-Domain Image-to-Image Translation},
booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition},
year={2018}
}

This work was mainly done while the first author did a research internship at Clova AI Research, NAVER. We thank all the researchers at NAVER, especially Donghyun Kwak, for insightful discussions.