Machine Learning Ops Workshop with SageMaker: lab guides and materials.

Data scientists and ML developers need more than a Jupyter notebook to create an ML model, test it, put it into production, and integrate it with a portal and/or a basic web/mobile application in a reliable and flexible way.

There are two basic questions to consider when you start developing an ML model for a real business case:

- How long would it take your organization to deploy a change that involves a single line of code?

- Can you do this on a repeatable, reliable basis?

If you're not happy with the answers, MLOps is a concept that can help you: a) create or improve your organization's CI/CD culture; b) create an automated infrastructure that supports your processes.

Amazon SageMaker, a service that supports the whole ML model development pipeline, is the heart of this solution. Around it, you can add other services, such as the AWS Code* family, to create an automated pipeline that builds your Docker images and trains, tests, deploys, and integrates your models.

Here you can find more information about DevOps at AWS (What is DevOps).

You should have some basic experience with:

- Training/testing an ML model

- Python (scikit-learn)

- Jupyter Notebook

- AWS CodePipeline

- AWS CodeCommit

- AWS CodeBuild

- Amazon ECR

- Amazon SageMaker

- AWS CloudFormation

Some experience working with the AWS console is helpful as well.

To complete this workshop you'll need an AWS account with access to the services above. Some of the resources required by this workshop are eligible for the AWS Free Tier if your account is less than 12 months old. See the AWS Free Tier page for more details.

In this workshop you'll implement and experiment with a basic MLOps process, supported by an automated infrastructure for training, testing, deploying, and integrating ML models. It comprises four parts:

- You'll start with a WarmUp, for reviewing the basic features of Amazon SageMaker;

- Then you will create a basic Docker Image for supporting any scikit-learn model;

- Then you will create a dispatcher Docker Image that supports two different algorithms;

- Finally you will train the models, deploy them into a DEV environment, then approve and deploy them into a PRD environment with High Availability and Elasticity.

Parts 2, 3 and 4 are supported by automated pipelines that read the assets produced by the ML developer and execute/control the whole process.

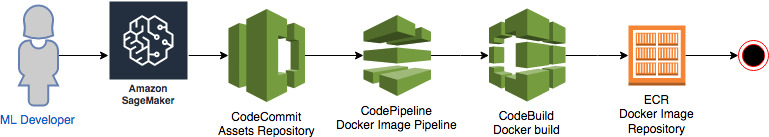

For parts 2 and 3 the following architecture will support the process. In part 2 you'll create an abstract scikit-learn Docker image. In part 3 you'll extend that abstract image and create the final image using two distinct scikit-learn algorithms.

- The ML developer creates the assets for a Docker image based on scikit-learn, using SageMaker, and pushes all the assets to a Git repo (CodeCommit);

- CodePipeline listens for the push event from CodeCommit, gets the source code and launches CodeBuild;

- CodeBuild authenticates into ECR, builds the Docker image and pushes it into the ECR repository;

- Done.
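
The CodeBuild step above (authenticate, build, push) can be sketched in Python. This is a minimal illustration under stated assumptions, not the workshop's actual build specification: the helper names, the repository name, and the tag are hypothetical. It relies only on the standard ECR registry hostname format and on the `aws ecr get-login-password` CLI command.

```python
import subprocess


def ecr_registry(account_id, region):
    """Return the hostname of the private ECR registry for an account and region."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com"


def image_uri(account_id, region, repository, tag="latest"):
    """Return the full image URI that `docker push` expects for an ECR repository."""
    return f"{ecr_registry(account_id, region)}/{repository}:{tag}"


def build_commands(account_id, region, repository, tag="latest", context="."):
    """List the shell commands for the three steps: authenticate, build, push."""
    registry = ecr_registry(account_id, region)
    uri = image_uri(account_id, region, repository, tag)
    return [
        # Authenticate Docker against ECR with a temporary password.
        f"aws ecr get-login-password --region {region} "
        f"| docker login --username AWS --password-stdin {registry}",
        # Build the image from the Dockerfile in the given context directory.
        f"docker build -t {uri} {context}",
        # Push the image to the ECR repository.
        f"docker push {uri}",
    ]


def run(commands):
    """Run each command in order and stop at the first failure."""
    for command in commands:
        subprocess.run(command, shell=True, check=True)
```

Calling `run(build_commands("123456789012", "us-east-1", "scikit-base"))` would execute the three steps on a machine with Docker and the AWS CLI installed; the account ID and repository name here are placeholders.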

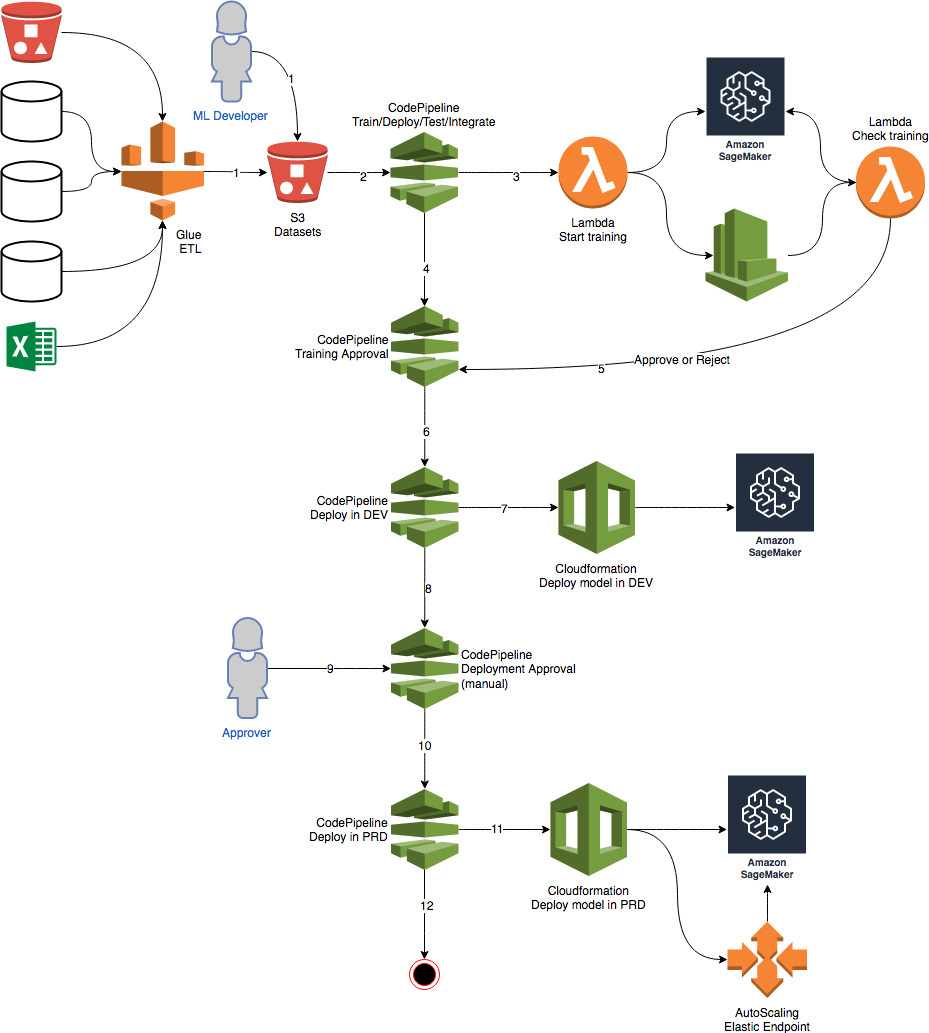

For part 4 you'll use the following structure to train the model, test it, and deploy it in two different environments: DEV - QA/Development (simple endpoint) and PRD - Production (HA/Elastic endpoint).

Although there is an ETL part in the architecture, we won't use Glue or any other ETL tool in this workshop. The idea is just to show how simple it is to integrate this architecture with your data lake and/or legacy databases using an ETL process.

- An ETL process, or the ML developer, prepares a new dataset for training the model and copies it into an S3 bucket;

- CodePipeline listens to this S3 bucket and calls a Lambda function to start a training job in SageMaker;

- The Lambda function sends a training job to SageMaker and enables a CloudWatch Events rule that checks every minute, through another Lambda function, whether the training job has finished or failed;

- CodePipeline waits for the training step to be approved (success) or rejected (failure);

- The second Lambda function approves or rejects the pipeline, based on the SageMaker results;

- If rejected, the pipeline stops here; if approved, it goes to the next stage;

- CodePipeline calls CloudFormation to deploy the model into a Development/QA environment in SageMaker;

- After finishing the deployment in DEV/QA, CodePipeline waits for a manual approval;

- An approver approves or rejects the deployment. If rejected, the pipeline stops here; if approved, it goes to the next stage;

- CodePipeline calls CloudFormation to deploy the model into production. This time, the endpoint has an AutoScaling policy for HA and Elasticity.

- Done.
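
The polling step in the list above can be sketched as follows. This is a minimal sketch, not the workshop's actual Lambda code: the function names and the shape of the `approval` dictionary are hypothetical. The clients are passed in as arguments; inside a Lambda function they would typically be `boto3.client("sagemaker")` and `boto3.client("codepipeline")`.

```python
def decide(training_status):
    """Map a SageMaker TrainingJobStatus to an approval result.

    Returns None while the job is still running, so the caller
    simply checks again on the next scheduled invocation.
    """
    if training_status == "Completed":
        return "Approved"
    if training_status in ("Failed", "Stopped"):
        return "Rejected"
    return None  # "InProgress" or "Stopping"


def check_training_job(sagemaker, codepipeline, job_name, approval):
    """Check one training job and, if it has ended, answer the pending approval.

    `approval` is a hypothetical dict holding the pipeline, stage and
    action names plus the approval token of the waiting action.
    """
    description = sagemaker.describe_training_job(TrainingJobName=job_name)
    status = description["TrainingJobStatus"]
    result = decide(status)
    if result is not None:
        codepipeline.put_approval_result(
            pipelineName=approval["pipeline"],
            stageName=approval["stage"],
            actionName=approval["action"],
            token=approval["token"],
            result={
                "summary": f"Training job {job_name}: {status}",
                "status": result,
            },
        )
    return result
```

Keeping the decision in a small pure function (`decide`) makes the approve/reject rule easy to test without calling AWS.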

It is important to mention that the process above was based on an Industry process for Data Mining and Machine Learning called CRISP-DM.

CRISP-DM stands for “Cross-Industry Standard Process for Data Mining” and is an excellent skeleton to build a data science project around.

CRISP-DM has six phases:

- Business understanding: Don’t dive into the data immediately! First take some time to understand: Business objectives, Surrounding context, ML problem category.

- Data understanding: Exploring the data gives us insights about the paths we should follow.

- Data preparation: Data cleaning, normalization, feature selection, feature engineering, etc.

- Modeling: Select the algorithms, train your model, optimize it as necessary.

- Evaluation: Test your model with different samples, with real data if possible, and decide whether the model fits the requirements of your business case.

- Deployment: Deploy into production, integrate it, do A/B tests, integration tests, etc.

Notice the arrows in the diagram though. CRISP-DM frames data science as a cyclical endeavor: more insights lead to better business understanding, which kicks off the process again.

First, you need to launch a CloudFormation stack that creates all the components required for the exercises.

- Use the table below to launch the CloudFormation stack.

| Region | Launch |
|---|---|
| US East (N. Virginia) |  |

Then open the Jupyter Notebook instance in SageMaker and start doing the exercises:

- Warmup: This is a basic exercise for exploring SageMaker features such as training, deploying and optimizing a model. If you already have experience with SageMaker, you can skip this exercise.

- Abstract Scikit-learn Image: Here we'll create an abstract Docker image with the codebase for virtually any scikit-learn algorithm. This is not the final image. We need to complete this exercise before executing the next one.

- Concrete Scikit-learn models: In this exercise we'll inherit the Docker image from the previous step and create a concrete Docker image with two different algorithms, Logistic Regression and Random Forest, in a dispatcher architecture.

- Test the models locally: This is part of exercise #3. You can use this Jupyter notebook to test your local web service and simulate how SageMaker will call it when you ask it to create an Endpoint or launch a Batch job for you.

- Train your models: In this exercise you'll use the training pipeline. You'll see how to train or retrain a particular model by just copying a zip file with the required assets to a given S3 bucket.

- Check Training progress and test: Here you can monitor the training process, approve the production deployment and test your endpoints.

- Stress Test: Here you can execute stress tests to see how well your model is performing.
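
The dispatcher architecture mentioned in the exercise list above can be illustrated with a small registry that maps an algorithm name to a scikit-learn estimator class. This is a hedged sketch, not the workshop's container code: the registry keys, the `build_estimator` helper and its parameters are assumptions made for illustration.

```python
import importlib

# Hypothetical registry: algorithm name -> dotted path of an estimator class.
ALGORITHMS = {
    "logistic": "sklearn.linear_model.LogisticRegression",
    "random_forest": "sklearn.ensemble.RandomForestClassifier",
}


def build_estimator(name, hyperparameters=None, registry=ALGORITHMS):
    """Instantiate the estimator registered under `name`.

    The class is imported lazily, so the container only loads the
    module of the algorithm that was actually requested.
    """
    if name not in registry:
        raise ValueError(
            f"Unknown algorithm {name!r}; expected one of {sorted(registry)}"
        )
    module_path, class_name = registry[name].rsplit(".", 1)
    estimator_class = getattr(importlib.import_module(module_path), class_name)
    return estimator_class(**(hyperparameters or {}))
```

With scikit-learn installed, `build_estimator("random_forest", {"n_estimators": 100})` would return a configured `RandomForestClassifier`, and the same training and serving code can then handle either algorithm.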

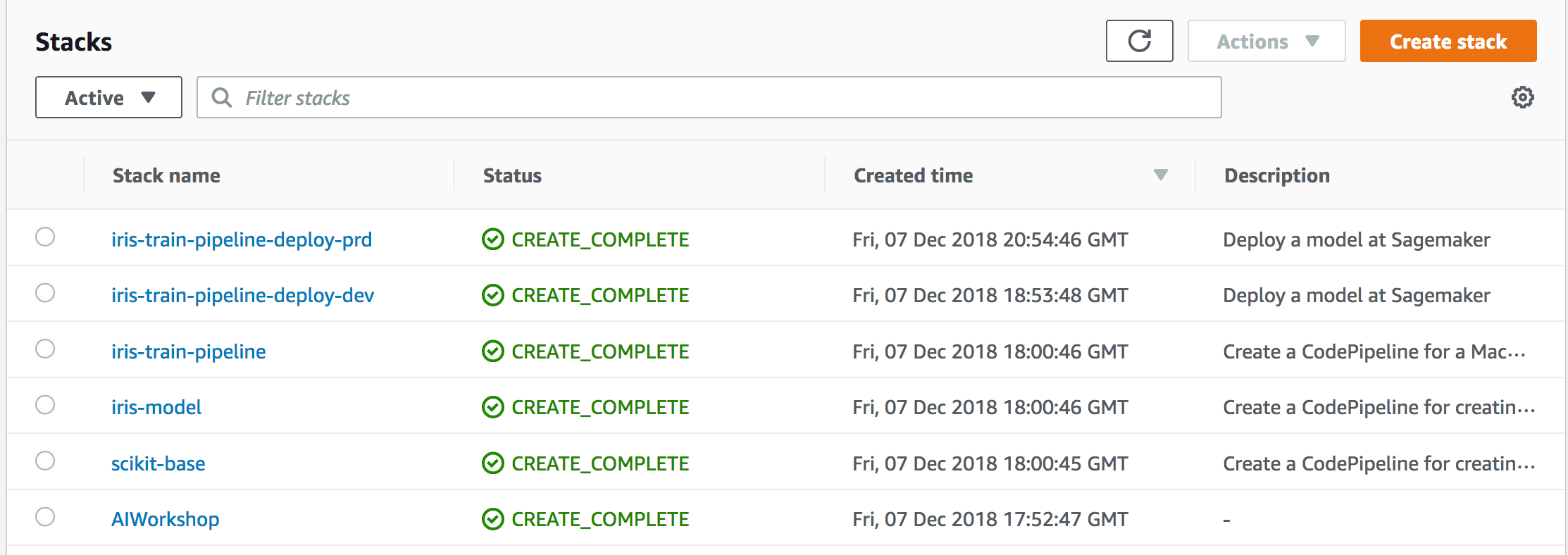

To delete all the assets created by this workshop, delete the following CloudFormation stacks, following the reverse order of this list:

- AIWorkshop

- scikit-base

- iris-model

- iris-train-pipeline

- iris-train-pipeline-deploy-dev

- iris-train-pipeline-deploy-prd

Also, delete the S3 bucket created by the first CloudFormation stack: mlops-<region>-<accountid>
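
The stack cleanup can also be scripted. The sketch below assumes the stack list above is in creation order, so it deletes from the last entry to the first; the `delete_stacks` helper is hypothetical, and the client passed in would typically be `boto3.client("cloudformation")`.

```python
# Stack names from the list above, assumed to be in creation order.
STACKS_IN_CREATION_ORDER = [
    "AIWorkshop",
    "scikit-base",
    "iris-model",
    "iris-train-pipeline",
    "iris-train-pipeline-deploy-dev",
    "iris-train-pipeline-deploy-prd",
]


def delete_stacks(cloudformation, stack_names=STACKS_IN_CREATION_ORDER):
    """Delete the stacks in reverse order, waiting for each one to finish."""
    deleted = []
    for name in reversed(stack_names):
        cloudformation.delete_stack(StackName=name)
        # Block until CloudFormation reports that the stack is gone.
        cloudformation.get_waiter("stack_delete_complete").wait(StackName=name)
        deleted.append(name)
    return deleted
```

Waiting for each deletion before starting the next avoids removing a stack while another stack that depends on it still exists. The S3 bucket is not covered by this sketch, and S3 only deletes a bucket once it is empty.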

This sample code is made available under a modified MIT license. See the LICENSE file.

Thank you!