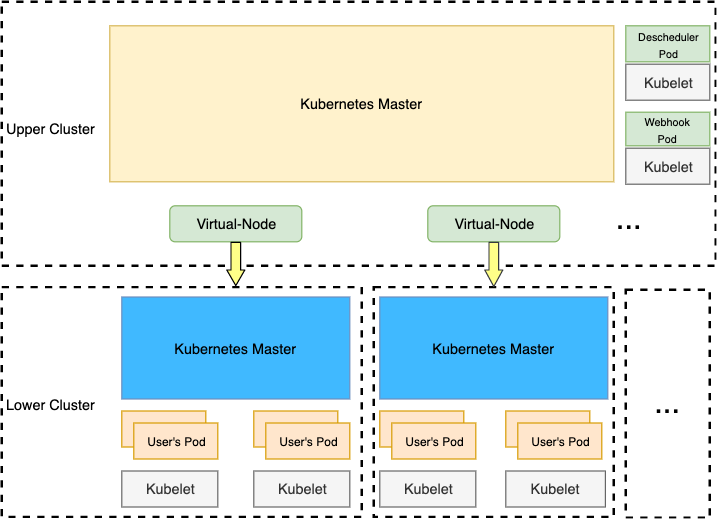

tensile-kube enables Kubernetes clusters to work together. Based on virtual-kubelet, tensile-kube provides the following abilities:

- Automatic discovery of cluster resources

- Asynchronous notification of pod modifications, which reduces the cost of frequent list calls

- Support all actions of `kubectl logs` and `kubectl exec`

- Globally schedule pods to avoid unschedulable pods due to resource fragmentation when using multi-scheduler

- Re-schedule pods that cannot be scheduled in lower clusters by using descheduler

- PV/PVC

- Service

- virtual-node

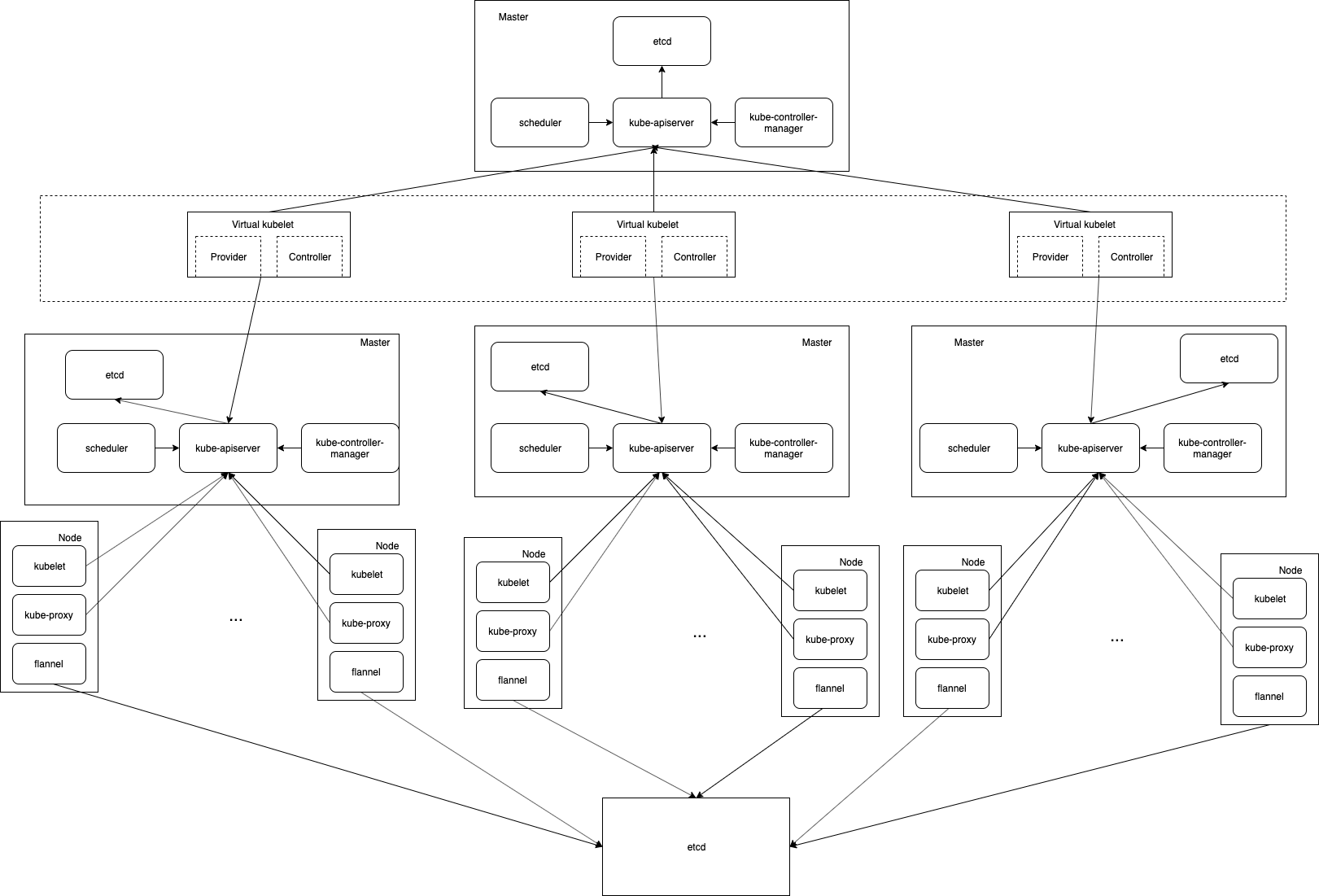

This is a Kubernetes provider implemented on top of virtual-kubelet. Pods created in the upper cluster are synced to the lower cluster. If pods depend on ConfigMaps or Secrets, those dependencies are also created in the lower cluster.
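
The dependency lookup described above can be sketched roughly as follows. This is a minimal Python illustration, not tensile-kube's actual Go code: `pod_dependencies` is a hypothetical helper, the pod is a plain manifest dict, and only volumes, `env[].valueFrom`, `envFrom` and `imagePullSecrets` are inspected.

```python
def pod_dependencies(pod):
    """Return (configmap_names, secret_names) referenced by a pod manifest dict."""
    configmaps, secrets = set(), set()
    spec = pod.get("spec", {})

    # Image pull secrets are referenced by name at the pod level.
    for ref in spec.get("imagePullSecrets", []):
        secrets.add(ref["name"])

    # Volumes can mount ConfigMaps and Secrets.
    for vol in spec.get("volumes", []):
        if "configMap" in vol:
            configmaps.add(vol["configMap"]["name"])
        if "secret" in vol:
            secrets.add(vol["secret"]["secretName"])

    # Containers can read single keys (env) or whole objects (envFrom).
    containers = spec.get("initContainers", []) + spec.get("containers", [])
    for container in containers:
        for env in container.get("env", []):
            source = env.get("valueFrom", {})
            if "configMapKeyRef" in source:
                configmaps.add(source["configMapKeyRef"]["name"])
            if "secretKeyRef" in source:
                secrets.add(source["secretKeyRef"]["name"])
        for source in container.get("envFrom", []):
            if "configMapRef" in source:
                configmaps.add(source["configMapRef"]["name"])
            if "secretRef" in source:
                secrets.add(source["secretRef"]["name"])
    return configmaps, secrets


# Hypothetical pod used only to exercise the helper.
example_pod = {
    "spec": {
        "imagePullSecrets": [{"name": "registry-cred"}],
        "volumes": [{"name": "cfg", "configMap": {"name": "app-config"}}],
        "containers": [{
            "name": "app",
            "env": [{
                "name": "DB_PASSWORD",
                "valueFrom": {"secretKeyRef": {"name": "db-password", "key": "password"}},
            }],
            "envFrom": [{"configMapRef": {"name": "env-config"}}],
        }],
    }
}
configmaps, secrets = pod_dependencies(example_pod)
```

A provider could use such a set of names to decide which objects to create in the lower cluster before it creates the pod itself.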

- multi-scheduler

The scheduler is implemented on top of the K8s scheduling framework. It watches the capacity of all lower clusters and calls the filter while scheduling pods. If the number of available nodes is greater than or equal to 1, the pod can be scheduled. Because this may cost more resources, we add another implementation (descheduler).
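
The filter rule above can be illustrated with a short Python sketch. It assumes the scheduler keeps a cached view of free CPU and memory for every node of a lower cluster; `NodeFree`, `available_nodes` and `cluster_can_host` are hypothetical names, and the real plugin evaluates more than these two resources.

```python
from dataclasses import dataclass


@dataclass
class NodeFree:
    """Free resources on one node of a lower cluster (illustrative model)."""
    cpu_millis: int
    memory_bytes: int


def available_nodes(nodes, cpu_millis, memory_bytes):
    """Count the nodes that can fit the whole pod request on their own."""
    return sum(
        1 for node in nodes
        if node.cpu_millis >= cpu_millis and node.memory_bytes >= memory_bytes
    )


def cluster_can_host(nodes, cpu_millis, memory_bytes):
    """The pod passes the filter if at least one node is available."""
    return available_nodes(nodes, cpu_millis, memory_bytes) >= 1


GIB = 1024 ** 3
# Four nodes with 1 CPU free each: 4 CPUs in total, but fragmented.
fragmented = [NodeFree(1000, 2 * GIB) for _ in range(4)]
fits = cluster_can_host(fragmented, cpu_millis=2000, memory_bytes=GIB)
```

In this example `fits` is false although the cluster has 4 free CPUs in total, which is the resource fragmentation case that a per-node check is meant to catch.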

- descheduler

descheduler is inspired by the K8s descheduler, which cannot satisfy all our requirements, so we changed some of its logic. Some unschedulable pods are re-created by it with some nodeAffinity injected.
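
A rough Python sketch of what injecting nodeAffinity into a re-created pod could look like. It assumes the injected rule excludes the virtual nodes on which the pod was found unschedulable, using a `NotIn` expression on `kubernetes.io/hostname`; that choice and the helper name `inject_node_exclusion` are assumptions for illustration, not a description of the actual implementation.

```python
import copy

HOSTNAME_KEY = "kubernetes.io/hostname"


def inject_node_exclusion(pod, failed_nodes):
    """Return a copy of the pod manifest that must avoid the given nodes."""
    new_pod = copy.deepcopy(pod)
    expression = {
        "key": HOSTNAME_KEY,
        "operator": "NotIn",
        "values": sorted(failed_nodes),
    }
    required = (
        new_pod.setdefault("spec", {})
        .setdefault("affinity", {})
        .setdefault("nodeAffinity", {})
        .setdefault("requiredDuringSchedulingIgnoredDuringExecution", {})
    )
    terms = required.setdefault("nodeSelectorTerms", [])
    if not terms:
        terms.append({"matchExpressions": [expression]})
    else:
        # Terms are ORed and expressions inside a term are ANDed, so the
        # exclusion has to be added to every existing term.
        for term in terms:
            term.setdefault("matchExpressions", []).append(copy.deepcopy(expression))
    return new_pod


original = {"metadata": {"name": "demo"}, "spec": {}}
recreated = inject_node_exclusion(original, {"virtual-node-b", "virtual-node-a"})
```

The original manifest is left untouched, so the caller can delete the old pod and create the returned copy.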

We can run either multi-scheduler or descheduler in the upper cluster, or both.

multi-scheduler is not recommended for large clusters: e.g. when the sum of nodes in the sub clusters is more than 10000, descheduler costs less.

multi-scheduler is the better choice when there are fewer nodes in each cluster, e.g. we have 10 clusters but each cluster only has 100 nodes.

- webhook

The webhook is designed based on the K8s mutating webhook. It converts some fields that can affect the scheduling of pods (not in kube-system) in the upper cluster, e.g. nodeSelector, nodeAffinity and tolerations. Only pods that have the label `virtual-pod:true` are converted. These fields are converted into an annotation as follows:

clusterSelector: '{"tolerations":[{"key":"node.kubernetes.io/not-ready","operator":"Exists","effect":"NoExecute"},{"key":"node.kubernetes.io/unreachable","operator":"Exists","effect":"NoExecute"},{"key":"test","operator":"Exists","effect":"NoExecute"},{"key":"test1","operator":"Exists","effect":"NoExecute"},{"key":"test2","operator":"Exists","effect":"NoExecute"},{"key":"test3","operator":"Exists","effect":"NoExecute"}]}'

These fields are added back when the pods are created in the lower cluster.
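
The conversion and its reversal can be sketched in Python as below. This is an illustration only: the annotation key `clusterSelector` and the `virtual-pod` label come from the text above, while the function names and the JSON key `nodeAffinity` used inside the annotation are assumptions.

```python
import copy
import json

ANNOTATION = "clusterSelector"


def convert_for_upper(pod):
    """Move scheduling fields of a labelled pod into the annotation."""
    meta = pod.get("metadata", {})
    if meta.get("namespace") == "kube-system":
        return pod
    if meta.get("labels", {}).get("virtual-pod") != "true":
        return pod
    new_pod = copy.deepcopy(pod)
    spec = new_pod.setdefault("spec", {})
    saved = {}
    for key in ("nodeSelector", "tolerations"):
        if key in spec:
            saved[key] = spec.pop(key)
    affinity = spec.get("affinity", {})
    if "nodeAffinity" in affinity:
        saved["nodeAffinity"] = affinity.pop("nodeAffinity")
        if not affinity:
            del spec["affinity"]
    if saved:
        annotations = new_pod["metadata"].setdefault("annotations", {})
        annotations[ANNOTATION] = json.dumps(saved, separators=(",", ":"))
    return new_pod


def restore_for_lower(pod):
    """Put the saved scheduling fields back before creating the pod below."""
    new_pod = copy.deepcopy(pod)
    meta = new_pod.get("metadata", {})
    annotations = meta.get("annotations", {})
    raw = annotations.pop(ANNOTATION, None)
    if not annotations:
        meta.pop("annotations", None)
    if raw is None:
        return new_pod
    saved = json.loads(raw)
    spec = new_pod.setdefault("spec", {})
    for key in ("nodeSelector", "tolerations"):
        if key in saved:
            spec[key] = saved[key]
    if "nodeAffinity" in saved:
        spec.setdefault("affinity", {})["nodeAffinity"] = saved["nodeAffinity"]
    return new_pod


# Hypothetical pod used to show the round trip.
pod = {
    "metadata": {"name": "demo", "namespace": "default", "labels": {"virtual-pod": "true"}},
    "spec": {
        "nodeSelector": {"disk": "ssd"},
        "tolerations": [{"key": "test", "operator": "Exists", "effect": "NoExecute"}],
        "containers": [{"name": "app", "image": "nginx"}],
    },
}
upper = convert_for_upper(pod)
lower = restore_for_lower(upper)
```

After the round trip `lower` equals the original `pod`, while `upper` carries no nodeSelector or tolerations, so the scheduler of the upper cluster does not evaluate them against the virtual nodes.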

It is strongly recommended that pods run in the lower clusters and carry the label `virtual-pod:true`, except for pods that must be deployed in kube-system in the upper cluster.

For K8s < 1.16, pods without the label are not converted, but requests are still sent to the webhook.

For K8s >= 1.16, we can use a label selector to enable the webhook only for specified pods.

Overall, the initial idea is that we only run pods in lower clusters.

- If you want to use Services, inter-pod communication across clusters must work: pod A in cluster A must be reachable from pod B in cluster B by IP. The service `kubernetes` in the `default` namespace and other services in `kube-system` would be synced to lower clusters.

- multi-scheduler developed in the repo may cost more resources because it syncs all objects that a scheduler needs from all of the lower clusters.

- descheduler cannot absolutely avoid resource fragmentation.

- PV/PVC only support `WaitForFirstConsumer` for local PV; the scheduler in the upper cluster should ignore `VolumeBindCheck`.

In Tencent Games, we build the Kubernetes clusters on flannel, and all node CIDRs are allocated from the same etcd, so pods can actually access each other directly by IP.
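
Direct pod-to-pod access by IP only works if no two nodes, in any cluster, own overlapping pod CIDRs, which a shared allocator guarantees. For setups where the CIDRs are allocated separately, a quick check along these lines may help. It is a sketch using Python's standard `ipaddress` module; the cluster names and CIDR values are made up.

```python
import ipaddress
from itertools import combinations


def overlapping_cidrs(cidrs_by_cluster):
    """Return every pair of node CIDRs that overlap, across all clusters."""
    networks = [
        (cluster, ipaddress.ip_network(cidr))
        for cluster, cidrs in cidrs_by_cluster.items()
        for cidr in cidrs
    ]
    return [
        (cluster_a, str(net_a), cluster_b, str(net_b))
        for (cluster_a, net_a), (cluster_b, net_b) in combinations(networks, 2)
        if net_a.overlaps(net_b)
    ]


# Hypothetical node CIDRs of two lower clusters.
healthy = {
    "cluster-a": ["10.244.0.0/24", "10.244.1.0/24"],
    "cluster-b": ["10.244.2.0/24"],
}
conflicting = {
    "cluster-a": ["10.244.0.0/24", "10.244.1.0/24"],
    "cluster-b": ["10.244.1.0/25"],
}
no_conflicts = overlapping_cidrs(healthy)
conflicts = overlapping_cidrs(conflicting)
```

Here `no_conflicts` is empty, while `conflicts` reports that `10.244.1.0/24` in `cluster-a` collides with `10.244.1.0/25` in `cluster-b`.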

    git clone https://github.com/virtual-kubelet/tensile-kube.git && make

    --client-burst int            qpi burst for client cluster. (default 1000)
    --client-kubeconfig string    kube config for client cluster.
    --client-qps int              qpi qps for client cluster. (default 500)
    --enable-controllers string   support PVControllers,ServiceControllers, default, all of these (default "PVControllers,ServiceControllers")
    --enable-serviceaccount       enable service account for pods, like spark driver, mpi launcher (default true)
    --ignore-labels string        ignore-labels are the labels we would like to ignore when build pod for client clusters, usually these labels will infulence schedule, default group.batch.scheduler.tencent.com, multi labels should be seperated by comma(,) (default "group.batch.scheduler.tencent.com")
    --log-level string            set the log level, e.g. "debug", "info", "warn", "error" (default "info")
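
As an illustration of the `--ignore-labels` flag, the following Python sketch parses a comma-separated value and drops the listed labels from a pod's label map before the pod is built for a client cluster. The helper names are hypothetical, and matching on the exact label key is an assumption.

```python
DEFAULT_IGNORE_LABELS = "group.batch.scheduler.tencent.com"


def parse_ignore_labels(value):
    """Split the comma-separated flag value into a set of label keys."""
    return {part.strip() for part in value.split(",") if part.strip()}


def strip_ignored_labels(labels, ignore):
    """Return a copy of the label map without the ignored keys."""
    return {key: val for key, val in labels.items() if key not in ignore}


ignore = parse_ignore_labels(DEFAULT_IGNORE_LABELS)
pod_labels = {
    "app": "web",
    "virtual-pod": "true",
    "group.batch.scheduler.tencent.com": "batch-1",
}
client_labels = strip_ignored_labels(pod_labels, ignore)
```

With the default value, `client_labels` keeps `app` and `virtual-pod` and loses the batch scheduler group label.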

    export KUBELET_PORT=10350
    export APISERVER_CERT_LOCATION=/etc/k8s.cer
    export APISERVER_KEY_LOCATION=/etc/k8s.key

    # stdout is redirected first, then stderr, so both end up in node.log
    nohup ./virtual-node --nodename $IP --provider k8s \
      --kube-api-qps 500 --kube-api-burst 1000 \
      --client-qps 500 --client-burst 1000 \
      --kubeconfig /root/server-kube.config --client-kubeconfig /client-kube.config \
      --klog.v 4 --log-level debug > node.log 2>&1 &

    # stdout is redirected first, then stderr, so both end up in multi.log
    nohup ./multi-scheduler --v=5 --config=./multi-scheduler-config.json \
      --authentication-kubeconfig=/etc/kubernetes/scheduler.conf \
      --authorization-kubeconfig=/etc/kubernetes/scheduler.conf \
      --bind-address=127.0.0.1 --kubeconfig=/etc/kubernetes/scheduler.conf \
      --leader-elect=true > multi.log 2>&1 &

multi-scheduler-config.json is as follows:

    {
      "kind": "KubeSchedulerConfiguration",
      "apiVersion": "kubescheduler.config.k8s.io/v1alpha1",
      "clientConnection": {
        "kubeconfig": "~/root/kube/config"
      },
      "leaderElection": {
        "leaderElect": true
      },
      "plugins": {
        "filter": {
          "enabled": [
            {
              "name": "multi-scheduler"
            }
          ]
        }
      },
      "pluginConfig": [
        {
          "name": "multi-scheduler",
          "args": {
            "cluster_configuration": {
              "test": {
                "kube_config": "/root/cloud.config"
              }
            }
          }
        }
      ]
    }
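
Before starting the scheduler, it can be useful to check that the config enables the filter plugin and lists the expected lower clusters. The following Python sketch does that for a config shaped like the one above; `lower_clusters` is a hypothetical helper and not part of tensile-kube.

```python
import json


def lower_clusters(config_text, plugin="multi-scheduler"):
    """Return {cluster name: kubeconfig path} from the plugin arguments."""
    config = json.loads(config_text)
    enabled = [
        entry["name"]
        for entry in config.get("plugins", {}).get("filter", {}).get("enabled", [])
    ]
    if plugin not in enabled:
        raise ValueError(f"filter plugin {plugin!r} is not enabled")
    for entry in config.get("pluginConfig", []):
        if entry.get("name") == plugin:
            clusters = entry.get("args", {}).get("cluster_configuration", {})
            return {name: item["kube_config"] for name, item in clusters.items()}
    raise ValueError(f"no pluginConfig entry for {plugin!r}")


# Same structure as multi-scheduler-config.json, trimmed to the relevant keys.
SAMPLE_CONFIG = """
{
  "kind": "KubeSchedulerConfiguration",
  "plugins": {"filter": {"enabled": [{"name": "multi-scheduler"}]}},
  "pluginConfig": [
    {
      "name": "multi-scheduler",
      "args": {"cluster_configuration": {"test": {"kube_config": "/root/cloud.config"}}}
    }
  ]
}
"""
clusters = lower_clusters(SAMPLE_CONFIG)
```

For the sample, `clusters` maps the cluster name `test` to `/root/cloud.config`; a config without the plugin enabled raises `ValueError` instead of failing later at scheduling time.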

It is recommended to deploy the webhook in a K8s cluster:

- replace the ${caBoudle}, ${cert}, ${key} with yours

- replace the image of webhook

- deploy it in K8s cluster

kubectl apply -f hack/webhook.yaml

- replace the image of descheduler with yours

- deploy it in K8s cluster

kubectl apply -f hack/descheduler.yaml

- Weidong Cai from Tencent Games

- Ye Yin from Tencent Games