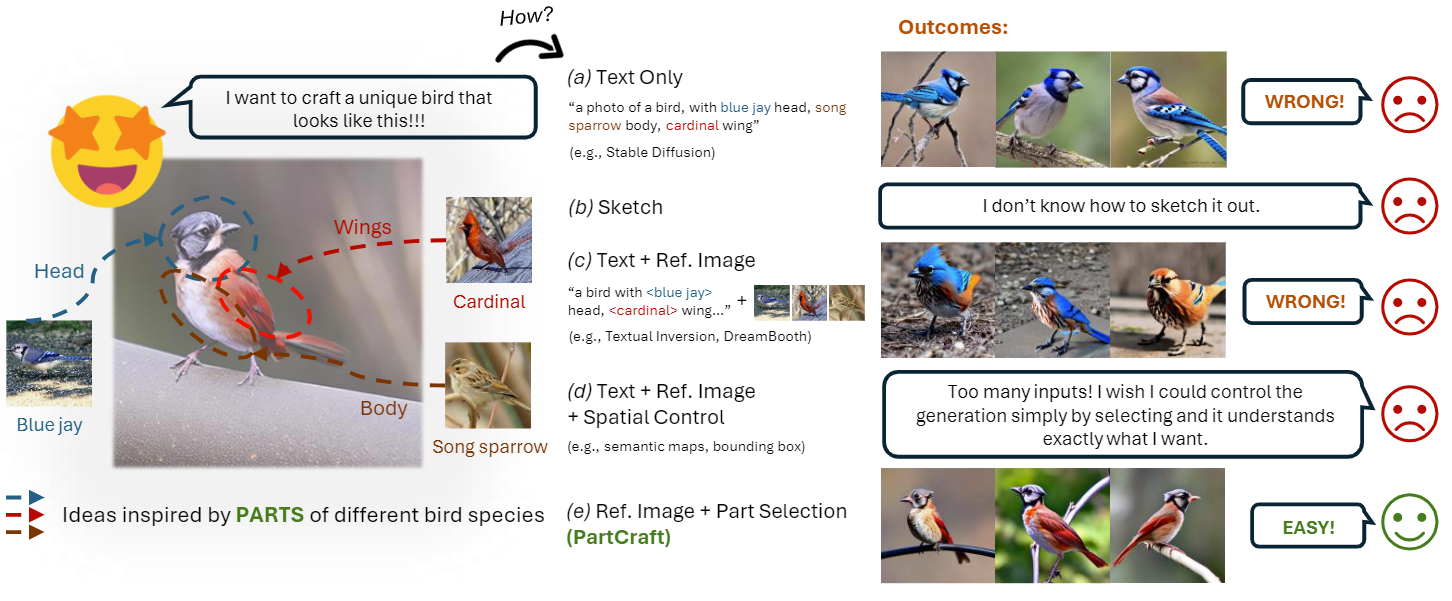

Abstract: This paper propels creative control in generative visual AI by allowing users to "select". Departing from traditional text- or sketch-based methods, we allow users, for the first time, to choose visual concepts by parts for their creative endeavors. The outcome is fine-grained generation that precisely captures the selected visual concepts while keeping the result holistically faithful and plausible. To achieve this, we first parse objects into parts through unsupervised feature clustering. Then, we encode parts into text tokens and introduce an entropy-based normalized attention loss that operates on them. This loss design enables our model to learn generic prior topology knowledge about objects' part composition, and to generalize to novel part compositions so that the generation looks holistically faithful. Lastly, we employ a bottleneck encoder to project the part tokens. This not only enhances fidelity but also accelerates learning, by leveraging shared knowledge and facilitating information exchange among instances. Visual results in the paper and supplementary material showcase the compelling power of PartCraft in crafting highly customized, innovative creations, exemplified by the "charming" and creative birds.
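The abstract names an entropy-based normalized attention loss over the part tokens but does not spell out its form. The sketch below shows one plausible reading, offered as an assumption rather than as the repository's implementation: the cross-attention maps of the part tokens are normalized across parts at every spatial location, and a cross-entropy is taken against the part segmentation masks. The function name, the array shapes, and the `eps` constant are all hypothetical; consult the paper and the training code for the actual formulation.

```python
import numpy as np


def normalized_attention_loss(attn: np.ndarray, masks: np.ndarray, eps: float = 1e-8) -> float:
    """Cross-entropy between part masks and part-normalized attention maps.

    attn:  (M, H, W) non-negative attention maps, one per part token (assumed shape).
    masks: (M, H, W) binary part masks, one-hot over M at each location (assumed).
    """
    # Normalize over the part axis so each location holds a distribution over parts.
    attn_norm = attn / (attn.sum(axis=0, keepdims=True) + eps)
    # Per-location cross-entropy with the mask as the target distribution.
    ce = -(masks * np.log(attn_norm + eps)).sum(axis=0)
    return float(ce.mean())


# Toy check with 3 parts on a 4x4 grid: every location belongs to part 0.
masks = np.zeros((3, 4, 4))
masks[0] = 1.0
perfect = normalized_attention_loss(masks.copy(), masks)      # attention matches masks
uniform = normalized_attention_loss(np.ones((3, 4, 4)), masks)  # attention is uninformative
print(perfect < uniform)
```

Under this reading, attention that already matches the masks gives a loss near zero, while uniform attention over M parts gives a loss near log M, so minimizing it pushes each part token to attend to its own region.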

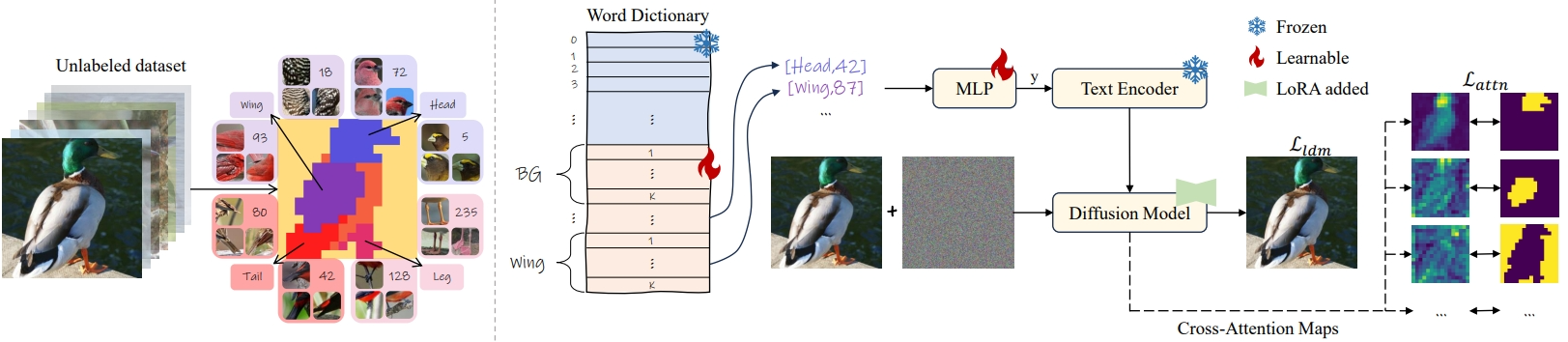

Overview of our PartCraft. (Left) Part discovery within a semantic hierarchy involves partitioning each image into distinct parts and forming semantic clusters across unlabeled training data. (Right) All parts are organized into a dictionary, and their semantic embeddings are learned through a textual inversion approach. For instance, a text description like "a photo of a [Head,42] [Wing,87]..." guides the optimization of the corresponding textual embedding by reconstructing the associated image. To improve generation fidelity, we incorporate a bottleneck encoder to project the part tokens.
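The placeholder-token prompt described in the overview can be illustrated with a small helper. This is a minimal sketch, assuming each selected part is identified by a part name and a cluster index; `build_part_prompt` and the exact token spelling are hypothetical and only mirror the `[Head,42] [Wing,87]` example in the caption, not the repository's API.

```python
from typing import Mapping


def build_part_prompt(parts: Mapping[str, int], prefix: str = "a photo of a") -> str:
    """Format selected parts as placeholder tokens such as "[Head,42]"."""
    # One token per selected part, in the order the caller supplied them.
    tokens = [f"[{name},{index}]" for name, index in parts.items()]
    return " ".join([prefix, *tokens])


# Combine a head from cluster 42 with a wing from cluster 87 (indices taken from the caption).
prompt = build_part_prompt({"Head": 42, "Wing": 87})
print(prompt)  # a photo of a [Head,42] [Wing,87]
```

Mixing parts from different source concepts then amounts to choosing a different cluster index per part name before building the prompt.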

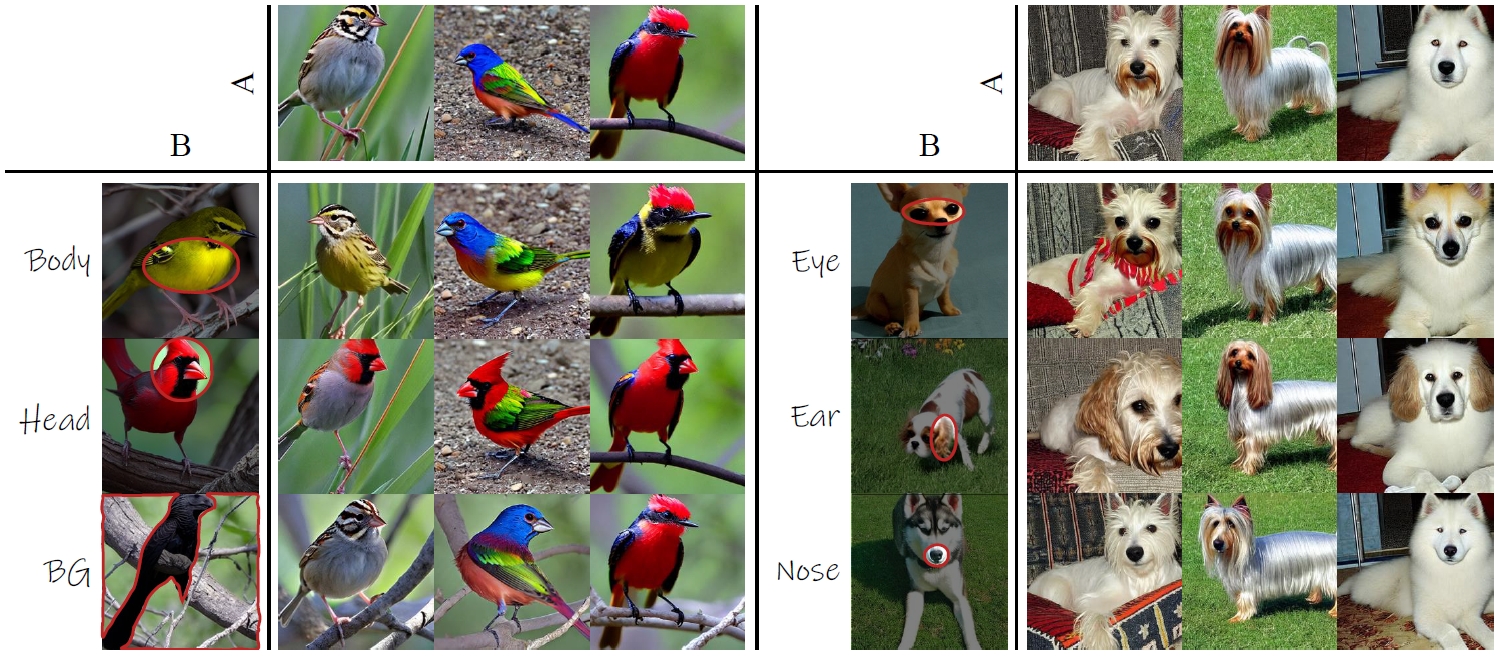

Integrating a specific part (e.g., body, head, or even background) of a source concept B into the target concept A.

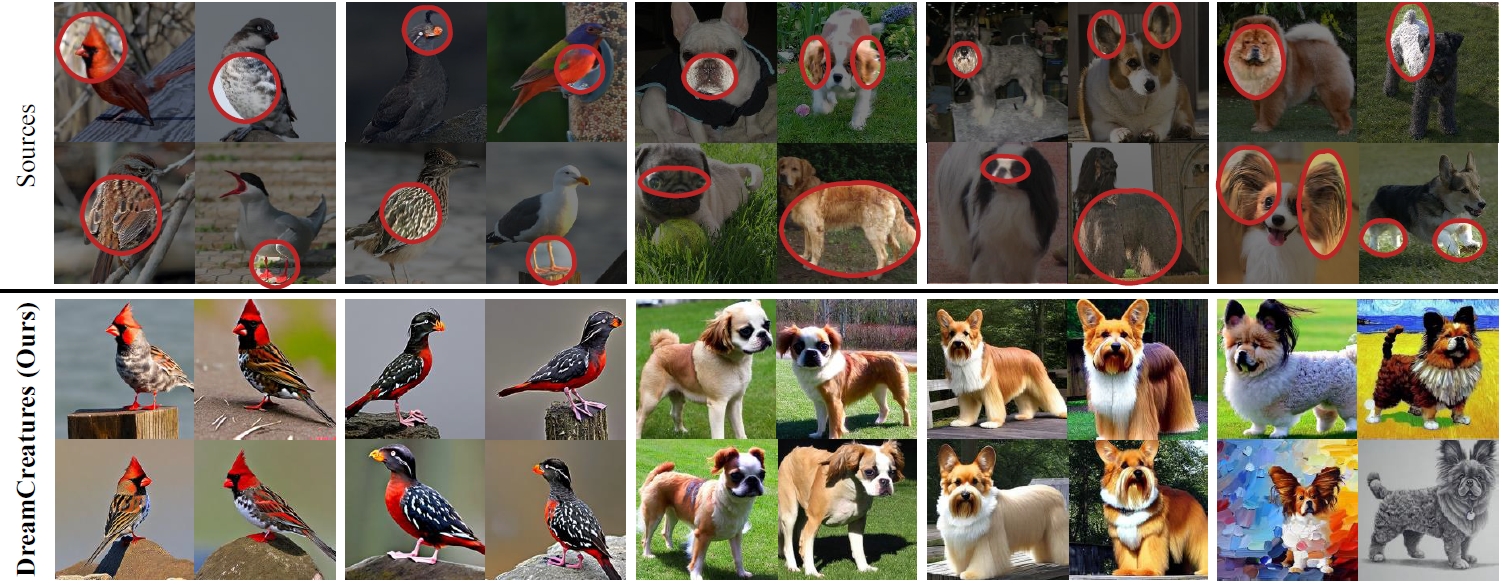

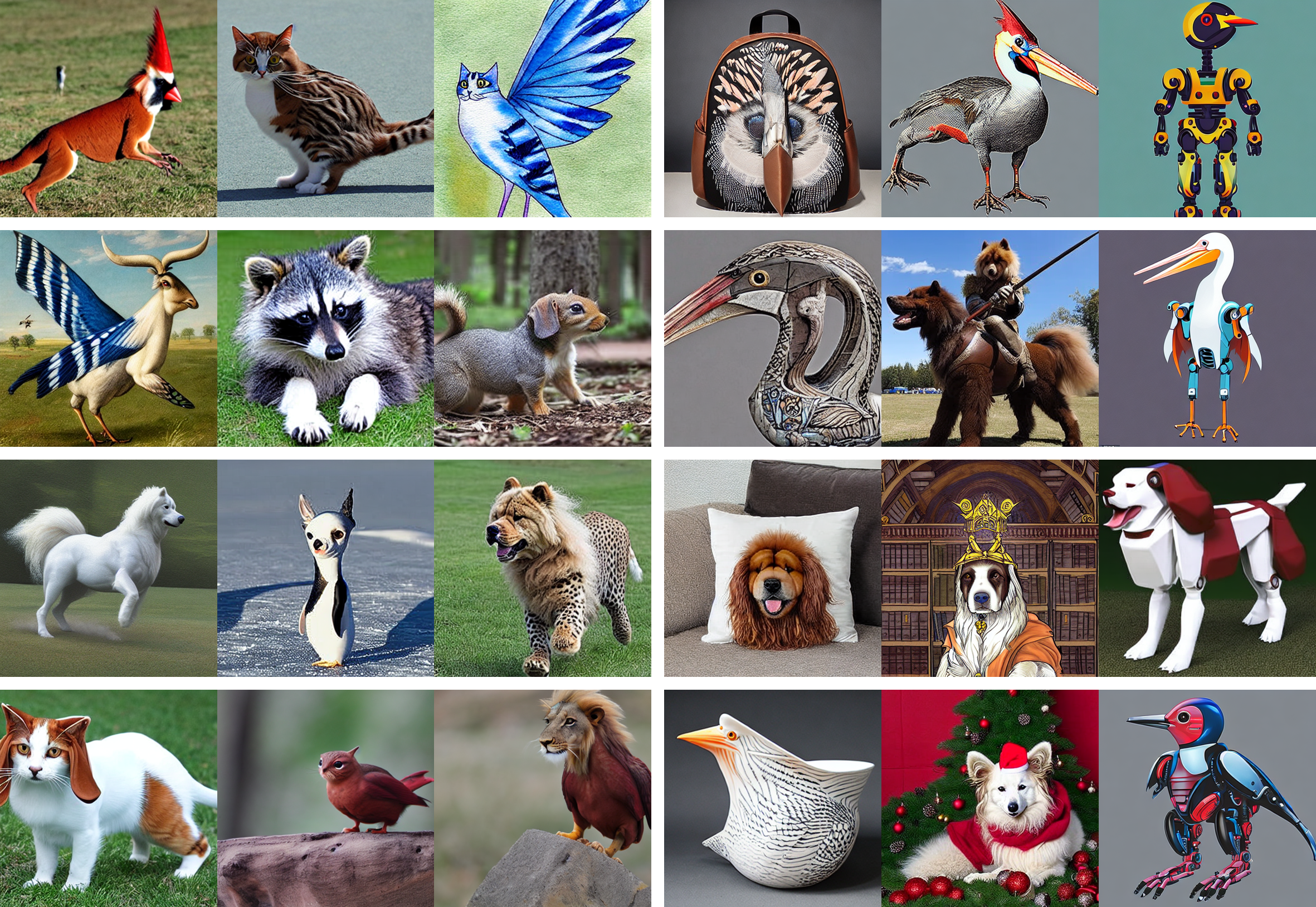

Mixing 4 different species:

More examples:

Creative generation:

- A demo is available on the `kamwoh/dreamcreature` Hugging Face Space. (Very slow, because it runs on CPU only.)

- You can also run the demo on Colab.

- You can use the gradio demo locally by running `python app.py` or `gradio_demo_cub200.py` or `gradio_demo_dog.py` in the `src` folder.

- Check out `train_kmeans_segmentation.ipynb` to obtain a DINO-based KMeans segmentation that can segment the parts. This produces the "attention mask" used during training.

- When no labels are available, the KMeans labels can serve as supervision. Otherwise, supervised labels (such as the ground-truth class) can be used, which gives higher reconstruction quality.

- Check out `run_sd_sup.sh` or `run_sd_unsup.sh` for training. All hyperparameters in these scripts are the ones used in the paper.

- An SDXL version is also available (see `run_sdxl_sup.sh`), but due to resource limitations we could not train it efficiently, so there is no pre-trained SDXL model.
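The segmentation step mentioned in the training notes can be sketched roughly as follows. This is an illustration under stated assumptions, not the notebook's code: random vectors stand in for DINO patch features, a plain NumPy Lloyd's k-means replaces whatever clustering the notebook uses, and the names `kmeans_labels` and `part_masks` are hypothetical.

```python
import numpy as np


def kmeans_labels(features: np.ndarray, k: int, iters: int = 20, seed: int = 0) -> np.ndarray:
    """Cluster row vectors with Lloyd's algorithm and return one label per row."""
    rng = np.random.default_rng(seed)
    features = features.astype(np.float64)
    # Initialize centroids from k distinct rows (fancy indexing returns a copy).
    centroids = features[rng.choice(len(features), size=k, replace=False)]
    for _ in range(iters):
        # Squared distance from every row to every centroid: shape (N, k).
        dists = ((features[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=-1)
        labels = dists.argmin(axis=1)
        for c in range(k):
            members = features[labels == c]
            if len(members):  # keep the old centroid if a cluster is empty
                centroids[c] = members.mean(axis=0)
    return labels


def part_masks(labels: np.ndarray, grid: tuple, k: int) -> np.ndarray:
    """Turn per-patch labels into k binary masks of shape (k, H, W)."""
    label_map = labels.reshape(grid)
    return np.stack([label_map == c for c in range(k)]).astype(np.float32)


# Stand-in for DINO patch features: a 16x16 patch grid with 8-dim vectors.
rng = np.random.default_rng(0)
feats = rng.normal(size=(16 * 16, 8))
masks = part_masks(kmeans_labels(feats, k=4), grid=(16, 16), k=4)
print(masks.shape)  # (4, 16, 16)
```

Each patch falls into exactly one cluster, so the masks partition the grid. In the unsupervised setting the same cluster labels could double as the supervision signal described above.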

- The original paper title was: "DreamCreature: Crafting Photorealistic Virtual Creatures from Imagination"

- Pre-trained model on unsupervised KMeans Labels as we used in the paper (CUB200)

- Pre-trained model on unsupervised KMeans Labels as we used in the paper (Stanford Dogs)

- Evaluation script (EMR & CoSim)

- Update readme

- Update website

@inproceedings{ng2024partcraft,
  title={PartCraft: Crafting Creative Objects by Parts},
  author={Kam Woh Ng and Xiatian Zhu and Yi-Zhe Song and Tao Xiang},
  booktitle={ECCV},
  year={2024}
}