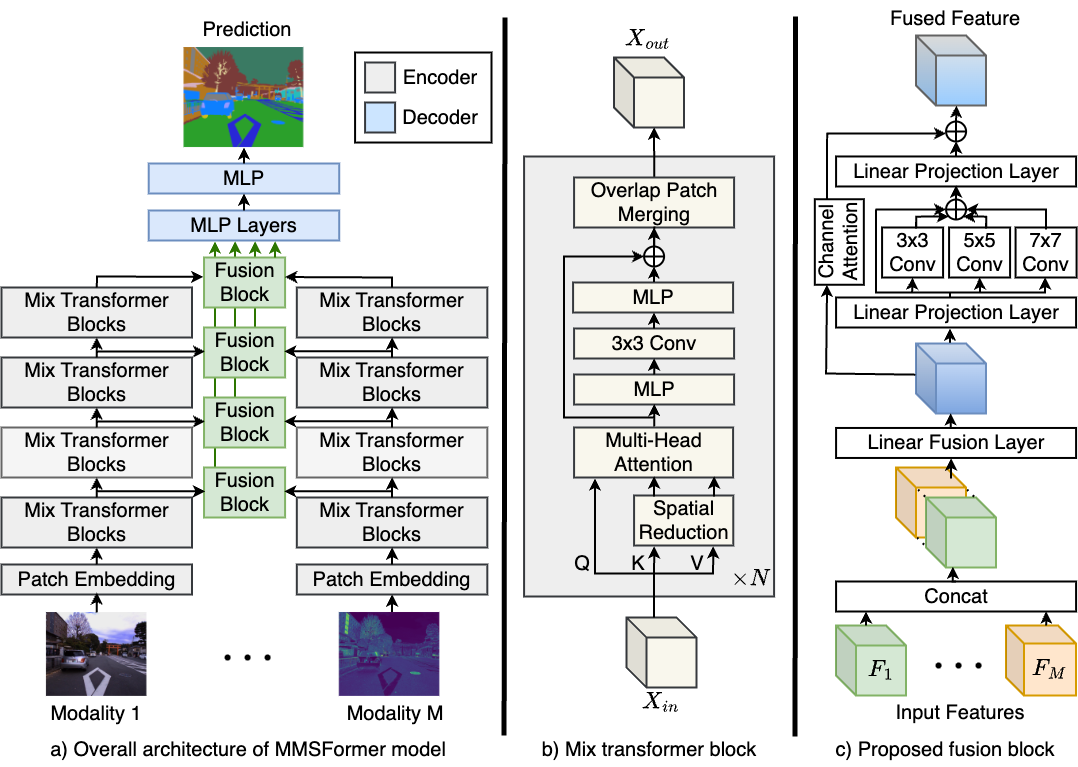

Leveraging information across diverse modalities is known to enhance performance on multimodal segmentation tasks. However, effectively fusing information from different modalities remains challenging because each modality has its own characteristics. In this paper, we propose a novel fusion strategy that can effectively fuse information from different modality combinations. We also propose a new model named Multi-Modal Segmentation TransFormer (MMSFormer) that incorporates the proposed fusion strategy to perform multimodal material and semantic segmentation tasks. MMSFormer outperforms current state-of-the-art models on three different datasets. Starting from a single input modality, performance improves progressively as additional modalities are incorporated, which shows that the fusion block effectively combines useful information from diverse input modalities. Ablation studies show that the different modules in the fusion block are crucial for overall model performance. Our ablation studies also highlight how different input modalities improve the identification of different types of materials.

For more details, please check our arXiv paper.

- 09/2023: Init repository.

- 09/2023: Release the code for MMSFormer.

- 09/2023: Release MMSFormer model weights. Download from GoogleDrive.

- 01/2024: Update code, description and pretrained weights.

- 04/2024: Accepted by IEEE Open Journal of Signal Processing.

First, create and activate the environment using the following commands:

conda env create -f environment.yaml

conda activate mmsformer

Download the datasets:

- MCubeS, for multimodal material segmentation with RGB-A-D-N modalities.

- FMB, for FMB dataset with RGB-Infrared modalities.

- PST, for PST900 dataset with RGB-Thermal modalities.

Then, place the datasets under the data directory as follows:

data/

├── MCubeS

│ ├── polL_color

│ ├── polL_aolp_sin

│ ├── polL_aolp_cos

│ ├── polL_dolp

│ ├── NIR_warped

│ ├── NIR_warped_mask

│ ├── GT

│ ├── SSGT4MS

│ ├── list_folder

│ └── SS

├── FMB

│ ├── test

│ │ ├── color

│ │ ├── Infrared

│ │ ├── Label

│ │ └── Visible

│ ├── train

│ │ ├── color

│ │ ├── Infrared

│ │ ├── Label

│ │ └── Visible

├── PST

│ ├── test

│ │ ├── rgb

│ │ ├── thermal

│ │ └── labels

│ ├── train

│ │ ├── rgb

│ │ ├── thermal

│ │ └── labels
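Before launching a run, it can help to confirm that the folders match the tree above. The following is a minimal sketch, not part of the repository: the helper name `missing_dirs` and the `EXPECTED_LAYOUT` table are hypothetical, and the folder names are simply copied from the listing above.

```python
from pathlib import Path

# Expected sub-directories per dataset, copied from the directory tree above.
EXPECTED_LAYOUT = {
    "MCubeS": [
        "polL_color", "polL_aolp_sin", "polL_aolp_cos", "polL_dolp",
        "NIR_warped", "NIR_warped_mask", "GT", "SSGT4MS", "list_folder", "SS",
    ],
    "FMB": [f"{split}/{name}" for split in ("test", "train")
            for name in ("color", "Infrared", "Label", "Visible")],
    "PST": [f"{split}/{name}" for split in ("test", "train")
            for name in ("rgb", "thermal", "labels")],
}


def missing_dirs(data_root, dataset):
    """Return the expected sub-directories of `dataset` that are absent under `data_root`."""
    base = Path(data_root) / dataset
    return [rel for rel in EXPECTED_LAYOUT[dataset] if not (base / rel).is_dir()]


if __name__ == "__main__":
    # Hypothetical usage: run from the repository root, where `data/` lives.
    for name in EXPECTED_LAYOUT:
        missing = missing_dirs("data", name)
        status = "ok" if not missing else "missing: " + ", ".join(missing)
        print(f"{name}: {status}")
```

The check only looks at folder names; it does not validate the files inside them.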

| Model-Modal | mIoU (%) | Weights |
|---|---|---|
| MCubeS-RGB | 50.44 | GoogleDrive |
| MCubeS-RGB-A | 51.30 | GoogleDrive |
| MCubeS-RGB-A-D | 52.03 | GoogleDrive |
| MCubeS-RGB-A-D-N | 53.11 | GoogleDrive |

| Model-Modal | mIoU (%) | Weights |
|---|---|---|
| FMB-RGB | 57.17 | GoogleDrive |
| FMB-RGB-Infrared | 61.68 | GoogleDrive |

| Model-Modal | mIoU (%) | Weights |
|---|---|---|
| PST-RGB-T | 87.45 | GoogleDrive |
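For readers unfamiliar with the metric in the tables above, mIoU (mean intersection over union) averages, over classes, the ratio of correctly labelled pixels to the union of predicted and ground-truth pixels for that class. The snippet below is a minimal sketch of that standard definition in plain Python. The function name `mean_iou` is hypothetical, and the repository's own evaluation code may differ in details such as ignored labels or how statistics are accumulated over the dataset.

```python
def mean_iou(pred, target, num_classes):
    """Mean IoU (in percent) over classes present in the prediction or ground truth.

    `pred` and `target` are flat sequences of integer class labels of equal length.
    """
    intersection = [0] * num_classes
    pred_count = [0] * num_classes
    target_count = [0] * num_classes
    for p, t in zip(pred, target):
        pred_count[p] += 1
        target_count[t] += 1
        if p == t:
            intersection[p] += 1

    ious = []
    for c in range(num_classes):
        union = pred_count[c] + target_count[c] - intersection[c]
        if union > 0:  # skip classes that appear in neither sequence
            ious.append(intersection[c] / union)

    if not ious:  # no labelled pixels at all
        return 0.0
    return 100.0 * sum(ious) / len(ious)


if __name__ == "__main__":
    # Toy example with three classes and six pixels.
    prediction = [0, 0, 1, 1, 2, 2]
    ground_truth = [0, 1, 1, 1, 2, 0]
    print(f"mIoU: {mean_iou(prediction, ground_truth, num_classes=3):.2f}")
```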

Before training, please download the pre-trained SegFormer weights and place them in the following directory structure:

checkpoints/pretrained/segformer

├── mit_b0.pth

├── mit_b1.pth

├── mit_b2.pth

├── mit_b3.pth

└── mit_b4.pth

To train an MMSFormer model, update the relevant configuration file in configs/ with the correct paths and hyper-parameters. Then run the command for your dataset:

cd path/to/MMSFormer

conda activate mmsformer

python -m tools.train_mm --cfg configs/mcubes_rgbadn.yaml

python -m tools.train_mm --cfg configs/fmb_rgbt.yaml

python -m tools.train_mm --cfg configs/pst_rgbt.yaml

To evaluate MMSFormer models, download the respective model weights (GoogleDrive) and save them in any folder you like.

Then, update the EVAL section of the appropriate configuration file in configs/ and run:

cd path/to/MMSFormer

conda activate mmsformer

python -m tools.val_mm --cfg configs/mcubes_rgbadn.yaml

python -m tools.val_mm --cfg configs/fmb_rgbt.yaml

python -m tools.val_mm --cfg configs/pst_rgbt.yaml

This repository is released under the Apache-2.0 license. For commercial use, please contact the authors.

If you use the MMSFormer model, please cite the following work:

- MMSFormer [arXiv]

@ARTICLE{Reza2024MMSFormer,

author={Reza, Md Kaykobad and Prater-Bennette, Ashley and Asif, M. Salman},

journal={IEEE Open Journal of Signal Processing},

title={MMSFormer: Multimodal Transformer for Material and Semantic Segmentation},

year={2024},

volume={},

number={},

pages={1-12},

keywords={Image segmentation;Feature extraction;Transformers;Task analysis;Fuses;Semantic segmentation;Decoding;multimodal image segmentation;material segmentation;semantic segmentation;multimodal fusion;transformer},

doi={10.1109/OJSP.2024.3389812}

}

Our codebase is based on the following public GitHub repositories, and we thank their authors:

Note: This is a research-level repository and might contain issues or bugs. Please contact the authors with any questions.