Optimization shows up everywhere in machine learning, from the ubiquitous gradient descent, to quadratic programming in SVM, to expectation-maximization algorithm in Gaussian mixture models.

However, one aspect of optimization that always puzzled me is duality: what on earth is a primal form and dual form of an optimization problem, and what good do they really serve?

Therefore, in this project, I will:

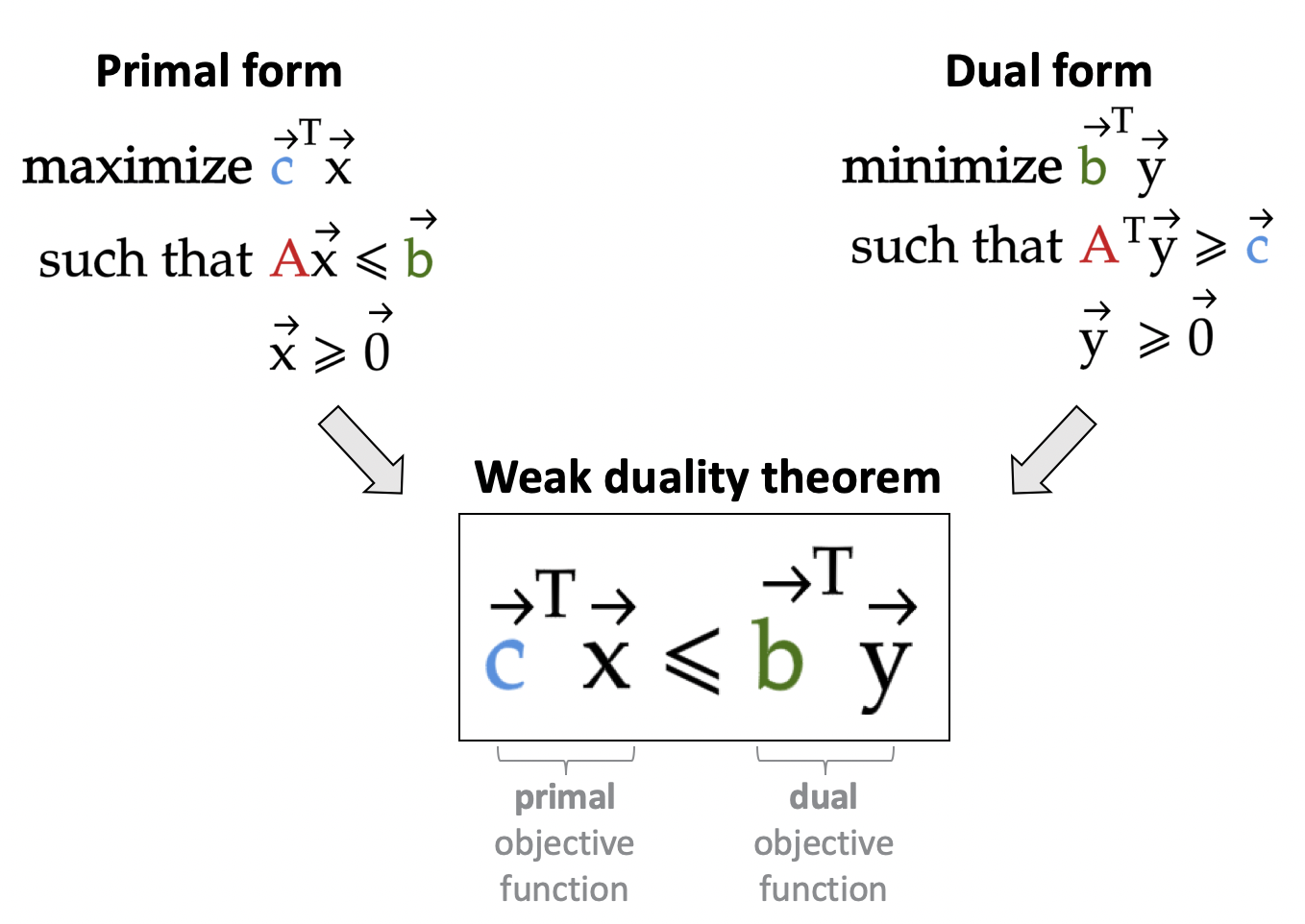

- Go over the primal and dual forms for the most basic of optimization problem: linear programming.

- Show that by solving one form of the optimization problem, we will have also solved the other one. This requires us to prove two fundamental duality theorems in linear programming: weak duality theorem and strong duality theorem.

- Explain why we should care about duality by showing its application to some data science problems.