EvalAI is an open source web application that helps researchers, students and data scientists create, collaborate on and participate in AI challenges organized around the globe.

In recent years, it has become increasingly difficult to compare an algorithm solving a given task with other existing approaches. These comparisons suffer from minor differences in algorithm implementation, use of non-standard dataset splits and different evaluation metrics. By providing a central leaderboard and submission interface, together with swift and robust backends based on map-reduce frameworks that speed up evaluation on the fly, EvalAI aims to make it easier for researchers to reproduce results from technical papers and perform reliable and accurate quantitative analyses.

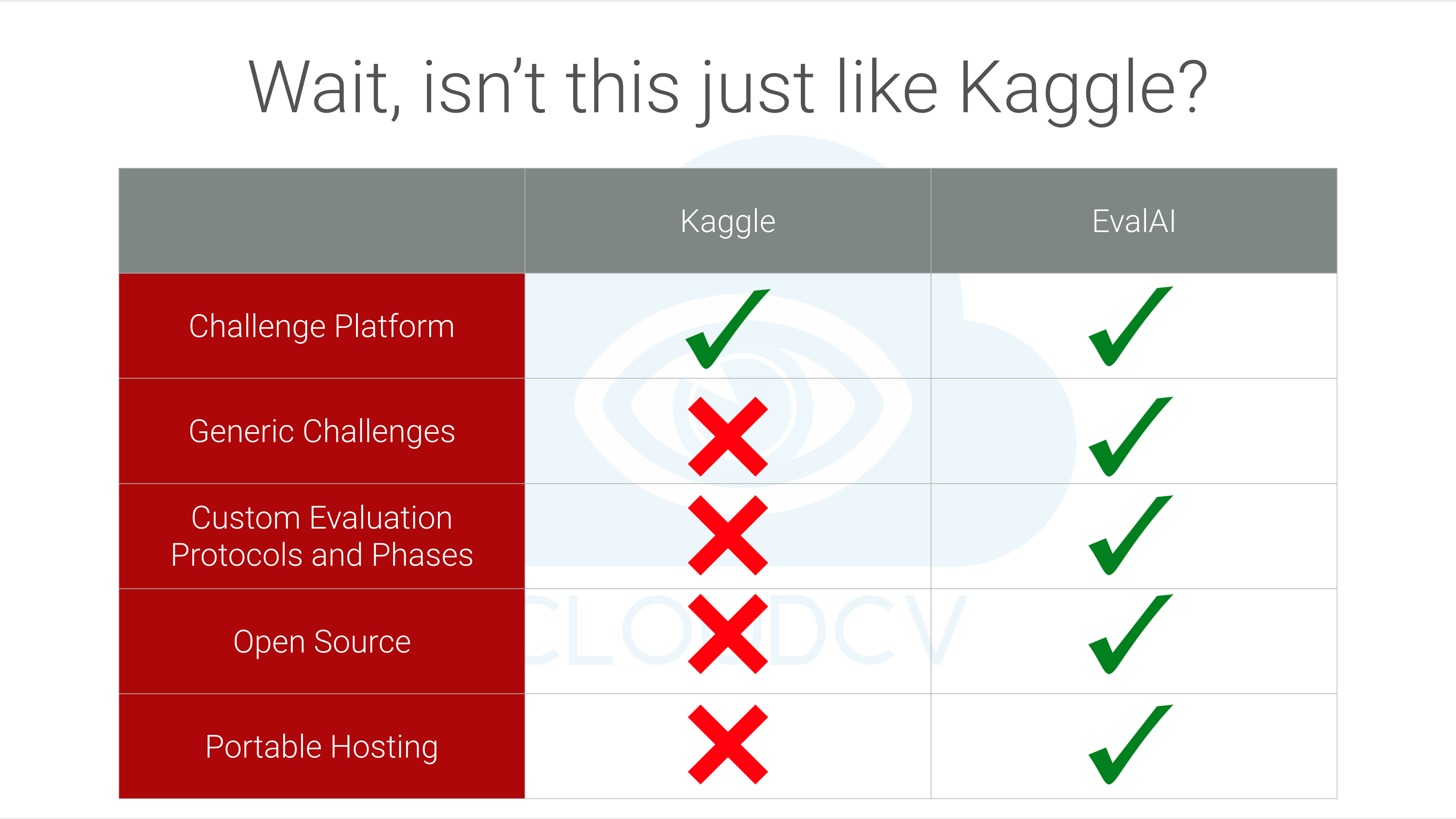

A question we’re often asked is: Doesn’t Kaggle already do this? The central differences are:

- Custom Evaluation Protocols and Phases: We have designed a versatile backend framework that supports user-defined evaluation metrics, multiple evaluation phases, and private and public leaderboards.

- Faster Evaluation: The backend evaluation pipeline is engineered so that submissions can be evaluated in parallel using multiple cores on multiple machines via map-reduce frameworks, offering a significant performance boost over similar web AI-challenge platforms.

- Portability: Since the platform is open source, users are free to host challenges on their own private servers rather than having to depend on cloud services such as AWS, Azure, etc.

- Easy Hosting: Hosting a challenge is streamlined. One can create the challenge on EvalAI using the intuitive UI (work in progress) or using a zip configuration file.

- Centralized Leaderboard: Whether challenge organizers host their challenge on EvalAI or on a forked version of EvalAI, they can send the results to the main EvalAI server. This helps build a centralized platform to keep track of different challenges.
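To make the "Custom Evaluation Protocols" and "Faster Evaluation" points concrete, here is a minimal sketch of a map-reduce style evaluation in Python. It is not EvalAI's actual backend code: the function names (`split_into_chunks`, `score_chunk`, `evaluate`), the exact-match accuracy metric and the `map_fn` parameter are all assumptions made for illustration.

```python
from functools import reduce


def split_into_chunks(items, num_chunks):
    """Split a list into at most num_chunks contiguous, roughly equal parts."""
    size = max(1, -(-len(items) // num_chunks))  # ceiling division
    return [items[i:i + size] for i in range(0, len(items), size)]


def score_chunk(chunk):
    """Map step: return (correct, total) for one chunk of (prediction, truth) pairs.

    Hypothetical metric: exact-match accuracy. A user-defined metric would
    replace this function.
    """
    correct = sum(1 for predicted, truth in chunk if predicted == truth)
    return correct, len(chunk)


def evaluate(predictions, ground_truth, num_chunks=4, map_fn=map):
    """Score a submission chunk by chunk, then combine the partial results.

    map_fn defaults to the built-in (sequential) map; any callable with the
    same (function, iterable) shape can be passed instead.
    """
    pairs = list(zip(predictions, ground_truth))
    partials = map_fn(score_chunk, split_into_chunks(pairs, num_chunks))
    # Reduce step: add up the per-chunk (correct, total) counts.
    correct, total = reduce(
        lambda a, b: (a[0] + b[0], a[1] + b[1]), partials, (0, 0)
    )
    return correct / total if total else 0.0


accuracy = evaluate(["a", "b", "c", "d"], ["a", "b", "x", "d"])
print(accuracy)
```

Passing the `map` method of a `concurrent.futures.ProcessPoolExecutor` as `map_fn` would spread the chunks across CPU cores without changing the reduce step, and swapping `score_chunk` for another function is where a user-defined metric would plug in.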

Our ultimate goal is to build a centralized platform to host, participate in and collaborate on AI challenges organized around the globe, and we hope to help in benchmarking progress in AI.

Some background: The Visual Question Answering (VQA) Challenge 2016, hosted on another platform, took ~10 minutes to evaluate a submission. EvalAI hosted the VQA Challenge 2017 and the VQA Challenge 2018, whose dataset is twice as large. Despite this, we’ve found that our parallelized backend takes only ~130 seconds to evaluate on the whole test set of the VQA 2.0 dataset.
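As a rough back-of-the-envelope reading of these figures, the sketch below converts the two approximate timings into a speedup. It treats "~10 minutes" as 600 seconds and assumes, purely for illustration, that evaluation cost scales linearly with dataset size; the variable names are ours.

```python
# Approximate figures quoted in the text above.
vqa_2016_seconds = 10 * 60   # ~10 minutes per submission on the earlier platform
evalai_seconds = 130         # ~130 seconds per submission on EvalAI
dataset_growth = 2           # the newer dataset is about twice as large

# Wall-clock speedup, ignoring the change in dataset size.
wall_clock_speedup = vqa_2016_seconds / evalai_seconds

# Speedup per unit of data, ASSUMING cost grows linearly with dataset size.
normalized_speedup = wall_clock_speedup * dataset_growth

print(f"wall-clock speedup: ~{wall_clock_speedup:.1f}x")
print(f"size-normalized speedup: ~{normalized_speedup:.1f}x")
```

With these inputs the script reports roughly 4.6x in wall-clock terms and roughly 9.2x once the larger dataset is taken into account. Both numbers inherit the imprecision of the "~" figures they are built from.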

Setting up EvalAI on your local machine is really easy. You can set up EvalAI using Docker. The steps are:

- Install docker and docker-compose on your machine.

- Get the source code on to your machine via git.

      git clone https://github.com/Cloud-CV/EvalAI.git evalai && cd evalai

- Build and run the Docker containers. This might take a while.

      docker-compose up --build

That's it. Open a web browser and go to http://127.0.0.1:8888. Three users are created by default:

- SUPERUSER: username: admin, password: password

- HOST USER: username: host, password: password

- PARTICIPANT USER: username: participant, password: password
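If the page does not load right away, the containers may still be starting. The following is a small, hedged helper for checking whether anything is answering at the local URL; `is_reachable` is a hypothetical function written for this README, not part of EvalAI, and it only uses the Python standard library.

```python
import http.client
import urllib.error
import urllib.request


def is_reachable(url, timeout=5.0):
    """Return True if an HTTP request to url receives any HTTP response."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server answered, just not with a success status.
        return True
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        # Connection refused, timeout, malformed response and similar failures.
        return False


if __name__ == "__main__":
    url = "http://127.0.0.1:8888"  # the local address given above
    print(f"{url} reachable: {is_reachable(url)}")
```

A `False` result only says that nothing responded within the timeout; it does not say why, so the container logs remain the place to look for the actual cause.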

If you run into any issues during installation, please see our common errors during installation page.

EvalAI is currently maintained by Deshraj Yadav, Akash Jain, Taranjeet Singh, Shiv Baran Singh and Rishabh Jain. A non-exhaustive list of other major contributors includes: Harsh Agarwal, Prithvijit Chattopadhyay, Devi Parikh and Dhruv Batra.

If you are interested in contributing to EvalAI, follow our contribution guidelines.