This is a simple REST service for hosting pre-trained Keras models. The service consists of three modules running in separate Docker containers: a REST API built with the Flask microframework and running behind a uWSGI server, a model server, and a Redis database that stores images and their corresponding predictions. The entire stack can be deployed with the provided Docker Compose files.

The REST API accepts user requests containing image files and passes the images to the Redis database. The model server continuously fetches images from Redis, makes batch predictions using a pre-trained Keras model, and stores the results back in Redis. Once the results for a submitted image are ready, the web service returns the predictions to the user in a JSON response.
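
The hand-off described above can be sketched in a few lines of Python. This is a minimal illustration rather than the project's actual code: the key name `image_queue`, the function names, and the JSON/hex encoding of queued images are all assumptions, and `InMemoryStore` is a hypothetical stand-in that mimics only the few Redis-style calls used here (`rpush`, `lrange`, `ltrim`, `set`, `get`) so the sketch runs without a Redis server.

```python
import json
import uuid

QUEUE_KEY = "image_queue"  # hypothetical key name, not taken from the project


class InMemoryStore:
    """Hypothetical stand-in exposing the few Redis-style calls used below."""

    def __init__(self):
        self.lists = {}
        self.values = {}

    def rpush(self, key, value):
        self.lists.setdefault(key, []).append(value)

    def lrange(self, key, start, end):
        # Like Redis, `end` is inclusive; only non-negative `end` is handled here.
        return self.lists.get(key, [])[start:end + 1]

    def ltrim(self, key, start, end):
        # Only the "keep everything from `start` onward" form (end == -1) is handled.
        self.lists[key] = self.lists.get(key, [])[start:]

    def set(self, key, value):
        self.values[key] = value

    def get(self, key):
        return self.values.get(key)


def submit_image(store, image_bytes):
    """API side: enqueue an image and return the ID used to look up its result."""
    image_id = str(uuid.uuid4())
    store.rpush(QUEUE_KEY, json.dumps({"id": image_id, "image": image_bytes.hex()}))
    return image_id


def process_batch(store, predict_fn, batch_size=32):
    """Model-server side: predict on up to `batch_size` queued images."""
    raw_items = store.lrange(QUEUE_KEY, 0, batch_size - 1)
    if not raw_items:
        return 0
    items = [json.loads(raw) for raw in raw_items]
    images = [bytes.fromhex(item["image"]) for item in items]
    predictions = predict_fn(images)  # one prediction per image, in order
    for item, prediction in zip(items, predictions):
        store.set(item["id"], json.dumps(prediction))
    # Drop the processed items from the front of the queue.
    store.ltrim(QUEUE_KEY, len(items), -1)
    return len(items)


def fetch_result(store, image_id):
    """API side: return the stored prediction, or None if it is not ready yet."""
    raw = store.get(image_id)
    return None if raw is None else json.loads(raw)
```

In a real deployment the API would call `fetch_result` repeatedly until a value appears, and the model server would call `process_batch` in a loop with the Keras model's batch prediction as `predict_fn`.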

The project is inspired by this blog post.

First, install Docker and Docker Compose by following the instructions on their official websites. Once both are installed, everything is ready for deployment.

In order to start the service in development mode, execute the following command from the project's root directory:

    docker-compose up

The service should be up and running on port 8080.
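
To confirm that something is listening on port 8080 before sending requests, a small check along these lines may help. It is only a sketch: the function name `wait_for_port` and its defaults are illustrative, and a successful TCP connection shows that the port accepts connections, not that the model has finished loading.

```python
import socket
import time


def wait_for_port(host="localhost", port=8080, timeout=30.0, interval=0.5):
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # create_connection raises OSError if nothing accepts the connection.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)


if __name__ == "__main__":
    print("service reachable:", wait_for_port())
```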

In production, run the following command instead:

    docker-compose -f docker-compose.yml -f docker-compose.production.yml up -d

Once the service is up and running, you can use curl to make a prediction request:

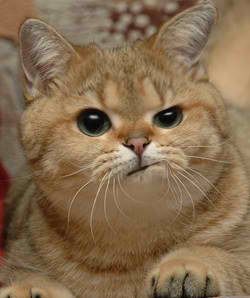

    curl -F image=@sample.jpg http://localhost:8080/api/predict

The JSON response will look something like this:

    {
      "success": true,
      "message": {
        "predictions": [
          {
            "label": "tiger_cat",
            "proba": 0.3253738582134247
          },
          {
            "label": "tabby",
            "proba": 0.315514475107193
          },
          {
            "label": "Egyptian_cat",
            "proba": 0.12767720222473145
          },
          {
            "label": "Persian_cat",
            "proba": 0.1148894876241684
          },
          {
            "label": "lynx",
            "proba": 0.019103804603219032
          }
        ],
        "predicted_at": "20190618_162447"
      }
    }
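
A client might consume a response of this shape roughly as follows. The helper name `top_prediction` is illustrative, and the handling of unsuccessful responses is an assumption: the example above only shows the success case, so this sketch simply returns `None` whenever `success` is not true.

```python
import json


def top_prediction(response_text):
    """Return (label, proba) for the most probable class, or None on failure."""
    payload = json.loads(response_text)
    # Assumption: a failed request carries a falsy or missing "success" field.
    if not payload.get("success"):
        return None
    predictions = payload["message"]["predictions"]
    if not predictions:
        return None
    # Pick the entry with the highest probability rather than relying on order.
    best = max(predictions, key=lambda p: p["proba"])
    return best["label"], best["proba"]
```

For the sample response shown earlier, this would pick the `tiger_cat` entry, since it has the largest `proba` value.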