Create characters in Unity with LLMs!

LLMUnity enables seamless integration of Large Language Models (LLMs) within the Unity engine.

It lets you create intelligent characters that your players can interact with for an immersive experience.

LLMUnity is built on top of the awesome llama.cpp and llamafile libraries.

At a glance

- 💻 Cross-platform! Supports Windows, Linux and macOS (supported versions)

- 🏠 Runs locally without internet access but also supports remote servers

- ⚡ Fast inference on CPU and GPU (NVIDIA and AMD)

- 🤗 Supports the major LLM models (supported models)

- 🔧 Easy to set up, call with a single line of code

- 💰 Free to use for both personal and commercial purposes

🧪 Tested on Unity: 2021 LTS, 2022 LTS, 2023

🚦 Upcoming Releases

How to help

- Join us at Discord and say hi!

- ⭐ Star the repo and spread the word about the project!

- Submit feature requests or bugs as issues or even submit a PR and become a collaborator!

Setup

- Open the Package Manager in Unity: Window > Package Manager

- Click the + button and select Add package from git URL

- Use the repository URL https://github.com/undreamai/LLMUnity.git and click Add

On macOS you also need to have the Xcode Command Line Tools installed:

- From inside a terminal run: xcode-select --install

How to use

For a step-by-step tutorial you can have a look at our guide:

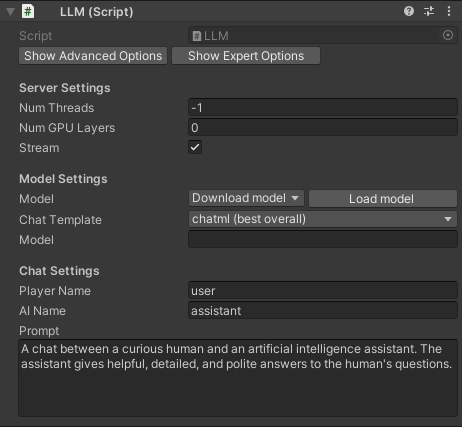

Create a GameObject for the LLM ♟️:

- Create an empty GameObject. In the GameObject Inspector click Add Component and select the LLM script (Scripts > LLM).

- Download the default model with the Download Model button (this will take a while as it is ~4GB). You can also load your own model in .gguf format with the Load model button (see Use your own model).

- Define the role of your AI in the Prompt. You can also define the name of the AI (AI Name) and the player (Player Name).

- (Optional) By default the LLM script is set up to receive the reply from the model as it is produced in real-time (recommended). If you prefer to receive the full reply in one go, you can deselect the Stream option.

- (Optional) Adjust the server or model settings to your preference (see Options).

In your script you can then use it as follows 🦄:

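```csharp
using UnityEngine;
using LLMUnity;

// MonoBehaviour so that the script can be attached to a GameObject
// and the llm field can be assigned in the Inspector
public class MyScript : MonoBehaviour
{
    public LLM llm;

    void HandleReply(string reply)
    {
        // do something with the reply from the model
        Debug.Log(reply);
    }

    void Game()
    {
        // your game function
        // ...
        string message = "Hello bot!";
        _ = llm.Chat(message, HandleReply);
        // ...
    }
}
```

You can also specify a function to call when the model reply has been completed.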

This is useful if the Stream option is selected for continuous output from the model (default behaviour):

```csharp
void ReplyCompleted()
{
    // do something when the reply from the model is complete
    Debug.Log("The AI replied");
}

void Game()
{
    // your game function
    // ...
    string message = "Hello bot!";
    _ = llm.Chat(message, HandleReply, ReplyCompleted);
    // ...
}
```

- Finally, in the Inspector of the GameObject of your script, select the LLM GameObject created above as the llm property.

That's all ✨!

You can also:

Choose whether to add the message to the chat/prompt history

The last argument of the Chat function is a boolean that specifies whether to add the message to the history (default: true):

```csharp
void Game()
{
    // your game function
    // ...
    string message = "Hello bot!";
    // the last argument (false) keeps this message out of the history
    _ = llm.Chat(message, HandleReply, ReplyCompleted, false);
    // ...
}
```

Wait for the reply before proceeding to the next lines of code

For this you can use the async/await functionality:

```csharp
async void Game()
{
    // your game function
    // ...
    string message = "Hello bot!";
    // execution continues here only after the full reply has been received
    string reply = await llm.Chat(message, HandleReply, ReplyCompleted);
    Debug.Log(reply);
    // ...
}
```

Process the prompt at the beginning of your app for faster initial processing time

```csharp
void WarmupCompleted()
{
    // do something when the warmup is complete
    Debug.Log("The AI is warm");
}

void Game()
{
    // your game function
    // ...
    _ = llm.Warmup(WarmupCompleted);
    // ...
}
```

Add an LLM / LLMClient component dynamically

```csharp
using UnityEngine;
using LLMUnity;

public class MyScript : MonoBehaviour
{
    LLM llm;
    LLMClient llmclient;

    async void Start()
    {
        // Add and set up an LLM object
        gameObject.SetActive(false);
        llm = gameObject.AddComponent<LLM>();
        await llm.SetModel("mistral-7b-instruct-v0.2.Q4_K_M.gguf");
        llm.prompt = "A chat between a curious human and an artificial intelligence assistant.";
        gameObject.SetActive(true);

        // or an LLMClient object
        gameObject.SetActive(false);
        llmclient = gameObject.AddComponent<LLMClient>();
        llmclient.prompt = "A chat between a curious human and an artificial intelligence assistant.";
        gameObject.SetActive(true);
    }
}
```

Examples

The Samples~ folder contains several examples of interaction 🤖:

- SimpleInteraction: Demonstrates simple interaction between a player and an AI

- ServerClient: Demonstrates simple interaction between a player and multiple AIs using an LLM and an LLMClient

- ChatBot: Demonstrates interaction between a player and an AI with a UI similar to a messaging app (see image below)

To install a sample:

- Open the Package Manager: Window > Package Manager

- Select the LLMUnity Package. From the Samples Tab, click Import next to the sample you want to install.

The samples can be run with the Scene.unity scene they contain inside their folder.

In the scene, select the LLM GameObject and click the Download Model button to download the default model.

You can also load your own model in .gguf format with the Load model button (see Use your own model).

Save the scene, run and enjoy!

Use your own model

LLMUnity uses the Mistral 7B Instruct model by default, quantised with the Q4 method (link).

Alternative models can be downloaded from HuggingFace.

The required model format is .gguf as defined by llama.cpp.

The easiest way is to download gguf models directly from TheBloke, who has converted an astonishing number of models 🌈!

Otherwise, other model formats can be converted to gguf with the convert.py script of llama.cpp as described here.

❕ Before using any model make sure you check its license ❕

Multiple AI / Remote server

LLMUnity lets you run multiple AI characters efficiently.

Each character can be implemented with a different client with its own prompt (and other parameters), and all of the clients send their requests to a single server.

This is essential as multiple server instances would require additional compute resources.

In addition to the LLM server functionality, we define the LLMClient class that handles the client functionality.

The LLMClient contains a subset of the options of the LLM class described in Options.

To use multiple instances, you can define one LLM GameObject (as described in How to use) and then multiple LLMClient objects.

See the ServerClient sample for a server-client example.

The LLMClient can be configured to connect to a remote instance by providing the IP address of the server in the host property.

The server can be either an LLMUnity server or a standard llama.cpp server.
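The setup described in this section could be sketched as follows, reusing the AddComponent pattern from the dynamic example in How to use. One LLM component acts as the server (and as a character itself) and one LLMClient acts as a second character. The class name, prompts, messages and IP address are made up for illustration, and the sketch assumes that Chat can be called on an LLMClient in the same way as on an LLM and that host accepts a string.

```csharp
using UnityEngine;
using LLMUnity;

// Hypothetical example class, not part of LLMUnity
public class TavernCharacters : MonoBehaviour
{
    LLM innkeeper;         // runs the server and is itself a character
    LLMClient blacksmith;  // second character, sends its requests to the same server

    async void Start()
    {
        // Add the components while the GameObject is inactive, as in the dynamic example
        gameObject.SetActive(false);

        innkeeper = gameObject.AddComponent<LLM>();
        await innkeeper.SetModel("mistral-7b-instruct-v0.2.Q4_K_M.gguf");
        innkeeper.prompt = "You are a cheerful innkeeper.";   // illustrative prompt

        blacksmith = gameObject.AddComponent<LLMClient>();
        blacksmith.prompt = "You are a grumpy blacksmith.";   // illustrative prompt

        // To use a remote server instead, point the client at it (example address):
        // blacksmith.host = "192.168.1.10";

        gameObject.SetActive(true);
    }

    // Call this from your game logic once the scene is running
    public void AskBoth()
    {
        // Each character answers according to its own prompt
        _ = innkeeper.Chat("Any rooms free tonight?", reply => Debug.Log("Innkeeper: " + reply));
        _ = blacksmith.Chat("Can you fix my sword?", reply => Debug.Log("Blacksmith: " + reply));
    }
}
```

Both characters share one model in memory, which is the point of the server-client split: adding another character costs one more LLMClient rather than one more server.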

Options

- Show/Hide Advanced Options: toggle to show/hide the advanced options below

- Show/Hide Expert Options: toggle to show/hide the expert options below

💻 Server Settings

- Num Threads: number of threads to use (default: -1 = all)

- Num GPU Layers: number of model layers to offload to the GPU. If set to 0 the GPU is not used. Use a large number, e.g. >30, to utilise the GPU as much as possible. If the user's GPU is not supported, the LLM will fall back to the CPU

- Stream: select to receive the reply from the model as it is produced (recommended!). If it is not selected, the full reply from the model is received in one go

Advanced options

- Parallel Prompts: number of prompts that can happen in parallel (default: -1 = number of LLM/LLMClient objects)

- Debug: select to log the output of the model in the Unity Editor

- Port: port to run the server on

🤗 Model Settings

- Download model: click to download the default model (Mistral 7B Instruct)

- Load model: click to load your own model in .gguf format

- Model: the model being used (inside the Assets/StreamingAssets folder)

Advanced options

- Load lora: click to load a LORA model in .bin format

- Load grammar: click to load a grammar in .gbnf format

- Lora: the LORA model being used (inside the Assets/StreamingAssets folder)

- Grammar: the grammar being used (inside the Assets/StreamingAssets folder)

- Context Size: size of the prompt context (0 = context size of the model)

- Batch Size: batch size for prompt processing (default: 512)

- Seed: seed for reproducibility. For random results every time select -1

- Cache Prompt: save the ongoing prompt from the chat (default: true). This saves the prompt as it is being created by the chat, to avoid reprocessing the entire prompt every time.

- Num Predict: number of tokens to predict (default: 256, -1 = infinity, -2 = until context filled). This is the maximum number of tokens the model will predict. When Num Predict is reached the model stops generating, so words or sentences might be cut off if the value is too low.

- Temperature: LLM temperature, lower values give more deterministic answers. The temperature setting adjusts how random the generated responses are. Turning it up makes the generated choices more varied and unpredictable. Turning it down makes the generated responses more predictable and focused on the most likely options.

- Top K: top-k sampling (default: 40, 0 = disabled). Top-k sampling restricts the candidates at each generation step to the k most probable tokens. This value can help fine-tune the output and make it adhere to specific patterns or constraints.

- Top P: top-p sampling (default: 0.9, 1.0 = disabled). Top-p sampling restricts the candidates to the most probable tokens whose cumulative probability reaches the threshold p. Lowering this value makes the output less diverse, raising it makes the output more diverse.

- Min P: minimum probability for a token to be used (default: 0.05). The probability is defined relative to the probability of the most likely token.

- Repeat Penalty: controls the repetition of token sequences in the generated text (default: 1.1). The penalty is applied to repeated tokens.

- Presence Penalty: repeated token presence penalty (default: 0.0, 0.0 = disabled). Positive values penalize new tokens based on whether they appear in the text so far, increasing the model's likelihood to talk about new topics.

- Frequency Penalty: repeated token frequency penalty (default: 0.0, 0.0 = disabled). Positive values penalize new tokens based on their existing frequency in the text so far, decreasing the model's likelihood to repeat the same line verbatim.
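To make the interplay of Temperature, Top K, Top P and Min P more concrete, here is a small self-contained sketch in plain C# (no Unity or LLMUnity types). It is not the code that llama.cpp runs. It is a simplified illustration that applies the filters in one fixed order to a made-up four-token distribution, and the class and method names are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class SamplingSketch
{
    // Turns raw scores (logits) into probabilities.
    // A lower temperature sharpens the distribution, a higher one flattens it.
    public static double[] Softmax(double[] logits, double temperature)
    {
        double max = logits.Max();
        double[] exps = logits.Select(l => Math.Exp((l - max) / temperature)).ToArray();
        double sum = exps.Sum();
        return exps.Select(e => e / sum).ToArray();
    }

    // Returns the indices of the tokens that survive top-k, top-p and min-p
    // filtering, most probable first.
    public static List<int> Filter(double[] probs, int topK, double topP, double minP)
    {
        // Sort token indices by descending probability
        List<int> order = Enumerable.Range(0, probs.Length)
            .OrderByDescending(i => probs[i]).ToList();

        // Top K: keep only the k most probable tokens (0 = disabled)
        if (topK > 0) order = order.Take(topK).ToList();

        // Top P: keep the shortest prefix whose cumulative probability reaches p
        var kept = new List<int>();
        double cumulative = 0;
        foreach (int i in order)
        {
            kept.Add(i);
            cumulative += probs[i];
            if (cumulative >= topP) break;
        }

        // Min P: drop tokens less probable than minP times the most likely token
        double threshold = minP * probs[kept[0]];
        return kept.Where(i => probs[i] >= threshold).ToList();
    }

    public static void Main()
    {
        double[] logits = { 2.0, 1.0, 0.5, -1.0 };   // made-up scores for 4 tokens
        double[] probs = Softmax(logits, 0.8);
        List<int> candidates = Filter(probs, topK: 3, topP: 0.9, minP: 0.05);
        Console.WriteLine(string.Join(", ", candidates));   // prints "0, 1, 2"
    }
}
```

In this example Top K already removes the least likely token, and the remaining three are needed to reach a cumulative probability of 0.9, so all three stay candidates for the random draw.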

Expert options

- tfs_z: Enable tail free sampling with parameter z (default: 1.0, 1.0 = disabled).

- typical_p: Enable locally typical sampling with parameter p (default: 1.0, 1.0 = disabled).

- repeat_last_n: Last n tokens to consider for penalizing repetition (default: 64, 0 = disabled, -1 = ctx-size).

- penalize_nl: Penalize newline tokens when applying the repeat penalty (default: true).

- penalty_prompt: Prompt for the purpose of the penalty evaluation. Can be either null, a string or an array of numbers representing tokens (default: null = use original prompt).

- mirostat: Enable Mirostat sampling, controlling perplexity during text generation (default: 0, 0 = disabled, 1 = Mirostat, 2 = Mirostat 2.0).

- mirostat_tau: Set the Mirostat target entropy, parameter tau (default: 5.0).

- mirostat_eta: Set the Mirostat learning rate, parameter eta (default: 0.1).

- n_probs: If greater than 0, the response also contains the probabilities of top N tokens for each generated token (default: 0).

- ignore_eos: Ignore end of stream token and continue generating (default: false).
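The repetition-related options (Repeat Penalty, Presence Penalty, Frequency Penalty and repeat_last_n) can be pictured as adjustments to the token scores before sampling. The sketch below is a simplified plain C# illustration of that idea, modelled on the commonly used formulation of these penalties. That it matches the backend in every detail is an assumption, and all names in it are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class PenaltySketch
{
    // Adjusts the token scores in place, based on how often each token id
    // occurs among the most recently generated tokens.
    public static void Apply(double[] logits, IList<int> history, int repeatLastN,
                             double repeatPenalty, double presencePenalty, double frequencyPenalty)
    {
        // repeat_last_n: 0 = disabled, -1 = consider the whole history
        if (repeatLastN == 0) return;
        int window = repeatLastN < 0 ? history.Count : Math.Min(repeatLastN, history.Count);

        // Count the occurrences of each token id inside the window
        var counts = new Dictionary<int, int>();
        foreach (int token in history.Skip(history.Count - window))
            counts[token] = counts.TryGetValue(token, out int c) ? c + 1 : 1;

        foreach (KeyValuePair<int, int> entry in counts)
        {
            int token = entry.Key;
            int count = entry.Value;

            // Repeat penalty: make an already used token less likely (1.0 = no change)
            logits[token] = logits[token] > 0
                ? logits[token] / repeatPenalty
                : logits[token] * repeatPenalty;

            // Frequency penalty grows with the count, presence penalty is applied once
            logits[token] -= count * frequencyPenalty + presencePenalty;
        }
    }

    public static void Main()
    {
        double[] logits = { 2.0, 1.0, -1.0 };       // made-up scores for token ids 0..2
        var history = new List<int> { 0, 0, 2 };     // token 0 was generated twice, token 2 once
        Apply(logits, history, repeatLastN: 64, repeatPenalty: 1.1,
              presencePenalty: 0.0, frequencyPenalty: 0.5);
        // Token 0 loses the most, token 1 never appeared and keeps its score
        Console.WriteLine(string.Join(" ", logits));
    }
}
```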

🗨️ Chat Settings

- Player Name: the name of the player

- AI Name: the name of the AI

- Prompt: a description of the AI role

Games using LLMUnity

License

LLMUnity is licensed under MIT (LICENSE.md) and uses third-party software with MIT and Apache licenses (Third Party Notices.md).