Convex Hull Escape Perturbation at Embedding Space and Spherical Bins Coloring for 3D Face De-identification

    .
    ├──/cpp_codes (to create shape by using perturbed alphas)
    └──/py_codes
        ├──/alphas (to create and perturb alphas by using CHEP)
        ├──/demoCode (to create shape by using alphas)
        ├──/projection_2D (to do SBC for color reinstatement, 2D projection, etc.)
        └──/evaluation (to do identity classification)

- Set up Extreme 3D Reconstruction: download the Bump-CNN model, BFM model, dlib face prediction model, etc., and put them in the respective folders as instructed in the readme file of Extreme 3D Reconstruction
- Use the `FaceServices2.cpp` of this repo to replace the one in `/extreme_3d_faces/modules/PoseExpr/src/FaceServices2.cpp`. The modified code (lines 766 - 812, and lines 53 - 83) reads the perturbed alpha files (which are generated by the Python codes) and uses them in place of the original alpha. You may want to modify `FaceServices2.cpp` further if the alpha files are located somewhere else.
- Compile the C++ codes as instructed by Extreme 3D Reconstruction
- Place the `/alphas` directory (and the files inside) as the `/demoCode/alphas` directory in the Docker container. Note that the files inside `/alphas/FEI` are in fact the output files generated by `/alphas/create_alphas_from_database.py` and `/alphas/perturbation.py`
- Place the files inside `/demoCode` in `/demoCode` in the Docker container

At Docker container

- Download the FEI images and place the 4

originalimages_partXfolders inside/shared/input/FEI_Face_Database - Run

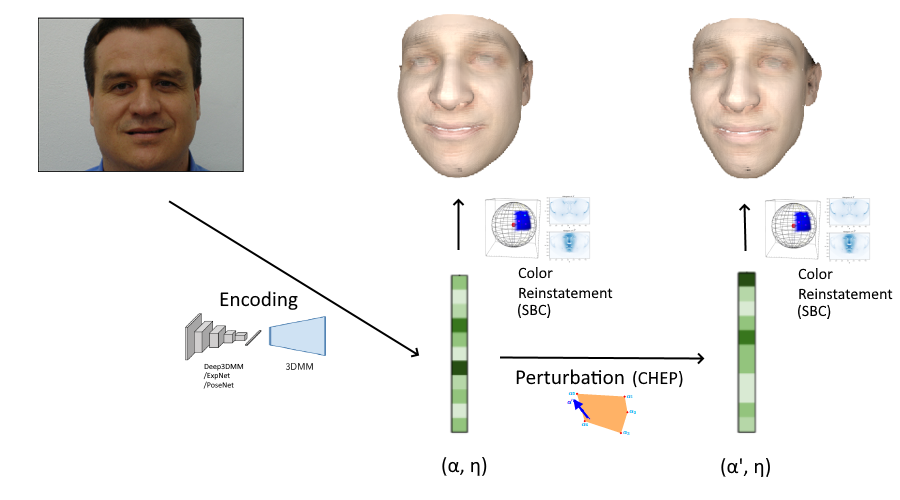

/alphas/create_alphas_from_database.pyto embed FEI images into non-perturbed alphas and stored in the.npyfiles - Run

/alphas/perturbation.pyto generate the perturbed alphas and the.alphafiles - Run the

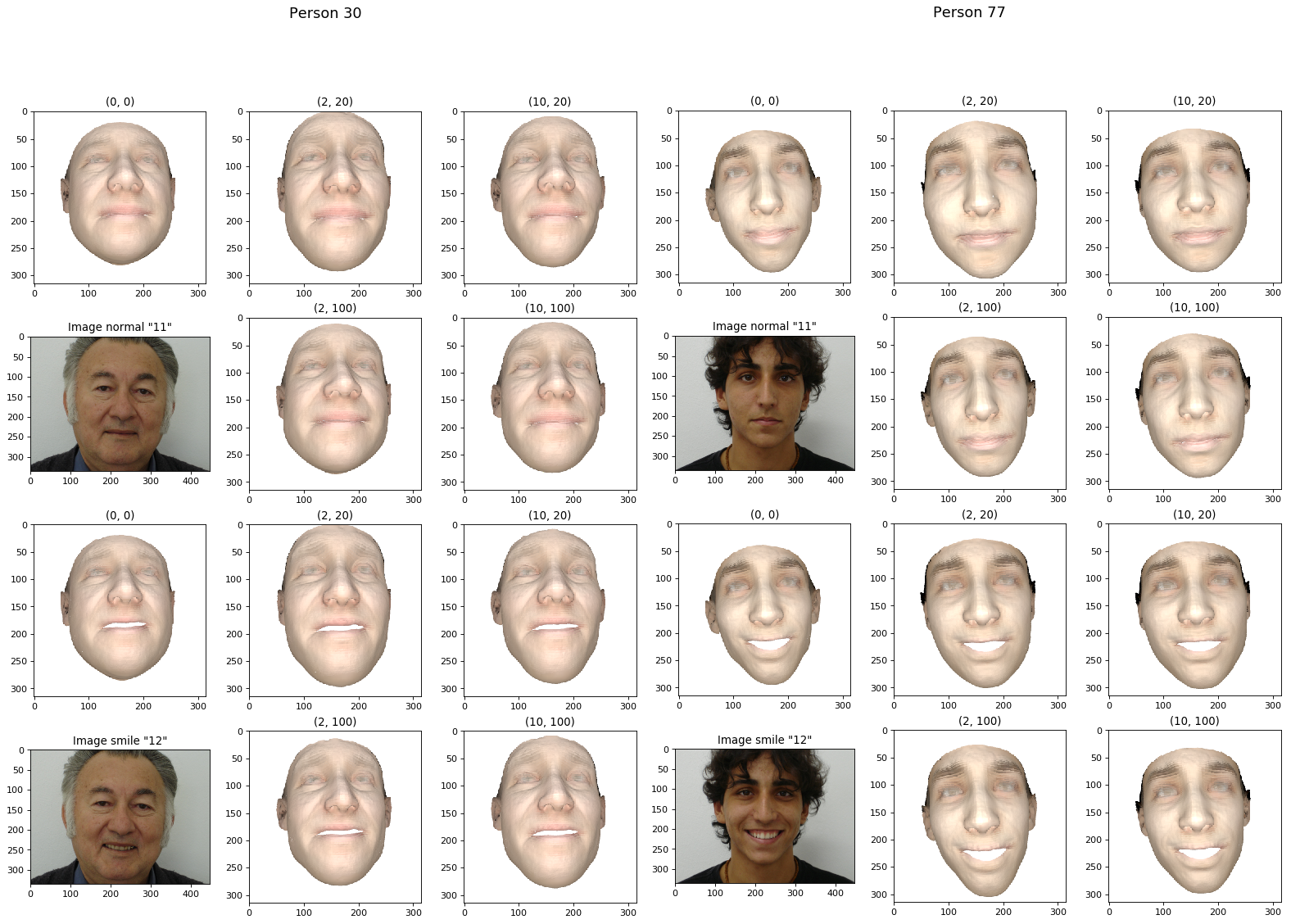

/demoCode/testBatchModel.py(which will call the C++ code) to generate the perturbed 3D faces with wrinkles (i.e..plyfiles) using 5 different perturbation approach. e.g.> python testBatchModel.py testImages_180_normal.txt /shared/output/output_180_normal_PB/pb2_pivot20. - To get the non-perturbed face, use the original

FaceServices2.cppof Extreme 3D Reconstruction (i.e. not the modified one), re-compile the C++ code, and then run/demoCode/testBatchModel.pyagain.
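Since the batch step is repeated once per perturbation output folder, a small helper can assemble the command lines. The following is a minimal sketch, not part of this repo: `build_batch_commands` is a hypothetical name, and only the run folder `pb2_pivot20` comes from the example above, so the other run names have to be filled in by hand.

```python
import shlex
from pathlib import PurePosixPath


def build_batch_commands(list_file, output_root, run_names):
    """Return one argv list per run folder under output_root.

    Hypothetical helper: it only mirrors the command shape shown in the
    README example, `python testBatchModel.py <list> <output_dir>`.
    """
    root = PurePosixPath(output_root)
    return [
        ["python", "testBatchModel.py", list_file, str(root / name)]
        for name in run_names
    ]


if __name__ == "__main__":
    # Only "pb2_pivot20" appears in the README; add your own run names here.
    runs = ["pb2_pivot20"]
    commands = build_batch_commands(
        "testImages_180_normal.txt",
        "/shared/output/output_180_normal_PB",
        runs,
    )
    for argv in commands:
        # Print instead of executing; inside the container the same argv
        # could be passed to subprocess.run(argv, check=True).
        print(shlex.join(argv))
```

Printing the commands first makes it easy to check the output folders before starting a long batch run.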

Copy files from Docker container to host machine

- Copy the `.ply` output from the Docker container to `your_path/output/output_180_normal_initial`, `your_path/output/output_180_normal_prePB` and `your_path/output/output_180_normal_PB/pbX_pivotX` (4 folders) on your host machine
- Copy `/shared/input/FEI_Face_Database` from the Docker container to `your_path/input/FEI_Face_Database`
- Consider sharing a folder between the Docker container and the host machine so that the copying above is not required
- Download `facenet_keras.h5` and `facenet_keras_weights.h5` from FaceNet's folder and put them inside `your_path/output/facenet` on the host machine
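Before running the host-side scripts, it can help to confirm that the layout described above is in place. The sketch below is an illustration only: `missing_paths` and `EXPECTED` are hypothetical names, the relative paths are taken from the bullets above, and the individual `pbX_pivotX` folders are not checked because their names depend on your runs.

```python
from pathlib import Path

# Paths relative to your_path, as listed in the copy/download steps.
EXPECTED = [
    "output/output_180_normal_initial",
    "output/output_180_normal_prePB",
    "output/output_180_normal_PB",
    "input/FEI_Face_Database",
    "output/facenet/facenet_keras.h5",
    "output/facenet/facenet_keras_weights.h5",
]


def missing_paths(base):
    """Return the expected relative paths that do not exist under base."""
    base = Path(base)
    return [rel for rel in EXPECTED if not (base / rel).exists()]


if __name__ == "__main__":
    # "your_path" is the placeholder used in the README; replace it.
    for rel in missing_paths("your_path"):
        print("missing:", rel)
```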

At host

- Run `/projection_2D/list_generation.py` to generate the list `inputList_180_normal_smile.txt`
- Run `/projection_2D/colored_2D.py` to reinstate color and project the 3D faces onto 2D images as `.png` files
- Run `/projection_2D/visualize_2D_images.py` to review the resulting 2D images of the selected individuals
- If needed, use `random_rot_vector()` in `/projection_2D/rotate_resize_3D.py` to generate a new list of random rotations, i.e. `rotation.npy`
- Run `/evaluation/embed_2d.py` to embed the 2D images as FaceNet embeddings, i.e. a single `.npz` file for each directory
- Move the `.npz` files to the directory `/evaluation/embeddings_FaceNet`. Some previous output files are already there; rename your files in a similar format
- Run `/evaluation/classification.py` to get the final result
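The move-and-rename step before classification can be scripted. This is a minimal sketch under two assumptions that the README does not confirm: that each image directory ends up containing its single `.npz` file, and that naming the file after its directory is acceptable. `collect_npz` is a hypothetical helper; adjust the target names to match the format of the files already in `/evaluation/embeddings_FaceNet`.

```python
import shutil
from pathlib import Path


def collect_npz(source_dirs, target_dir):
    """Move the single .npz file of each source directory into target_dir.

    Assumption: the moved file is renamed to "<directory name>.npz".
    Change this to match the existing files in embeddings_FaceNet.
    """
    target = Path(target_dir)
    target.mkdir(parents=True, exist_ok=True)
    moved = []
    for directory in map(Path, source_dirs):
        files = sorted(directory.glob("*.npz"))
        if len(files) != 1:
            raise ValueError(
                f"expected exactly one .npz in {directory}, found {len(files)}"
            )
        destination = target / f"{directory.name}.npz"
        shutil.move(str(files[0]), str(destination))
        moved.append(destination)
    return moved
```

Raising an error when a directory holds zero or several `.npz` files avoids silently classifying on a stale or incomplete embedding set.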