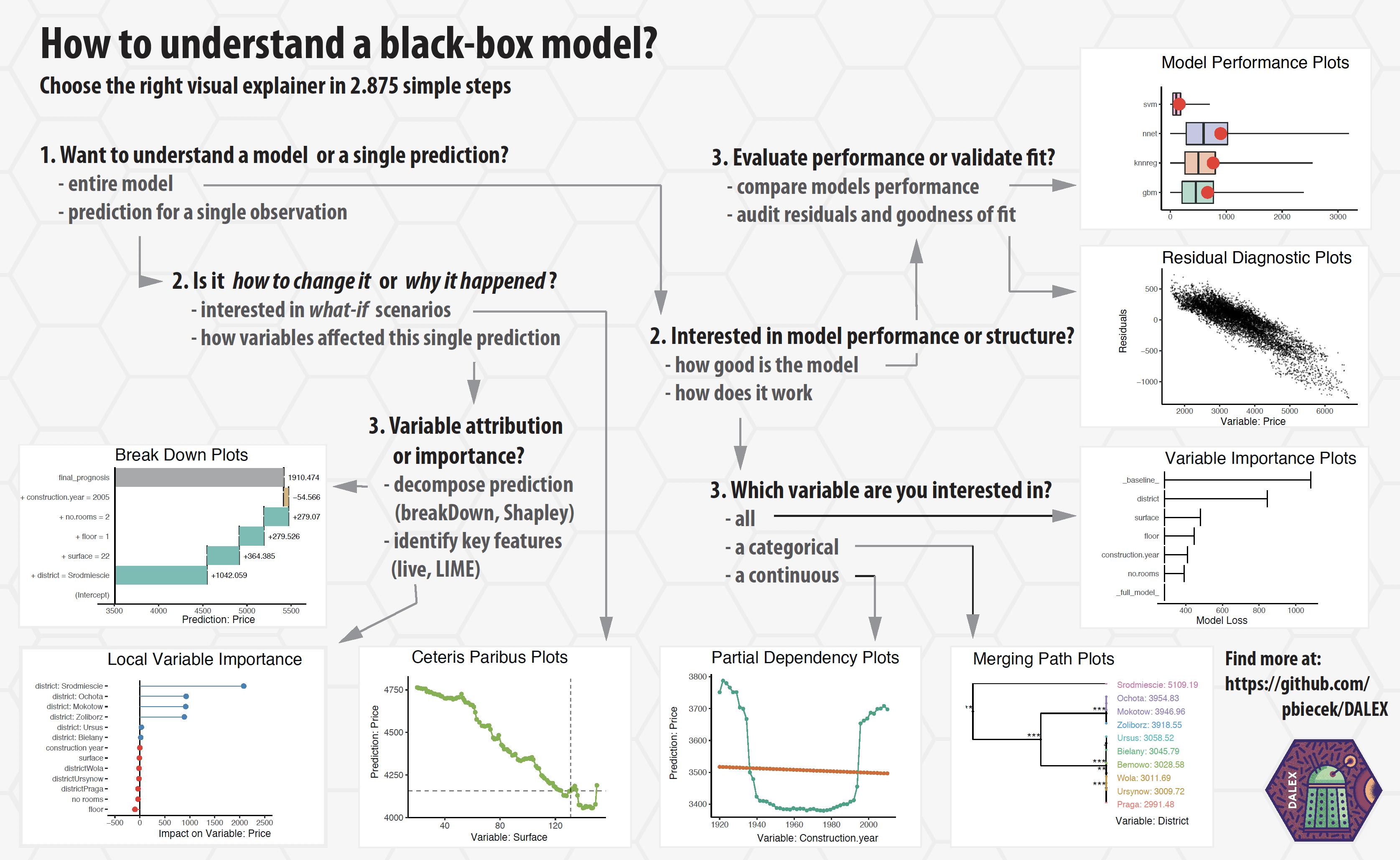

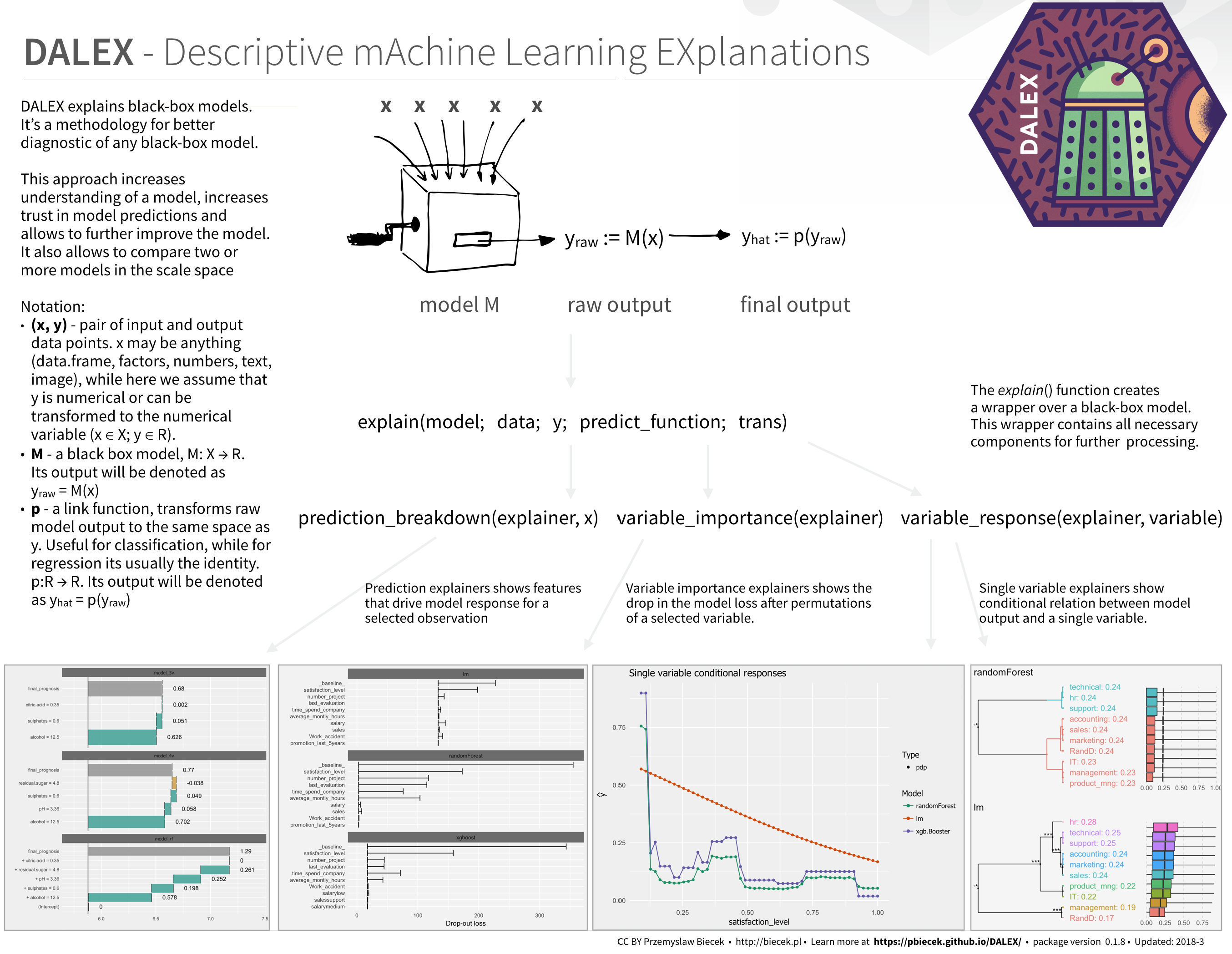

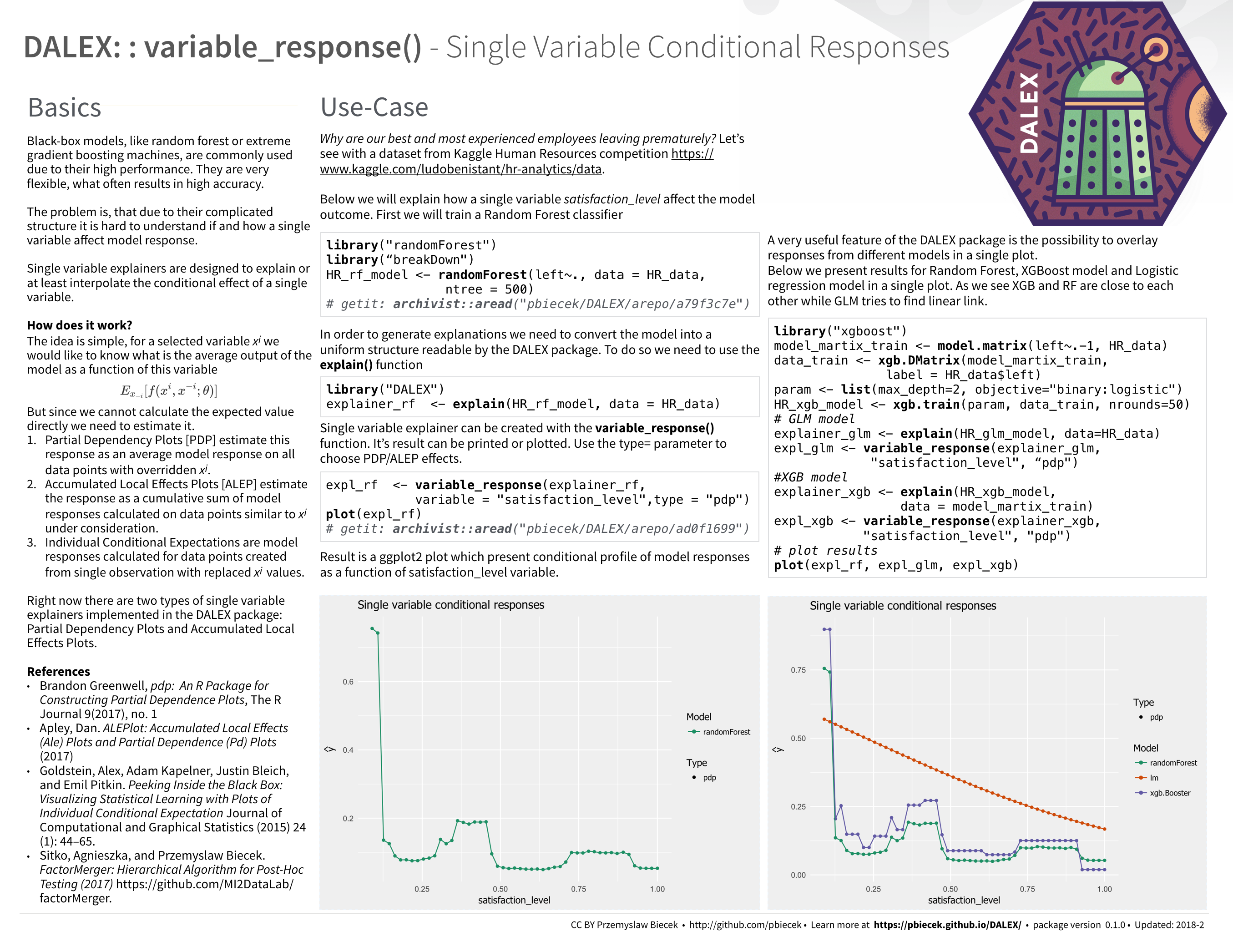

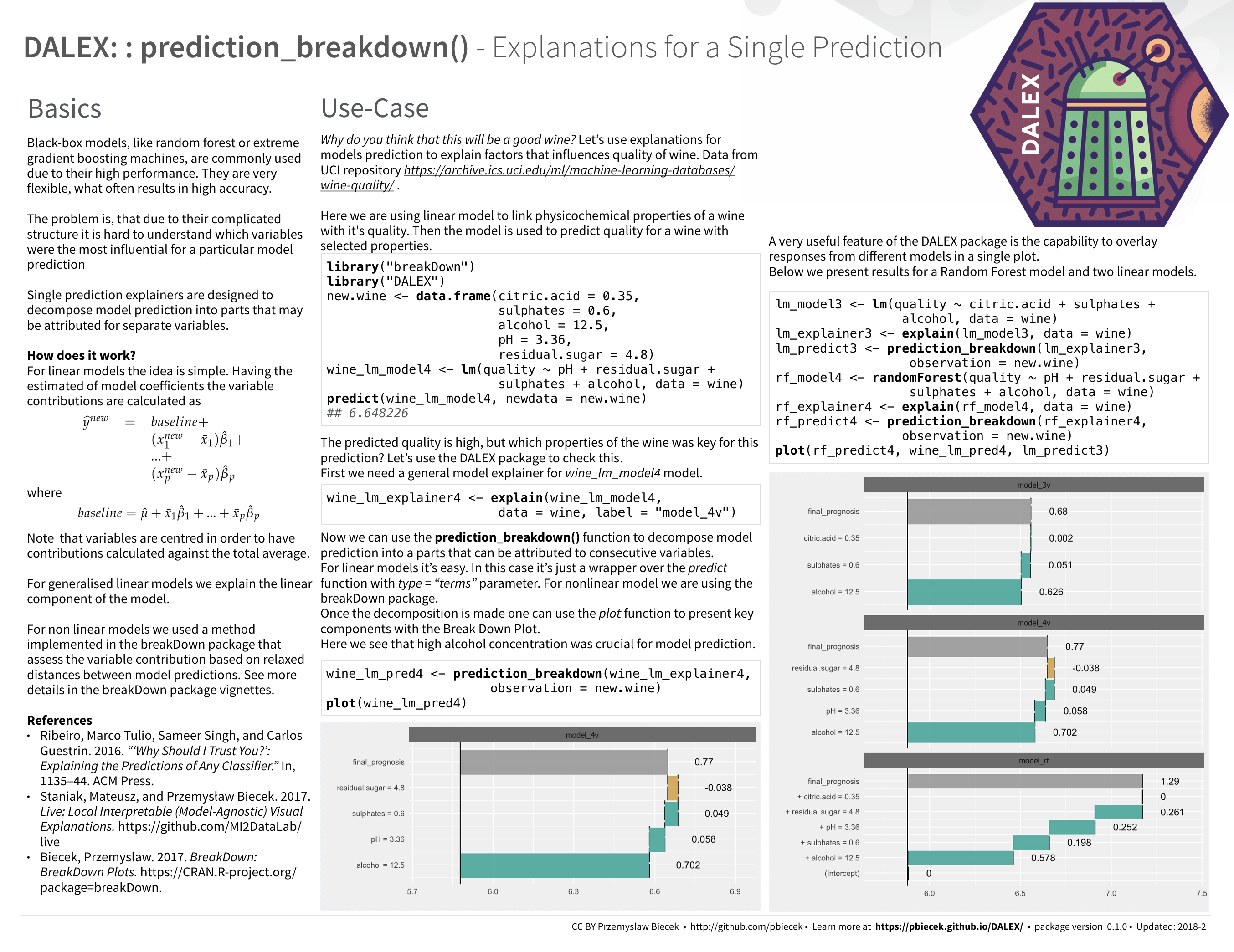

Machine learning models are widely used in classification and regression tasks. Thanks to increasing computational power and the availability of new data sources and new methods, ML models are becoming more and more complex. Models created with techniques like boosting, bagging, or neural networks are true black boxes: it is hard to trace the link between input variables and model outcomes. They are used because of their high performance, but lack of interpretability is one of their main weaknesses.

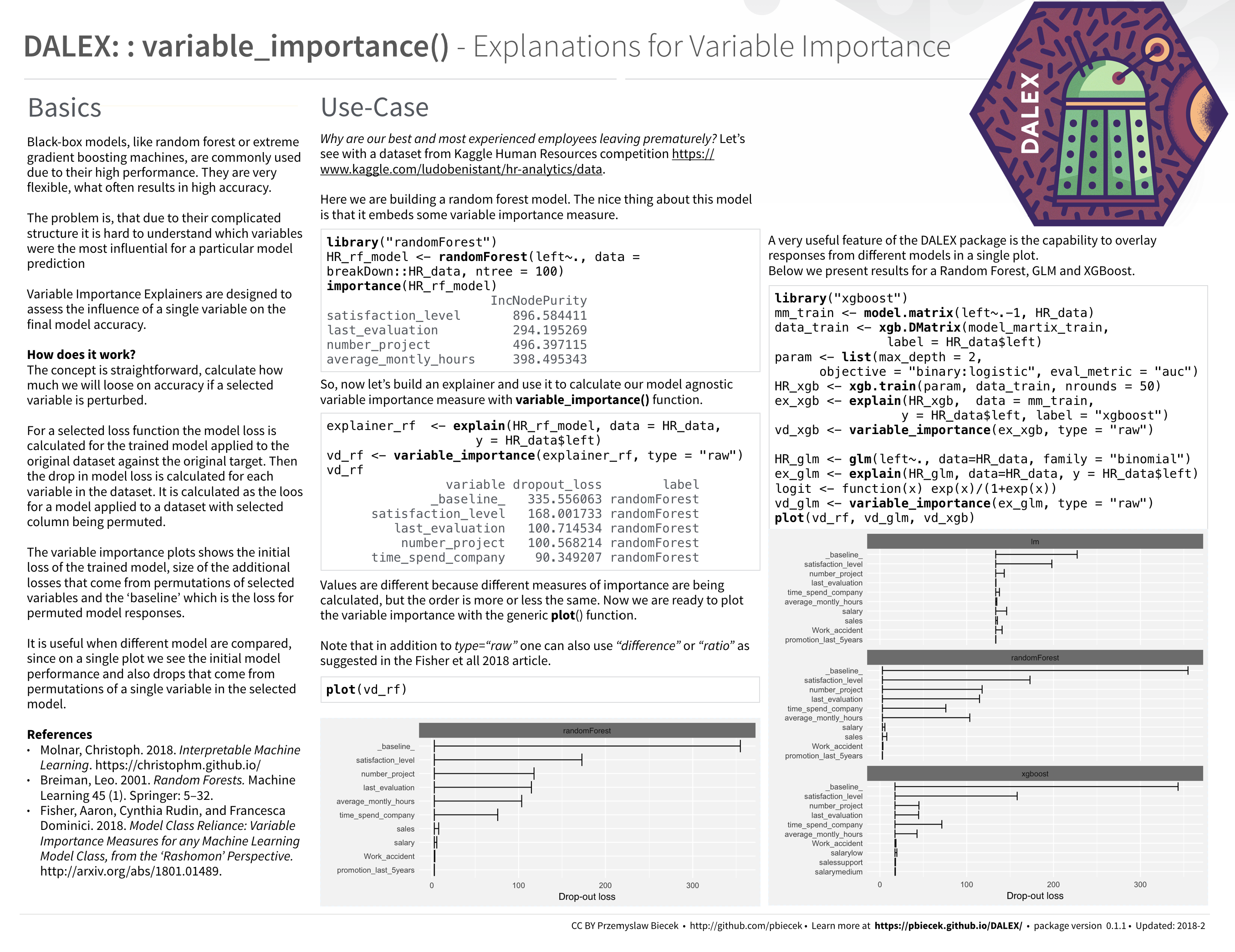

In many applications we need to know, understand, or prove how input variables are used in the model and what impact they have on the final model prediction. DALEX is a set of tools that help to understand how complex models work.

Find out more about DALEX in this Gentle introduction to DALEX with examples.

- How to use DALEX with caret

- How to use DALEX with mlr

- How to use DALEX with H2O

- How to use DALEX with xgboost package

- How to use DALEX for teaching. Part 1

- How to use DALEX for teaching. Part 2

- breakDown vs lime vs shapleyR

- Talk about DALEX at Complexity Institute / NTU February 2018

- Talk about DALEX at SER / WTU April 2018

- Talk about DALEX at STWUR May 2018 (in Polish)

From CRAN

```r
install.packages("DALEX")
```

or from GitHub

```r
# the devtools package is required for install_github()

# dependencies
devtools::install_github("MI2DataLab/factorMerger")
devtools::install_github("pbiecek/breakDown")

# DALEX package
devtools::install_github("pbiecek/DALEX")
```
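With the package installed, a first session might look roughly like the sketch below. It is only an illustration, not the canonical workflow: it assumes the `apartments` data set that ships with DALEX and a plain linear model chosen purely for brevity, and the exact functions available may differ between DALEX versions. The object names `model_lm`, `explainer_lm` and `mp_lm` are hypothetical.

```r
library(DALEX)

# Fit a simple linear model on the apartments data shipped with DALEX
# (assumption: any model with a predict() method could be used instead)
model_lm <- lm(m2.price ~ surface + floor + no.rooms + construction.year,
               data = apartments)

# Wrap the model, the data and the observed response in an explainer object
explainer_lm <- explain(model_lm,
                        data  = apartments,
                        y     = apartments$m2.price,
                        label = "lm")

# Summarise model performance based on the residuals
mp_lm <- model_performance(explainer_lm)
print(mp_lm)
```

The explainer is a uniform wrapper around the model, so the same calls should work unchanged when `model_lm` is replaced by a more complex model, such as a random forest or a boosted ensemble.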

Work on this package was financially supported by the NCN Opus grant 2016/21/B/ST6/02176.