This repo is a benchmark for ML experiment tracking tools. We build several ML projects from scratch and then integrate each one with different experiment tracking tools. The goal is to provide a detailed comparison of these tools so that users can choose the best one for their projects.

-

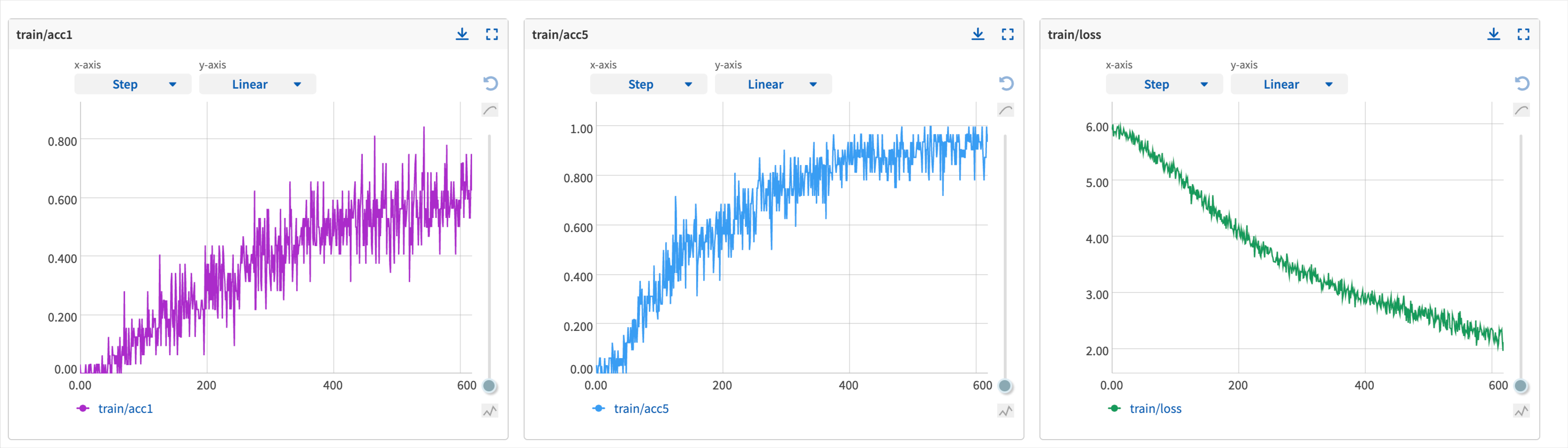

Training and Inference are supported.

-

Experiment management with

- hydra

- tensorboard

- neptune.ai

- wandb

- mlflow

-

Various Frameworks and Models

- PyTorch Vision for Scene Classification

- TIMM for Image Classification

- HuggingFace for NLP

-

Model Zoo with pretrained models
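To illustrate what integrating a project with different tracking tools can look like, here is a minimal sketch using only the standard library. The `Tracker` interface, the two backends, and the `train` loop are hypothetical names made up for this example and are not taken from this repo. In a real integration, a backend would wrap calls such as `SummaryWriter.add_scalar` (tensorboard), `wandb.log`, or `mlflow.log_metric` instead of writing to a list or a local file.

```python
import json
from abc import ABC, abstractmethod
from pathlib import Path


class Tracker(ABC):
    """Hypothetical common interface (an assumption, not part of this repo)."""

    @abstractmethod
    def log_metric(self, name, value, step):
        ...


class InMemoryTracker(Tracker):
    """Keeps every metric in a list, which is handy for quick checks."""

    def __init__(self):
        self.records = []

    def log_metric(self, name, value, step):
        self.records.append({"name": name, "value": value, "step": step})


class JsonlTracker(Tracker):
    """Appends one JSON object per metric to a local file."""

    def __init__(self, path):
        self.path = Path(path)

    def log_metric(self, name, value, step):
        record = {"name": name, "value": value, "step": step}
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(record) + "\n")


def train(tracker, epochs=3):
    """Stand-in training loop; the loss values are invented for illustration."""
    for epoch in range(epochs):
        fake_loss = 1.0 / (epoch + 1)
        tracker.log_metric("train/loss", fake_loss, step=epoch)
```

Because the training loop only depends on `log_metric`, swapping one tracking tool for another means passing a different `Tracker` object, for example `train(JsonlTracker("metrics.jsonl"))`.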

# Download the code

git clone git@github.com:MLSysOps/ml_exp_tracking_benchmark.git

cd ml_exp_tracking_benchmark

# Create a conda environment

conda create -n ml_track_benchmark python=3.8

conda activate ml_track_benchmark

# Install dependencies

pip install -r requirements.txt

Please download the data from [Place2 Data]

# 1. Download and unzip the data

sh download_data_pytorch.sh

# 2. Train a model

export PYTHONPATH=$PYTHONPATH:$(pwd)

python benchmark/main_tensorboard.py

Please refer to the [Model Zoo]
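The `export PYTHONPATH=$PYTHONPATH:$(pwd)` step above adds the repo root to Python's module search path, presumably so that the training script can import modules relative to that root. As a rough in-process alternative, the sketch below appends a directory to `sys.path`. The helper name `add_to_sys_path` is hypothetical, and the resulting position in the search order is not identical to what `PYTHONPATH` gives.

```python
import os
import sys


def add_to_sys_path(directory=None):
    """Append `directory` (default: current working directory) to sys.path.

    Hypothetical helper for illustration; returns the absolute path added.
    """
    root = os.path.abspath(directory or os.getcwd())
    if root not in sys.path:  # avoid duplicate entries on repeated calls
        sys.path.append(root)
    return root
```

Calling `add_to_sys_path()` from the repo root before importing project modules has a similar effect to the `export` line, but only for the current Python process.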

The dataset and basic code come from [MIT Place365].

Thanks for the great work!