This is the official repository of the paper SimDistill: Simulated Multi-modal Distillation for BEV 3D Object Detection.

News | Abstract | Method | Results | Preparation | Code | Statement

- (2023/3/29) SimDistill is accepted by AAAI 2024!

- (2023/3/29) BEVSimDet is released on arXiv.

Other applications of ViTAE Transformer include: image classification | object detection | semantic segmentation | pose estimation | remote sensing | image matting | scene text spotting

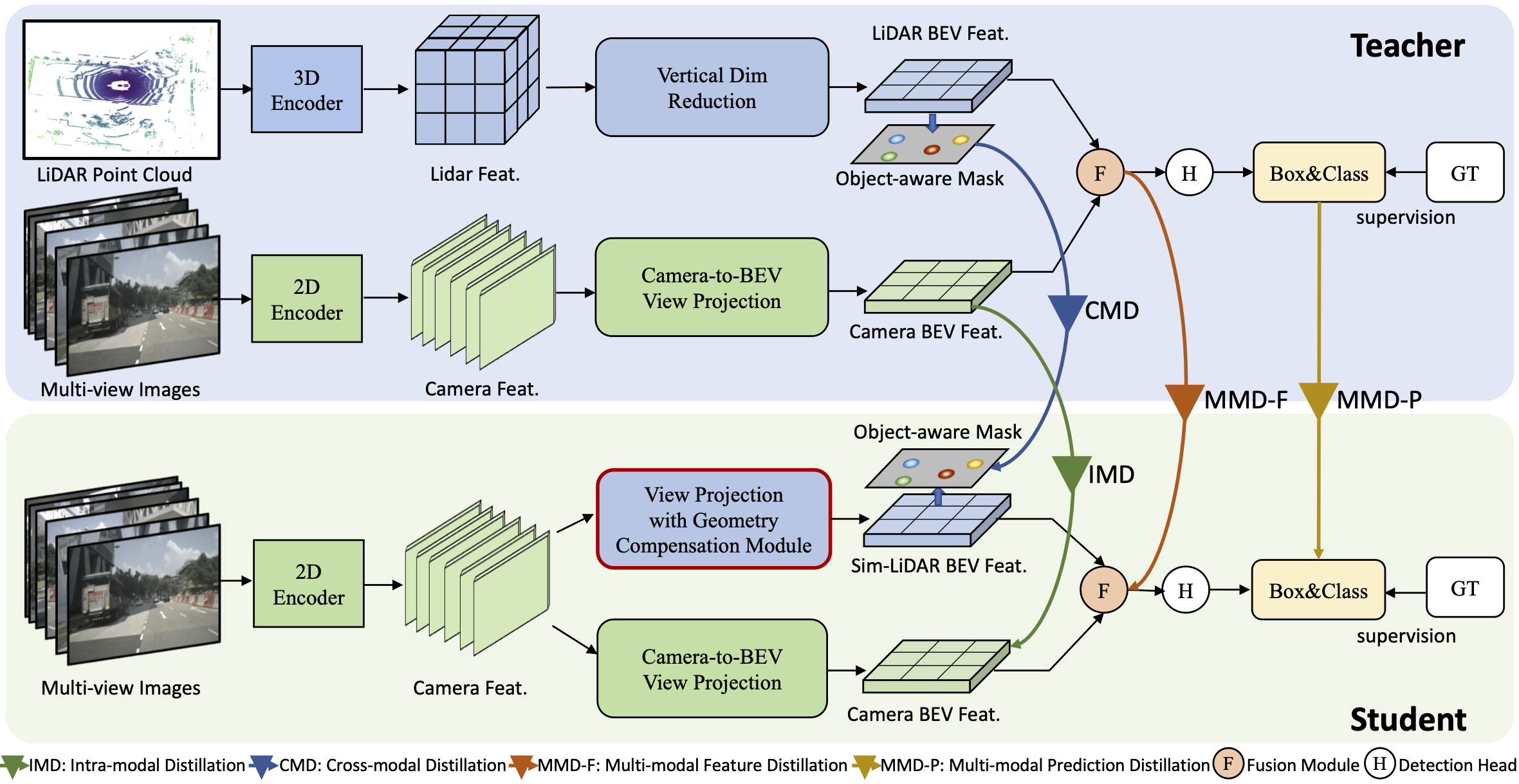

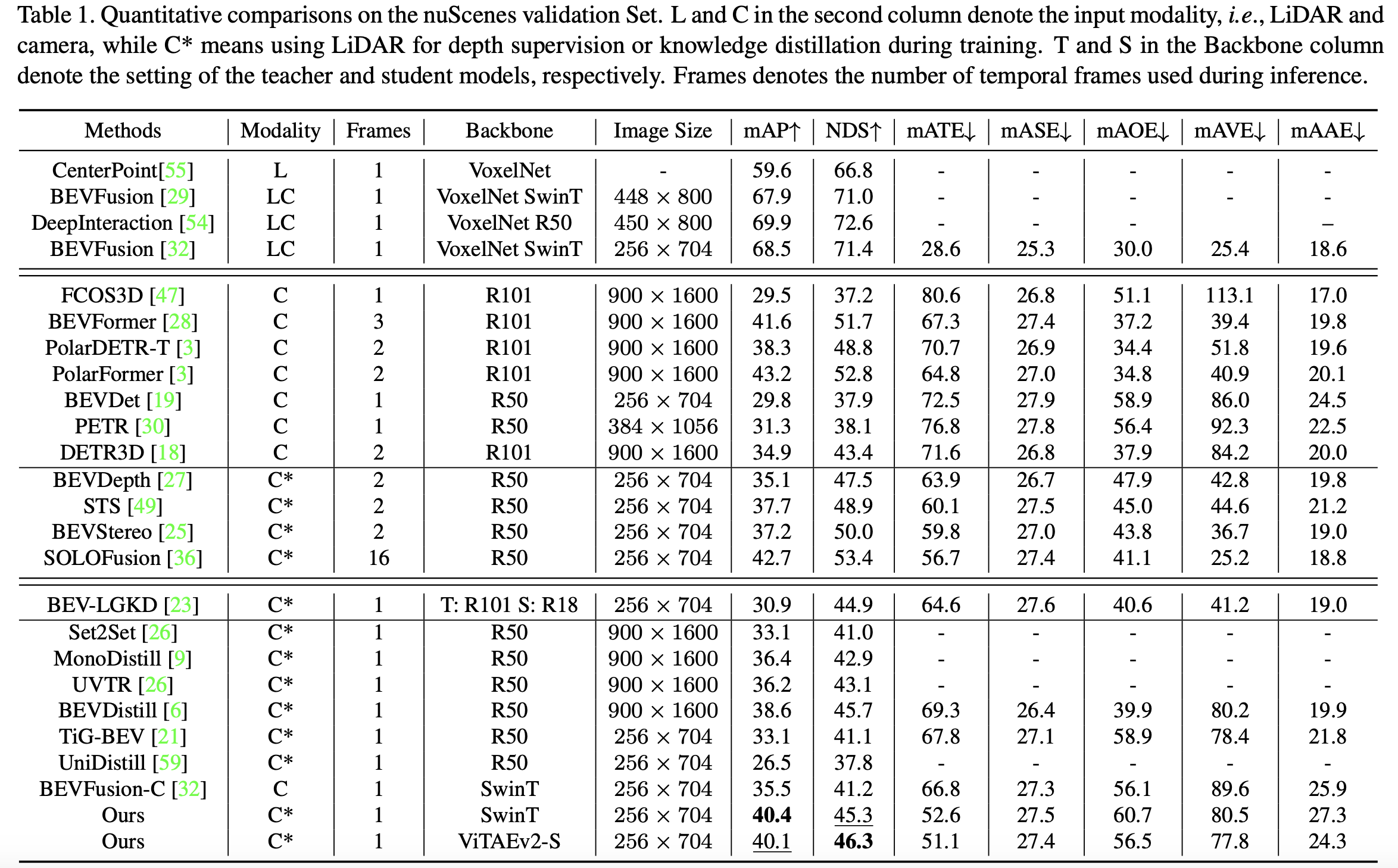

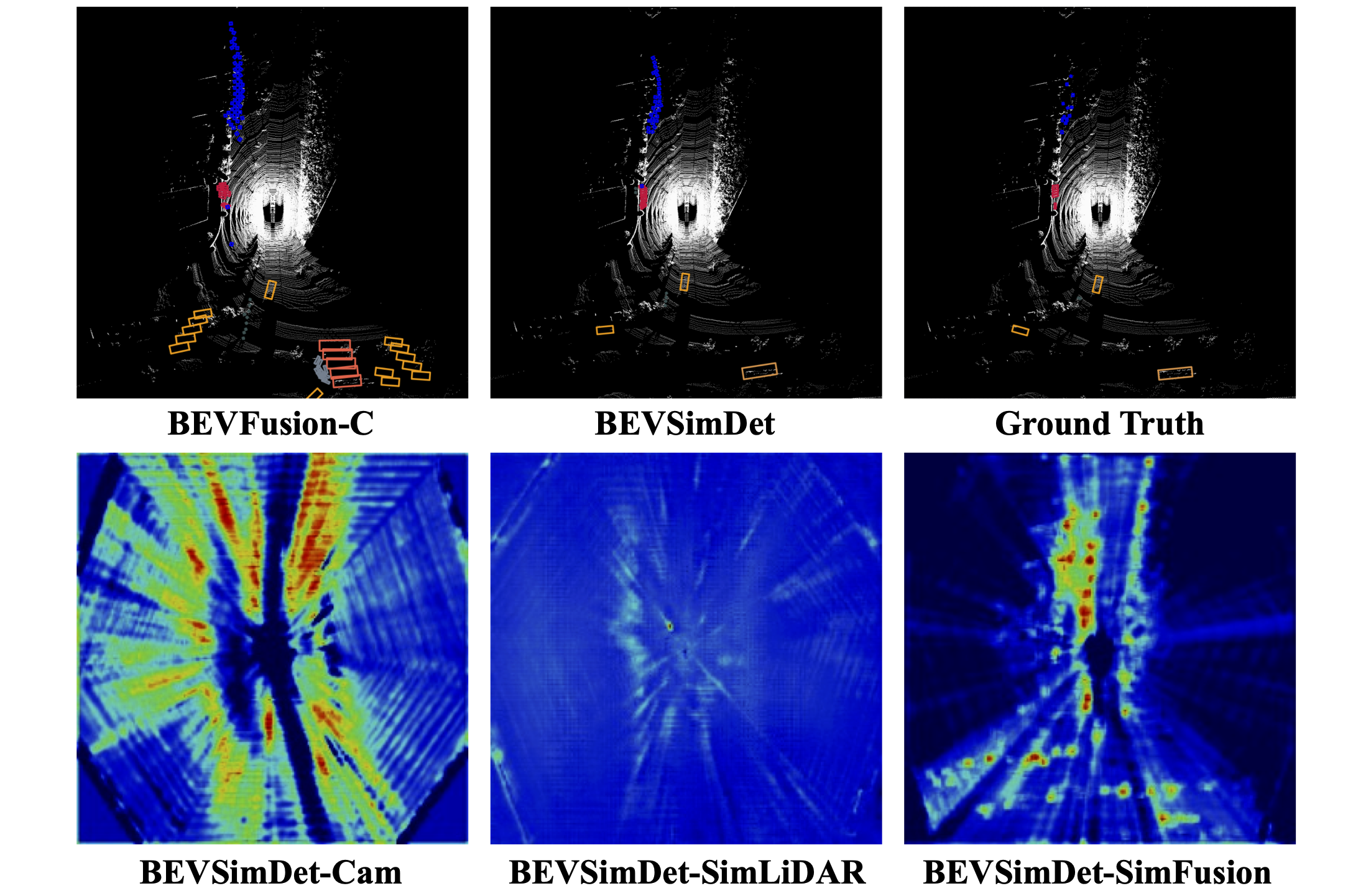

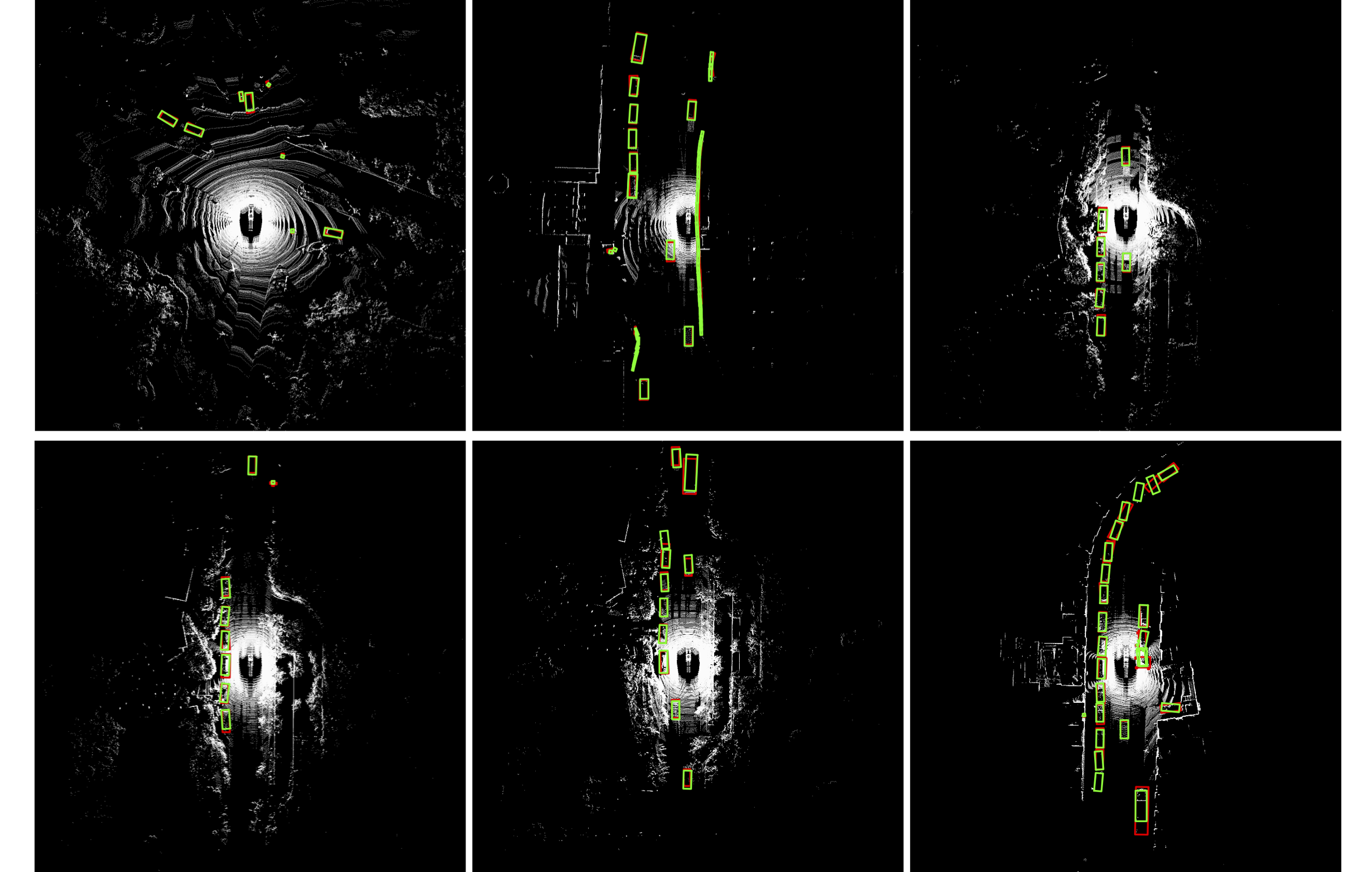

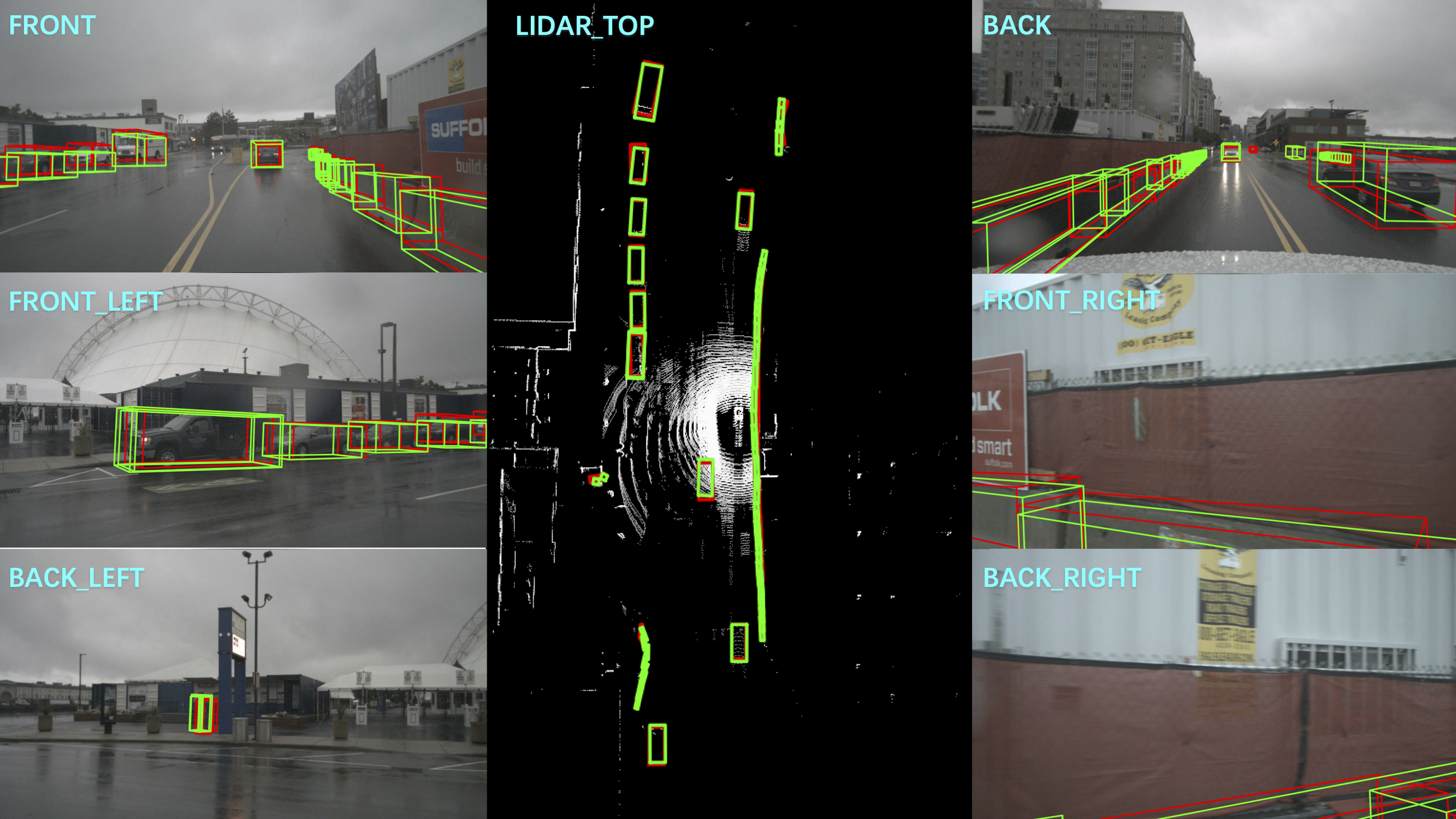

Multi-view camera-based 3D object detection has become popular due to its low cost, but accurately inferring 3D geometry solely from camera data remains challenging and may lead to inferior performance. Although distilling precise 3D geometry knowledge from LiDAR data could help tackle this challenge, the benefits of LiDAR information could be greatly hindered by the significant modality gap between different sensory modalities. To address this issue, we propose a **Si**mulated **m**ulti-modal **Distill**ation (**SimDistill**) method by carefully crafting the model architecture and distillation strategy. Specifically, we devise multi-modal architectures for both teacher and student models, including a LiDAR-camera fusion-based teacher and a simulated fusion-based student. Owing to the "identical" architecture design, the student can mimic the teacher to generate multi-modal features with merely multi-view images as input, where a geometry compensation module is introduced to bridge the modality gap. Furthermore, we propose a comprehensive multi-modal distillation scheme that supports intra-modal, cross-modal, and multi-modal fusion distillation simultaneously in the Bird's-eye-view space. Incorporating them together, our SimDistill can learn better feature representations for 3D object detection while maintaining a cost-effective camera-only deployment. Extensive experiments validate the effectiveness and superiority of SimDistill over state-of-the-art methods, achieving an improvement of 4.8% mAP and 4.1% NDS over the baseline detector.
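The three distillation terms named in the abstract can be pictured with a toy sketch. This is only one plausible reading of the abstract, not the repository's implementation: the pairing of student and teacher features, the mean-squared-error objective, the dictionary keys `camera`, `lidar`, and `fused`, and the equal default weights are all assumptions made for illustration.

```python
def mse(student, teacher):
    """Mean squared error between two equally sized flat feature lists."""
    if len(student) != len(teacher):
        raise ValueError("feature shapes must match")
    return sum((s - t) ** 2 for s, t in zip(student, teacher)) / len(student)


def distillation_loss(student, teacher, weights=(1.0, 1.0, 1.0)):
    """Weighted sum of three feature-matching terms (illustrative only).

    `student` and `teacher` are dicts with the hypothetical keys
    "camera", "lidar" and "fused". The student's "lidar" entry stands for
    the simulated LiDAR feature it predicts from images alone.
    """
    w_intra, w_cross, w_fusion = weights
    # Intra-modal: student camera feature vs. teacher camera feature.
    intra = mse(student["camera"], teacher["camera"])
    # Cross-modal: simulated LiDAR feature vs. real LiDAR feature.
    cross = mse(student["lidar"], teacher["lidar"])
    # Multi-modal fusion: simulated fused feature vs. real fused feature.
    fusion = mse(student["fused"], teacher["fused"])
    return w_intra * intra + w_cross * cross + w_fusion * fusion


if __name__ == "__main__":
    teacher = {"camera": [1.0, 2.0], "lidar": [0.0, 0.0], "fused": [1.0, 1.0]}
    student = {"camera": [2.0, 2.0], "lidar": [0.0, 0.0], "fused": [1.0, 1.0]}
    print(distillation_loss(student, teacher))
```

In a real detector the features would be BEV tensors rather than flat lists, and each term may use a more elaborate objective than plain MSE.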

The code is built with the following libraries:

- Python >= 3.8, < 3.9

- OpenMPI = 4.0.4 and mpi4py = 3.0.3 (Needed for torchpack)

- Pillow = 8.4.0 (see here)

- PyTorch >= 1.9, <= 1.10.2

- tqdm

- torchpack

- mmcv = 1.4.0

- mmdetection = 2.20.0

- nuscenes-dev-kit
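As a quick sanity check of the pinned versions above, a small script along the following lines may help. It is a minimal sketch, not part of the codebase: the PyPI distribution names (`torch`, `mmdet`, and especially `mmcv`, which is often installed as `mmcv-full`) are assumptions, and the helper names are hypothetical.

```python
import re
import sys
from importlib import metadata

# Inclusive (minimum, maximum) bounds copied from the dependency list.
# The distribution names are assumptions; adjust them to your environment
# (for example, mmcv may be installed as "mmcv-full").
PINNED = {
    "Pillow": ("8.4.0", "8.4.0"),
    "torch": ("1.9", "1.10.2"),
    "mpi4py": ("3.0.3", "3.0.3"),
    "mmcv": ("1.4.0", "1.4.0"),
    "mmdet": ("2.20.0", "2.20.0"),
}


def parse_version(text):
    """Turn '1.10.2+cu113' into (1, 10, 2); missing parts become 0."""
    numbers = [int(n) for n in re.findall(r"\d+", text.split("+")[0])[:3]]
    return tuple(numbers + [0] * (3 - len(numbers)))


def in_range(installed, minimum, maximum):
    """True if minimum <= installed <= maximum (numeric comparison)."""
    return parse_version(minimum) <= parse_version(installed) <= parse_version(maximum)


def check_environment():
    """Return a list of problems; an empty list means every check passed."""
    problems = []
    if sys.version_info[:2] != (3, 8):
        problems.append("Python 3.8.x is required, found %d.%d" % sys.version_info[:2])
    for name, (minimum, maximum) in PINNED.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            problems.append(f"{name} is not installed")
            continue
        if not in_range(installed, minimum, maximum):
            problems.append(f"{name} {installed} is outside [{minimum}, {maximum}]")
    return problems


if __name__ == "__main__":
    for line in check_environment() or ["All pinned dependencies look fine."]:
        print(line)
```

The script only reports mismatches; it does not install or modify anything.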

After installing these dependencies, please run this command to install the codebase:

python setup.py develop

Please follow the instructions from here to download and preprocess the nuScenes dataset. Remember to download both the detection dataset and the map extension (for BEV map segmentation). After data preparation, you should see the following directory structure (as indicated in mmdetection3d):

mmdetection3d

├── mmdet3d

├── tools

├── configs

├── data

│ ├── nuscenes

│ │ ├── maps

│ │ ├── samples

│ │ ├── sweeps

│ │ ├── v1.0-test

│ │ ├── v1.0-trainval

│ │ ├── nuscenes_database

│ │ ├── nuscenes_infos_train.pkl

│ │ ├── nuscenes_infos_val.pkl

│ │ ├── nuscenes_infos_test.pkl

│ │ ├── nuscenes_dbinfos_train.pkl
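The layout above can be verified with a short script before training. This is a minimal sketch under stated assumptions: the default root `data/nuscenes` is taken relative to the repository root, `nuscenes_database` is assumed to be a directory, and the function name `missing_entries` is hypothetical.

```python
from pathlib import Path

# Entries expected under data/nuscenes, copied from the tree above.
# Treating nuscenes_database as a directory is an assumption.
EXPECTED_DIRS = [
    "maps",
    "samples",
    "sweeps",
    "v1.0-test",
    "v1.0-trainval",
    "nuscenes_database",
]
EXPECTED_FILES = [
    "nuscenes_infos_train.pkl",
    "nuscenes_infos_val.pkl",
    "nuscenes_infos_test.pkl",
    "nuscenes_dbinfos_train.pkl",
]


def missing_entries(root="data/nuscenes"):
    """Return the expected sub-paths that do not exist under `root`."""
    root = Path(root)
    missing = [name for name in EXPECTED_DIRS if not (root / name).is_dir()]
    missing += [name for name in EXPECTED_FILES if not (root / name).is_file()]
    return missing


if __name__ == "__main__":
    gaps = missing_entries()
    print("Layout looks complete." if not gaps else "Missing: " + ", ".join(gaps))
```

Run it from the repository root, or pass a different root path to `missing_entries` if the dataset lives elsewhere.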

@article{zhao2023bevsimdet,

title={BEVSimDet: Simulated Multi-modal Distillation in Bird's-Eye View for Multi-view 3D Object Detection},

author={Zhao, Haimei and Zhang, Qiming and Zhao, Shanshan and Zhang, Jing and Tao, Dacheng},

journal={arXiv preprint arXiv:2303.16818},

year={2023}

}