Aligning Pre-training and Fine-tuning in Object Detection [Project Page] [arXiv] [Paper] [Poster]

Official PyTorch Implementation of AlignDet: Aligning Pre-training and Fine-tuning in Object Detection (ICCV 2023)

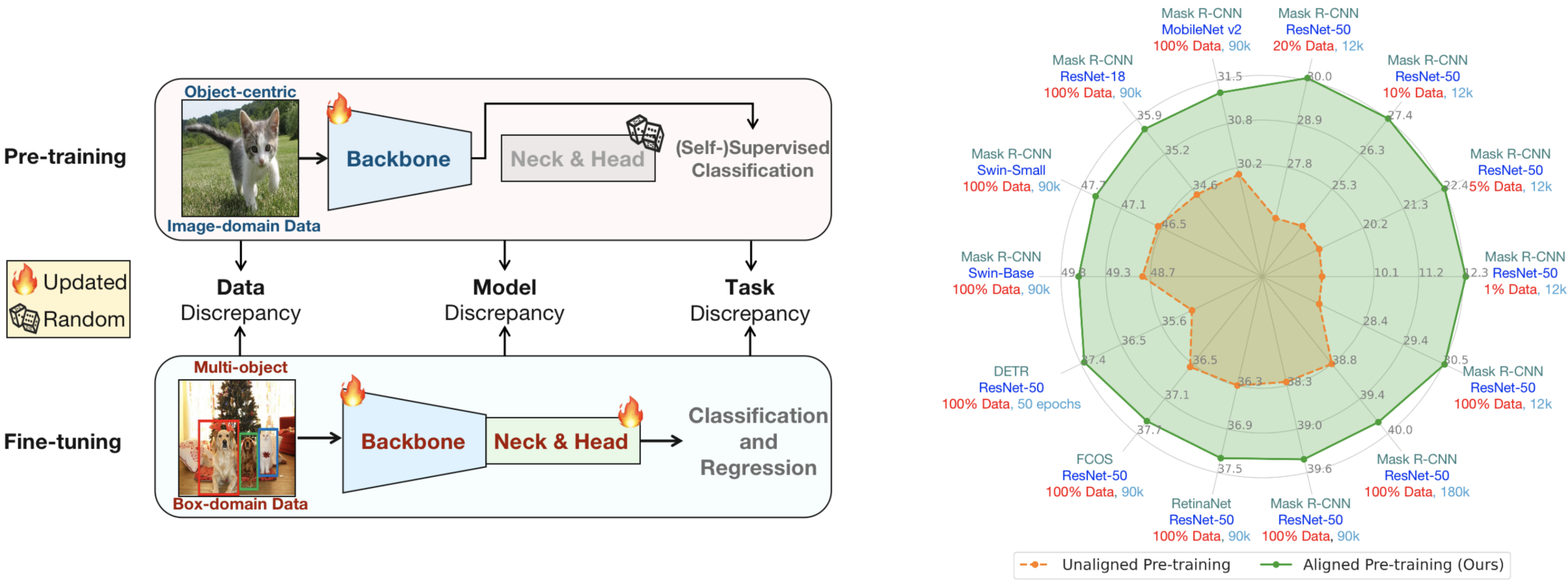

- Existing detection algorithms are constrained by the data, model, and task discrepancies between pre-training and fine-tuning.

- AlignDet aligns these discrepancies in an efficient and unsupervised paradigm, leading to significant performance improvements across different settings.

Comparison with other self-supervised pre-training methods in terms of data, model, and task. AlignDet achieves more efficient, adequate, and detection-oriented pre-training.

Our pipeline takes full advantage of the existing pre-trained backbones to efficiently pre-train other modules. By incorporating self-supervised pre-trained backbones, we make the first attempt to fully pre-train various detectors using a completely unsupervised paradigm.

Data Download

Please download the COCO 2017 dataset, and the folder structure should be:

data

├── coco

│ ├── annotations

│ ├── filtered_proposals

│ ├── semi_supervised_annotations

│ ├── test2017

│ ├── train2017

│ └── val2017

The folder filtered_proposals for self-supervised pre-training can be downloaded from Google Drive.

The folder semi_supervised_annotations for semi-supervised fine-tuning can be downloaded from Google Drive, or generated with tools/generate_semi_coco.py.
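A quick way to confirm the layout before launching training is to check that each expected folder exists. The snippet below is a minimal sketch and not part of the repository: the helper name `missing_coco_dirs` is hypothetical, and it assumes the dataset root is `data/coco` relative to the current working directory.

```python
from pathlib import Path

# Sub-folders listed in the layout above, relative to the dataset root.
EXPECTED_SUBDIRS = (
    "annotations",
    "filtered_proposals",
    "semi_supervised_annotations",
    "test2017",
    "train2017",
    "val2017",
)


def missing_coco_dirs(root="data/coco"):
    """Return the expected sub-folders that do not exist under `root`.

    Hypothetical helper for illustration; the default root is an assumption.
    """
    root = Path(root)
    return [name for name in EXPECTED_SUBDIRS if not (root / name).is_dir()]


if __name__ == "__main__":
    missing = missing_coco_dirs()
    if missing:
        print("Missing folders under data/coco:", ", ".join(missing))
    else:
        print("Dataset layout looks complete.")
```

Run it from the repository root. An empty result means every folder in the listing is present; it does not verify the files inside them.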

Environments

# Sorry, our code is not based on the latest mmdet 3.0+
pip3 install -r requirements.txt

Pre-training and Fine-tuning Instructions

Pre-training

bash tools/dist_train.sh configs/selfsup/mask_rcnn.py 8 --work-dir work_dirs/selfsup_mask-rcnn

Fine-tuning

- Use tools/model_converters/extract_detector_weights.py to extract the weights.

# Arguments: the pre-training checkpoint, then the output path for the fine-tuning weights
python3 tools/model_converters/extract_detector_weights.py \
work_dirs/selfsup_mask-rcnn/epoch_12.pth \
work_dirs/selfsup_mask-rcnn/final_model.pth

- Fine-tune the model with the normal mmdet training process. Usually the learning rate is raised to 1.5 times the original setting and the weight decay is halved. Please refer to the released logs for more details.
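The hyperparameter adjustment described in this step can be worked out with a few lines of Python. This is only an illustrative sketch: the helper `finetune_overrides` is hypothetical, and the baseline values (learning rate 0.02, weight decay 1e-4) are an assumption inferred from the values used in the fine-tuning command, not something stated in this document. Check the baseline config of your detector before reusing them.

```python
def finetune_overrides(base_lr=0.02, base_weight_decay=1e-4,
                       lr_scale=1.5, wd_scale=0.5):
    """Scale baseline hyperparameters and format them as --cfg-options entries.

    Hypothetical helper; the default baselines are assumed, not taken
    from the repository.
    """
    lr = base_lr * lr_scale
    weight_decay = base_weight_decay * wd_scale
    # The 'g' format trims floating-point noise from the scaled values.
    return [f"optimizer.lr={lr:g}", f"optimizer.weight_decay={weight_decay:g}"]


if __name__ == "__main__":
    print(" ".join(finetune_overrides()))
    # prints: optimizer.lr=0.03 optimizer.weight_decay=5e-05
```

Under these assumed baselines, the result matches the `optimizer.lr=3e-2` and `optimizer.weight_decay=5e-5` overrides passed to the fine-tuning command.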

# --cfg-options loads the pre-trained weights and adjusts the learning rate and weight decay
bash tools/dist_train.sh configs/coco/mask_rcnn_r50_fpn_1x_coco.py 8 \
--cfg-options load_from=work_dirs/selfsup_mask-rcnn/final_model.pth \
optimizer.lr=3e-2 optimizer.weight_decay=5e-5 \
--work-dir work_dirs/finetune_mask-rcnn_1x_coco_lr3e-2_wd5e-5

Checkpoints and Logs

We show part of the results here, and all the checkpoints & logs can be found in the HuggingFace Space.

Different Methods

| Method (Backbone) | Pre-training | Fine-tuning |
|---|---|---|
| FCOS (R50) | link | link |
| RetinaNet (R50) | link | link |
| Faster R-CNN (R50) | link | link |
| Mask R-CNN (R50) | link | link |
| DETR (R50) | link | link |
| SimMIM (Swin-B) | link | link |
| CBNet v2 (Swin-L) | link | link |

Mask R-CNN with Different Backbone Sizes

| Backbone | Pre-training | Fine-tuning |
|---|---|---|
| MobileNet v2 | link | link |
| ResNet-18 | link | link |
| Swin-Small | link | link |
| Swin-Base | link | link |

Models with Self-supervised Backbones (ResNet-50)

| Mask R-CNN | Pre-training | Fine-tuning |
|---|---|---|
| MoCo v2 | link | link |
| PixPro | link | link |
| SwAV | link | link |

| RetinaNet | Pre-training | Fine-tuning |
|---|---|---|
| MoCo v2 | link | link |
| PixPro | link | link |
| SwAV | link | link |

Transfer Learning on VOC Dataset

| Method | Fine-tuning |
|---|---|
| FCOS | link |
| RetinaNet | link |
| Faster R-CNN | link |
| DETR | link |

Citation

If you find our work to be useful for your research, please consider citing.

@InProceedings{AlignDet,

author = {Li, Ming and Wu, Jie and Wang, Xionghui and Chen, Chen and Qin, Jie and Xiao, Xuefeng and Wang, Rui and Zheng, Min and Pan, Xin},

title = {AlignDet: Aligning Pre-training and Fine-tuning in Object Detection},

booktitle = {Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV)},

year = {2023},

}