Ruilong Li · Julian Tanke · Minh Vo · Michael Zollhoefer · Jürgen Gall · Angjoo Kanazawa · Christoph Lassner

arXiv 2022

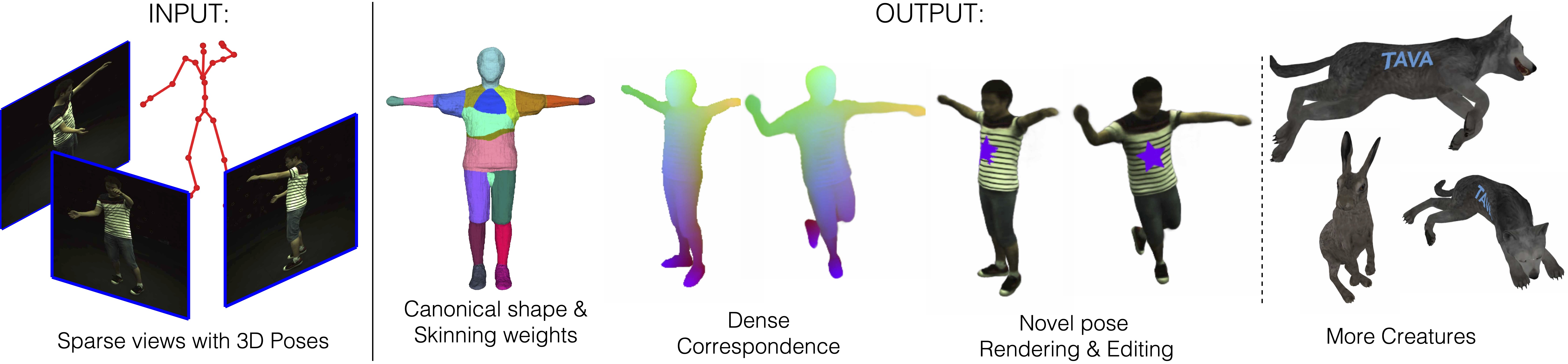

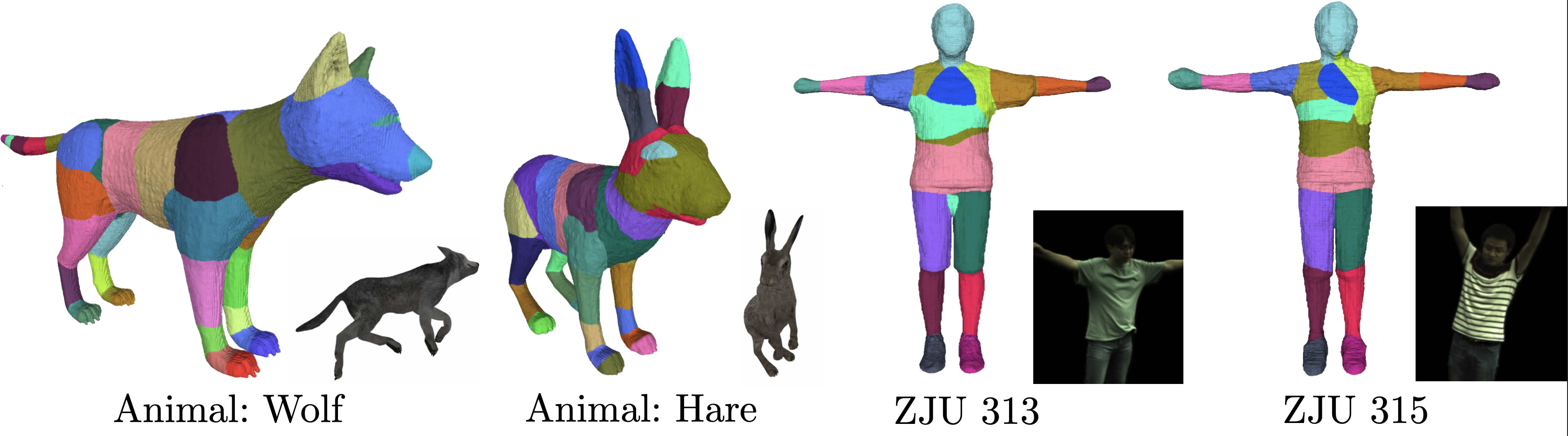

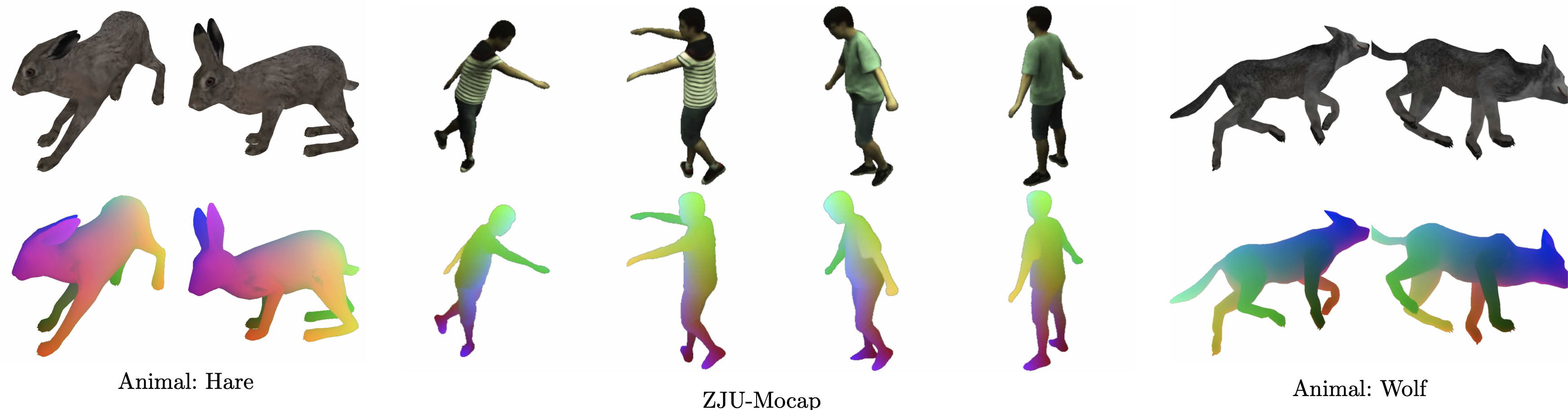

Given multiple sparse video views and 3D poses as input, TAVA creates a virtual actor consisting of implicit shape, appearance, and skinning weights in canonical space, which is ready to be animated and rendered even with out-of-distribution poses. Dense correspondences across views and poses can also be established, which enables content editing during rendering. Because it does not require a body template, TAVA can be used directly for creatures beyond humans, as long as a skeleton can be defined.
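To make the role of skinning weights more concrete, here is a minimal sketch of generic linear blend skinning for a single canonical-space point. It is illustrative only and is not taken from this code base: the function name `blend_skinning`, the 3x4 `[R | t]` bone-transform layout, and the toy two-bone setup are all assumptions, and the actual deformation model in TAVA may differ (see the paper for details).

```python
def blend_skinning(point, weights, transforms):
    """Deform one canonical-space point with linear blend skinning.

    point:      (x, y, z) in canonical space.
    weights:    one skinning weight per bone, expected to sum to 1.
    transforms: one 3x4 row-major [R | t] matrix per bone (hypothetical layout).
    """
    if len(weights) != len(transforms):
        raise ValueError("need exactly one weight per bone transform")
    x, y, z = point
    posed = [0.0, 0.0, 0.0]
    for w, T in zip(weights, transforms):
        for i in range(3):
            row = T[i]
            # Apply this bone's rigid transform, then blend by its weight.
            posed[i] += w * (row[0] * x + row[1] * y + row[2] * z + row[3])
    return tuple(posed)


# Toy example: bone 0 stays put, bone 1 translates by +2 along x.
identity = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
shift_x = [[1.0, 0.0, 0.0, 2.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]

posed = blend_skinning((1.0, 0.0, 0.0), [0.5, 0.5], [identity, shift_x])
print(posed)  # (2.0, 0.0, 0.0)
```

A point influenced equally by both bones moves halfway between the two rigid results, which is why smooth weights give smooth deformations near joints.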

conda create -n tava python=3.9

conda activate tava

conda install pytorch cudatoolkit=11.3 -c pytorch

python setup.py develop
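After running the commands above, a quick sanity check of the environment can save debugging time later. The snippet below is a hedged sketch, not part of this repository: the helper name `check_environment` is hypothetical, and it only reports the Python version, whether PyTorch can be imported, and whether PyTorch sees a CUDA device.

```python
import importlib.util
import sys


def check_environment():
    """Collect basic facts about the active Python environment."""
    report = {
        "python": "%d.%d" % sys.version_info[:2],
        "torch_installed": importlib.util.find_spec("torch") is not None,
        "torch_version": None,
        "cuda_available": False,
    }
    if report["torch_installed"]:
        try:
            import torch

            report["torch_version"] = torch.__version__
            report["cuda_available"] = bool(torch.cuda.is_available())
        except ImportError:
            # The package is present but fails to load (e.g. missing libraries).
            report["torch_installed"] = False
    return report


report = check_environment()
for key, value in report.items():
    print(f"{key}: {value}")
```

If `cuda_available` is reported as `False` on a machine with a GPU, the installed `cudatoolkit` version may not match the local driver.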

- See docs/dataset.md on how to acquire the data used in this paper.

- See docs/experiment.md on using this code base for training and evaluation.

- See docs/benchmark.md for replicating the results of our paper.

- tools/extract_mesh_weights.ipynb: Extract the mesh and skinning weights from the learnt canonical space.

- tools/eval_corr.ipynb: Evaluate the pixel-to-pixel correspondences using the ground-truth mesh on Animal Data.

BSD 3-clause (see LICENSE.txt).

- Training NeRF on NeRF synthetic dataset: See docs/nerf.md for instructions.

- Training Mip-NeRF on NeRF synthetic dataset: See docs/mipnerf.md for instructions.

@article{li2022tava,
  title = {TAVA: Template-free Animatable Volumetric Actors},
  author = {Ruilong Li and Julian Tanke and Minh Vo and Michael Zollhoefer and Jürgen Gall and Angjoo Kanazawa and Christoph Lassner},
  journal = {arXiv preprint arXiv:2206.08929},
  year = {2022},
}