The pooler application allows you to manage pools of OTP behaviors such as gen_servers, gen_fsms, or supervisors, and provide consumers with exclusive access to pool members using pooler:take_member.

The main pooler interface is pooler:take_member/1 and pooler:return_member/3. The pooler server will keep track of which members are in use and which are free. There is no need to call pooler:return_member if the consumer is a short-lived process; in this case, pooler will detect the consumer’s normal exit and reclaim the member. To achieve this, pooler tracks the calling process of take_member as the consumer of the pool member. Thus pooler assumes that there is no middle-man process calling take_member and handing out the member pid to another worker process.

You specify an initial and a maximum number of members in the pool. Pooler will create new members on demand until the maximum member count is reached. New pool members are added to replace members that crash. If a consumer crashes, the member it was using will be destroyed and replaced. You can configure Pooler to periodically check for and remove members that have not been used recently to reduce the member count back to its initial size.

You can use pooler to manage multiple independent pools and multiple grouped pools. Independent pools allow you to pool clients for different backend services (e.g. postgresql and redis). Grouped pools can optionally be accessed using pooler:take_group_member/1 to provide load balancing of the pools in the group. A typical use of grouped pools is to have each pool contain clients connected to a particular node in a cluster (think database read slaves). Pooler’s take_group_member function will randomly select a pool in the group to fetch a member from. If the randomly selected pool has no free members, pooler will attempt to obtain a member from each pool in the group. If there is no pool with available members, pooler will return error_no_members.

The need for pooler arose while writing an Erlang-based application that uses Riak for data storage. Riak’s protocol buffer client is a gen_server process that initiates a connection to a Riak node. A pool is needed to avoid spinning up a new client for each request in the application. Reusing clients also has the benefit of keeping the vector clocks smaller since each client ID corresponds to an entry in the vector clock.

When using the Erlang protocol buffer client for Riak, one should avoid accessing a given client concurrently. This is because each client is associated with a unique client ID that corresponds to an element in an object’s vector clock. Concurrent action from the same client ID defeats the vector clock. For some further explanation, see post 1 and post 2. Note that concurrent access to Riak’s pb client is actually OK as long as you avoid updating the same key at the same time. Since that is hard to guarantee in general, the pool needs to have checkout/checkin semantics that give consumers exclusive access to a client.

On top of that, in order to evenly load a Riak cluster and be able to continue in the face of Riak node failures, consumers should spread their requests across clients connected to each node. The client pool provides an easy way to load balance.

Since writing pooler, I’ve seen it used to pool database connections for PostgreSQL, MySQL, and Redis. These uses led to a redesign to better support multiple independent pools.

Pool configuration is specified in the pooler application’s environment. This can be provided in a config file using -config or set at startup using application:set_env(pooler, pools, Pools). Here’s an example config file that creates two pools of Riak pb clients, each talking to a different node in a local cluster, and one pool talking to a PostgreSQL database:

% pooler.config
% Start Erlang as: erl -config pooler
% -*- mode: erlang -*-
% pooler app config
[
 {pooler, [
     {pools, [
         [{name, rc8081},
          {group, riak},
          {max_count, 5},
          {init_count, 2},
          {start_mfa,
           {riakc_pb_socket, start_link, ["localhost", 8081]}}],

         [{name, rc8082},
          {group, riak},
          {max_count, 5},
          {init_count, 2},
          {start_mfa,
           {riakc_pb_socket, start_link, ["localhost", 8082]}}],

         [{name, pg_db1},
          {max_count, 10},
          {init_count, 2},
          {start_mfa,
           {my_pg_sql_driver, start_link, ["db_host"]}}]
     ]}
     %% if you want to enable metrics, set this to a module with
     %% an API conformant to the folsom_metrics module.
     %% If this config is missing, then no metrics are sent.
     %% {metrics_module, folsom_metrics}
 ]}
].

Each pool has a unique name, specified as an atom, an initial and maximum number of members, and an {M, F, A} describing how to start members of the pool. When pooler starts, it will create members in each pool according to init_count. Optionally, you can indicate that a pool is part of a group. You can use pooler to load balance across pools labeled with the same group tag.

The cull_interval and max_age pool configuration parameters allow you to control how (or if) the pool should be returned to its initial size after a traffic burst. Both parameters take a time value, written as a tuple of a number and its unit. The following examples are all valid and equivalent:

%% two minutes, your way
{2, min}
{120, sec}
{120000, ms}

The cull_interval determines how often a check is made for stale members. Checks are scheduled using erlang:send_after/3, which provides a light-weight timing mechanism. The next check is scheduled after the prior check completes.
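As a rough illustration of how such time tuples map onto erlang:send_after/3, here is a minimal sketch. The module name time_spec_sketch, the function names, and the cull message are hypothetical and are not part of pooler's API; pooler's own internals may differ.

```erlang
-module(time_spec_sketch).
-export([to_millis/1, schedule_check/2]).

%% Convert a {Value, Unit} time spec into milliseconds.
%% Only the three units shown in the README are handled here.
to_millis({N, min}) when is_integer(N), N >= 0 -> N * 60 * 1000;
to_millis({N, sec}) when is_integer(N), N >= 0 -> N * 1000;
to_millis({N, ms})  when is_integer(N), N >= 0 -> N.

%% Ask the runtime to deliver the (hypothetical) message `cull' to Pid
%% once the interval has elapsed. Returns the timer reference.
%% A process that wants periodic checks would call this again after
%% it has finished handling each `cull' message.
schedule_check(Pid, Interval) when is_pid(Pid) ->
    erlang:send_after(to_millis(Interval), Pid, cull).
```

Rescheduling only after a check has completed, rather than on a fixed timer, means slow checks cannot pile up behind one another.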

During a check, pool members that have not been used for longer than max_age will be removed until the pool size reaches init_count.

The default value for cull_interval is {1, min}. You can disable culling by specifying a value of {0, min}. The max_age parameter defaults to {30, sec}.
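For illustration, a pool entry that sets both parameters might look like the fragment below. The specific values ({2, min} and {90, sec}) are arbitrary choices for this sketch, not recommendations; the remaining keys are copied from the example config above.

```erlang
%% One entry in the pools list of pooler's app config.
%% cull_interval and max_age values here are illustrative only.
[{name, rc8081},
 {group, riak},
 {max_count, 5},
 {init_count, 2},
 %% check for stale members every two minutes
 {cull_interval, {2, min}},
 %% a member unused for more than 90 seconds is eligible for removal
 {max_age, {90, sec}},
 {start_mfa,
  {riakc_pb_socket, start_link, ["localhost", 8081]}}]
```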

You can create pools using pooler:new_pool/1, which accepts a proplist of pool configuration. Here’s an example:

PoolConfig = [{name, rc8081},
              {group, riak},
              {max_count, 5},
              {init_count, 2},
              {start_mfa,
               {riakc_pb_socket,
                start_link, ["localhost", 8081]}}],
pooler:new_pool(PoolConfig).

Here’s an example session:

application:start(pooler).
P = pooler:take_member(mysql),
% use P
pooler:return_member(mysql, P, ok).

Once started, the main interaction you will have with pooler is through two functions, take_member/1 and return_member/3 (or return_member/2).

Call pooler:take_member(Pool) to obtain the pid belonging to a member of the pool Pool. When you are done with it, return it to the pool using pooler:return_member(Pool, Pid, ok). If you encountered an error using the member, you can pass fail as the third argument. In this case, pooler will permanently remove that member from the pool and start a new member to replace it. If your process is short lived, you can omit the call to return_member. In this case, pooler will detect the normal exit of the consumer and reclaim the member.
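The take/use/return cycle described above can be wrapped in a small helper so that a member is always handed back. This is a sketch, not part of pooler: the module pool_usage_sketch and the function with_member/2 are hypothetical names, and the Class:Reason:Stack catch pattern assumes OTP 21 or later. It must run in the consumer process itself, since pooler tracks the caller of take_member as the consumer.

```erlang
-module(pool_usage_sketch).
-export([with_member/2]).

%% Take a member from Pool, run Fun(Pid) in the calling process, and
%% return the member. The member is returned with `ok' on success and
%% with `fail' if Fun raises, so that pooler replaces it.
with_member(Pool, Fun) when is_function(Fun, 1) ->
    case pooler:take_member(Pool) of
        error_no_members ->
            %% every member is in use and the pool is at max_count
            {error, no_members};
        Pid when is_pid(Pid) ->
            try Fun(Pid) of
                Result ->
                    pooler:return_member(Pool, Pid, ok),
                    {ok, Result}
            catch
                Class:Reason:Stack ->
                    pooler:return_member(Pool, Pid, fail),
                    erlang:raise(Class, Reason, Stack)
            end
    end.
```

A call might look like pool_usage_sketch:with_member(mysql, fun(Pid) -> do_query(Pid) end), where do_query/1 stands in for whatever your driver provides.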

If you would like to obtain a member from a randomly selected pool in a group, call pooler:take_group_member(Group). This will return a Pid which must be returned using pooler:return_group_member/2 or pooler:return_group_member/3.
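A grouped checkout follows the same shape. The sketch below assumes that return_group_member/3 takes the group name, the member pid, and a status of ok or fail, mirroring return_member/3; the module and function names are hypothetical. Any non-pid result from take_group_member (such as error_no_members) is passed back to the caller wrapped in an error tuple.

```erlang
-module(group_usage_sketch).
-export([with_group_member/2]).

%% Take a member from a randomly selected pool in Group, run Fun(Pid),
%% and always return the member to the group afterwards.
with_group_member(Group, Fun) when is_function(Fun, 1) ->
    case pooler:take_group_member(Group) of
        Pid when is_pid(Pid) ->
            try
                {ok, Fun(Pid)}
            after
                %% Runs whether or not Fun raised. This sketch always
                %% reports `ok'; pass `fail' instead if the member
                %% should be destroyed and replaced.
                pooler:return_group_member(Group, Pid, ok)
            end;
        Other ->
            %% e.g. error_no_members when no pool has a free member
            {error, Other}
    end.
```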

In order for pooler to start properly, all applications required to start a pool member must be started before pooler starts. Since pooler does not depend on members and since OTP may parallelize application starts for applications with no detectable dependencies, this can cause problems. One way to work around this is to specify pooler as an included application in your app. This means you will start pooler’s top-level supervisor from your app’s top-level supervisor and can regain control over the application start order. To do this, you would remove pooler from the list of applications in your_app.app and add it to the included_applications key:

{application, your_app,
 [
  {description, "Your App"},
  {vsn, "0.1"},
  {registered, []},
  {applications, [kernel,
                  stdlib,
                  crypto,
                  mod_xyz]},
  {included_applications, [pooler]},
  {mod, {your_app, []}}
 ]}.

Then start pooler’s top-level supervisor with something like the following in your app’s top-level supervisor:

PoolerSup = {pooler_sup, {pooler_sup, start_link, []},
             permanent, infinity, supervisor, [pooler_sup]},
{ok, {{one_for_one, 5, 10}, [PoolerSup]}}.

You can enable metrics collection by adding a metrics_module entry to pooler’s app config. Metrics are disabled by default. The module specified must have an API matching that of the folsom_metrics module in folsom (to use folsom, specify {metrics_module, folsom_metrics}) and ensure that folsom is in your code path and has been started.

When enabled, the following metrics will be tracked:

| Metric Label | Description |
| --- | --- |
| pooler.POOL_NAME.take_rate | meter recording the rate at which take_member is called |
| pooler.error_no_members_count | counter of how many times take_member has returned error_no_members |
| pooler.killed_free_count | counter of how many members have been killed while in the free state |
| pooler.killed_in_use_count | counter of how many members have been killed while in the in_use state |
| pooler.event | history of various error conditions |

- Clone the repo:

      git clone https://github.com/seth/pooler.git

- Build and run tests:

      cd pooler; make && make test

- Start a demo:

      erl -pa .eunit ebin -config demo

      Eshell V5.8.4 (abort with ^G)
      1> application:start(pooler).
      ok
      2> M = pooler:take_member().
      <0.49.0>
      3> pooled_gs:get_id(M).
      {"p2",#Ref<0.0.0.47>}
      4> M2 = pooler:take_member().
      <0.48.0>
      5> pooled_gs:get_id(M2).
      {"p2",#Ref<0.0.0.45>}
      6> pooler:return_member(M).
      ok
      7> pooler:return_member(M2).
      ok

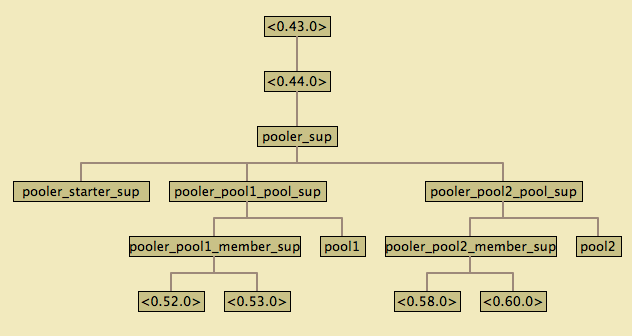

The top-level supervisor is pooler_sup. It supervises one supervisor for each pool configured in pooler’s app config.

At startup, a pooler_NAME_pool_sup is started for each pool described in pooler’s app config with NAME matching the name attribute of the config.

The pooler_NAME_pool_sup starts the gen_server that will register as pooler_NAME_pool, as well as a pooler_NAME_member_sup that will be used to start and supervise the members of this pool. The pooler_starter_sup is used to start temporary workers that manage asynchronous member start.

- pooler_sup: one_for_one
- pooler_NAME_pool_sup: all_for_one
- pooler_NAME_member_sup: simple_one_for_one
- pooler_starter_sup: simple_one_for_one

Groups of pools are managed using the pg2 application. This imposes a requirement to set a configuration parameter on the kernel application in an OTP release. For example, in sys.config:

{kernel, [{start_pg2, true}]}

Pooler is licensed under the Apache License Version 2.0. See the LICENSE file for details.