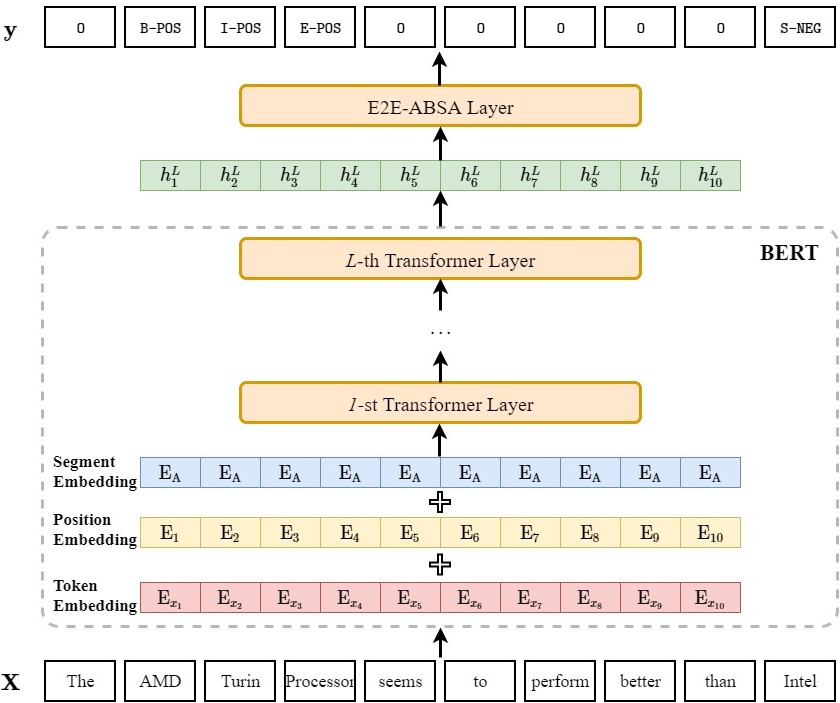

Exploiting BERT for End-to-End Aspect-Based Sentiment Analysis

- python 3.7.3

- pytorch 1.2.0 (also tested on pytorch 1.3.0)

- transformers 4.1.1 (previously transformers 2.0.0)

- numpy 1.16.4

- tensorboardX 1.9

- tqdm 4.32.1

- some code is borrowed from allennlp (https://github.com/allenai/allennlp, an awesome open-source NLP toolkit) and transformers (https://github.com/huggingface/transformers, formerly known as pytorch-pretrained-bert or pytorch-transformers)

- Pre-trained embedding layer: BERT-Base-Uncased (12-layer, 768-hidden, 12-heads, 110M parameters)

- Task-specific layer:

  - Linear

  - Recurrent Neural Networks (GRU)

  - Self-Attention Networks (SAN, TFM)

  - Conditional Random Fields (CRF)

- (DEPRECATED) Restaurant: restaurant reviews from SemEval 2014 (task 4), SemEval 2015 (task 12) and SemEval 2016 (task 5) (`rest_total`)

- (IMPORTANT) Restaurant: restaurant reviews from SemEval 2014 (`rest14`), SemEval 2015 (`rest15`) and SemEval 2016 (`rest16`). Please refer to the newly updated files in `./data`.

- (IMPORTANT) DO NOT use the `rest_total` dataset that we built ourselves any more; more details can be found in Updated Results.

- Laptop: laptop reviews from SemEval 2014 (`laptop14`)

- The valid tagging strategies/schemes (i.e., the ways of representing text or entity spans) in this project are BIEOS (also called BIOES or BMES), BIO (also called IOB2) and OT (also called IO). If you are not familiar with these terms, I strongly recommend reading the following materials before running the program:

a. Inside–outside–beginning (tagging).

c. The paper associated with this project.
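As a quick illustration of how the three schemes relate, here is a minimal sketch that converts OT tags to BIO and then to BIEOS. The function names and the tag names (`T-POS`, `T-NEG`) are assumptions made for this example, not necessarily what the project code uses. Note that OT cannot separate two adjacent spans with the same label, so the conversion treats any run of identical `T-*` tags as one span.

```python
from typing import List


def ot_to_bio(tags: List[str]) -> List[str]:
    """Convert OT tags (e.g. 'O', 'T-POS') to BIO tags (e.g. 'B-POS', 'I-POS')."""
    bio = []
    prev = "O"
    for tag in tags:
        if tag == "O":
            bio.append("O")
        else:
            label = tag.split("-", 1)[1]
            # A span continues only if the previous tag is the same T-* tag.
            bio.append(("I-" if prev == tag else "B-") + label)
        prev = tag
    return bio


def bio_to_bieos(tags: List[str]) -> List[str]:
    """Convert BIO tags to BIEOS tags by looking one position ahead."""
    bieos = []
    for i, tag in enumerate(tags):
        if tag == "O":
            bieos.append("O")
            continue
        prefix, label = tag.split("-", 1)
        nxt = tags[i + 1] if i + 1 < len(tags) else "O"
        continues = nxt == "I-" + label
        if prefix == "B":
            # Single-token spans become S-*, longer spans start with B-*.
            bieos.append(("B-" if continues else "S-") + label)
        else:
            # Inside tokens stay I-* unless they close the span (E-*).
            bieos.append(("I-" if continues else "E-") + label)
    return bieos


ot = ["O", "T-POS", "T-POS", "O", "T-NEG"]
bio = ot_to_bio(ot)        # ['O', 'B-POS', 'I-POS', 'O', 'B-NEG']
bieos = bio_to_bieos(bio)  # ['O', 'B-POS', 'E-POS', 'O', 'S-NEG']
```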

- Reproduce the results on the Restaurant and Laptop datasets:

      # train the model with 5 different seed numbers
      python fast_run.py

- Train the model on another ABSA dataset:

  - Place the data files in the directory `./data/[YOUR_DATASET_NAME]` (please note that you need to reorganize your data files so that they can be used directly by this project; following the input format of `./data/laptop14/train.txt` should be OK).

  - Set `TASK_NAME` in `train.sh` to `[YOUR_DATASET_NAME]`.

  - Train the model:

        sh train.sh
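Before training on your own data, it can help to check that each line parses the way you expect. The sketch below ASSUMES a line layout of `sentence####token=TAG token=TAG ...`; this layout and the helper name `parse_line` are assumptions for illustration only, so please compare against `./data/laptop14/train.txt` and adjust if the actual files differ.

```python
from typing import List, Tuple


def parse_line(line: str) -> Tuple[str, List[Tuple[str, str]]]:
    """Split one data line into the raw sentence and its (token, tag) pairs.

    ASSUMED format (verify against ./data/laptop14/train.txt):
        sentence####token=TAG token=TAG ...
    """
    sentence, sep, tagged = line.rstrip("\n").partition("####")
    if not sep:
        raise ValueError("missing '####' separator: %r" % line)
    pairs = []
    for item in tagged.split():
        # Split on the LAST '=' so that tokens containing '=' stay intact.
        token, _, tag = item.rpartition("=")
        pairs.append((token, tag))
    return sentence, pairs


# Hypothetical example line, written by hand for this sketch.
sentence, pairs = parse_line("Great battery .####Great=O battery=T-POS .=O\n")
```

Running a check like this over every line of a new dataset is a cheap way to catch formatting problems before starting `train.sh`.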

- (**New feature**) Perform pure inference/direct transfer over test/unseen data using the trained ABSA model:

  - Place the data file in the directory `./data/[YOUR_EVAL_DATASET_NAME]`.

  - Set `TASK_NAME` in `work.sh` to `[YOUR_EVAL_DATASET_NAME]`.

  - Set `ABSA_HOME` in `work.sh` to `[HOME_DIRECTORY_OF_PRETRAINED_ABSA_MODEL]`.

  - Run:

        sh work.sh

- OS: RHEL Server 6.4 (Santiago)

- GPU: NVIDIA GTX 1080 Ti

- CUDA: 10.0

- cuDNN: v7.6.1

- The data files of the `rest_total` dataset were created by concatenating the train/test counterparts from `rest14`, `rest15` and `rest16`. Our motivation was to build a larger training/testing dataset to stabilize training and to faithfully reflect the capability of the ABSA model. However, we recently found that the SemEval organizers directly treat the union of `rest15.train` and `rest15.test` as the training set of rest16 (i.e., `rest16.train`). As a result, `rest_total.train` and `rest_total.test` overlap, which makes this dataset invalid. If you follow our work on this E2E-ABSA task, please DO NOT use the `rest_total` dataset any more; switch to the officially released `rest14`, `rest15` and `rest16` instead.

- To facilitate future comparison, we re-ran our models following the above-mentioned settings and report the results on `rest14`, `rest15` and `rest16`:

  | Model | rest14 | rest15 | rest16 |
  | --- | --- | --- | --- |
  | E2E-ABSA (OURS) | 67.10 | 57.27 | 64.31 |
  | He et al. (2019) | 69.54 | 59.18 | n/a |
  | Liu et al. (2020) | 68.91 | 58.37 | n/a |
  | BERT-Linear (OURS) | 72.61 | 60.29 | 69.67 |
  | BERT-GRU (OURS) | 73.17 | 59.60 | 70.21 |
  | BERT-SAN (OURS) | 73.68 | 59.90 | 70.51 |
  | BERT-TFM (OURS) | 73.98 | 60.24 | 70.25 |
  | BERT-CRF (OURS) | 73.17 | 60.70 | 70.37 |
  | Chen and Qian (2019) | 75.42 | 66.05 | n/a |
  | Liang et al. (2020) | 72.60 | 62.37 | n/a |
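The train/test overlap described above can be reproduced with a small set-intersection check. This is only a sketch on hand-written toy sentences (not real SemEval data); the helper names `normalize` and `overlap` are assumptions for this example and are not part of the project code.

```python
from typing import Iterable, Set


def normalize(sentence: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not hide duplicates."""
    return " ".join(sentence.lower().split())


def overlap(train: Iterable[str], test: Iterable[str]) -> Set[str]:
    """Return the normalized sentences that appear in both splits."""
    train_set = {normalize(s) for s in train}
    return {normalize(s) for s in test} & train_set


# Toy stand-ins for the real splits (invented sentences).
rest15_train = ["The pizza was great ."]
rest15_test = ["Service was slow ."]
# rest16.train is the union of rest15.train and rest15.test, as described above.
rest16_train = rest15_train + rest15_test
rest16_test = ["Nice view from the terrace ."]

# Concatenating the splits the way rest_total was built leaks rest15.test into training.
total_train = rest15_train + rest16_train
total_test = rest15_test + rest16_test
leaked = overlap(total_train, total_test)
```

In this toy setup `leaked` contains the `rest15_test` sentence, while the official `rest16` train/test pair has no overlap. The same check can be run on any dataset you assemble from several sources.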

If the code is used in your research, please star our repo and cite our paper as follows:

    @inproceedings{li-etal-2019-exploiting,
        title = "Exploiting {BERT} for End-to-End Aspect-based Sentiment Analysis",
        author = "Li, Xin and
          Bing, Lidong and
          Zhang, Wenxuan and
          Lam, Wai",
        booktitle = "Proceedings of the 5th Workshop on Noisy User-generated Text (W-NUT 2019)",
        year = "2019",
        url = "https://www.aclweb.org/anthology/D19-5505",
        pages = "34--41"
    }