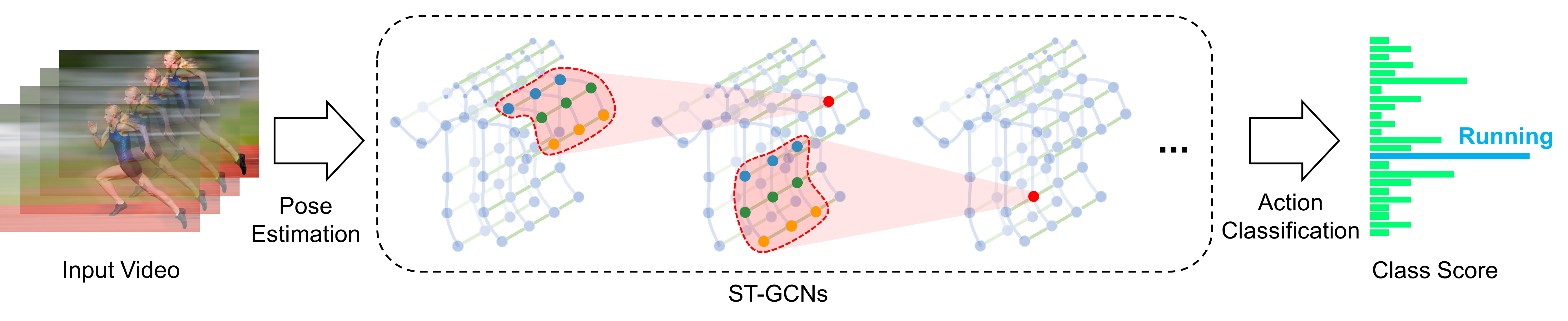

A graph convolutional network for skeleton-based action recognition.

This repository holds the codebase, dataset and models for the paper:

Spatial Temporal Graph Convolutional Networks for Skeleton-Based Action Recognition. Sijie Yan, Yuanjun Xiong and Dahua Lin, AAAI 2018.

- Feb. 21, 2019 - We provide pretrained models and training scripts for the NTU-RGB+D and Kinetics-skeleton datasets, so that you can reproduce the performance reported in the paper.

- Jun. 5, 2018 - A demo for feature visualization and skeleton-based action recognition is released.

- Jun. 1, 2018 - We updated our codebase and completed the migration to PyTorch 0.4.0. You can switch to the old version v0.1.0 to obtain the original settings used in the paper.

Our demo for skeleton-based action recognition:

ST-GCN is able to exploit local patterns and correlations in human skeletons. The figures below show the neural response magnitude of each node in the last layer of our ST-GCN.

*[Figure: neural response magnitudes. Row 1: Touch head, Sitting down, Take off a shoe, Eat meal/snack, Kick other person. Row 2: Hammer throw, Clean and jerk, Pull ups, Tai chi, Juggling ball.]*

The first row of the results above is from NTU-RGB+D, and the second row is from Kinetics-skeleton.
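These responses come from stacked spatial temporal graph convolutions. In the paper, the spatial graph convolution with a single partition is written as f_out = Λ^(-1/2) (A + I) Λ^(-1/2) f_in W, where A is the skeleton adjacency matrix, I is the identity matrix and Λ_ii = Σ_j (A_ij + I_ij). The plain-Python sketch below applies that formula to the joints of one frame. It is an illustration only: the function names are hypothetical, and it omits the partitioning strategies, learnable edge importance weighting and temporal convolution of the full model.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product of an (n x k) and a (k x m) matrix."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def normalized_adjacency(adj: Matrix) -> Matrix:
    """Return Λ^(-1/2) (A + I) Λ^(-1/2), with Λ_ii = Σ_j (A_ij + I_ij)."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
             for i in range(n)]
    # For a non-negative A, every degree is >= 1 thanks to the self-loop.
    deg = [sum(row) for row in a_hat]
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]


def spatial_graph_conv(adj: Matrix, f_in: Matrix, weight: Matrix) -> Matrix:
    """One frame: f_in is V x C_in (joint features), weight is C_in x C_out."""
    return matmul(normalized_adjacency(adj), matmul(f_in, weight))


# Usage: two connected joints, one input channel and one output channel.
adj = [[0.0, 1.0], [1.0, 0.0]]
print(spatial_graph_conv(adj, [[1.0], [3.0]], [[2.0]]))  # [[4.0], [4.0]]
```

Each output joint mixes its own features with those of its neighbours, weighted by the symmetric degree normalization, which is what lets deeper layers capture local patterns on the skeleton.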

Our codebase is based on Python 3 (>=3.5). Running the code requires a few dependencies; the major libraries we depend on are:

- PyTorch (Release version 0.4.0)

- Openpose@92cdcad (Optional: for demo only)

- FFmpeg (Optional: for demo only), which can be installed by `sudo apt-get install ffmpeg`

- Other Python libraries, which can be installed by `pip install -r requirements.txt`

Then install the `torchlight` package included in this repository:

```shell
cd torchlight; python setup.py install; cd ..
```

We provide the pretrained model weights of our ST-GCN. They can be downloaded by running the script:

```shell
bash tools/get_models.sh
```

You can also obtain the models from Google Drive or BaiduYun and manually put them into `./models`.

To visualize how ST-GCN exploits local correlations and local patterns, we compute the feature vector magnitude of each node in the final spatial temporal graph and overlay these magnitudes on the original video. Openpose must be set up beforehand, since it is used to extract human skeletons from the video. The skeleton-based action recognition results are also shown on the video.

Run the demo with this command:

```shell
python main.py demo --openpose <path to openpose build directory> [--video <path to your video> --device <gpu0> <gpu1>]
```

A video like the one shown above will be generated and saved under `data/demo_result/`.
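The per-node magnitude used for this visualization is the norm of each node's feature vector. A minimal sketch, assuming the last-layer features are laid out as `features[c][t][v]` (channel, time step, joint) and that the L2 norm is used; the function names are hypothetical, and mapping time steps back onto video frames is not shown.

```python
import math
from typing import List

# Assumed layout: features[c][t][v] = channel c, time step t, joint v.
Features = List[List[List[float]]]


def node_response_magnitude(features: Features) -> List[List[float]]:
    """Return mag[t][v], the L2 norm of the C-dim feature vector at (t, v)."""
    num_c, num_t, num_v = len(features), len(features[0]), len(features[0][0])
    return [[math.sqrt(sum(features[c][t][v] ** 2 for c in range(num_c)))
             for v in range(num_v)]
            for t in range(num_t)]


def normalize_for_overlay(mag: List[List[float]]) -> List[List[float]]:
    """Rescale magnitudes to [0, 1], e.g. to drive marker size or colour."""
    peak = max(max(row) for row in mag)
    if peak == 0:
        return [[0.0 for _ in row] for row in mag]
    return [[m / peak for m in row] for row in mag]


# Usage: 2 channels, 1 time step, 2 joints.
mag = node_response_magnitude([[[3.0, 0.0]], [[4.0, 0.0]]])
print(mag, normalize_for_overlay(mag))  # [[5.0, 0.0]] [[1.0, 0.0]]
```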

We experimented on two skeleton-based action recognition datasets: Kinetics-skeleton and NTU RGB+D.

Kinetics is a video-based dataset for action recognition that provides only raw video clips without skeleton data. To obtain the joint locations, we first resized all videos to a resolution of 340x256 and converted the frame rate to 30 fps. Then, we extracted skeletons from each frame in Kinetics with Openpose. The extracted skeleton data, which we call Kinetics-skeleton (7.5 GB), can be downloaded directly from Google Drive or BaiduYun.

After uncompressing it, rebuild the database with this command:

```shell
python tools/kinetics_gendata.py --data_path <path to kinetics-skeleton>
```
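In the paper, each Openpose joint is represented by an (X, Y, C) tuple of 2D coordinates and confidence, 18 joints are estimated per person, and for multi-person clips the two people with the highest average joint confidence are kept. The sketch below illustrates that selection and packs a clip into a zero-padded `data[c][t][v][m]` array. The input format, the function name and the 300-frame padding length are assumptions for illustration, not the exact logic of `tools/kinetics_gendata.py`.

```python
from typing import Dict, List, Sequence, Tuple

Joint = Tuple[float, float, float]   # (x, y, confidence) for one joint
Frame = Dict[int, Sequence[Joint]]   # hypothetical: person id -> joints


def pack_clip(frames: List[Frame], num_joints: int = 18, max_people: int = 2,
              max_frames: int = 300):
    """Keep the max_people people with the highest average joint confidence
    and pack them into data[c][t][v][m] (c = x, y, conf), zero-padded."""
    conf_sum: Dict[int, float] = {}
    conf_cnt: Dict[int, int] = {}
    for frame in frames:
        for pid, joints in frame.items():
            conf_sum[pid] = conf_sum.get(pid, 0.0) + sum(j[2] for j in joints)
            conf_cnt[pid] = conf_cnt.get(pid, 0) + len(joints)

    def avg_conf(pid: int) -> float:
        return conf_sum[pid] / max(conf_cnt[pid], 1)

    keep = sorted(conf_sum, key=avg_conf, reverse=True)[:max_people]

    # Fresh innermost lists for every (c, t, v), so no aliasing.
    data = [[[[0.0] * max_people for _ in range(num_joints)]
             for _ in range(max_frames)]
            for _ in range(3)]
    for t, frame in enumerate(frames[:max_frames]):
        for m, pid in enumerate(keep):
            for v, joint in enumerate(list(frame.get(pid, ()))[:num_joints]):
                for c in range(3):
                    data[c][t][v][m] = joint[c]
    return data, keep


# Usage: one frame with three detected people; persons 1 and 2 are kept.
frames = [{0: [(1.0, 1.0, 0.2)] * 18, 1: [(5.0, 6.0, 0.9)] * 18,
           2: [(3.0, 3.0, 0.5)] * 18}]
data, keep = pack_clip(frames)
print(keep)  # [1, 2]
```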

NTU RGB+D can be downloaded from its website. Only the 3D skeletons (5.8 GB) modality is required in our experiments. After downloading it, build the database for training or evaluation with this command:

```shell
python tools/ntu_gendata.py --data_path <path to nturgbd+d_skeletons>
```

where `<path to nturgbd+d_skeletons>` points to the 3D skeletons modality of the NTU RGB+D dataset you downloaded.
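The `ntu-xsub` and `ntu-xview` configurations correspond to the cross-subject and cross-view benchmarks of NTU RGB+D. NTU RGB+D sample names encode setup, camera, performer, replication and action (e.g. `S001C002P003R002A013`), so a sample's split can be derived from its name. A minimal sketch, assuming the standard NTU RGB+D protocol (the listed training performers for cross-subject, cameras 2 and 3 for cross-view training); the function names are hypothetical and this is not necessarily identical to `tools/ntu_gendata.py`.

```python
import re

# Assumed standard NTU RGB+D protocol.
TRAIN_SUBJECTS = {1, 2, 4, 5, 8, 9, 13, 14, 15, 16, 17, 18, 19, 25, 27, 28,
                  31, 34, 35, 38}
TRAIN_CAMERAS = {2, 3}

NAME = re.compile(r"S(\d{3})C(\d{3})P(\d{3})R(\d{3})A(\d{3})")


def parse_name(filename: str) -> dict:
    """Parse e.g. 'S001C002P003R002A013.skeleton' into its integer fields."""
    match = NAME.search(filename)
    if match is None:
        raise ValueError(f"not an NTU RGB+D sample name: {filename}")
    setup, camera, performer, replication, action = map(int, match.groups())
    return {"setup": setup, "camera": camera, "performer": performer,
            "replication": replication, "action": action}


def split_of(filename: str, benchmark: str) -> str:
    """Return 'train' or 'val' for benchmark 'xsub' or 'xview'."""
    info = parse_name(filename)
    if benchmark == "xsub":
        is_train = info["performer"] in TRAIN_SUBJECTS
    elif benchmark == "xview":
        is_train = info["camera"] in TRAIN_CAMERAS
    else:
        raise ValueError(f"unknown benchmark: {benchmark}")
    return "train" if is_train else "val"


print(split_of("S001C002P003R002A013.skeleton", "xview"))  # train
print(split_of("S001C002P003R002A013.skeleton", "xsub"))   # val
```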

To evaluate the ST-GCN model pretrained on Kinetics-skeleton, run

```shell
python main.py recognition -c config/st_gcn/kinetics-skeleton/test.yaml
```

For cross-view evaluation on NTU RGB+D, run

```shell
python main.py recognition -c config/st_gcn/ntu-xview/test.yaml
```

For cross-subject evaluation on NTU RGB+D, run

```shell
python main.py recognition -c config/st_gcn/ntu-xsub/test.yaml
```

To speed up evaluation with multi-GPU inference, or to reduce memory cost by changing the batch size, set `--test_batch_size` and `--device` as follows:

```shell
python main.py recognition -c <config file> --test_batch_size <batch size> --device <gpu0> <gpu1> ...
```

The expected Top-1 accuracies of the provided models are shown here:

| Model | Kinetics-skeleton (%) | NTU RGB+D Cross View (%) | NTU RGB+D Cross Subject (%) |
|---|---|---|---|
| Baseline[1] | 20.3 | 83.1 | 74.3 |
| ST-GCN (Ours) | 31.6 | 88.8 | 81.6 |

[1] Kim, T. S., and Reiter, A. 2017. Interpretable 3d human action analysis with temporal convolutional networks. In BNMW CVPRW.
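Top-1 accuracy counts a sample as correct when its highest-scoring class matches the ground-truth label; Top-k accepts any of the k highest-scoring classes. A minimal sketch of that metric, assuming one list of class scores per sample; the function name is hypothetical and this is not the repository's evaluation code.

```python
from typing import Sequence


def topk_accuracy(scores: Sequence[Sequence[float]], labels: Sequence[int],
                  k: int = 1) -> float:
    """Percentage of samples whose true label is among the k highest scores."""
    if not labels or len(scores) != len(labels):
        raise ValueError("scores and labels must be non-empty and equally long")
    hits = 0
    for row, label in zip(scores, labels):
        # Indices of the k largest scores for this sample.
        top = sorted(range(len(row)), key=lambda i: row[i], reverse=True)[:k]
        hits += int(label in top)
    return 100.0 * hits / len(labels)


scores = [[0.1, 0.7, 0.2], [0.5, 0.3, 0.2], [0.2, 0.3, 0.5]]
labels = [1, 2, 2]
print(topk_accuracy(scores, labels, k=3))  # 100.0
```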

To train a new ST-GCN model, run

```shell
python main.py recognition -c config/st_gcn/<dataset>/train.yaml [--work_dir <work folder>]
```

where `<dataset>` must be `ntu-xsub`, `ntu-xview` or `kinetics-skeleton`, depending on the dataset you want to use.

The training results, including model weights, configurations and log files, are saved under `./work_dir` by default, or under `<work folder>` if you specify one.

You can modify training parameters such as `work_dir`, `batch_size`, `step`, `base_lr` and `device` on the command line or in the configuration files. The order of priority is: command line > config file > default parameter. For more information, run `main.py -h`.
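The priority order above can be implemented with Python's `argparse`: config values replace the parser defaults through `set_defaults`, and explicit command-line values still win. A minimal sketch, assuming the config file has already been loaded into a dict; the option names come from the text above, but the parser, its default values and the function names are hypothetical, not the repository's `main.py`.

```python
import argparse
from typing import List, Optional


def build_parser() -> argparse.ArgumentParser:
    """Hypothetical parser; the default values are placeholders."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--work_dir", default="./work_dir")
    parser.add_argument("--batch_size", type=int, default=256)
    parser.add_argument("--base_lr", type=float, default=0.01)
    parser.add_argument("--step", type=int, nargs="+", default=[])
    parser.add_argument("--device", type=int, nargs="+", default=[0])
    return parser


def resolve_args(argv: List[str],
                 config: Optional[dict] = None) -> argparse.Namespace:
    """Resolve options with priority: command line > config file > default."""
    parser = build_parser()
    if config:
        known = set(vars(parser.parse_args([])))
        unknown = set(config) - known
        if unknown:
            # Fail loudly instead of silently ignoring misspelled keys.
            raise ValueError(f"unknown config keys: {sorted(unknown)}")
        # Config values replace the built-in defaults...
        parser.set_defaults(**config)
    # ...and anything given explicitly on the command line wins over both.
    return parser.parse_args(argv)


# The config file sets batch_size=64; the command line overrides it.
args = resolve_args(["--batch_size", "32"], {"batch_size": 64, "base_lr": 0.1})
print(args.batch_size, args.base_lr)  # 32 0.1
```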

Finally, as mentioned above, a custom model can be evaluated with this command:

```shell
python main.py recognition -c config/st_gcn/<dataset>/test.yaml --weights <path to model weights>
```

Please cite the following paper if you use this repository in your research.

```
@inproceedings{stgcn2018aaai,
  title     = {Spatial Temporal Graph Convolutional Networks for Skeleton-Based Action Recognition},
  author    = {Sijie Yan and Yuanjun Xiong and Dahua Lin},
  booktitle = {AAAI},
  year      = {2018},
}
```

For any questions, feel free to contact:

Sijie Yan : ys016@ie.cuhk.edu.hk

Yuanjun Xiong : bitxiong@gmail.com