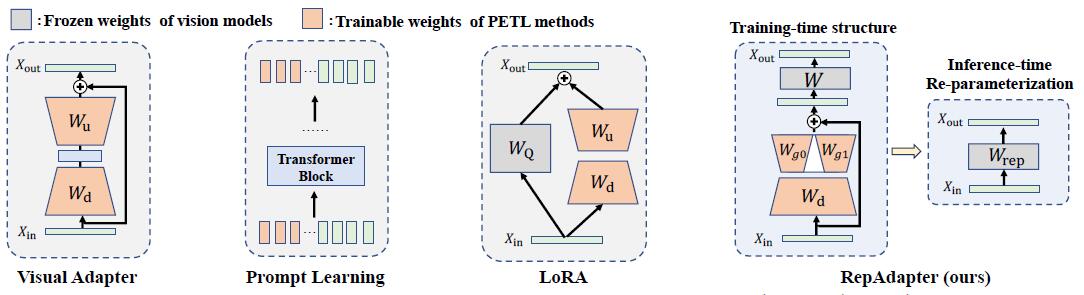

Official implementation of "Towards Efficient Visual Adaption via Structural Re-parameterization". RepAdapter is a parameter-efficient and computationally friendly adapter for giant vision models that can be seamlessly integrated into most vision models via structural re-parameterization. Compared to full tuning, RepAdapter saves up to 25% of training time, 20% of GPU memory, and 94.6% of storage cost for ViT-B/16 on VTAB-1k.

- (2023/02/16) Release our RepAdapter project.

We provide two ways to prepare VTAB-1k:

- Download the source datasets; please refer to NOAH for instructions.

- Download the prepared datasets, which are available from Google Drive.

After that, the file structure should look like:

    $ROOT/data
    |-- cifar
    |-- caltech101
    ......
    |-- diabetic_retinopathy
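Before training, it can help to confirm that the data folders are where the scripts expect them. The following is a minimal sketch, not part of this repository: `missing_datasets` and `EXPECTED` are hypothetical names, the `data` path is an assumption based on the layout above, and only the three folders named above are checked because the full list is elided.

```python
from pathlib import Path

# Only the dataset folders named in the layout above; the full list is elided there.
EXPECTED = ["cifar", "caltech101", "diabetic_retinopathy"]


def missing_datasets(data_root, expected=EXPECTED):
    """Return the expected dataset folders that are absent under data_root."""
    root = Path(data_root)
    return [name for name in expected if not (root / name).is_dir()]


if __name__ == "__main__":
    # "data" is an assumed location relative to the repository root ($ROOT/data).
    missing = missing_datasets("data")
    print("missing:", missing if missing else "none")
```

An empty result means every listed folder was found; extend `EXPECTED` with the remaining VTAB-1k folder names for a complete check.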

- Download the pretrained ViT-B/16 to `./ViT-B_16.npz`

- Search the hyper-parameter `s` for RepAdapter (optional)

      bash search_repblock.sh

- Train RepAdapter

      bash train_repblock.sh

- Test RepAdapter

      python test.py --method repblock --dataset <dataset-name>

The following is a simple example of using RepAdapter to load and train a model.

- Import the helper functions from repadapter.py

      from repadapter import set_repadapter, save_repadapter, load_repadapter

- Insert RepAdapter layers into all linear layers in the model

      set_repadapter(model=model)

If you need to train only specific linear layers, you can modify set_repadapter to use regular expressions to match specific names.
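When choosing a pattern, note that Python's `re.match` only matches at the beginning of a string, so a pattern for a nested layer has to cover the dotted name from its start or begin with a wildcard. The sketch below illustrates this with hypothetical module names; the names and the `select_names` helper are illustrative assumptions and are not taken from this repository.

```python
import re

# Hypothetical module names, in the dotted form produced by model.named_modules().
names = [
    "blocks.0.attn.qkv",
    "blocks.0.attn.proj",
    "blocks.0.mlp.fc1",
    "blocks.1.attn.qkv",
    "head",
]


def select_names(pattern, names):
    """Return the names accepted by re.match for the given pattern."""
    regex = re.compile(pattern)
    return [n for n in names if regex.match(n)]


# re.match is anchored at the start of the string, so a bare suffix selects nothing.
print(select_names(r"attn\.qkv", names))      # []
# Allowing an arbitrary prefix selects every qkv projection.
print(select_names(r".*attn\.qkv$", names))   # ['blocks.0.attn.qkv', 'blocks.1.attn.qkv']
# A prefix pattern restricts the selection to the first block.
print(select_names(r"blocks\.0\.", names))    # the three 'blocks.0.*' names
```

The same anchoring applies to the `regex.match(name)` call in the modified function that follows.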

    import re

    import torch.nn as nn

    def set_repadapter(model, pattern):
        # Compile the regular expression pattern
        regex = re.compile(pattern)

        for name, module in model.named_modules():
            # Only adapt linear layers whose name matches the pattern
            if isinstance(module, nn.Linear) and regex.match(name):
                ...  # insert RepAdapter into this layer, as in the original set_repadapter

- Set the requires_grad attribute of the model parameters

- Enable gradients only for the parameters you want to train. The example below trains the RepAdapter parameters and freezes everything else.

      trainable = []
      for n, p in model.named_parameters():
          if any(x in n for x in ['repadapter']):
              trainable.append(p)
              p.requires_grad = True
          else:
              p.requires_grad = False

- Save the checkpoint of RepAdapter

- After training is completed, generally only the repadapter parameters of the model are saved. This can save a significant amount of disk space, which is one of the advantages of using RepAdapter.

      import os

      save_repadapter(os.path.join(output_dir, "final.pt"), model=model)

- Load the checkpoint of RepAdapter

- To load a checkpoint that was saved after training, call set_repadapter on the model first so that the RepAdapter layers exist before loading.

      load_repadapter(load_path, model=model)

- Reparameterize the model

- merge_repadapter is used after model training to simplify the model structure, reducing the model size and inference time.

- merge_repadapter takes the model and the save path of the repadapter as inputs and performs reparameterization.
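To make the idea behind re-parameterization concrete, here is a minimal NumPy sketch under simplifying assumptions: the adapter is taken to be a purely linear, low-rank residual branch placed in front of a frozen linear layer. It is not the repository's `merge_repadapter` implementation, and all variable names are hypothetical. Because the adapter has no non-linearity, it can be folded into the layer's weight and bias.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_out, rank, s = 8, 4, 2, 0.5

# Frozen linear layer, PyTorch convention: y = x @ W.T + b
W = rng.normal(size=(d_out, d_in))
b = rng.normal(size=d_out)

# Assumed linear adapter in front of the layer:
#   h = x + s * (x @ A.T + a), with a low-rank A = U @ D
D = rng.normal(size=(rank, d_in))   # down-projection
U = rng.normal(size=(d_in, rank))   # up-projection
a = rng.normal(size=d_in)           # adapter bias
A = U @ D


def forward_with_adapter(x):
    """Adapter followed by the frozen linear layer (training-time structure)."""
    h = x + s * (x @ A.T + a)
    return h @ W.T + b


# Fold the adapter into the layer: W' = W (I + s A), b' = b + s W a
W_merged = W @ (np.eye(d_in) + s * A)
b_merged = b + s * (W @ a)


def forward_merged(x):
    """Single linear layer after merging (inference-time structure)."""
    return x @ W_merged.T + b_merged


x = rng.normal(size=(3, d_in))
assert np.allclose(forward_with_adapter(x), forward_merged(x))
```

The merged weight has the same shape as the original one, so under these assumptions the merged model has no extra parameters or layers at inference time.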

      merge_repadapter(model, load_path=None, has_loaded=False)

If this repository is helpful for your research, or you want to refer to the provided results in your paper, please consider citing:

    @article{luo2023towards,
      title={Towards Efficient Visual Adaption via Structural Re-parameterization},
      author={Luo, Gen and Huang, Minglang and Zhou, Yiyi and Sun, Xiaoshuai and Jiang, Guangnan and Wang, Zhiyu and Ji, Rongrong},
      journal={arXiv preprint arXiv:2302.08106},
      year={2023}
    }