MuseTalk: Real-Time High Quality Lip Synchronization with Latent Space Inpainting

This is unofficial training code for MuseTalk ("Real-Time High Quality Lip Synchronization with Latent Space Inpainting"). Most of the code comes from https://github.com/TMElyralab/MuseTalk. This project tries to implement the training code and adds some experiments on top of it.

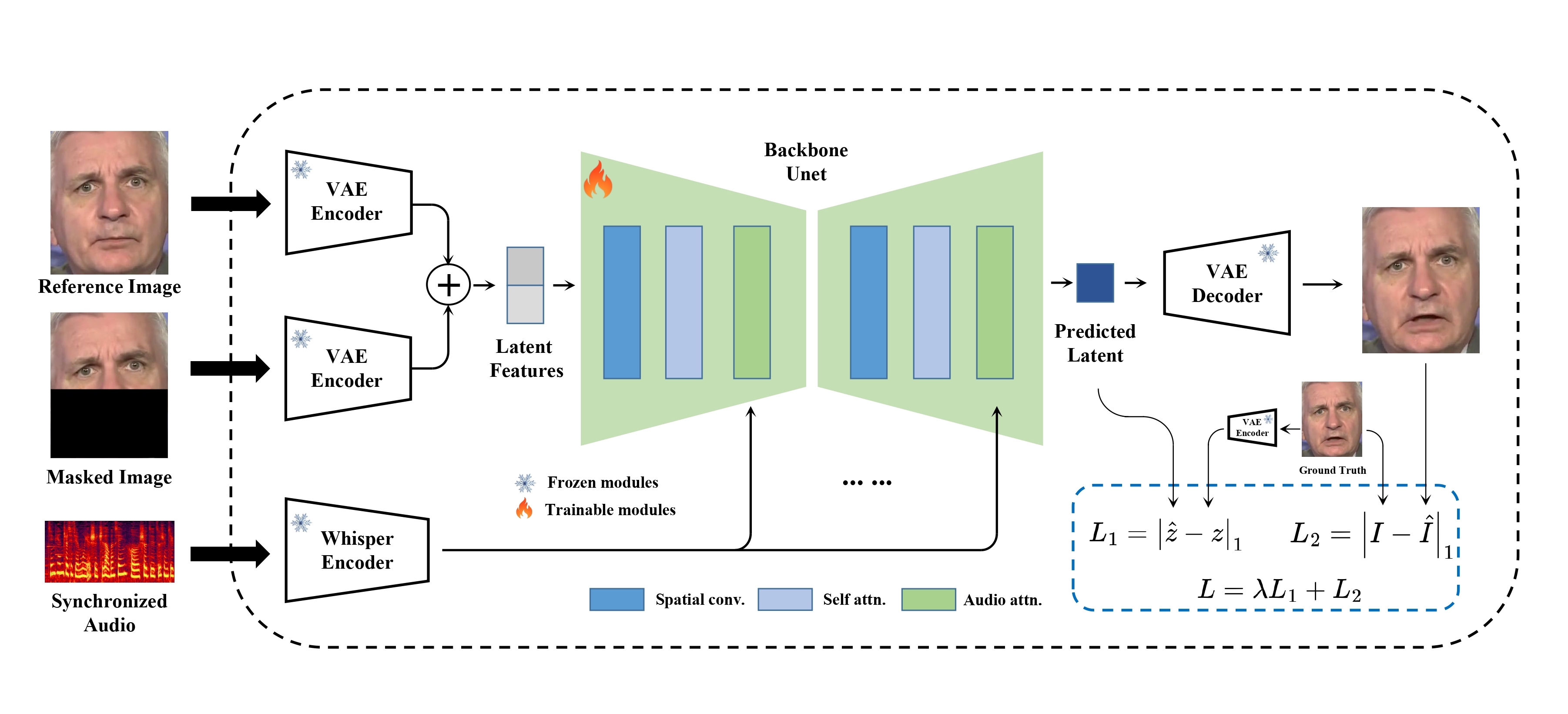

MuseTalk is a real-time, high-quality, audio-driven lip-syncing model trained in the latent space of ft-mse-vae.

MuseTalk was trained in latent space, where the images were encoded by a frozen VAE and the audio was encoded by a frozen whisper-tiny model. The architecture of the generation network was borrowed from the UNet of stable-diffusion-v1-4, where the audio embeddings are fused with the image embeddings by cross-attention.

Note that although we use an architecture very similar to that of Stable Diffusion, MuseTalk is distinct in that it is NOT a diffusion model. Instead, MuseTalk inpaints in the latent space in a single step.
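To make the contrast with iterative diffusion sampling concrete, here is a minimal sketch in plain Python. It is not the project's implementation: `single_step_inpaint`, `toy_unet`, and the list-based `Latent` type are hypothetical stand-ins, and concatenating the masked latent with a reference latent is an assumption about how the network input is built.

```python
from typing import Callable, List, Sequence

Latent = List[float]  # toy stand-in for a latent tensor


def single_step_inpaint(
    unet: Callable[[Latent, Sequence[float]], Latent],
    masked_latent: Latent,
    reference_latent: Latent,
    audio_embedding: Sequence[float],
) -> Latent:
    """Predict the inpainted latent with exactly one network call."""
    # Assumption: the masked and reference latents are concatenated into one input.
    unet_input = masked_latent + reference_latent
    # One forward pass: no noise schedule and no iterative denoising loop.
    return unet(unet_input, audio_embedding)


# Hypothetical toy network: counts its calls and conditions on the audio values.
calls = {"n": 0}


def toy_unet(x: Latent, audio: Sequence[float]) -> Latent:
    calls["n"] += 1
    half = len(x) // 2
    return [value + sum(audio) for value in x[:half]]


out = single_step_inpaint(toy_unet, [0.0, 0.0], [1.0, 2.0], [0.5])
```

A diffusion sampler would call the network once per denoising step; in this sketch the call counter ends at one.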

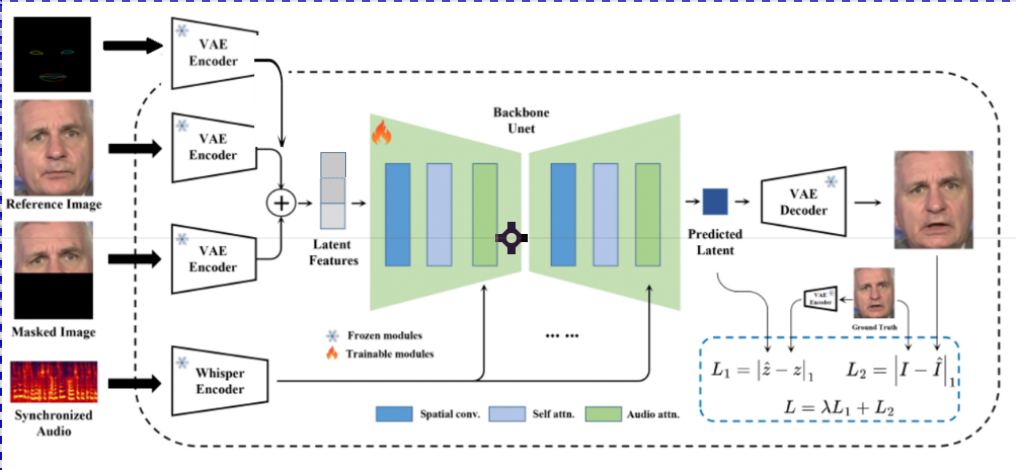

In our experiments, we found that when half of the face is masked and the nose falls within the masked area, the generated nose varies from frame to frame, which causes flickering in the video. We therefore try adding landmark control to the model and an LPIPS loss on the generated face.

To prepare the Python environment and install additional packages such as opencv, diffusers, mmcv, etc., please follow the steps below:

We recommend Python >=3.10 and CUDA 11.7. Then build the environment as follows:

pip install -r requirements.txt

pip install --no-cache-dir -U openmim

mim install mmengine

mim install "mmcv>=2.0.1"

mim install "mmdet>=3.1.0"

mim install "mmpose>=1.1.0"

Download ffmpeg-static and set FFMPEG_PATH to its location:

export FFMPEG_PATH=/path/to/ffmpeg

for example:

export FFMPEG_PATH=/musetalk/ffmpeg-4.4-amd64-static
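As a quick sanity check of the variable above, the following sketch shows one way a script could read FFMPEG_PATH. The helper names `ffmpeg_binary` and `prepend_to_path` are hypothetical, and it is an assumption that FFMPEG_PATH points to the extracted directory containing the `ffmpeg` executable.

```python
import os
from typing import Mapping, Optional


def ffmpeg_binary(env: Mapping[str, str], name: str = "ffmpeg") -> Optional[str]:
    """Return the expected ffmpeg executable path, or None if FFMPEG_PATH is unset."""
    root = env.get("FFMPEG_PATH")
    if not root:
        return None
    # Assumption: FFMPEG_PATH is the directory that holds the binary.
    return os.path.join(root, name)


def prepend_to_path(env: Mapping[str, str]) -> str:
    """Build a PATH value with FFMPEG_PATH placed first; env itself is not modified."""
    root = env.get("FFMPEG_PATH")
    current = env.get("PATH", "")
    if not root:
        return current
    return root + os.pathsep + current if current else root
```

For example, `ffmpeg_binary(os.environ)` returns `None` when the variable has not been exported, which is a cheap check to run before starting inference.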

You can download weights manually as follows:

- Download our trained weights.

- Download the weights of the other components (dwpose, face-parse-bisent, sd-vae-ft-mse, and whisper).

Finally, these weights should be organized in models as follows:

./models/
├── musetalk
│   ├── musetalk.json
│   └── pytorch_model.bin
├── dwpose
│   └── dw-ll_ucoco_384.pth
├── face-parse-bisent
│   ├── 79999_iter.pth
│   └── resnet18-5c106cde.pth
├── sd-vae-ft-mse
│   ├── config.json
│   └── diffusion_pytorch_model.bin
└── whisper
    └── tiny.pt
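Since a missing file usually only surfaces as an error at load time, a small check of the layout above can save a failed run. The sketch below is not part of the project; `missing_weights` is a hypothetical helper, and the file list is copied from the tree above.

```python
from pathlib import Path
from typing import List

# Relative paths taken from the directory tree above.
EXPECTED_WEIGHTS = [
    "musetalk/musetalk.json",
    "musetalk/pytorch_model.bin",
    "dwpose/dw-ll_ucoco_384.pth",
    "face-parse-bisent/79999_iter.pth",
    "face-parse-bisent/resnet18-5c106cde.pth",
    "sd-vae-ft-mse/config.json",
    "sd-vae-ft-mse/diffusion_pytorch_model.bin",
    "whisper/tiny.pt",
]


def missing_weights(models_dir: str = "./models") -> List[str]:
    """Return the expected weight files that are not present under models_dir."""
    root = Path(models_dir)
    return [rel for rel in EXPECTED_WEIGHTS if not (root / rel).is_file()]


if __name__ == "__main__":
    for rel in missing_weights():
        print("missing:", rel)
```

Running it from the repository root prints one line per absent file and nothing when the layout is complete.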

Here, we provide the inference script.

python -m scripts.inference --inference_config configs/inference/test.yaml

configs/inference/test.yaml is the path to the inference configuration file, including video_path and audio_path. The video_path should be either a video file, an image file or a directory of images.
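The three accepted kinds of video_path can be told apart before inference starts. The following is a hedged sketch rather than the project's own logic: `classify_video_path` and `validate_task` are hypothetical helpers, the extension sets are assumptions, and a task is modelled as a plain dict with the two keys named above.

```python
import os
from typing import Mapping

# Assumed extension sets; the project may accept others.
VIDEO_EXTS = {".mp4", ".mov", ".avi", ".mkv"}
IMAGE_EXTS = {".png", ".jpg", ".jpeg"}


def classify_video_path(path: str) -> str:
    """Classify video_path as 'image_dir', 'video', or 'image'."""
    if os.path.isdir(path):
        return "image_dir"
    ext = os.path.splitext(path)[1].lower()
    if ext in VIDEO_EXTS:
        return "video"
    if ext in IMAGE_EXTS:
        return "image"
    raise ValueError(f"unsupported video_path: {path!r}")


def validate_task(task: Mapping[str, str]) -> str:
    """Check that both required keys exist and return the kind of video_path."""
    for key in ("video_path", "audio_path"):
        if key not in task:
            raise KeyError(f"missing config key: {key}")
    return classify_video_path(task["video_path"])
```

For instance, `validate_task({"video_path": "clip.mp4", "audio_path": "data/audio/yongen.wav"})` classifies the input as a video, where `clip.mp4` is a placeholder name.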

We recommend input video at 25fps, the frame rate used when training the model. If your video is well below 25fps, we recommend applying frame interpolation or converting the video to 25fps directly with ffmpeg.
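For the direct conversion route, one possible ffmpeg invocation uses the `fps` video filter, which duplicates or drops frames to reach a constant rate. The sketch below only builds the argument list; `build_fps_command` is a hypothetical helper, and the file names are placeholders.

```python
from typing import List


def build_fps_command(
    src: str, dst: str, fps: int = 25, ffmpeg: str = "ffmpeg"
) -> List[str]:
    """Build an ffmpeg command that re-times src to a constant frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    # -y overwrites dst if it exists; the fps filter duplicates or drops frames.
    return [ffmpeg, "-y", "-i", src, "-filter:v", f"fps={fps}", dst]


cmd = build_fps_command("input.mp4", "input_25fps.mp4")
```

The list can be passed to `subprocess.run(cmd, check=True)`. Note that this does not synthesize new in-between frames, so frame interpolation may still look smoother for very low frame rates.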

🔎 We have found that the upper bound of the mask has an important impact on mouth openness. Thus, to control the mask region, we suggest using the bbox_shift parameter. Positive values (moving towards the lower half) increase mouth openness, while negative values (moving towards the upper half) decrease mouth openness.

You can start by running with the default configuration to obtain the adjustable value range, and then re-run the script within this range.

For example, in the case of Xinying Sun, running the default configuration shows that the adjustable value range is [-9, 9]. Then, to decrease the mouth openness, we set the value to -7.

python -m scripts.inference --inference_config configs/inference/test.yaml --bbox_shift -7
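The effect of bbox_shift on the mask region can be pictured with a small sketch. This is an illustration under stated assumptions, not the project's code: `apply_bbox_shift` is a hypothetical helper, the box is given as (x1, y1, x2, y2) in image coordinates where y grows downward, and the shift is assumed to move only the upper bound by that many pixels.

```python
from typing import Tuple

BBox = Tuple[int, int, int, int]  # (x1, y1, x2, y2); y grows downward


def apply_bbox_shift(
    bbox: BBox, bbox_shift: int, value_range: Tuple[int, int]
) -> BBox:
    """Move the upper bound of the box by bbox_shift, within the reported range."""
    low, high = value_range
    if not low <= bbox_shift <= high:
        raise ValueError(f"bbox_shift {bbox_shift} is outside [{low}, {high}]")
    x1, y1, x2, y2 = bbox
    # Positive values push the upper bound towards the lower half of the face.
    return (x1, y1 + bbox_shift, x2, y2)


shifted = apply_bbox_shift((10, 40, 110, 140), -7, (-9, 9))
```

With the range [-9, 9] from the example above, a value of -7 raises the upper bound by 7 pixels, while a value such as 12 is rejected.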

📌 More technical details can be found in bbox_shift.

As a complete solution for virtual human generation, we suggest first using MuseV to generate a video (text-to-video, image-to-video, or pose-to-video), following the MuseV documentation. Frame interpolation is suggested to increase the frame rate. Then, you can use MuseTalk to generate a lip-synced video as described in the inference instructions above.

Here, we provide the real-time inference script. This script first applies the necessary pre-processing, such as face detection, face parsing, and VAE encoding, in advance. During inference, only the UNet and the VAE decoder are involved, which makes MuseTalk real-time.

python -m scripts.realtime_inference --inference_config configs/inference/realtime.yaml --batch_size 4

configs/inference/realtime.yaml is the path to the real-time inference configuration file, including preparation, video_path, bbox_shift and audio_clips.

- Set preparation to True in realtime.yaml to prepare the materials for a new avatar. (If the bbox_shift has changed, you also need to re-prepare the materials.)

- After that, the avatar will use an audio clip selected from audio_clips to generate video.

  Inferring using: data/audio/yongen.wav

- While MuseTalk is inferring, sub-threads can simultaneously stream the results to the users. The generation process can achieve 30fps+ on an NVIDIA Tesla V100.

- Set preparation to False and run this script if you want to generate more videos using the same avatar.

- If you want to generate multiple videos using the same avatar/video, you can also use this script to SIGNIFICANTLY expedite the generation process.

- In the previous script, the generation time is also limited by I/O (e.g. saving images). If you just want to test the generation speed without saving the images, you can run

python -m scripts.realtime_inference --inference_config configs/inference/realtime.yaml --skip_save_images
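The idea of streaming results from a sub-thread while inference continues can be sketched with a standard producer/consumer queue. This is a minimal illustration, not the project's implementation: `generate_and_stream`, `infer`, and `sink` are hypothetical names, and frames are represented by strings.

```python
import queue
import threading
from typing import Callable, Iterable, List

_DONE = object()  # sentinel that tells the consumer thread to stop


def generate_and_stream(
    batches: Iterable[List[int]],
    infer: Callable[[List[int]], List[str]],
    sink: Callable[[str], None],
) -> None:
    """Run inference batch by batch while a sub-thread delivers finished frames."""
    frames: queue.Queue = queue.Queue()

    def consumer() -> None:
        while True:
            item = frames.get()
            if item is _DONE:
                break
            sink(item)  # e.g. save the frame or send it to a client

    worker = threading.Thread(target=consumer)
    worker.start()
    try:
        for batch in batches:
            for frame in infer(batch):
                frames.put(frame)
    finally:
        # Always release the consumer, even if inference raises.
        frames.put(_DONE)
        worker.join()


received: List[str] = []
generate_and_stream(
    [[0, 1, 2, 3], [4, 5, 6, 7]],            # two batches of size 4
    lambda batch: [f"frame{i}" for i in batch],
    received.append,
)
```

Because the slow part of delivery (I/O such as saving images) runs in the consumer, the main loop can move on to the next batch as soon as the current frames are queued. Skipping the sink work entirely corresponds to measuring generation speed alone.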

- We thank open-source components like whisper, dwpose, face-alignment, face-parsing, S3FD.

- MuseTalk draws heavily on diffusers and isaacOnline/whisper.

- MuseTalk has been built on HDTF datasets.