PyTorch code for the ICCV 2023 paper "SLCA: Slow Learner with Classifier Alignment for Continual Learning on a Pre-trained Model".

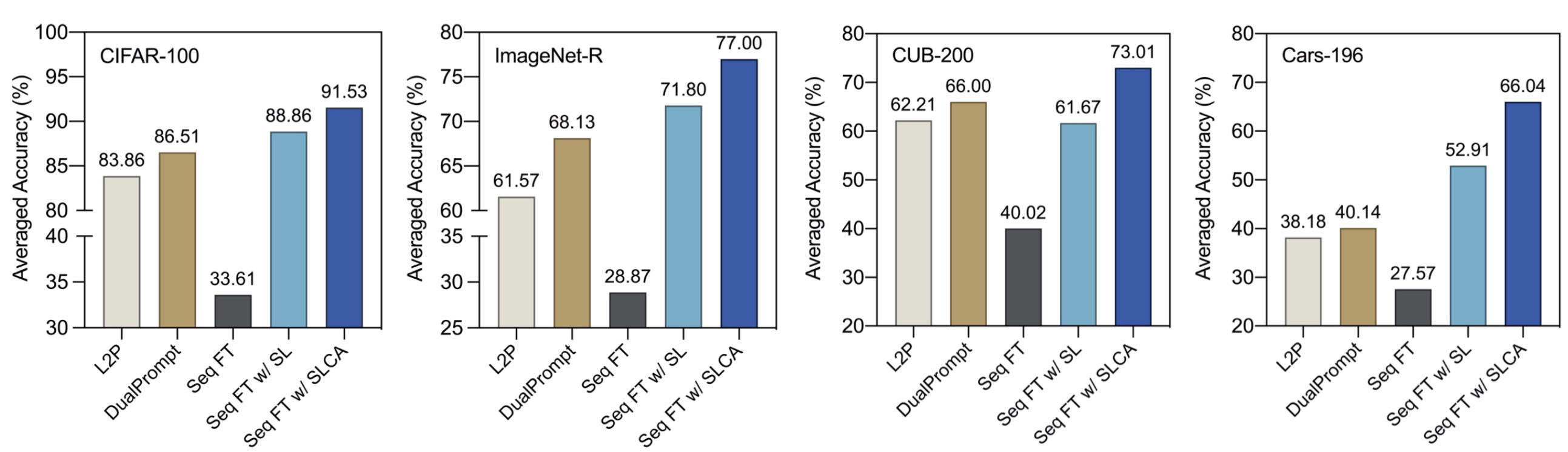

In this work, we present an extensive analysis of continual learning on a pre-trained model (CLPM) and attribute its key challenge to a progressive overfitting problem. Observing that selectively reducing the learning rate can almost resolve this issue in the representation layer, we propose a simple but extremely effective approach named Slow Learner with Classifier Alignment (SLCA), which further improves the classification layer by modelling the class-wise distributions and aligning the classification layers in a post-hoc fashion. Across a variety of scenarios, our proposal provides substantial improvements for CLPM (e.g., up to 49.76%, 50.05%, 44.69% and 40.16% on Split CIFAR-100, Split ImageNet-R, Split CUB-200 and Split Cars-196, respectively) and thus outperforms state-of-the-art approaches by a large margin. Building on this strong baseline, we analyze critical factors and promising directions in depth to facilitate subsequent research.
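The two ingredients named above can be illustrated with a minimal NumPy sketch. It is not the repository's implementation: the learning-rate values, the choice of a per-class Gaussian (mean plus covariance) to model each class-wise distribution, the covariance jitter, the linear softmax head and the synthetic 2-D features are all assumptions made for illustration, and the helper names (`sgd_step`, `class_statistics`, `sample_pseudo_features`, `align_classifier`) are hypothetical. The slow learner is shown as per-group step sizes (in PyTorch, optimizer parameter groups with different `lr` values provide this); classifier alignment retrains the head post hoc on features sampled from stored class statistics instead of raw data.

```python
import numpy as np

# Assumption: illustrative step sizes, not values taken from the SLCA configs.
# The pre-trained backbone is updated far more slowly than the classifier.
LR_BACKBONE = 1e-4
LR_CLASSIFIER = 1e-2


def sgd_step(params, grads, lrs):
    """One plain SGD step in which every parameter group has its own learning rate."""
    return {name: params[name] - lrs[name] * grads[name] for name in params}


def class_statistics(features, labels, jitter=1e-4):
    """Assumption: model each class as a Gaussian (mean, covariance) over its features."""
    stats = {}
    for c in np.unique(labels):
        feats_c = features[labels == c]
        mean = feats_c.mean(axis=0)
        # Small diagonal jitter keeps the covariance positive definite.
        cov = np.cov(feats_c, rowvar=False) + jitter * np.eye(features.shape[1])
        stats[int(c)] = (mean, cov)
    return stats


def sample_pseudo_features(stats, n_per_class, rng):
    """Draw synthetic features for every seen class from its stored Gaussian."""
    xs, ys = [], []
    for c, (mean, cov) in stats.items():
        xs.append(rng.multivariate_normal(mean, cov, size=n_per_class))
        ys.append(np.full(n_per_class, c))
    return np.concatenate(xs), np.concatenate(ys)


def align_classifier(W, b, stats, n_per_class=64, steps=200, lr=0.1, seed=0):
    """Post-hoc alignment: retrain a linear softmax head on sampled features only."""
    rng = np.random.default_rng(seed)
    for _ in range(steps):
        x, y = sample_pseudo_features(stats, n_per_class, rng)
        logits = x @ W + b
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        # Gradient of the mean cross-entropy w.r.t. the logits: softmax - one_hot.
        probs[np.arange(len(y)), y] -= 1.0
        probs /= len(y)
        W = W - lr * (x.T @ probs)
        b = b - lr * probs.sum(axis=0)
    return W, b


# Slow learner: the same gradient moves the backbone 100x less than the head.
params = {"backbone": np.ones(3), "classifier": np.ones(3)}
grads = {"backbone": np.ones(3), "classifier": np.ones(3)}
new_params = sgd_step(params, grads, {"backbone": LR_BACKBONE, "classifier": LR_CLASSIFIER})

# Classifier alignment on three synthetic, well-separated 2-D feature clusters.
rng = np.random.default_rng(42)
angles = np.array([0.0, 2.0, 4.0]) * np.pi / 3
means = 4.0 * np.stack([np.cos(angles), np.sin(angles)], axis=1)
feats = np.concatenate([rng.normal(m, 0.5, size=(100, 2)) for m in means])
labels = np.repeat(np.arange(3), 100)
W, b = align_classifier(np.zeros((2, 3)), np.zeros(3), class_statistics(feats, labels))
accuracy = np.mean((feats @ W + b).argmax(axis=1) == labels)
print(f"accuracy on the real features: {accuracy:.2f}")
```

Because only per-class summary statistics are kept, the alignment step in this sketch can revisit every seen class without replaying stored images; the trade-off is that each class is approximated by a single Gaussian.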

Requirements:

- torch==1.12.0
- torchvision==0.13.0
- timm==0.5.4
- tqdm
- numpy
- scipy
- quadprog
- POT

Please download the pre-trained ViT-Base models from MoCo v3 and ImageNet-21K, then put or link them into `SLCA/pretrained`.

This repo is heavily based on PyCIL; many thanks to its authors.

If you find our code or paper useful, please consider giving us a star or citing:

    @inproceedings{zhang2023slca,
      title={SLCA: Slow Learner with Classifier Alignment for Continual Learning on a Pre-trained Model},
      author={Zhang, Gengwei and Wang, Liyuan and Kang, Guoliang and Chen, Ling and Wei, Yunchao},
      booktitle={Proceedings of the IEEE/CVF International Conference on Computer Vision},
      year={2023}
    }