Extract from Hugging Face:

Automatic speech recognition (ASR) converts a speech signal to text. It is an example of a sequence-to-sequence task, going from a sequence of audio inputs to textual outputs. Voice assistants like Siri and Alexa utilize ASR models to assist users.

This guide is a quick step-by-step tutorial on how to create an end-to-end Automatic Speech Recognition (ASR) solution for long-form audio, e.g. podcasts, videos and audiobooks.

- Intro to Automatic Speech Recognition on 🤗

- Robust Speech Challenge Results on 🤗

- Mozilla Common Voice 9.0

- Thunder-speech, A Hackable speech recognition library

- SpeechBrain - PyTorch powered speech toolkit

- Neural building blocks for speaker diarization: speech activity detection, speaker change detection, overlapped speech detection, speaker embedding

- SPEECH RECOGNITION WITH WAV2VEC2

- How to add timestamps to ASR output

- Host Hugging Face transformer models using Amazon SageMaker Serverless Inference

- A SageMaker Studio Lab account. See this explainer video to learn more.

- Python>=3.7

- PyTorch>=1.10

- Hugging Face Transformers

- Several audio processing libraries (see environment.yml)
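A quick way to confirm that an environment meets the requirements listed above is to check the interpreter version and see which packages can be imported. This is only a minimal sketch: the helper name `missing_packages` and the package list are illustrative assumptions, and environment.yml remains the authoritative list of dependencies.

```python
import importlib.util
import sys


def missing_packages(names):
    """Return the subset of `names` that cannot be imported in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# Hypothetical list based on the requirements above; adjust it to match environment.yml.
REQUIRED = ["torch", "transformers", "librosa", "pydub"]

if sys.version_info < (3, 7):
    print("Python 3.7 or newer is required")
print("Missing packages:", missing_packages(REQUIRED))
```

An empty list means every package in `REQUIRED` can be found; it does not verify package versions such as PyTorch>=1.10.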

Follow the steps shown in example_w_HuggingFace.ipynb. Click on Copy to project in the top right corner. This will open the Studio Lab web interface and ask whether you want to clone the entire repo or just the notebook. Clone the entire repo and click Yes when asked about building the Conda environment automatically. You will then be running on a Python environment with Streamlit and Gradio already installed, along with other libraries.

We first download the audio track of the YouTube video that we want to transcribe:

    from os.path import exists as path_exists

    YouTubeID = 'YOUR_YOUTUBE_ID'
    OutputFile = 'YOUR_AUDIO_FILE.m4a'

    # Download the audio track only if it is not already on disk
    # (the leading "!" runs a shell command from the notebook).
    if not path_exists(OutputFile):
        !youtube-dl -o $OutputFile $YouTubeID --extract-audio --restrict-filenames -f 'bestaudio[ext=m4a]'

Transcribe the whole file with a Transformers pipeline, letting the pipeline chunk the long audio:

    import librosa
    from transformers import pipeline

    # Assumption: model_name is not defined in the original snippet; the
    # checkpoint cited at the end of this guide is used here.
    model_name = 'jonatasgrosman/wav2vec2-xls-r-1b-spanish'
    pipe = pipeline(model=model_name)

    speech, sample_rate = librosa.load(OutputFile, sr=16000)  # resample to 16 kHz
    transcript = pipe(speech, chunk_length_s=10, stride_length_s=(4, 2))

Alternatively, resample the audio with pydub and transcribe fixed-length chunks:

    import array
    import numpy as np
    from pydub import AudioSegment
    from pydub.utils import get_array_type

    def audio_resampler(sound, sample_rate=16000):
        # Resample, keep the left channel and return its samples as float64.
        sound = sound.set_frame_rate(sample_rate)
        left = sound.split_to_mono()[0]
        bit_depth = left.sample_width * 8
        array_type = get_array_type(bit_depth)
        numeric_array = np.array(array.array(array_type, left._data))
        return np.asarray(numeric_array, dtype=np.double), sample_rate

    pydub_speech = AudioSegment.from_file(OutputFile)
    speech, sample_rate = audio_resampler(pydub_speech)

    # Split into chunks of roughly 30 seconds (at least one chunk) and
    # transcribe only the first two, i.e. about the first minute.
    n_chunks = max(1, int(len(speech) / sample_rate / 30))
    transcript = ''
    for chunk in np.array_split(speech, n_chunks)[:2]:
        output = pipe(chunk)
        transcript = transcript + ' ' + output['text']
        print(output)
    transcript = transcript.strip()

Another option is to split the audio at silent sections with librosa:

    import librosa

    speech, sample_rate = librosa.load(OutputFile, sr=16000)
    # (start, end) sample intervals that are less than 50 dB below the peak
    non_mute_sections_in_speech = librosa.effects.split(speech, top_db=50)

    transcript = ''
    for chunk in non_mute_sections_in_speech[:6]:  # first six non-silent sections only
        speech_chunk = speech[chunk[0]:chunk[1]]
        output = pipe(speech_chunk)
        transcript = transcript + ' ' + output['text']
        print(output)
    transcript = transcript.strip()

For comparison, fetch the transcript that YouTube generated for the same video:

    from youtube_transcript_api import YouTubeTranscriptApi

    transcript = YouTubeTranscriptApi.get_transcript(YouTubeID, languages=['es'])
    transcript_from_YouTube = ' '.join([i['text'] for i in transcript])
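The pipeline call in this guide passes `chunk_length_s=10` and `stride_length_s=(4, 2)`. The idea is that each chunk overlaps its neighbours, and only the predictions from the non-overlapping middle of each chunk are kept. The sketch below illustrates that windowing in plain Python. It is a simplified illustration, not the Transformers implementation, and the function name `chunk_windows` and its return format are assumptions made for this example.

```python
def chunk_windows(n_samples, sample_rate, chunk_length_s, stride_length_s):
    """Return (start, end, keep_start, keep_end) sample indices for each chunk.

    start/end delimit the audio fed to the model; keep_start/keep_end delimit
    the part whose prediction is kept once the overlapping strides are dropped.
    """
    chunk_len = int(chunk_length_s * sample_rate)
    stride_left = int(stride_length_s[0] * sample_rate)
    stride_right = int(stride_length_s[1] * sample_rate)
    step = chunk_len - stride_left - stride_right
    if step <= 0:
        raise ValueError("chunk length must exceed the sum of both strides")

    windows = []
    for start in range(0, n_samples, step):
        end = min(start + chunk_len, n_samples)
        is_first = start == 0
        is_last = end == n_samples
        # The first chunk has no left context and the last has no right context.
        keep_start = start if is_first else start + stride_left
        keep_end = end if is_last else end - stride_right
        windows.append((start, end, keep_start, keep_end))
        if is_last:
            break
    return windows


# 20 seconds of audio at 16 kHz, 10-second chunks, 4 s left / 2 s right stride.
for window in chunk_windows(20 * 16000, 16000, 10, (4, 2)):
    print([index / 16000 for index in window])
```

For the 20-second example, the kept regions are 0 to 8 s, 8 to 12 s, 12 to 16 s and 16 to 20 s, so together they cover the audio exactly once while every chunk still sees extra context on each side.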

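Finally, display the differences between the two transcripts:

    from utils import *  # helper module from the repository; expected to provide html_diffs
    from IPython.display import HTML, display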

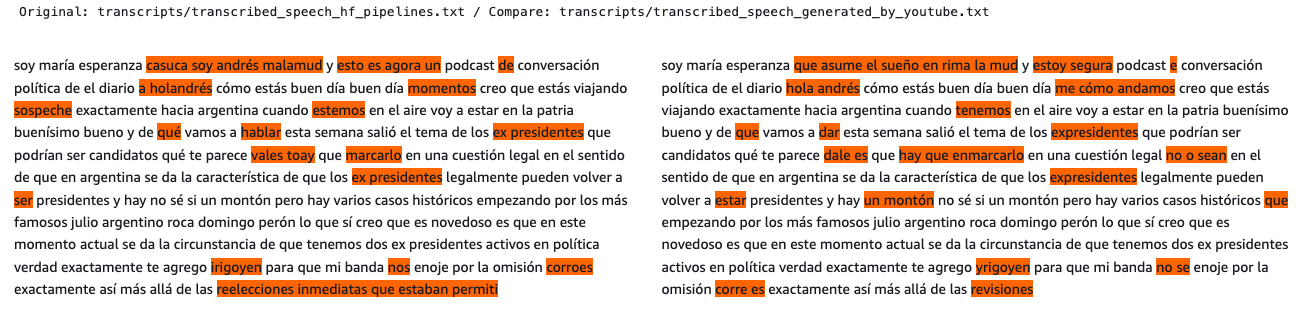
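The `html_diffs` helper called in this guide comes from the repository's `utils` module and is not shown here, so its actual implementation is unknown. The following is a hypothetical stand-in, named `simple_html_diffs` to avoid confusion with the real helper, that marks word-level differences using Python's standard `difflib`. The choice of `<del>` and `<ins>` tags is an assumption for illustration.

```python
import difflib
import html


def simple_html_diffs(a, b):
    """Return an HTML string highlighting word-level differences between a and b."""
    words_a, words_b = a.split(), b.split()
    matcher = difflib.SequenceMatcher(a=words_a, b=words_b, autojunk=False)
    parts = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        old = html.escape(" ".join(words_a[i1:i2]))
        new = html.escape(" ".join(words_b[j1:j2]))
        if tag == "equal":
            parts.append(old)
        if tag in ("delete", "replace"):
            parts.append(f"<del>{old}</del>")  # words only in the first text
        if tag in ("insert", "replace"):
            parts.append(f"<ins>{new}</ins>")  # words only in the second text
    return " ".join(parts)


print(simple_html_diffs("hola a todos", "hola a todas"))
```

In a notebook, the returned string can be rendered with `display(HTML(...))` in the same way as the output of the repository's helper.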

    base = "transcripts/transcribed_speech_hf_pipelines.txt"
    compare = "transcripts/transcribed_speech_generated_by_youtube.txt"

    # Compare the first 1,000 characters of the first line of each transcript.
    with open(base, 'r') as f:
        a = f.readline()[:1000]
    with open(compare, 'r') as f:
        b = f.readline()[:1000]

    print(f'Original: {base} / Compare: {compare}')
    display(HTML(html_diffs(a, b)))

- Pyctcdecode & Speech2text decoding

- XLS-R: Large-Scale Cross-lingual Speech Representation Learning on 128 Languages

- Unlocking global speech with Mozilla Common Voice

Citation for the XLS-R Wav2Vec2 Spanish model:

    @misc{grosman2022wav2vec2-xls-r-1b-spanish,
      title={XLS-R Wav2Vec2 Spanish by Jonatas Grosman},
      author={Grosman, Jonatas},
      publisher={Hugging Face},
      journal={Hugging Face Hub},
      howpublished={\url{https://huggingface.co/jonatasgrosman/wav2vec2-xls-r-1b-spanish}},
      year={2022}
    }

- The content provided in this repository is for demonstration purposes and is not meant for production. You should use your own discretion when using the content.

- The ideas and opinions outlined in these examples are my own and do not represent the opinions of AWS.