Tales from the Crypto - Natural Language Processing

There has been a lot of hype in the news lately about cryptocurrency, so we would like to take stock, so to speak, of the latest news headlines regarding Bitcoin and Ethereum to get a better feel for the current public sentiment around each coin.

This project uses fundamental NLP techniques to gauge the sentiment of the latest news articles featuring Bitcoin and Ethereum. It also looks at other factors that may be related to coin prices, such as common words and phrases and the organizations and entities mentioned in the articles.

1. Sentiment Analysis

- Use of VADER Sentiment Analysis

```python
from nltk.sentiment.vader import SentimentIntensityAnalyzer

analyzer = SentimentIntensityAnalyzer()
```

2. Natural Language Processing

- Natural Language Toolkit (NLTK)
- Tokenizing words and sentences with NLTK
- Generating N-grams
- Word clouds

Sentiment Analysis

1. Use of newsapi to pull the latest news articles for Bitcoin and Ethereum

```python
btc_articles = newsapi.get_everything(
    q='bitcoin',
    language='en',
    sort_by='relevancy',
)
```

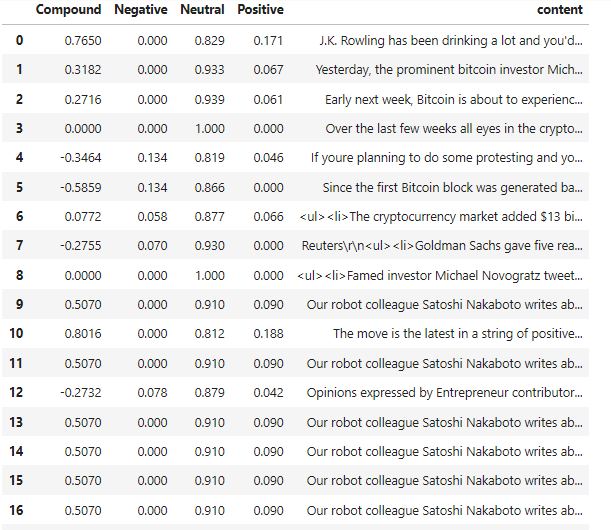

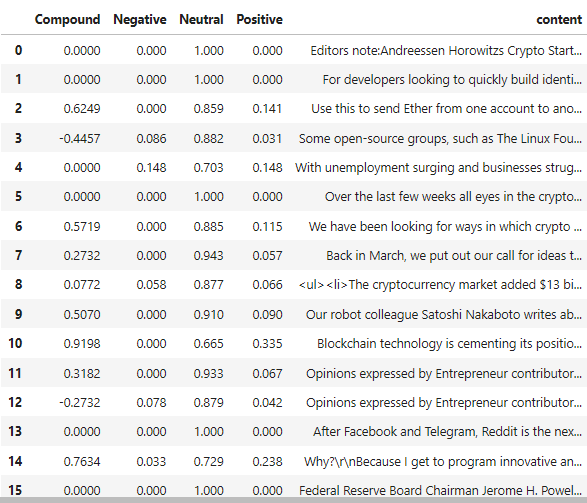

2. Creation of Dataframe of Sentiment Scores for each coin

| Bitcoin | Ethereum |
|---|---|
|  |  |

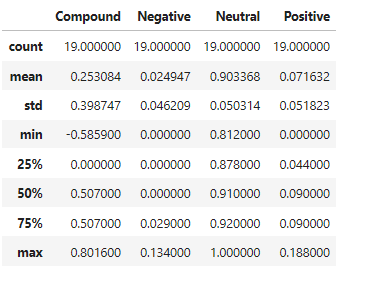

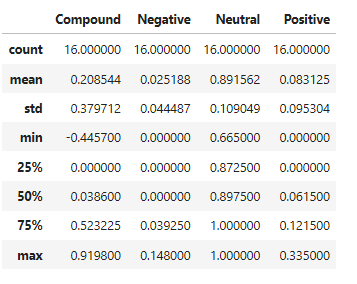
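A minimal sketch of how the per-article scores in this step could be assembled. It assumes each article is a dict with a `content` field (as in the `articles` list of a News API response) and that `score_fn` behaves like VADER's `analyzer.polarity_scores`, returning `compound`, `pos`, `neu`, and `neg` values. The helper name `build_sentiment_rows` and the stand-in scorer `fake_scores` are hypothetical, used only so the example runs without NLTK data.

```python
def build_sentiment_rows(articles, score_fn):
    """Return one row of sentiment scores per article that has text."""
    rows = []
    for article in articles:
        text = article.get("content")
        if not text:
            # Skip articles with no body text (None or empty string).
            continue
        scores = score_fn(text)
        rows.append({
            "text": text,
            "compound": scores["compound"],
            "positive": scores["pos"],
            "negative": scores["neg"],
            "neutral": scores["neu"],
        })
    return rows


def fake_scores(text):
    """Hypothetical stand-in for analyzer.polarity_scores (not real VADER output)."""
    return {"compound": 0.0, "pos": 0.0, "neu": 1.0, "neg": 0.0}


sample_articles = [
    {"content": "Bitcoin price moves sideways."},
    {"content": None},
]
rows = build_sentiment_rows(sample_articles, fake_scores)
print(rows)
```

With the real analyzer, `build_sentiment_rows(btc_articles["articles"], analyzer.polarity_scores)` would produce rows that can be passed to `pd.DataFrame(rows)`.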

3. Descriptive statistics

| Bitcoin | Ethereum |
|---|---|
|  |  |

- Which coin had the highest mean positive score?

  Bitcoin (0.07)

- Which coin had the highest negative score?

  Ethereum (0.025)

- Which coin had the highest positive score?

  Ethereum (0.9198)
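These questions come down to simple summary statistics over the score columns. Here is a sketch using only the standard library, with made-up placeholder scores (not the values from this analysis) and a hypothetical helper `summarize`:

```python
from statistics import mean


def summarize(scores):
    """Return the mean and maximum of a list of scores."""
    return {"mean": mean(scores), "max": max(scores)}


# Placeholder values for illustration only; not the project's data.
positive_scores = {
    "Bitcoin": [0.25, 0.25, 0.5],
    "Ethereum": [0.0, 0.0, 0.75],
}

summary = {coin: summarize(vals) for coin, vals in positive_scores.items()}

# Pick the coin with the largest mean and the coin with the largest single score.
highest_mean = max(summary, key=lambda coin: summary[coin]["mean"])
highest_max = max(summary, key=lambda coin: summary[coin]["max"])
print(highest_mean, highest_max)
```

With the sentiment DataFrames, `btc_sentiment_df.describe()` reports the same mean and max figures for every numeric column at once.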

Natural Language Processing

1. Import the following from NLTK, along with `string` and `re` from the standard library:

```python
from nltk.tokenize import word_tokenize, sent_tokenize
from nltk.corpus import stopwords
from nltk.stem import WordNetLemmatizer, PorterStemmer
from string import punctuation
import re
```

2. Use NLTK and Python to tokenize the text for each coin

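- Remove punctuation

```python
regex = re.compile("[^a-zA-Z0-9 ]")
re_clean = regex.sub('', text)
```

- Lowercase each word

```python
words = word_tokenize(re_clean.lower())
```

- Remove stop words

```python
sw = set(stopwords.words('english'))
# Keep only the words that are not in the stop-word set
words = [word for word in words if word not in sw]
```

- Lemmatize words into root words

```python
lemmatizer = WordNetLemmatizer()
lem = [lemmatizer.lemmatize(word) for word in words]
```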

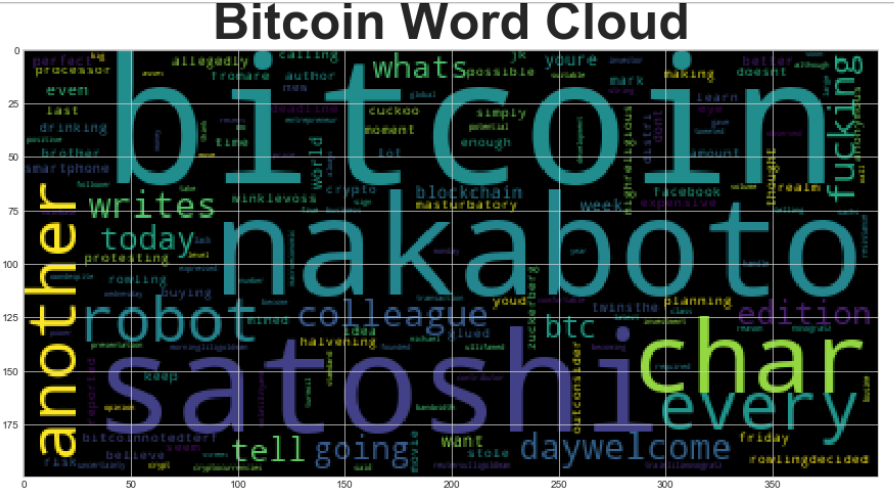

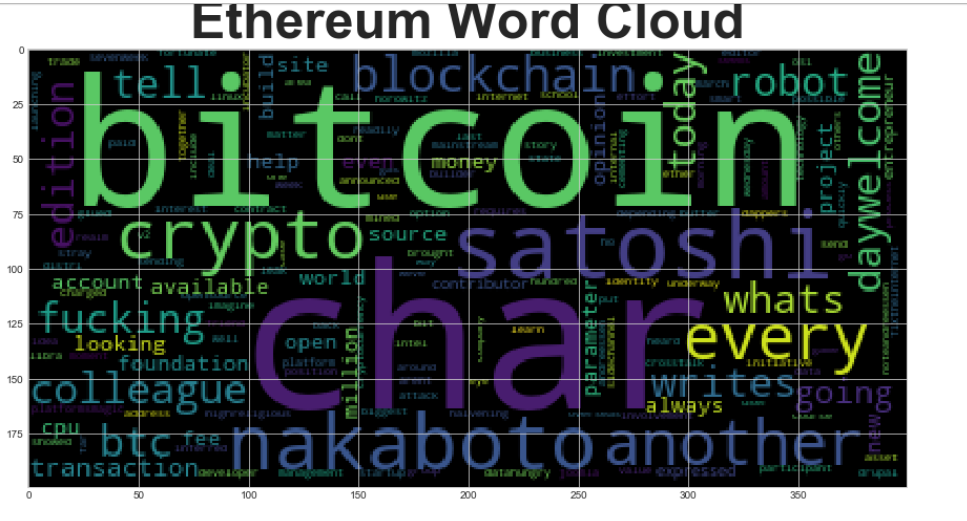

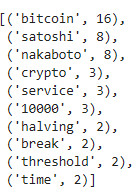

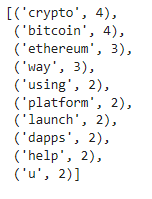
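The four tokenizing steps can be combined into a single function. The sketch below is dependency-free so that it runs as-is: it swaps `word_tokenize` for `str.split`, and it takes the stop-word set and the lemmatizing function as parameters, on the assumption that `set(stopwords.words('english'))` and `WordNetLemmatizer().lemmatize` would be passed in for real use. The name `tokenizer` and the tiny stop-word set are illustrative only.

```python
import re

NON_ALNUM = re.compile("[^a-zA-Z0-9 ]")


def tokenizer(text, stop_words, lemmatize=lambda word: word):
    """Clean, lowercase, split, lemmatize, and filter stop words from text."""
    cleaned = NON_ALNUM.sub("", text)        # remove punctuation
    words = cleaned.lower().split()          # lowercase and split on whitespace
    lemmas = [lemmatize(word) for word in words]
    return [word for word in lemmas if word not in stop_words]


demo_stop_words = {"the", "is", "a", "of"}   # illustrative subset, not NLTK's list
tokens = tokenizer("The price of Bitcoin is rising!", demo_stop_words)
print(tokens)  # ['price', 'bitcoin', 'rising']
```

Passing the lemmatizer in as a parameter keeps the function easy to test, and makes it simple to compare lemmatizing against stemming by swapping in `PorterStemmer().stem`.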

3. Look at the ngrams and word frequency for each coin

- Use NLTK to produce the ngrams for N = 2

```python
from collections import Counter
from nltk import ngrams


def get_token(df):
    """Flatten the per-article token lists in df['tokens'] into one list."""
    tokens = []
    for i in df['tokens']:
        tokens.extend(i)
    return tokens


btc_tokens = get_token(btc_sentiment_df)
eth_tokens = get_token(eth_sentiment_df)


# Generate the Bitcoin N-grams where N=2
def bigram_counter(tokens, N):
    words_count = dict(Counter(ngrams(tokens, n=N)))
    return words_count


bigram_btc = bigram_counter(btc_tokens, 2)
```

- List the top 10 words for each coin

```python
# Use the token_count function to generate the top 10 words from each coin
def token_count(tokens, N=10):
    """Returns the top N tokens from the frequency count"""
    return Counter(tokens).most_common(N)
```

| Bitcoin | Ethereum |
|---|---|
|  |  |

- Generate word clouds to summarize the news for each coin.

```python
from wordcloud import WordCloud
import matplotlib.pyplot as plt
import matplotlib as mpl

# Note: on matplotlib 3.6 and later this style is named 'seaborn-v0_8-whitegrid'
plt.style.use('seaborn-whitegrid')
mpl.rcParams['figure.figsize'] = [20.0, 10.0]
```

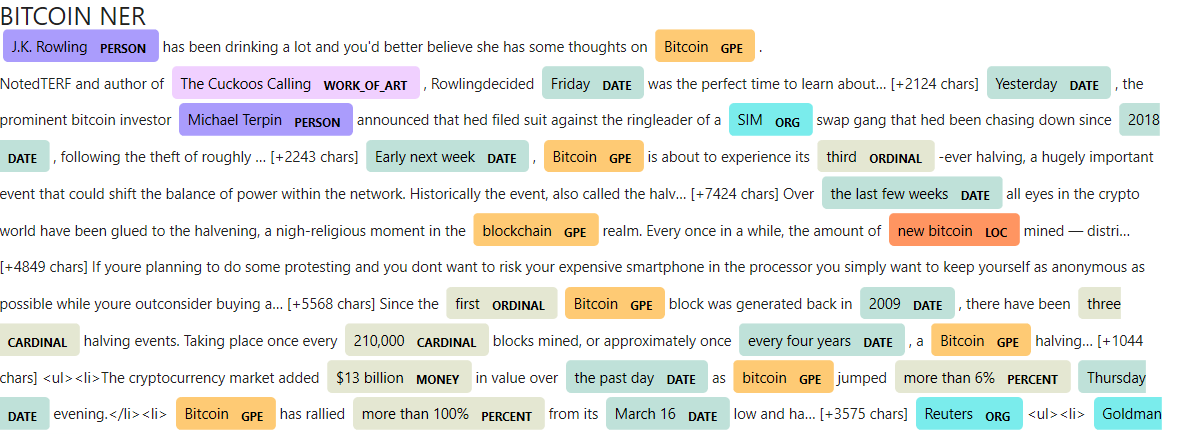

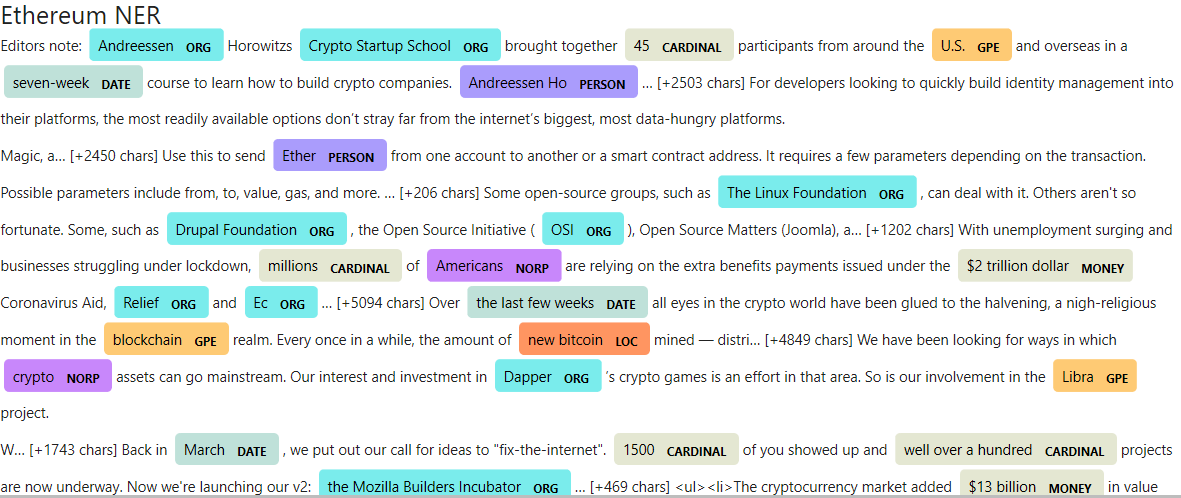

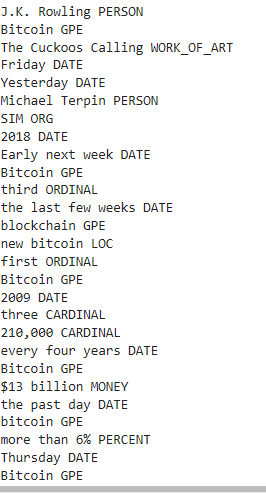
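To make the counting steps in this section concrete, here is a standard-library sketch of what the bigram and top-word counts compute. It uses `zip` in place of `nltk.ngrams` for N = 2. The helper names `count_bigrams` and `top_tokens` and the sample tokens are hypothetical.

```python
from collections import Counter


def count_bigrams(tokens):
    """Count adjacent token pairs (equivalent to n-grams with N = 2)."""
    return Counter(zip(tokens, tokens[1:]))


def top_tokens(tokens, n=10):
    """Return the n most frequent tokens as (token, count) pairs."""
    return Counter(tokens).most_common(n)


# Made-up tokens for illustration; real ones come from the tokenized articles.
sample = ["bitcoin", "price", "rise", "bitcoin", "price", "fall"]
bigrams = count_bigrams(sample)
print(bigrams.most_common(1))  # [(('bitcoin', 'price'), 2)]
print(top_tokens(sample, 2))   # [('bitcoin', 2), ('price', 2)]
```

A word cloud draws on the same kind of input: `WordCloud().generate(' '.join(tokens))` accepts the tokens joined back into a single string.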

Named Entity Recognition

1. Import spaCy and displaCy

```python
import spacy
from spacy import displacy

# Load the spaCy model
nlp = spacy.load('en_core_web_sm')
```

2. Build a named entity recognition model for both coins

```python
# Run the NER processor on all of the text
doc = nlp(btc_content)

# Add a title to the document
doc.user_data["title"] = "BITCOIN NER"
```

3. Visualize the tags using spaCy

```python
displacy.render(doc, style='ent')
```

4. List all Entities

```python
for ent in doc.ents:
    print('{} {}'.format(ent.text, ent.label_))
```

| Bitcoin | Ethereum |
|---|---|
|  |  |
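Once the entities are listed, it can help to tally them by label. This is a small sketch, assuming the entities have been collected as `(text, label)` pairs, for example with `[(ent.text, ent.label_) for ent in doc.ents]`. The helper `count_labels` and the sample pairs are hypothetical and are not output from the model.

```python
from collections import Counter


def count_labels(entities):
    """Count how many entities carry each label."""
    return Counter(label for _, label in entities)


# Hypothetical pairs for illustration; real ones come from doc.ents.
sample_entities = [
    ("Bitcoin", "ORG"),
    ("Ethereum", "ORG"),
    ("Monday", "DATE"),
]
label_counts = count_labels(sample_entities)
print(label_counts.most_common())  # [('ORG', 2), ('DATE', 1)]
```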