The goals / steps of this project are the following:

- Perform a Histogram of Oriented Gradients (HOG) feature extraction on a labeled training set of images and train a Linear SVM classifier.

- Optionally, you can also apply a color transform and append binned color features, as well as histograms of color, to your HOG feature vector.

- Note: for those first two steps don't forget to normalize your features and randomize a selection for training and testing.

- Implement a sliding-window technique and use your trained classifier to search for vehicles in images.

- Run your pipeline on a video stream (start with the test_video.mp4 and later implement on full project_video.mp4) and create a heat map of recurring detections frame by frame to reject outliers and follow detected vehicles.

- Estimate a bounding box for vehicles detected.

Rubric Points

Here I will consider the rubric points individually and describe how I addressed each point in my implementation.

You're reading it!

1. Explain how (and identify where in your code) you extracted HOG features from the training images.

The code for extracting HOG features is contained in lines 21 through 39 of utils.py.

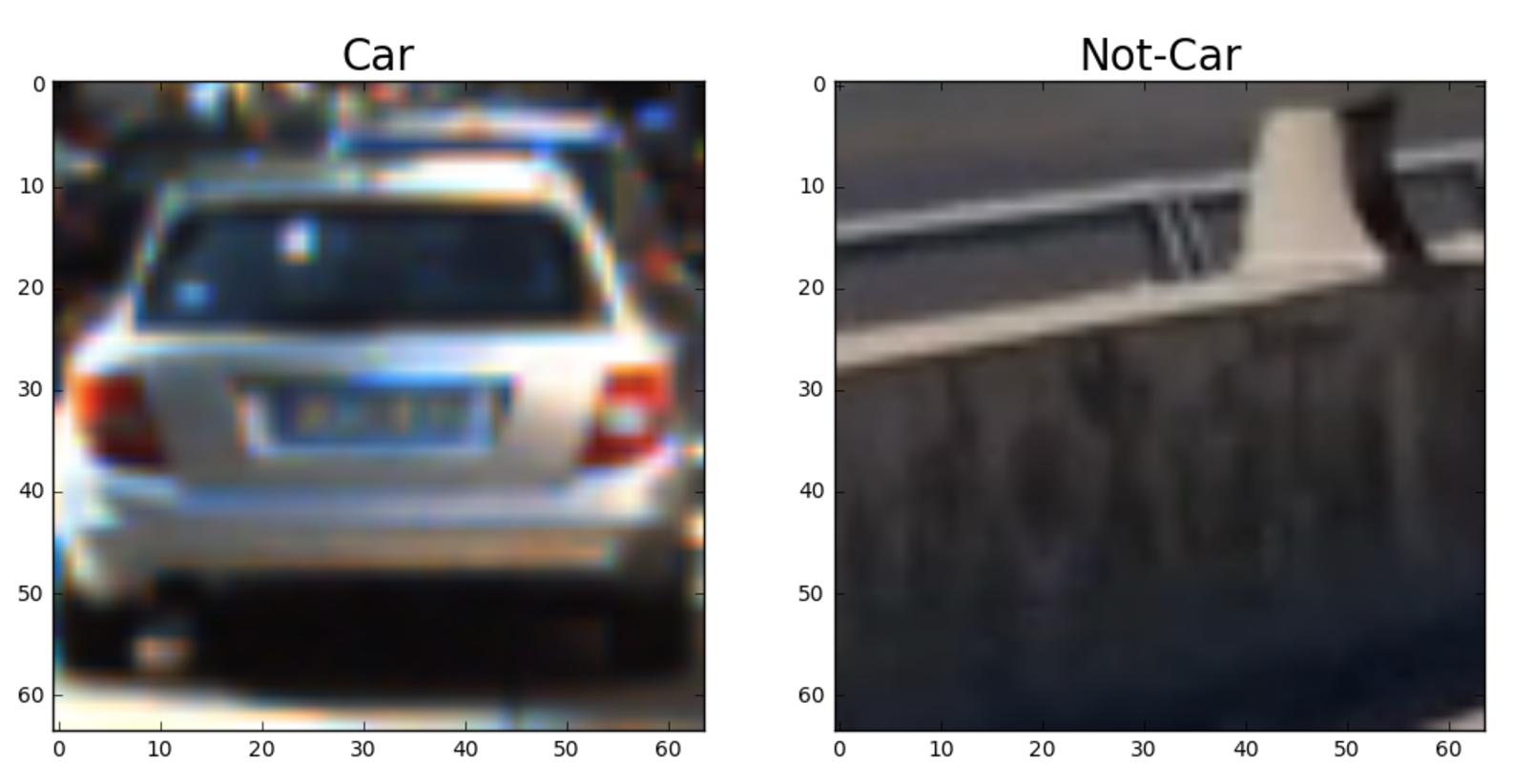

I started by reading in all the vehicle and non-vehicle images. Here is an example of one of each of the vehicle and non-vehicle classes:

I then explored different color spaces and different skimage.hog() parameters (orientations, pixels_per_cell, and cells_per_block). I grabbed random images from each of the two classes and displayed them to get a feel for what the skimage.hog() output looks like.

Here is an example using the YCrCb color space and HOG parameters of orientations=9, pixels_per_cell=(8, 8) and cells_per_block=(2, 2):

I tried various combinations of parameters, for example decreasing pixels_per_cell to (2, 2) and selecting random orientations values between 6 and 12. However, I found that the combination mentioned above gives the best balance between accuracy and speed. For example, a lower pixels_per_cell value can increase the accuracy a bit, but extracting the HOG features takes longer.
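As a minimal sketch of the chosen parameters (not the code from utils.py), the call below runs skimage.feature.hog on a single channel. The helper name get_hog_features, the 64x64 patch size, and the random input are assumptions made for illustration only.

```python
import numpy as np
from skimage.feature import hog


def get_hog_features(channel, orientations=9, pixels_per_cell=(8, 8),
                     cells_per_block=(2, 2), feature_vector=True):
    """Return HOG features for one 2-D image channel (hypothetical helper)."""
    return hog(
        channel,
        orientations=orientations,
        pixels_per_cell=pixels_per_cell,
        cells_per_block=cells_per_block,
        feature_vector=feature_vector,
    )


# Synthetic 64x64 patch standing in for one YCrCb channel (assumed size).
rng = np.random.default_rng(0)
patch = rng.random((64, 64))
features = get_hog_features(patch)

# 8x8 cells give 7x7 block positions; each block holds 2x2 cells
# with 9 orientation bins, so the vector has 7 * 7 * 2 * 2 * 9 values.
print(features.shape)
```

With these settings, smaller cells mean more blocks per patch, which is why a lower pixels_per_cell lengthens both the feature vector and the extraction time.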

3. Describe how (and identify where in your code) you trained a classifier using your selected HOG features (and color features if you used them).

All the code needed to train the model is in train.py.

- Load the car and non-car data.

- Extract features (HOG, color and spatial features) for both classes using the extract_features function in utils.py (lines 66 through 106).

- Split the data into training and testing sets manually, because some of the vehicle images show the same vehicle more than once, though typically under significantly different lighting/angle from other instances.

- Train an SVM using sklearn.svm.SVC with the following parameters: kernel=linear, C=0.1, gamma=auto.

I used an SVM because it was recommended by Udacity as giving better classification results for this kind of data.

Also, I used a linear kernel because it trains faster on my laptop and still gives me more than 98% accuracy on the test set.

Since this is a binary classification problem (vehicle, non-vehicle), the data also seems to be linearly separable.

I tried non-linear kernels such as ‘rbf’ and ‘poly’; however, I never got more than 90% accuracy.

They also took 5 times longer to train compared to the linear kernel.
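The training steps can be sketched roughly as follows. This is not the code from train.py: the random feature vectors, the manual_split helper, and the 80/20 cut are assumptions. Only the SVC parameters come from the description above.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC


def manual_split(features, train_fraction=0.8):
    """Split without shuffling so near-duplicate neighbours stay together
    (hypothetical helper; the real split rule is not shown in this writeup)."""
    cut = int(len(features) * train_fraction)
    return features[:cut], features[cut:]


# Synthetic stand-ins for the extracted car / non-car feature vectors.
rng = np.random.default_rng(0)
cars = rng.normal(1.0, 1.0, size=(100, 20))
notcars = rng.normal(-1.0, 1.0, size=(100, 20))

car_train, car_test = manual_split(cars)
notcar_train, notcar_test = manual_split(notcars)

X_train = np.vstack([car_train, notcar_train])
y_train = np.hstack([np.ones(len(car_train)), np.zeros(len(notcar_train))])
X_test = np.vstack([car_test, notcar_test])
y_test = np.hstack([np.ones(len(car_test)), np.zeros(len(notcar_test))])

# Fit the normalizer on the training data only, then reuse it for testing.
scaler = StandardScaler().fit(X_train)

clf = SVC(kernel="linear", C=0.1, gamma="auto")
clf.fit(scaler.transform(X_train), y_train)
accuracy = clf.score(scaler.transform(X_test), y_test)
print(f"test accuracy: {accuracy:.3f}")
```

The same fitted scaler has to be applied later to the features of every search window, otherwise the classifier sees inputs on a different scale than it was trained on.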

1. Describe how (and identify where in your code) you implemented a sliding window search. How did you decide what scales to search and how much to overlap windows?

I implemented the sliding window search using sub-sampling; the entire code can be found in lines 123 through 214 of utils.py.

- Crop the unnecessary regions of the image: keep the rows between 400px and 656px (removing the top and bottom) and drop everything left of 450px.

- Convert the image to the training color space, if the training images have been converted.

- Resize the image by scaling it down (16.6%).

- Get the three channels of YCrCb.

- Compute the HOG features of each individual channel for the entire image.

- Loop through the entire image and, for each cell block, do the following:

    - Obtain the HOG features, if enabled, by combining the HOG features of each individual channel.

    - Obtain the spatial features, if enabled.

    - Obtain the color features, if enabled.

    - Concatenate the features and apply normalization, since it was applied to the training data.

    - Predict whether the block is a car or not.

    - If the cell block was predicted to be a car, add its box coordinates to an array.

- Return the boxes that were predicted to be cars.
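To illustrate the geometry of the steps above, here is a minimal sketch that only enumerates the window positions and maps them back to original image coordinates. It leaves out feature extraction and prediction. The crop limits and the 1.2 scale come from this writeup. The 1280px image width, the 64px window, and the step of 2 cells are assumptions.

```python
def window_boxes(img_width, ystart=400, ystop=656, xstart=450, scale=1.2,
                 window=64, pix_per_cell=8, cells_per_step=2):
    """List ((x1, y1), (x2, y2)) search boxes in original image coordinates."""
    # Size of the cropped search region after scaling it down by `scale`.
    region_w = int((img_width - xstart) / scale)
    region_h = int((ystop - ystart) / scale)
    # Windows move in whole cells, so HOG computed once can be sub-sampled.
    step = pix_per_cell * cells_per_step
    size = int(window * scale)  # window edge length in the original image

    boxes = []
    for ytop in range(0, region_h - window + 1, step):
        for xleft in range(0, region_w - window + 1, step):
            # Undo the scaling and the crop offsets.
            x1 = int(xleft * scale) + xstart
            y1 = int(ytop * scale) + ystart
            boxes.append(((x1, y1), (x1 + size, y1 + size)))
    return boxes


boxes = window_boxes(img_width=1280)
print(len(boxes), boxes[0], boxes[-1])
```

In the full pipeline, each of these positions would index into the precomputed HOG arrays rather than triggering a fresh HOG computation, which is where the speed-up of sub-sampling comes from.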

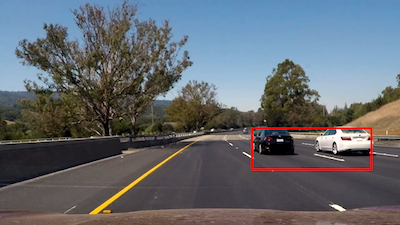

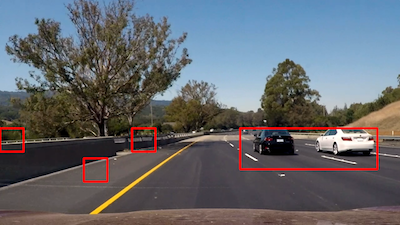

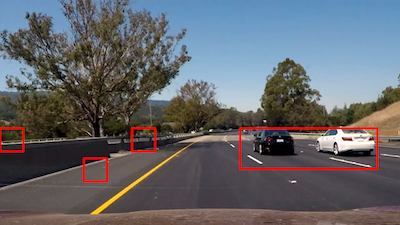

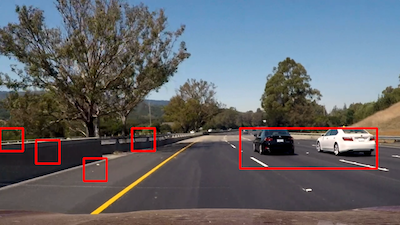

2. Show some examples of test images to demonstrate how your pipeline is working. What did you do to optimize the performance of your classifier?

Ultimately I searched at a scale of 1.2 using YCrCb 3-channel HOG features plus spatially binned color and histograms of color in the feature vector, which provided a nice result. Here are some example images:

1. Provide a link to your final video output. Your pipeline should perform reasonably well on the entire project video (somewhat wobbly or unstable bounding boxes are ok as long as you are identifying the vehicles most of the time with minimal false positives.)

Here's a link to my video result

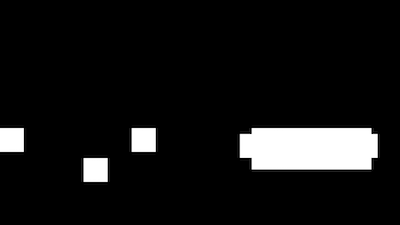

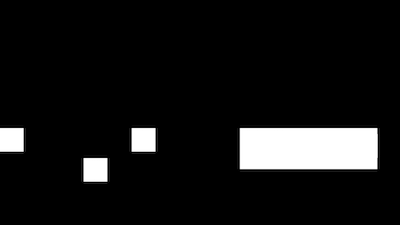

2. Describe how (and identify where in your code) you implemented some kind of filter for false positives and some method for combining overlapping bounding boxes.

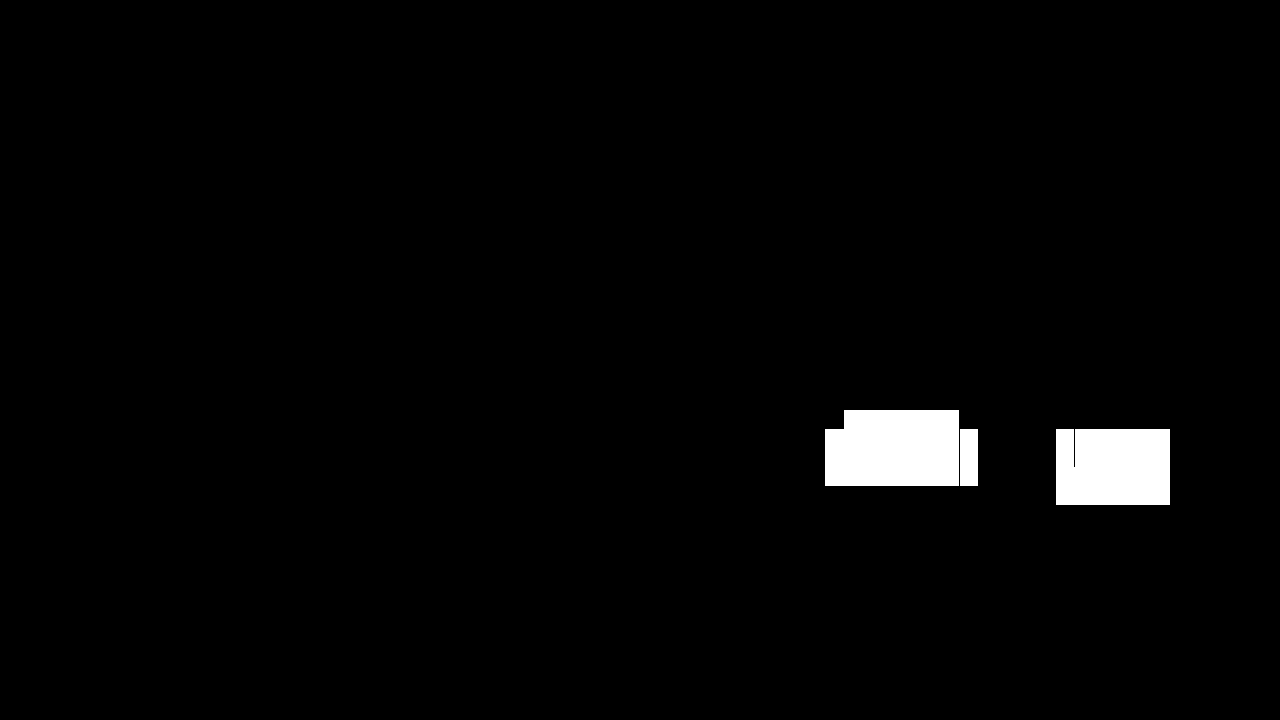

I recorded the positions of positive detections in each frame of the video. From the positive detections I created a heatmap and then thresholded that map to identify vehicle positions. I then used scipy.ndimage.measurements.label() to identify individual blobs in the heatmap. I then assumed each blob corresponded to a vehicle. I constructed bounding boxes to cover the area of each blob detected.
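The heatmap approach can be sketched as below. This is an illustration rather than the project's own code: the helper names, the threshold of 1, and the sample boxes are assumptions, and it imports label from scipy.ndimage, the current location of the same function.

```python
import numpy as np
from scipy.ndimage import label


def add_heat(heatmap, boxes):
    """Add 1 to every pixel covered by each ((x1, y1), (x2, y2)) box."""
    for (x1, y1), (x2, y2) in boxes:
        heatmap[y1:y2, x1:x2] += 1
    return heatmap


def heat_to_boxes(boxes, shape, threshold=1):
    """Threshold the heatmap and return one bounding box per labeled blob."""
    heatmap = add_heat(np.zeros(shape, dtype=np.int32), boxes)
    heatmap[heatmap <= threshold] = 0  # reject weakly supported pixels
    labels, count = label(heatmap)
    result = []
    for blob_id in range(1, count + 1):
        ys, xs = np.nonzero(labels == blob_id)
        result.append(((int(xs.min()), int(ys.min())),
                       (int(xs.max()), int(ys.max()))))
    return result


# Three overlapping detections plus one isolated (likely false) detection.
detections = [((10, 10), (50, 50)), ((20, 20), (60, 60)),
              ((30, 15), (70, 55)), ((150, 100), (190, 140))]
cars = heat_to_boxes(detections, shape=(200, 300), threshold=1)
print(cars)
```

The isolated box never rises above the threshold, so it is dropped, while the overlapping detections merge into a single blob.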

Here's an example result showing the heatmap from a series of frames of video, the result of scipy.ndimage.measurements.label() and the bounding boxes then overlaid on the last frame of video:

Here is the output of scipy.ndimage.measurements.label() on the integrated heatmap from all six frames:

1. Briefly discuss any problems / issues you faced in your implementation of this project. Where will your pipeline likely fail? What could you do to make it more robust?

Here I'll talk about the approach I took, what techniques I used, what worked and why, where the pipeline might fail and how I might improve it if I were going to pursue this project further.

I used the dataset that was provided with this project. Even though I manually split it into training and testing data, there was not enough data to obtain a model that generalizes well. Using the Udacity data, which can be found [here](https://github.com/udacity/self-driving-car/tree/master/annotations), would improve the generalization of the trained model.

SVM is a very good model for classification problems, and it has useful parameters to tweak, such as the kernel (‘linear’, ‘poly’, ‘rbf’, ‘sigmoid’, ‘precomputed’). We can also adjust the parameter C to trade off a smoother decision boundary against classifying more training points correctly. Another interesting parameter is gamma, which determines which training points influence the decision boundary: the far ones or the close ones.

Tuning the SVM parameters can improve the accuracy. However, other algorithms can be used instead, such as a decision tree or a deep neural network.

I used HOG features and color features to identify cars, which is a good start, but we could improve on this by using Convolutional Neural Networks (CNNs) instead of HOG features and an SVM, in particular one of the variations of the Region-based CNN (R-CNN).

All the code can be found in tracker.py:

- Accept a frame and boxes (the positions of cars in that frame).

- Create a car from each box and add it to the Tracker.cars array.

- After 7 frames (one cycle), apply the filter for false positives and scipy.ndimage.measurements.label() for combining overlapping bounding boxes. The filter_cars function does this.

- Then add the result to the Tracker.display_cars array, which is responsible for tracking cars.

- If a car has been seen more than 2 times, display it.

- If a car has been seen more than 5 times, check its direction; if it is moving toward the left, set trackable to true. The code corresponding to these steps can be found in the update_display_cars function, lines 53 through 69.

- The car will be deleted if it was not seen for 15 consecutive frames, or 55 for trackable cars.
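The counting rules above might look roughly like this. This is a simplified sketch, not the contents of tracker.py: the TrackedCar class, its fields, and the use of the box's x center to judge direction are all hypothetical. Only the thresholds (2, 5, 15, 55) are taken from the list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TrackedCar:
    """Hypothetical per-car bookkeeping; not the class used in tracker.py."""
    x_center: float
    seen: int = 1
    missed: int = 0
    trackable: bool = False

    def update(self, x_center: Optional[float] = None) -> None:
        """Record one update; pass None when the car was not detected."""
        if x_center is None:
            self.missed += 1
            return
        moving_left = x_center < self.x_center
        self.x_center = x_center
        self.seen += 1
        self.missed = 0  # only consecutive misses count toward deletion
        if self.seen > 5 and moving_left:
            self.trackable = True

    @property
    def displayed(self) -> bool:
        return self.seen > 2

    @property
    def expired(self) -> bool:
        limit = 55 if self.trackable else 15
        return self.missed >= limit


car = TrackedCar(x_center=600.0)
for x in (598.0, 596.0, 594.0, 592.0, 590.0):
    car.update(x)          # seen six times, drifting left
for _ in range(15):
    car.update(None)       # then missed 15 times in a row
print(car.displayed, car.trackable, car.expired)
```

Giving trackable cars a longer grace period lets a car that has been followed reliably survive a longer run of missed detections.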