This repository contains examples and automation used in various Raspberry Pi clustering scenarios, as seen on Jeff Geerling's YouTube channel.

The inspiration for this project was my first Pi cluster, the Raspberry Pi Dramble, which is still running in my basement to this day!

- Make sure you have Ansible installed.

- Copy the example.hosts.ini inventory file to hosts.ini. Make sure it has the control_plane and nodes configured correctly (for my examples I named my nodes node[1-4].local).

- Copy the example.config.yml file to config.yml, and modify the variables to your liking.
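The two copy steps can be sketched as a small shell helper. This is only an illustration: copy_example is a hypothetical name, not something shipped in the repository, and it assumes you run it from the repository root. It refuses to overwrite a file you have already edited.

```shell
# Hypothetical helper: copy an example file to its working name,
# but leave the target alone if it already exists.
copy_example() {
  src="$1"
  dest="$2"
  if [ -e "$dest" ]; then
    echo "$dest already exists, leaving it alone"
  else
    cp "$src" "$dest" && echo "created $dest"
  fi
}

# From the repository root:
# copy_example example.hosts.ini hosts.ini
# copy_example example.config.yml config.yml
```

After copying, edit hosts.ini and config.yml by hand as described above.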

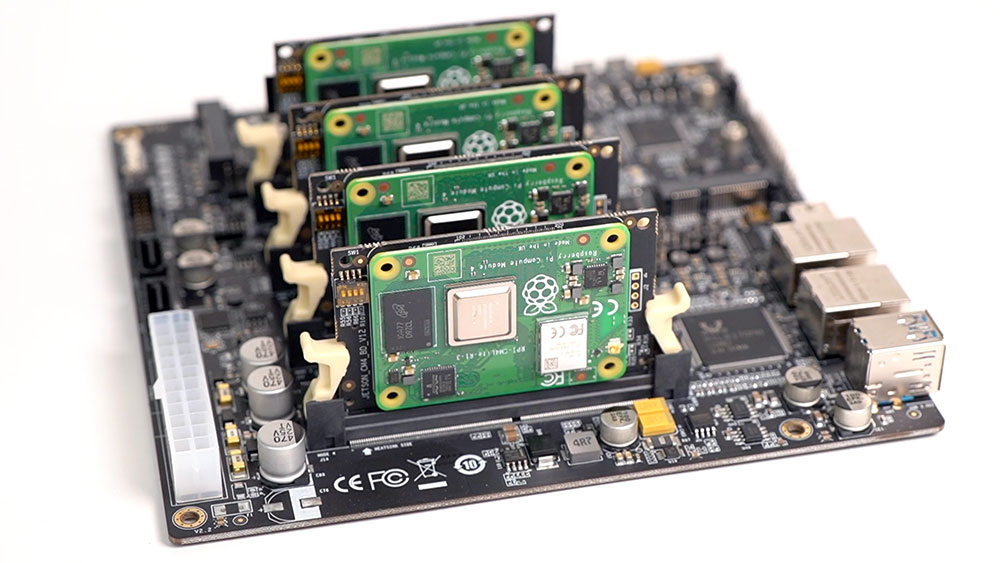

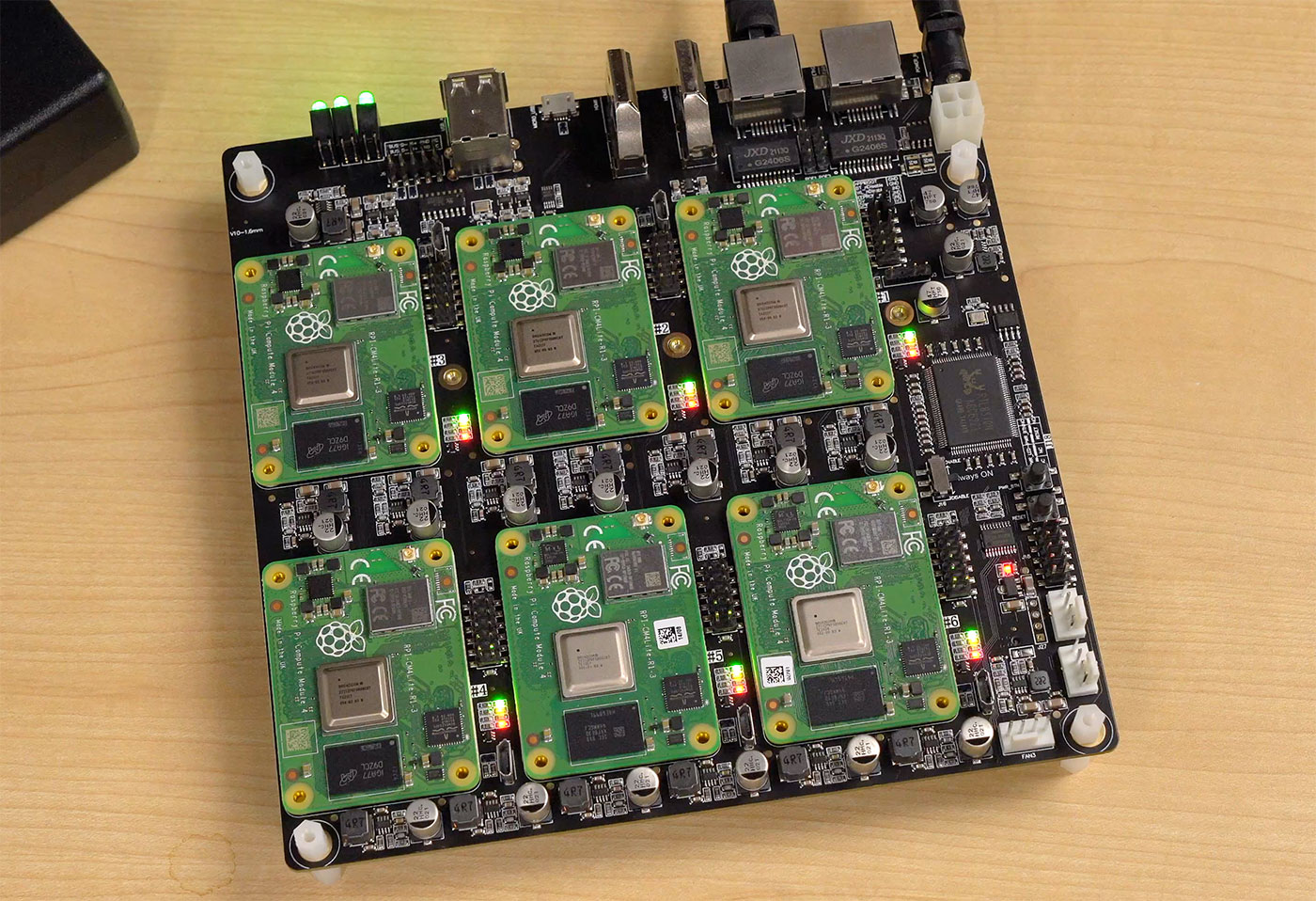

I am running Raspberry Pi OS on various Pi clusters. You can run this on any Pi cluster, but I tend to use Compute Modules without eMMC ('Lite' versions), and I often boot each node from a 32 GB SanDisk Extreme microSD card. For some setups (like when I run the Compute Blade or DeskPi Super6c), I boot off NVMe SSDs instead.

In every case, I flashed Raspberry Pi OS (64-bit, lite) to the storage devices using Raspberry Pi Imager.

To make network discovery and integration easier, I edit the advanced configuration in Imager, and set the following options:

- Set hostname: node1.local (set to 2 for node 2, 3 for node 3, etc.)

- Enable SSH: 'Allow public-key', and paste in my public SSH key(s)

- Configure wifi: (ONLY on node 1, if desired) enter SSID and password for local WiFi network

After setting all those options, making sure only node 1 has WiFi configured, and the hostname is unique to each node (and matches what is in hosts.ini), I inserted the microSD cards into the respective Pis, or installed the NVMe SSDs into the correct slots, and booted the cluster.

To test the SSH connection from my Ansible controller (my main workstation, where I'm running all the playbooks), I connected to each server individually, and accepted the host key:

ssh pi@node1.local

This ensures Ansible will also be able to connect via SSH in the following steps. You can test Ansible's connection with:

ansible all -m ping

It should respond with a 'SUCCESS' message for each node.
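If you want to re-check plain SSH access to every node in one go (for example, after reflashing a card), a loop like the following might help. It is a rough sketch: node_hosts and check_nodes are hypothetical helper names, and the nodeN.local naming and the pi user are taken from the examples above. BatchMode makes ssh fail instead of prompting, so a node whose host key has not been accepted yet shows up as FAILED.

```shell
# Print the hostnames node1.local .. nodeN.local (naming assumed from the examples).
node_hosts() {
  count="$1"
  i=1
  while [ "$i" -le "$count" ]; do
    echo "node$i.local"
    i=$((i + 1))
  done
}

# Try a non-interactive SSH login on each node and report the result.
check_nodes() {
  for host in $(node_hosts "$1"); do
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "pi@$host" true; then
      echo "$host: ok"
    else
      echo "$host: FAILED"
    fi
  done
}

# For a four-node cluster:
# check_nodes 4
```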

Warning: This playbook is configured to set up a ZFS mirror volume on node 3, with two drives connected to the built-in SATA ports on the Turing Pi 2.

It is not yet genericized for other use cases (e.g. boards that are not the Turing Pi 2).

This playbook will create a storage location on node 3 by default. You can choose between the two storage configurations by setting the storage_type variable to either filesystem or zfs in your config.yml file.

If using filesystem (storage_type: filesystem), make sure to use the appropriate storage_nfs_dir variable in config.yml.

If using ZFS (storage_type: zfs), you should have two volumes available on node 3, /dev/sda and /dev/sdb, able to be pooled into a mirror. Make sure your two SATA drives are wiped:

pi@node3:~ $ sudo wipefs --all --force /dev/sda?; sudo wipefs --all --force /dev/sda

pi@node3:~ $ sudo wipefs --all --force /dev/sdb?; sudo wipefs --all --force /dev/sdb

If you run lsblk, you should see sda and sdb have no partitions, and are ready to use:

pi@node3:~ $ lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

sda 8:0 0 1.8T 0 disk

sdb 8:16 0 1.8T 0 disk
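The same check can be scripted rather than read by eye. The helper below is a sketch only (no_partitions is a hypothetical name): it reads the list-format output of lsblk and succeeds only when no line has the type part.

```shell
# Read `lsblk -ln -o NAME,TYPE` output on stdin.
# Exit status 0 means no partitions were listed, 1 means at least one was.
no_partitions() {
  awk 'BEGIN { found = 0 } $2 == "part" { found = 1 } END { exit found }'
}

# On node 3:
# lsblk -ln -o NAME,TYPE /dev/sda /dev/sdb | no_partitions && echo "drives are clean"
```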

You should also make sure the storage_nfs_dir variable is set appropriately for ZFS in your config.yml.

This ZFS layout was configured originally for the Turing Pi 2 board, which has two built-in SATA ports connected directly to node 3. In the future, the configuration may be genericized a bit better.

You could also run Ceph on a Pi cluster—see the storage configuration playbook inside the ceph directory.

This configuration is not yet integrated into the general K3s setup.

Run the playbook:

ansible-playbook main.yml

At the end of the playbook, there should be an instance of Drupal running on the cluster. If you log into node 1, you should be able to access it with curl localhost.

Alternatively, if you have SSH tunnelling configured (see later section), you could access http://[your-vps-ip-or-hostname]:8080/ and you'd see the site.

You can also log into node 1, switch to the root user account (sudo su), then use kubectl to manage the cluster (e.g. view Drupal pods with kubectl get pods -n drupal).

The Kubernetes Ingress object for Drupal (how HTTP requests from outside the cluster make it to Drupal) can be found by running kubectl get ingress -n drupal. Take the IP address or hostname there and enter it in your browser on a computer on the same network, and voila! You should see Drupal's installer.

K3s' kubeconfig file is located at /etc/rancher/k3s/k3s.yaml. If you'd like to manage the cluster from other hosts (or using a tool like Lens), copy the contents of that file, replacing localhost with the IP address or hostname of the control plane node, and paste the contents into a file ~/.kube/config.
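As a sketch of that copy-and-replace step, the filter below rewrites the server address while the file is streamed from the control plane node. rewrite_kubeconfig is a hypothetical helper. It handles both localhost and 127.0.0.1, since K3s typically writes the latter into k3s.yaml. The usage line assumes the pi user can run sudo without a password, and it overwrites any existing ~/.kube/config, so back that file up first if you already have one.

```shell
# Rewrite the API server address in a K3s kubeconfig read from stdin.
# $1 is the IP address or hostname of the control plane node.
rewrite_kubeconfig() {
  sed -e "s#https://localhost:#https://$1:#" \
      -e "s#https://127\.0\.0\.1:#https://$1:#"
}

# From your workstation (overwrites ~/.kube/config):
# ssh pi@node1.local sudo cat /etc/rancher/k3s/k3s.yaml \
#   | rewrite_kubeconfig node1.local > ~/.kube/config
```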

Run the upgrade playbook:

ansible-playbook upgrade.yml

Prometheus and Grafana are used for monitoring. Grafana can be accessed via port forwarding (or you could choose to expose it another way).

To access Grafana:

- Make sure you set up a valid ~/.kube/config file (see 'K3s installation' above).

- Run kubectl port-forward service/cluster-monitoring-grafana :80

- Grab the port that's output, and browse to localhost:[port], and bingo! Grafana.

The default login is admin / prom-operator, but you can also get the secret with kubectl get secret cluster-monitoring-grafana -o jsonpath="{.data.admin-password}" | base64 -D.
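One portability note on the decode step: base64 -D is the macOS spelling, while GNU coreutils on Linux uses -d. As far as I know, the long form --decode is accepted by both, so a tiny wrapper (decode_secret is a hypothetical name) keeps the command the same on either system:

```shell
# Decode base64 from stdin using the long option,
# which both the macOS and GNU versions of base64 should accept.
decode_secret() {
  base64 --decode
}

# kubectl get secret cluster-monitoring-grafana \
#   -o jsonpath="{.data.admin-password}" | decode_secret
```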

You can then browse to all the Kubernetes and Pi-related dashboards by browsing the Dashboards in the 'General' folder.

See the README file within the benchmarks folder.

The safest way to shut down the cluster is to run the following command:

ansible all -m community.general.shutdown -b

Note: If using the SSH tunnel, you might want to run the command first on nodes 2-4, then on node 1. So first run ansible 'all:!control_plane' [...], then run it again just for control_plane.
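The two-stage shutdown could be wrapped up as follows. This is a sketch under one assumption: that the elided arguments are the same -m community.general.shutdown -b used in the command above. shutdown_group is a hypothetical helper that only prints the command unless RUN=1 is set, so you can review the order before anything is actually shut down.

```shell
# Print (or, with RUN=1, execute) the shutdown command for one Ansible host pattern.
shutdown_group() {
  if [ "${RUN:-0}" = "1" ]; then
    ansible "$1" -m community.general.shutdown -b
  else
    echo "ansible '$1' -m community.general.shutdown -b"
  fi
}

# Worker nodes first, then the control plane (which carries the tunnel).
shutdown_group 'all:!control_plane'
shutdown_group 'control_plane'
```

Run it once as-is to see the commands, then again with RUN=1 in the environment to execute them.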

Then after you confirm the nodes are shut down (with K3s running, it can take a few minutes), press the cluster's power button (or yank the Ethernet cables if using PoE) to power down all Pis physically. Then you can switch off or disconnect your power supply.

Because I use my cluster both on-premises and remotely (using a 4G LTE modem connected to the first Pi), I set it up on its own subnet (10.1.1.x). You can change the subnet that's used via the ipv4_subnet_prefix variable in config.yml.

To configure the local network for the Pi cluster (this is optional—you can still use the rest of the configurations without a custom local network), run the playbook:

ansible-playbook networking.yml

After running the playbook, until a reboot, the Pis will still be accessible over their former DHCP-assigned IP address. After the nodes are rebooted, you will need to make sure your workstation is connected to an interface using the same subnet as the cluster (e.g. 10.1.1.x).

Note: After the networking changes are made, since this playbook uses DNS names (e.g. node1.local) instead of IP addresses, your computer will still be able to connect to the nodes directly, assuming your network has IPv6 support. Pinging the nodes on their new IP addresses will not work, however. For better network compatibility, it's recommended you set up a separate network interface on the Ansible controller that's on the same subnet as the Pis in the cluster:

On my Mac, I connected a second network interface and manually configured its IP address as 10.1.1.10, with subnet mask 255.255.255.0, and that way I could still access all the nodes via IP address or their hostnames (e.g. node2.local).
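To sanity-check that a workstation address really sits on the cluster subnet, a quick prefix comparison is enough for a 255.255.255.0 mask. The sketch below assumes the prefix is written as three octets (e.g. 10.1.1), which may or may not match how ipv4_subnet_prefix is written in your config.yml. in_subnet is a hypothetical helper.

```shell
# Succeed if the IPv4 address in $1 starts with the three-octet prefix in $2,
# i.e. both are in the same /24 network.
in_subnet() {
  case "$1" in
    "$2".*) return 0 ;;
    *) return 1 ;;
  esac
}

# in_subnet 10.1.1.10 10.1.1 && echo "workstation is on the cluster subnet"
```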

Because the cluster subnet needs its own router, node 1 is configured as a router, using wlan0 as the primary interface for Internet traffic by default. The other nodes get their Internet access through node 1.

The network configuration defaults to an active_internet_interface of wlan0, meaning node 1 will route all Internet traffic for the cluster through its WiFi interface.

Assuming you have a working 4G card in slot 1, you can switch node 1 to route through an alternate interface (e.g. usb0):

- Set active_internet_interface: "usb0" in your config.yml

- Run the networking playbook again:

ansible-playbook networking.yml

You can switch back and forth between interfaces using the steps above.

For my own experimentation, I ran my Pi cluster 'off-grid', using a 4G LTE modem, as mentioned above.

Because my mobile network provider uses CG-NAT, there is no way to remotely access the cluster, or serve web traffic to the public internet from it, at least not out of the box.

I am using a reverse SSH tunnel to enable direct remote SSH and HTTP access. To set that up, I configured a VPS I run to use TCP Forwarding (see this blog post for details), and I configured an SSH key so node 1 could connect to my VPS (e.g. ssh my-vps-username@my-vps-hostname-or-ip).

Then I set the reverse_tunnel_enable variable to true in my config.yml, and configured the VPS username and hostname options.

Doing that and running the main.yml playbook configures autossh on node 1, which then tries to establish a connection to the VPS, forwarding the VPS's port 2222 to node 1's port 22 and its port 8080 to node 1's port 80.
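For reference, the two forwards correspond to a plain ssh invocation along these lines. This is a sketch of the underlying mechanism, not the exact command or options the playbook hands to autossh. tunnel_command is a hypothetical helper that just prints the command, and the username and hostname are placeholders.

```shell
# Build an ssh command with the two remote forwards described above.
# $1 = VPS username, $2 = VPS hostname or IP (placeholders).
tunnel_command() {
  echo "ssh -N -R 2222:localhost:22 -R 8080:localhost:80 $1@$2"
}

tunnel_command my-vps-username my-vps-hostname-or-ip
```

Here -R asks the VPS to listen on the first port and relay connections back to the given port on node 1, and -N skips running a remote command. Whether those VPS ports are reachable from the outside or only from the VPS itself depends on the GatewayPorts setting in the VPS's sshd configuration.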

After that's done, you should be able to log into the cluster through your VPS with a command like:

$ ssh -p 2222 pi@[my-vps-hostname]

Note: If autossh isn't working, it could be that it didn't exit cleanly, and a tunnel is still reserving the port on the remote VPS. That's often the case if you run sudo systemctl status autossh and see messages like Warning: remote port forwarding failed for listen port 2222.

In that case, log into the remote VPS and run pgrep ssh | xargs kill to kill off all active SSH sessions, then autossh should pick back up again.

Warning: Use this feature at your own risk. Security is your own responsibility, and for better protection, you should probably avoid directly exposing your cluster (e.g. by disabling the GatewayPorts option) so you can only access the cluster while already logged into your VPS.

These playbooks are used in both production and test clusters, but security is always your responsibility. If you want to use any of this configuration in production, take ownership of it and understand how it works so you don't wake up to a hacked Pi cluster one day!

The repository was created in 2023 by Jeff Geerling, author of Ansible for DevOps, Ansible for Kubernetes, and Kubernetes 101.