An AI web browsing framework focused on simplicity and extensibility.

- Intro

- Getting Started

- API Reference

- Model Support

- How It Works

- Prompting Tips

- Roadmap

- Contributing

- Acknowledgements

- License

Note

Stagehand is currently available as an early release, and we're actively seeking feedback from the community. Please join our Slack community to stay updated on the latest developments and provide feedback.

Stagehand is the AI-powered successor to Playwright, offering three simple APIs (act, extract, and observe) that provide the building blocks for natural language driven web automation.

The goal of Stagehand is to provide a lightweight, configurable framework, without overly complex abstractions, as well as modular support for different models and model providers. It's not going to order you a pizza, but it will help you reliably automate the web.

Each Stagehand function takes in an atomic instruction, such as act("click the login button") or extract("find the red shoes"), generates the appropriate Playwright code to accomplish that instruction, and executes it.

Instructions should be atomic to increase reliability; step planning should be handled by a higher-level agent. You can use observe() to get a suggested list of actions that can be taken on the current page, and then use those to ground your step-planning prompts.

Stagehand is open source and maintained by the Browserbase team. We believe that by enabling more developers to build reliable web automations, we'll expand the market of developers who benefit from our headless browser infrastructure. This is the framework that we wished we had while tinkering on our own applications, and we're excited to share it with you.

We also install zod to power typed extraction:

npm install @browserbasehq/stagehand zod

You'll need to provide your API key for the model provider you'd like to use. The default model provider is OpenAI, but you can also use Anthropic or others. More information on supported models can be found in the API Reference.

Ensure that an OpenAI API Key or Anthropic API key is accessible in your local environment.

export OPENAI_API_KEY=sk-...

export ANTHROPIC_API_KEY=sk-...

If you plan to run the browser locally, you'll also need to install Playwright's browser dependencies.

npm exec playwright install

Then you can create a Stagehand instance like so:

import { Stagehand } from "@browserbasehq/stagehand";
import { z } from "zod";

const stagehand = new Stagehand({
  env: "LOCAL",
});

If you plan to run the browser remotely, you'll need to set a Browserbase API key and project ID.

export BROWSERBASE_API_KEY=...
export BROWSERBASE_PROJECT_ID=...

import { Stagehand } from "@browserbasehq/stagehand";
import { z } from "zod";

const stagehand = new Stagehand({
  env: "BROWSERBASE",
  enableCaching: true,
});

await stagehand.init();
await stagehand.page.goto("https://github.com/browserbase/stagehand");
await stagehand.act({ action: "click on the contributors" });

const contributor = await stagehand.extract({
  instruction: "extract the top contributor",
  schema: z.object({
    username: z.string(),
    url: z.string(),
  }),
});

console.log(`Our favorite contributor is ${contributor.username}`);

This simple snippet will open a browser, navigate to the Stagehand repo, and log the top contributor.

This constructor is used to create an instance of Stagehand.

- Arguments:
  - env: 'LOCAL' or 'BROWSERBASE'.
  - verbose: an integer that enables several levels of logging during automation:
    - 0: limited to no logging
    - 1: SDK-level logging
    - 2: LLM-client level logging (most granular)
  - debugDom: a boolean that draws bounding boxes around elements presented to the LLM during automation.
  - domSettleTimeoutMs: an integer that specifies the timeout in milliseconds for waiting for the DOM to settle. Defaults to 30000 (30 seconds).
  - enableCaching: a boolean that enables caching of LLM responses. When set to true, LLM requests are cached on disk and reused for identical requests. Defaults to false.

- Returns:
  - An instance of the Stagehand class configured with the specified options.

- Example:

const stagehand = new Stagehand();
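The enableCaching option reuses LLM responses for identical requests. As a rough illustration only, the sketch below keys an in-memory Map by the serialized request; Stagehand itself caches on disk, and LLMRequest, CompleteFn, and CachedLLM are hypothetical names, not part of its API.

```typescript
// Hypothetical request shape: a model name plus chat messages.
interface LLMRequest {
  modelName: string;
  messages: { role: "system" | "user" | "assistant"; content: string }[];
}

// The underlying (uncached) LLM call, injected so the sketch runs without a provider.
type CompleteFn = (req: LLMRequest) => Promise<string>;

class CachedLLM {
  private readonly cache = new Map<string, string>();
  private readonly complete: CompleteFn;
  private readonly enableCaching: boolean;
  calls = 0; // how many requests actually reached the LLM

  constructor(complete: CompleteFn, enableCaching: boolean) {
    this.complete = complete;
    this.enableCaching = enableCaching;
  }

  async run(req: LLMRequest): Promise<string> {
    // JSON.stringify is order-sensitive, so this assumes requests are built
    // with a consistent property order.
    const key = JSON.stringify(req);
    if (this.enableCaching) {
      const hit = this.cache.get(key);
      if (hit !== undefined) return hit;
    }
    this.calls++;
    const response = await this.complete(req);
    if (this.enableCaching) this.cache.set(key, response);
    return response;
  }
}
```

Injecting the LLM call as a function keeps the sketch provider-agnostic and lets it run without API keys.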

init() asynchronously initializes the Stagehand instance. It should be called before any other methods.

- Arguments:
  - modelName: (optional) an AvailableModel string to specify the model to use. This will be used for all other methods unless overridden. Defaults to "gpt-4o".

- Returns:
  - A Promise that resolves to an object containing:
    - debugUrl: a string representing the URL for live debugging. This is only available when using a Browserbase browser.
    - sessionUrl: a string representing the session URL. This is only available when using a Browserbase browser.

- Example:

await stagehand.init({ modelName: "gpt-4o" });

act() allows Stagehand to interact with a web page. Provide an action like "search for 'x'", or "select the cheapest flight presented" (small atomic goals perform the best).

- Arguments:
  - action: a string describing the action to perform, e.g., "search for 'x'".
  - modelName: (optional) an AvailableModel string to specify the model to use.
  - useVision: (optional) a boolean or "fallback" to determine if vision-based processing should be used. Defaults to "fallback".

- Returns:
  - A Promise that resolves to an object containing:
    - success: a boolean indicating if the action was completed successfully.
    - message: a string providing details about the action's execution.
    - action: a string describing the action performed.

- Example:

await stagehand.act({ action: "click on add to cart" });

extract() grabs structured text from the current page using zod. Given an instruction and a schema, you will receive structured data. Unlike some extraction libraries, Stagehand can extract any information on a page, not just the main article contents.

- Arguments:
  - instruction: a string providing instructions for extraction.
  - schema: a z.AnyZodObject defining the structure of the data to extract.
  - modelName: (optional) an AvailableModel string to specify the model to use.

- Returns:
  - A Promise that resolves to the structured data as defined by the provided schema.

- Example:

const price = await stagehand.extract({
  instruction: "extract the price of the item",
  schema: z.object({
    price: z.number(),
  }),
});

Note

observe() currently only evaluates the first chunk in the page.

observe() is used to get a list of actions that can be taken on the current page. It's useful for adding context to your planning step, or if you are unsure of what page you're on.

If you are looking for a specific element, you can also pass in an instruction to observe via: observe({ instruction: "{your instruction}"}).

- Arguments:
  - instruction: a string providing instructions for the observation.
  - useVision: (optional) a boolean or "fallback" to determine if vision-based processing should be used. Defaults to "fallback".

- Returns:
  - A Promise that resolves to an array of strings representing the actions that can be taken on the current page.

- Example:

const actions = await stagehand.observe();

page and context are instances of Playwright's Page and BrowserContext, respectively. Use these objects to interact with the Playwright instance that Stagehand is using. Most commonly, you'll use page.goto() to navigate to a URL.

- Example:

await stagehand.page.goto("https://github.com/browserbase/stagehand");

log() is used to print a message to the browser console. These messages will be persisted in the Browserbase session logs, and can be used to debug sessions after they've completed.

Make sure the message's log level does not exceed the verbose level you set when initializing the Stagehand instance; more granular levels are filtered out.

- Example:

stagehand.log("Hello, world!");
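Because verbose ranges from 0 (least output) to 2 (most granular), it behaves like a threshold. A minimal sketch of that filtering, assuming each message carries a level from 0 to 2 and is dropped when its level exceeds verbose; createLogger and LogLevel are hypothetical and are not Stagehand's log() implementation.

```typescript
type LogLevel = 0 | 1 | 2; // 0: minimal, 1: SDK-level, 2: LLM-client level

// Returns a logger that drops messages more granular than `verbose`.
// The boolean result reports whether the message was emitted.
function createLogger(verbose: LogLevel, sink: (line: string) => void = console.log) {
  return (message: string, level: LogLevel = 1): boolean => {
    if (level > verbose) return false; // too granular for this setting
    sink(`[level ${level}] ${message}`);
    return true;
  };
}
```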

Stagehand leverages a generic LLM client architecture to support various language models from different providers. This design allows for flexibility, enabling the integration of new models with minimal changes to the core system. Different models work better for different tasks, so you can choose the model that best suits your needs.

Stagehand currently supports the following models from OpenAI and Anthropic:

- OpenAI models:
  - gpt-4o
  - gpt-4o-mini
  - gpt-4o-2024-08-06

- Anthropic models:
  - claude-3-5-sonnet-latest
  - claude-3-5-sonnet-20240620
  - claude-3-5-sonnet-20241022

These models can be specified when initializing the Stagehand instance or when calling methods like act() and extract().
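init() documents that its modelName is used for all other methods unless overridden, with "gpt-4o" as the default. That precedence can be sketched as follows, using the model names listed above; resolveModel is a hypothetical helper, not an exported function.

```typescript
// Model names listed in this README.
type AvailableModel =
  | "gpt-4o"
  | "gpt-4o-mini"
  | "gpt-4o-2024-08-06"
  | "claude-3-5-sonnet-latest"
  | "claude-3-5-sonnet-20240620"
  | "claude-3-5-sonnet-20241022";

const DEFAULT_MODEL: AvailableModel = "gpt-4o";

// Per-call override > model chosen at init() > documented default.
function resolveModel(callModel?: AvailableModel, initModel?: AvailableModel): AvailableModel {
  return callModel ?? initModel ?? DEFAULT_MODEL;
}
```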

The SDK has two major phases:

- Processing the DOM (including chunking - see below).

- Taking LLM powered actions based on the current state of the DOM.

Stagehand uses a combination of techniques to prepare the DOM.

The DOM Processing steps look as follows:

- Via Playwright, inject a script into the page that the SDK can call to run the processing.
- Crawl the DOM and create a list of candidate elements.
  - Candidate elements are either leaf elements (DOM elements that contain actual user-facing substance) or interactive elements.
  - Interactive elements are determined by a combination of roles and HTML tags.
- Candidate elements that are not active, visible, or at the top of the DOM are discarded.
  - The LLM should only receive elements it can faithfully act on for the agent or user.
- For each candidate element, an xPath is generated. This guarantees that if the LLM picks this element, we'll be able to reliably target it.
- Return both the list of candidate elements and the map of elements to xPath selectors from the browser back to the SDK, to be analyzed by the LLM.
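The candidate-selection and xPath steps above can be sketched over a simplified, in-memory element tree so the logic runs outside a browser. This is an illustration under assumptions, not Stagehand's injected script: SimpleNode, the tag and role sets, and collectCandidates are hypothetical, visibility is a precomputed flag, and the "active" and "top of the DOM" checks are omitted.

```typescript
// Simplified stand-in for a DOM element; the real script inspects live elements.
interface SimpleNode {
  tag: string;
  role?: string;
  text?: string;
  visible: boolean;
  children: SimpleNode[];
}

interface Candidate {
  xpath: string;
  node: SimpleNode;
}

// Illustrative tag and role lists; the SDK's actual lists may differ.
const INTERACTIVE_TAGS = new Set(["a", "button", "input", "select", "textarea"]);
const INTERACTIVE_ROLES = new Set(["button", "link", "checkbox", "menuitem", "tab"]);

function isInteractive(n: SimpleNode): boolean {
  return INTERACTIVE_TAGS.has(n.tag) || (n.role !== undefined && INTERACTIVE_ROLES.has(n.role));
}

// A leaf with non-empty text counts as user-facing content.
function isTextLeaf(n: SimpleNode): boolean {
  return n.children.length === 0 && (n.text ?? "").trim().length > 0;
}

// Depth-first walk that records candidates with positional xPaths such as
// /html[1]/body[1]/div[2]/button[1] (indices are 1-based per tag among siblings).
function collectCandidates(root: SimpleNode): Candidate[] {
  const out: Candidate[] = [];
  const walk = (node: SimpleNode, path: string): void => {
    if (!node.visible) return; // invisible elements (and their subtrees) are discarded
    if (isInteractive(node)) {
      out.push({ xpath: path, node });
      return; // the interactive element itself is the target; skip its children
    }
    if (isTextLeaf(node)) out.push({ xpath: path, node });
    const seen = new Map<string, number>();
    for (const child of node.children) {
      const index = (seen.get(child.tag) ?? 0) + 1;
      seen.set(child.tag, index);
      walk(child, `${path}/${child.tag}[${index}]`);
    }
  };
  walk(root, `/${root.tag}[1]`);
  return out;
}
```

Positional xPaths are cheap to generate and unambiguous for a given snapshot of the page, which is what the targeting step needs.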

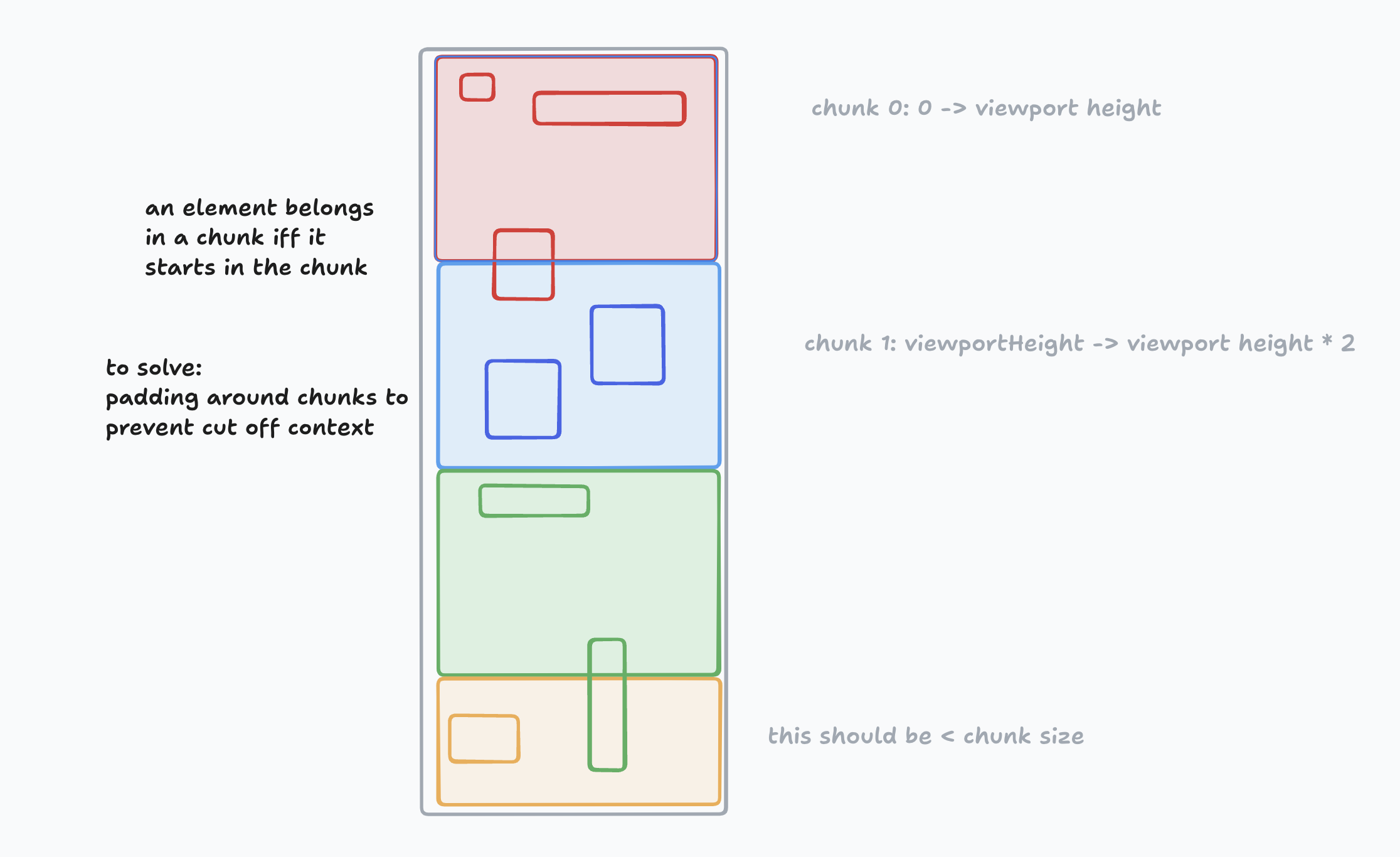

While LLMs will continue to increase context window length and reduce latency, giving any reasoning system less stuff to think about should make it more reliable. As a result, DOM processing is done in chunks in order to keep the context small per inference call. In order to chunk, the SDK considers a candidate element that starts in a section of the viewport to be a part of that chunk. In the future, padding will be added to ensure that an individual chunk does not lack relevant context. See this diagram for how it looks:
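The chunking rule can be sketched as bucketing elements by the viewport-height section in which their top edge starts. This assumes each element's vertical offset is already known and omits the planned padding; PositionedElement and chunkByViewport are hypothetical names.

```typescript
interface PositionedElement {
  xpath: string;
  top: number; // y-coordinate of the element's top edge, in page pixels
}

// Chunk 0 covers [0, viewportHeight), chunk 1 covers [viewportHeight, 2 * viewportHeight), etc.
function chunkByViewport(elements: PositionedElement[], viewportHeight: number): Map<number, PositionedElement[]> {
  if (viewportHeight <= 0) throw new RangeError("viewportHeight must be positive");
  const chunks = new Map<number, PositionedElement[]>();
  for (const el of elements) {
    const index = Math.floor(el.top / viewportHeight);
    const bucket = chunks.get(index) ?? [];
    bucket.push(el);
    chunks.set(index, bucket);
  }
  return chunks;
}
```

An element that starts near the bottom of one section belongs entirely to that chunk, even if most of it sits in the next one, which is the kind of missing context the planned padding is meant to address.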

The act() and observe() methods can take a useVision flag. If this is set to true, the LLM will be provided with an annotated screenshot of the current page to identify which elements to act on. This is useful for complex DOMs that the LLM has a hard time reasoning about, even after processing and chunking. By default, this flag is set to "fallback", which means that if the LLM fails to identify a single element, Stagehand will retry the attempt using vision.
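The useVision decision logic described above can be sketched as follows, assuming a single attempt reports success or failure. AttemptFn and actWithVision are hypothetical names, and the SDK's real retry logic may differ in detail.

```typescript
// Mirrors the documented useVision option.
type UseVision = boolean | "fallback";

interface Attempt {
  success: boolean;
  message: string;
}

// One attempt at the action, with or without an annotated screenshot.
type AttemptFn = (withVision: boolean) => Promise<Attempt>;

async function actWithVision(attempt: AttemptFn, useVision: UseVision = "fallback"): Promise<Attempt> {
  if (useVision === true) return attempt(true); // always use the screenshot
  const domOnly = await attempt(false); // DOM-only attempt first
  if (domOnly.success || useVision === false) return domOnly;
  return attempt(true); // "fallback": retry once with vision
}
```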

Now we have a list of candidate elements and a way to select them. We can present those elements with additional context to the LLM for extraction or action. While untested on a large scale, presenting a "numbered list of elements" guides the model to not treat the context as a full DOM, but as a list of related but independent elements to operate on.
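A sketch of rendering candidates as a numbered list. The exact prompt format Stagehand uses is not documented here, so the index:&lt;tag&gt;text&lt;/tag&gt; layout, CandidateElement, and toNumberedList are assumptions.

```typescript
interface CandidateElement {
  tag: string;
  text: string;
}

// Renders candidates as "index:<tag>text</tag>" lines. The index is what the model
// refers back to, and it doubles as the key into the element-to-xPath map.
function toNumberedList(elements: CandidateElement[]): string {
  return elements.map((el, i) => `${i}:<${el.tag}>${el.text.trim()}</${el.tag}>`).join("\n");
}
```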

In the case of action, we ask the LLM to write a Playwright method in order to do the correct thing. In our limited testing, Playwright syntax is much more effective than relying on built-in JavaScript APIs, possibly due to tokenization.
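A related, more constrained variant, shown here as a swapped-in design rather than Stagehand's own, has the model return a structured reply (element index, method, arguments) that is checked against an allowlist and then dispatched to a Playwright locator via the xpath= selector prefix. PageLike and LocatorLike model only the small slice of Playwright's Page and Locator used here; LLMAction and performAction are hypothetical.

```typescript
// Minimal slice of Playwright's Page/Locator API used here.
interface LocatorLike {
  click(): Promise<void>;
  fill(value: string): Promise<void>;
}
interface PageLike {
  locator(selector: string): LocatorLike;
}

// Hypothetical shape of the model's reply.
interface LLMAction {
  element: number; // index into the numbered candidate list
  method: "click" | "fill"; // allowlisted Playwright methods
  args: string[];
}

async function performAction(page: PageLike, xpaths: string[], action: LLMAction): Promise<string> {
  const xpath = xpaths[action.element];
  if (xpath === undefined) throw new Error(`No candidate element ${action.element}`);
  const locator = page.locator(`xpath=${xpath}`);
  switch (action.method) {
    case "click":
      await locator.click();
      break;
    case "fill":
      await locator.fill(action.args[0] ?? "");
      break;
    default: {
      // Reached only if an unchecked reply (e.g. parsed JSON) slips past the type.
      const unknownMethod: string = (action as { method: string }).method;
      throw new Error(`Method not allowed: ${unknownMethod}`);
    }
  }
  return `${action.method} on ${xpath}`;
}
```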

Lastly, we use the LLM to write future instructions to itself to help manage its progress and goals when operating across chunks.
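A sketch of carrying the model's self-written instructions from one chunk to the next, assuming each step returns whether the goal was reached plus a note for the next chunk. ChunkStep, StepFn, and runAcrossChunks are hypothetical, and the model call is injected so the loop runs without an LLM.

```typescript
// Hypothetical model reply: whether the goal was reached, plus a note to its future self.
interface ChunkStep {
  done: boolean;
  nextInstruction: string;
}

type StepFn = (chunk: string, instruction: string) => Promise<ChunkStep>;

// Visits chunks in order, feeding each step the instruction the model wrote last time.
async function runAcrossChunks(
  chunks: string[],
  goal: string,
  step: StepFn,
): Promise<{ done: boolean; chunksVisited: number }> {
  let instruction = goal;
  let visited = 0;
  for (const chunk of chunks) {
    visited++;
    const result = await step(chunk, instruction);
    if (result.done) return { done: true, chunksVisited: visited };
    instruction = result.nextInstruction || goal; // fall back to the original goal
  }
  return { done: false, chunksVisited: visited };
}
```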

Prompting Stagehand is more literal and atomic than prompting other higher-level frameworks, including agentic frameworks. Here are some guidelines to help you craft effective prompts:

Do:

- Use specific and concise actions

await stagehand.act({ action: "click the login button" });

const productInfo = await stagehand.extract({
  instruction: "find the red shoes",
  schema: z.object({
    productName: z.string(),
    price: z.number(),
  }),
});

- Break down complex tasks into smaller, atomic steps

Instead of combining actions:

// Avoid this
await stagehand.act({ action: "log in and purchase the first item" });

Split them into individual steps:

await stagehand.act({ action: "click the login button" });
// ...additional steps to log in...
await stagehand.act({ action: "click on the first item" });
await stagehand.act({ action: "click the purchase button" });

- Use observe() to get actionable suggestions from the current page

const actions = await stagehand.observe();
console.log("Possible actions:", actions);

Don't:

- Use broad or ambiguous instructions

// Too vague
await stagehand.act({ action: "find something interesting on the page" });

- Combine multiple actions into one instruction

// Avoid combining actions
await stagehand.act({ action: "fill out the form and submit it" });

- Expect Stagehand to perform high-level planning or reasoning

// Outside Stagehand's scope
await stagehand.act({ action: "book the cheapest flight available" });

By following these guidelines, you'll increase the reliability and effectiveness of your web automations with Stagehand. Remember, Stagehand excels at executing precise, well-defined actions, so keeping your instructions atomic will lead to the best outcomes.

We leave the agentic behaviour to higher-level agentic systems which can use Stagehand as a tool.
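When Stagehand is used as a tool, the calling system typically issues one atomic instruction at a time and checks each result. A minimal sketch, assuming an act-like function that returns the documented { success, message, action } object; ActResult, ActFn, and runSteps are hypothetical names.

```typescript
// Mirrors the documented act() result.
interface ActResult {
  success: boolean;
  message: string;
  action: string;
}

type ActFn = (opts: { action: string }) => Promise<ActResult>;

// Runs atomic instructions in order and stops at the first failure,
// so the calling agent can re-plan from a known point.
async function runSteps(act: ActFn, steps: string[]): Promise<{ completed: string[]; failed?: ActResult }> {
  const completed: string[] = [];
  for (const action of steps) {
    const result = await act({ action });
    if (!result.success) return { completed, failed: result };
    completed.push(action);
  }
  return { completed };
}
```

With a real instance you would presumably pass (opts) => stagehand.act(opts); stopping at the first failure leaves re-planning to the higher-level agent rather than to Stagehand.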

At a high level, we're focused on improving reliability, speed, and cost in that order of priority.

You can see the roadmap here. Looking to contribute? Read on!

Note

We highly value contributions to Stagehand! For support or code review, please join our Slack community.

First, clone the repo:

git clone git@github.com:browserbase/stagehand.git

Then install dependencies:

npm install

Ensure you have the .env file as documented above in the Getting Started section.

Then run the example script with npm run example.

A good development loop is:

- Try things in the example file

- Use that to make changes to the SDK

- Write evals that help validate your changes

- Make sure you don't break existing evals!

- Open a PR and get it reviewed by the team.

You'll need a Braintrust API key to run evals:

BRAINTRUST_API_KEY=""

After that, you can run the evals using npm run evals.

Running all evals can take some time. We have a convenience script example.ts where you can develop your new single eval before adding it to the set of all evals.

You can run npm run example to execute and iterate on the eval you are currently developing.

To add a new model to Stagehand, follow these steps:

- Define the Model: Add the new model name to the AvailableModel type in the LLMProvider.ts file. This ensures that the model is recognized by the system.
- Map the Model to a Provider: Update the modelToProviderMap in the LLMProvider class to associate the new model with its corresponding provider. This mapping is crucial for determining which client to use.
- Implement the Client: If the new model requires a new client, implement a class that adheres to the LLMClient interface. This class should define all necessary methods, such as createChatCompletion.
- Update the getClient Method: Modify the getClient method in the LLMProvider class to return an instance of the new client when the new model is requested.
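The four steps above can be sketched in a single file. Everything here is simplified and hypothetical: "my-new-model", "myprovider", and MyProviderClient are placeholder names, and the real LLMClient interface and createChatCompletion signature in LLMProvider.ts will differ.

```typescript
// Step 1: the new model name joins the AvailableModel union.
type AvailableModel = "gpt-4o" | "claude-3-5-sonnet-latest" | "my-new-model";
type ModelProvider = "openai" | "anthropic" | "myprovider";

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

// Step 3: every client implements the same (simplified) interface.
interface LLMClient {
  createChatCompletion(options: { model: AvailableModel; messages: ChatMessage[] }): Promise<string>;
}

// Stand-in client; a real one would call the provider's API.
class MyProviderClient implements LLMClient {
  async createChatCompletion(options: { model: AvailableModel; messages: ChatMessage[] }): Promise<string> {
    return `reply from ${options.model}`;
  }
}

class LLMProvider {
  // Step 2: map each model to its provider.
  private modelToProviderMap: Record<AvailableModel, ModelProvider> = {
    "gpt-4o": "openai",
    "claude-3-5-sonnet-latest": "anthropic",
    "my-new-model": "myprovider",
  };

  // Step 4: return the matching client for the requested model.
  getClient(model: AvailableModel): LLMClient {
    const provider = this.modelToProviderMap[model];
    switch (provider) {
      case "myprovider":
        return new MyProviderClient();
      default:
        // The existing OpenAI and Anthropic clients are omitted from this sketch.
        throw new Error(`Client for provider "${provider}" is not included in this sketch`);
    }
  }
}
```

Typing the map as Record&lt;AvailableModel, ModelProvider&gt; makes the compiler reject a model added in step 1 but forgotten in step 2.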

Stagehand uses tsup to build the SDK and vanilla esbuild to build scripts that run in the DOM.

- Run npm run build
- Run npm pack to get a tarball for distribution

This project heavily relies on Playwright as a resilient backbone to automate the web. It also would not be possible without the awesome techniques and discoveries made by tarsier and fuji-web.

Jeremy Press wrote the original MVP of Stagehand and continues to be a major ally to the project.

Licensed under the MIT License.

Copyright 2024 Browserbase, Inc.