This repo contains DialoAMC, a new benchmark corpus for automatic medical consultation systems, as well as the code for reproducing the experiments in the paper A Benchmark for Automatic Medical Consultation System: Frameworks, Tasks and Datasets.

- The test set of DialoAMC is hosted on CBLUE on the TIANCHI platform. See more details at https://github.com/lemuria-wchen/imcs21-cblue. You are welcome to submit your results to CBLUE, or to compare with our results on the validation set.

- See our arXiv paper, A Benchmark for Automatic Medical Consultation System: Frameworks, Tasks and Datasets, for more details.

- DialoAMC is released, containing a total of 4,116 annotated medical consultation records that cover 10 pediatric diseases.

- Updated the dev-set results for the DDP task.

- Added detailed instructions for the DDP task.

We provide code for most of the baseline models, all based on Python 3, together with the environment and running procedure for each baseline.

The baselines include:

- NER task: Lattice LSTM, BERT, ERNIE, FLAT, LEBERT

- DAC task: TextCNN, TextRNN, TextRCNN, DPCNN, BERT, ERNIE

- SLI task: BERT-MLC, BERT-MTL

- MRG task: Seq2Seq, PG, Transformer, T5, ProphetNet

- DDP task: DQN, KR-DQN, REFUEL, GAMP, HRL

To evaluate the NER task, we use two types of metrics: entity-level and token-level. Due to space limitations, only the token-level results are reported in our paper.

For entity-level metrics, we report the F1 score for each entity category, as well as the overall F1 score, following the CoNLL-2003 setting.
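As an illustration of entity-level scoring, the sketch below follows the usual CoNLL-2003 convention: entities are decoded from BIO tags, and a prediction counts only if its type and span both match exactly. It assumes BIO tags of the form `B-SX`/`I-SX` (the repository's tag scheme may differ) and is not the repository's own scorer; packages such as `seqeval` implement the same convention.

```python
from collections import Counter


def extract_entities(tags):
    """Collect (type, start, end) spans from a BIO tag sequence; end is exclusive."""
    entities = set()
    start, etype = None, None
    # Append a sentinel "O" so an entity running to the end of the sequence is closed.
    for i, tag in enumerate(list(tags) + ["O"]):
        starts_new = tag.startswith("B-") or (tag.startswith("I-") and tag[2:] != etype)
        if tag == "O" or starts_new:
            if etype is not None:
                entities.add((etype, start, i))
            # CoNLL convention: a stray I- tag opens a new entity of its own type.
            start, etype = (i, tag[2:]) if starts_new else (None, None)
        # Otherwise an I- tag of the current type simply extends the open span.
    return entities


def prf(tp, n_gold, n_pred):
    """Precision, recall and F1 from raw counts (0.0 when undefined)."""
    precision = tp / n_pred if n_pred else 0.0
    recall = tp / n_gold if n_gold else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def entity_f1(gold_seqs, pred_seqs):
    """Per-category F1 and overall (micro) F1 over exact-match entity spans."""
    tp, n_gold, n_pred = Counter(), Counter(), Counter()
    for gold, pred in zip(gold_seqs, pred_seqs):
        g, p = extract_entities(gold), extract_entities(pred)
        n_gold.update(e[0] for e in g)
        n_pred.update(e[0] for e in p)
        tp.update(e[0] for e in g & p)
    scores = {t: prf(tp[t], n_gold[t], n_pred[t])[2] for t in n_gold.keys() | n_pred.keys()}
    scores["Overall"] = prf(sum(tp.values()), sum(n_gold.values()), sum(n_pred.values()))[2]
    return scores
```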

For token-level metrics, we report micro-averaged Precision, Recall and F1 score.
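A minimal sketch of the token-level micro scores, under a stated assumption: every token whose gold or predicted tag is not `O` is treated as a labelled item, and a prediction is correct only when its full tag equals the gold tag. The repository's exact token-level definition may differ.

```python
def token_micro_prf(gold_seqs, pred_seqs, outside="O"):
    """Micro-averaged token-level precision, recall and F1.

    Assumption: every non-"O" token is a labelled item, and a prediction counts as
    correct only when the full tag (e.g. "B-SX") matches the gold tag exactly.
    """
    tp = n_gold = n_pred = 0
    for gold, pred in zip(gold_seqs, pred_seqs):
        for g, p in zip(gold, pred):
            n_gold += int(g != outside)
            n_pred += int(p != outside)
            tp += int(g != outside and g == p)
    precision = tp / n_pred if n_pred else 0.0
    recall = tp / n_gold if n_gold else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1
```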

The following baselines are available:

Entity-level columns (SX, DN, DC, EX, OP, Overall) report F1 scores; token-level columns report P, R and F1.

| Models | Split | SX | DN | DC | EX | OP | Overall | P | R | F1 |
|---|---|---|---|---|---|---|---|---|---|---|
| Lattice LSTM | Dev | 90.61 | 88.12 | 90.89 | 90.44 | 91.14 | 90.33 | 89.62 | 91.00 | 90.31 |
| | Test | 90.00 | 87.84 | 91.32 | 90.55 | 93.42 | 90.10 | 89.37 | 90.84 | 90.10 |
| BERT | Dev | 91.15 | 89.74 | 90.97 | 90.74 | 92.57 | 90.95 | 88.99 | 92.43 | 90.68 |
| | Test | 90.59 | 89.97 | 90.54 | 90.48 | 94.39 | 90.64 | 88.46 | 92.35 | 90.37 |
| ERNIE | Dev | 91.28 | 89.68 | 90.92 | 91.15 | 92.65 | 91.08 | 89.36 | 92.46 | 90.88 |
| | Test | 90.67 | 89.89 | 90.73 | 90.97 | 94.33 | 90.78 | 88.87 | 92.27 | 90.53 |
| FLAT | Dev | 90.90 | 89.95 | 90.64 | 90.58 | 93.14 | 90.80 | 88.89 | 92.23 | 90.53 |
| | Test | 90.45 | 89.67 | 90.35 | 91.12 | 93.47 | 90.58 | 88.76 | 92.07 | 90.38 |
| LEBERT | Dev | 92.61 | 90.67 | 90.71 | 92.39 | 92.30 | 92.11 | 86.95 | 93.05 | 89.90 |
| | Test | 92.14 | 90.31 | 91.16 | 92.35 | 93.94 | 91.92 | 86.53 | 92.91 | 89.60 |

To evaluate the DAC task, we report macro-averaged Precision, Recall and F1 score, as well as Accuracy.
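For reference, macro averaging computes P, R and F1 per utterance category and then takes their unweighted mean. The sketch below assumes the common convention that macro F1 is the mean of per-class F1 scores (rather than the F1 of macro P and macro R); the labels in any example input are placeholders, not the dataset's category names.

```python
def macro_prf_and_accuracy(gold, pred):
    """Macro-averaged P/R/F1 (mean of per-class scores) plus accuracy."""
    labels = sorted(set(gold) | set(pred))
    per_class = []
    for label in labels:
        tp = sum(g == label and p == label for g, p in zip(gold, pred))
        n_pred = sum(p == label for p in pred)
        n_gold = sum(g == label for g in gold)
        precision = tp / n_pred if n_pred else 0.0
        recall = tp / n_gold if n_gold else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        per_class.append((precision, recall, f1))
    k = len(per_class)
    # Unweighted mean over classes for each of P, R and F1.
    macro_p, macro_r, macro_f1 = (sum(col) / k for col in zip(*per_class))
    accuracy = sum(g == p for g, p in zip(gold, pred)) / len(gold)
    return macro_p, macro_r, macro_f1, accuracy
```

If scikit-learn is available, `precision_recall_fscore_support(gold, pred, average="macro")` and `accuracy_score(gold, pred)` compute the same quantities under this convention.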

The following baselines are available:

| Models | Split | P | R | F1 | Acc |
|---|---|---|---|---|---|
| TextCNN | Dev | 73.09 | 70.26 | 71.26 | 77.77 |
| | Test | 74.76 | 70.06 | 71.91 | 78.93 |
| TextRNN | Dev | 74.02 | 68.43 | 70.71 | 78.14 |
| | Test | 72.53 | 70.99 | 71.23 | 78.46 |
| TextRCNN | Dev | 71.43 | 72.68 | 71.50 | 77.67 |
| | Test | 74.29 | 72.07 | 72.84 | 79.49 |
| DPCNN | Dev | 70.10 | 70.91 | 69.85 | 77.14 |
| | Test | 71.29 | 71.82 | 71.38 | 77.91 |
| BERT | Dev | 75.19 | 76.31 | 75.66 | 81.00 |
| | Test | 75.53 | 77.24 | 76.28 | 81.65 |
| ERNIE | Dev | 76.04 | 76.82 | 76.37 | 81.60 |
| | Test | 75.72 | 76.94 | 76.25 | 81.91 |

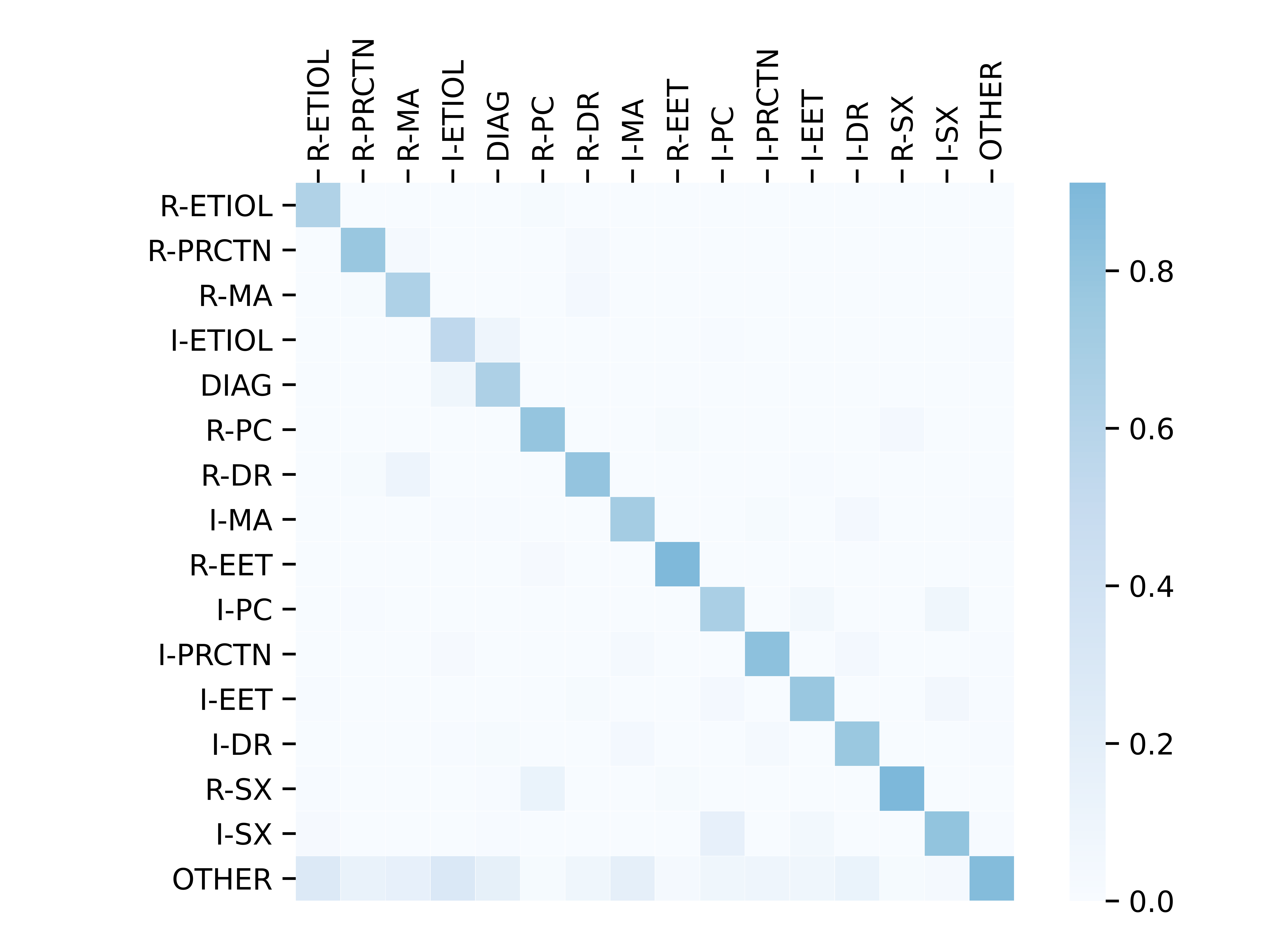

The visualization of the classification confusion matrix predicted by ERNIE model on the test set is demonstrated in the below figure. It can be seen that there are few classification errors in most utterance categories, except for OTHER category.

The evaluation of the SLI-EXP and SLI-IMP tasks differs slightly, since the SLI-IMP task must additionally predict the symptom label. We therefore divide evaluation into two steps: the first evaluates symptom recognition, and the second evaluates symptom (label) inference.

Symptom recognition considers only whether the symptom entities are identified. We use multi-label classification metrics that are widely discussed in the paper A Unified View of Multi-Label Performance Measures, including the example-based metrics Subset Accuracy (SA), Hamming Loss (HL) and Hamming Score (HS), and the label-based metrics Precision (P), Recall (R) and F1 score (F1), all micro-averaged.
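The sketch below spells out these metrics for one set of symptoms per example. It uses textbook definitions as an assumption: Hamming loss is normalised by the size of the symptom vocabulary (`num_labels`, a hypothetical parameter), and Hamming score is taken as the per-example Jaccard overlap. The repository's evaluation script may normalise these differently, so the numbers are not guaranteed to match the table.

```python
def multilabel_metrics(gold_sets, pred_sets, num_labels):
    """Example-based (SA, HL, HS) and label-based micro P/R/F1 for symptom recognition.

    gold_sets / pred_sets: one set of symptom names per example.
    num_labels: size of the symptom vocabulary, used to normalise Hamming loss.
    """
    pairs = list(zip(gold_sets, pred_sets))
    n = len(pairs)
    subset_acc = sum(g == p for g, p in pairs) / n
    # Symmetric difference = symptoms that are missed or spuriously predicted.
    hamming_loss = sum(len(g ^ p) for g, p in pairs) / (n * num_labels)
    # Hamming score here: size of intersection divided by size of union, per example.
    hamming_score = sum(len(g & p) / len(g | p) if g | p else 1.0 for g, p in pairs) / n
    tp = sum(len(g & p) for g, p in pairs)
    n_pred = sum(len(p) for _, p in pairs)
    n_gold = sum(len(g) for g, _ in pairs)
    precision = tp / n_pred if n_pred else 0.0
    recall = tp / n_gold if n_gold else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"SA": subset_acc, "HL": hamming_loss, "HS": hamming_score,
            "P": precision, "R": recall, "F1": f1}
```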

Symptom label inference is evaluated only on the symptoms that are correctly identified, and checks whether their predicted labels are correct. We report the F1 score for each symptom label (Positive, Negative and Not sure), as well as the overall (macro) F1 score and the accuracy.
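A minimal sketch of this second step, assuming each example's gold and predicted symptoms are stored as a hypothetical `{symptom: label}` dictionary with labels `POS`, `NEG` and `NS` (mirroring the table columns; the dataset's actual label encoding may differ). Only symptoms that appear in both dictionaries are scored.

```python
def symptom_inference_metrics(gold_dicts, pred_dicts, labels=("POS", "NEG", "NS")):
    """Per-label F1, macro F1 ("Overall") and accuracy on correctly identified symptoms.

    gold_dicts / pred_dicts: one {symptom: label} dict per example (hypothetical layout).
    """
    gold_labels, pred_labels = [], []
    for gold, pred in zip(gold_dicts, pred_dicts):
        # Only symptoms present in both gold and prediction are scored.
        for symptom in gold.keys() & pred.keys():
            gold_labels.append(gold[symptom])
            pred_labels.append(pred[symptom])
    scores = {}
    for label in labels:
        tp = sum(g == label and p == label for g, p in zip(gold_labels, pred_labels))
        n_pred, n_gold = pred_labels.count(label), gold_labels.count(label)
        precision = tp / n_pred if n_pred else 0.0
        recall = tp / n_gold if n_gold else 0.0
        scores[label] = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    scores["Overall"] = sum(scores[label] for label in labels) / len(labels)
    correct = sum(g == p for g, p in zip(gold_labels, pred_labels))
    scores["Acc"] = correct / len(gold_labels) if gold_labels else 0.0
    return scores
```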

The following baselines are available:

NOTE: BERT-MLC applies to both the SLI-EXP and SLI-IMP tasks, while BERT-MTL applies only to the SLI-IMP task.

In the symptom recognition table, SA, HL and HS are example-based metrics, and P, R and F1 are label-based metrics.

| Models | Split | SA | HL | HS | P | R | F1 |
|---|---|---|---|---|---|---|---|
| SLI-EXP (Symptom Recognition) | | | | | | | |
| BERT-MLC | Dev | 75.63 | 10.12 | 86.53 | 86.50 | 93.80 | 90.00 |
| | Test | 73.24 | 10.10 | 84.58 | 86.33 | 93.14 | 89.60 |
| SLI-IMP (Symptom Recognition) | | | | | | | |
| BERT-MLC | Dev | 33.61 | 40.87 | 81.34 | 85.03 | 95.40 | 89.91 |
| | Test | 34.16 | 39.52 | 82.22 | 84.98 | 94.81 | 89.63 |
| BERT-MTL | Dev | 36.61 | 38.12 | 84.33 | 95.83 | 86.67 | 91.02 |
| | Test | 35.88 | 38.77 | 83.76 | 96.11 | 86.18 | 90.88 |

SLI-IMP (Symptom Inference):

| Models | Split | POS | NEG | NS | Overall | Acc |
|---|---|---|---|---|---|---|
| BERT-MLC | Dev | 81.85 | 47.99 | 58.42 | 62.76 | 72.84 |
| | Test | 81.25 | 46.53 | 59.14 | 62.31 | 71.99 |
| BERT-MTL | Dev | 79.83 | 53.38 | 60.94 | 64.72 | 71.38 |
| | Test | 79.64 | 53.87 | 60.20 | 64.57 | 71.08 |

In the MRG task, we concatenate all utterances whose category is not OTHER and use the result to generate medical reports. During inference, the utterance categories in the test set are predicted in advance by the ERNIE model trained on the DAC task.
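The input construction can be sketched as follows. The utterance schema (`speaker` and `sentence` keys), the `speaker: sentence` formatting and the space separator are assumptions for illustration; only the rule of dropping OTHER utterances comes from the description above.

```python
def build_report_source(utterances, predicted_acts, other_label="OTHER"):
    """Concatenate every utterance whose (predicted) category is not OTHER.

    utterances: list of {"speaker": ..., "sentence": ...} dicts (hypothetical schema).
    predicted_acts: one category per utterance, e.g. produced by the DAC classifier.
    """
    kept = [
        f"{utt['speaker']}: {utt['sentence']}"
        for utt, act in zip(utterances, predicted_acts)
        if act != other_label
    ]
    return " ".join(kept)
```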

To evaluate the MRG task, we report BLEU and ROUGE scores, namely BLEU-2/4 and ROUGE-1/2/L. We also report the Concept F1 score (C-F1), which measures how well the model captures important medical concepts, and the Regex-based Diagnostic Accuracy (RD-Acc), which measures the model's ability to diagnose the disease.

The following baselines are available:

NOTE: C-F1 is computed with the BERT model trained on the NER task; see eval_ner_f1.py for details. RD-Acc is computed with a simple regex-based method; see eval_acc.py for details.
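To make the two report-level metrics concrete, here is a hedged sketch that is not the code in eval_ner_f1.py or eval_acc.py. Concept F1 is computed as micro F1 between concept sets from the reference and generated reports, with a hypothetical `extract_concepts` callable standing in for the trained NER model. RD-Acc is approximated by searching each generated report for the first name from a given disease list and comparing it with the gold disease; the disease list and matching rule are assumptions.

```python
import re


def concept_f1(references, hypotheses, extract_concepts):
    """Micro F1 between concept sets extracted from reference and generated reports.

    extract_concepts: callable text -> set of concepts; a stand-in for the trained
    NER model (hypothetical interface).
    """
    tp = n_ref = n_hyp = 0
    for ref, hyp in zip(references, hypotheses):
        ref_c, hyp_c = extract_concepts(ref), extract_concepts(hyp)
        tp += len(ref_c & hyp_c)
        n_ref += len(ref_c)
        n_hyp += len(hyp_c)
    precision = tp / n_hyp if n_hyp else 0.0
    recall = tp / n_ref if n_ref else 0.0
    return 2 * precision * recall / (precision + recall) if precision + recall else 0.0


def regex_diagnosis(report, diseases):
    """Return the first disease name found in the report, or None."""
    # Longer names first so that, at the same position, a longer name wins over its prefix.
    alternatives = sorted(diseases, key=len, reverse=True)
    match = re.search("|".join(map(re.escape, alternatives)), report)
    return match.group(0) if match else None


def regex_diagnostic_accuracy(hypotheses, gold_diseases, diseases):
    """Fraction of generated reports whose regex-extracted disease equals the gold one."""
    hits = sum(regex_diagnosis(h, diseases) == g for h, g in zip(hypotheses, gold_diseases))
    return hits / len(gold_diseases)
```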

| Models | B-2 | B-4 | R-1 | R-2 | R-L | C-F1 | RD-Acc |
|---|---|---|---|---|---|---|---|
| Seq2Seq | 54.43 | 43.95 | 54.13 | 43.98 | 50.42 | 36.73 | 48.34 |
| PG | 58.31 | 49.31 | 59.46 | 49.79 | 56.34 | 46.36 | 56.60 |
| Transformer | 58.57 | 47.67 | 57.25 | 46.29 | 53.29 | 40.64 | 54.50 |
| T5 | 62.57 | 52.48 | 61.20 | 50.98 | 58.18 | 46.55 | 47.60 |
| ProphetNet | 58.11 | 49.06 | 61.18 | 50.33 | 57.94 | 49.61 | 55.36 |

To evaluate the DDP task, we report Symptom Recall (Rec), Diagnostic Accuracy (Acc) and the average number of interactions (# Turns).
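A sketch of the three DDP metrics over a batch of simulated consultations, using a hypothetical `Episode` record. It assumes symptom recall is pooled over episodes (discovered implicit symptoms divided by all implicit symptoms) and that # Turns counts only symptom inquiries; the baseline implementations may count turns or average recall differently.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Episode:
    """One simulated consultation (hypothetical record layout)."""
    implicit_symptoms: Set[str]   # gold symptoms the agent is expected to discover
    requested: List[str]          # symptoms the agent asked about, in order
    predicted_disease: str
    gold_disease: str


def ddp_metrics(episodes):
    """Pooled symptom recall, diagnostic accuracy and mean number of inquiry turns."""
    hits = total = correct = turns = 0
    for ep in episodes:
        hits += len(ep.implicit_symptoms & set(ep.requested))
        total += len(ep.implicit_symptoms)
        correct += int(ep.predicted_disease == ep.gold_disease)
        turns += len(ep.requested)
    n = len(episodes)
    return {"Rec": hits / total if total else 0.0, "Acc": correct / n, "# Turns": turns / n}
```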

The following baselines are available:

NOTE: We use open-source implementations for all baselines, since none of the original papers released official code.

| Models | Rec | Acc | # Turns |
|---|---|---|---|
| DQN | 0.047 | 0.408 | 9.750 |
| REFUEL | 0.262 | 0.505 | 5.500 |
| KR-DQN | 0.279 | 0.485 | 5.950 |
| GAMP | 0.067 | 0.500 | 1.780 |
| HRL | 0.295 | 0.556 | 6.990 |

If you extend or use this work, please cite the paper where it was introduced.

    @article{chen2022benchmark,
      title={A Benchmark for Automatic Medical Consultation System: Frameworks, Tasks and Datasets},
      author={Chen, Wei and Li, Zhiwei and Fang, Hongyi and Yao, Qianyuan and Zhong, Cheng and Hao, Jianye and Zhang, Qi and Huang, Xuanjing and Wei, Zhongyu and others},
      journal={arXiv preprint arXiv:2204.08997},
      year={2022}
    }