diskover - File system crawler, disk space usage, file search engine and storage analytics powered by Elasticsearch

diskover is an open source file system crawler and disk space usage software that uses Elasticsearch to index and manage data across heterogeneous storage systems. With diskover, you can search and organize files more effectively, and system administrators can manage storage infrastructure, provision storage efficiently, monitor and report on storage use, and make better-informed decisions about new infrastructure purchases.

As the amount of file data generated by businesses continues to expand, so does the strain on expensive storage infrastructure, on users and system administrators, and on IT budgets.

Using diskover, users can identify old and unused files and gain better insight into data change, file duplication and wasted space. diskover supports crawling local file systems, file systems mounted over NFS/SMB, and storage reached through TCP sockets. Amazon S3 inventory files are also supported.

diskover is written and maintained by Chris Park (shirosai) and runs on Linux and OS X/macOS using Python 2/3.

-- linuxserver.io community member

diskover crawler and worker bots running in a terminal

diskover-web (diskover's web file manager, analytics app, file system search engine, rest-api)

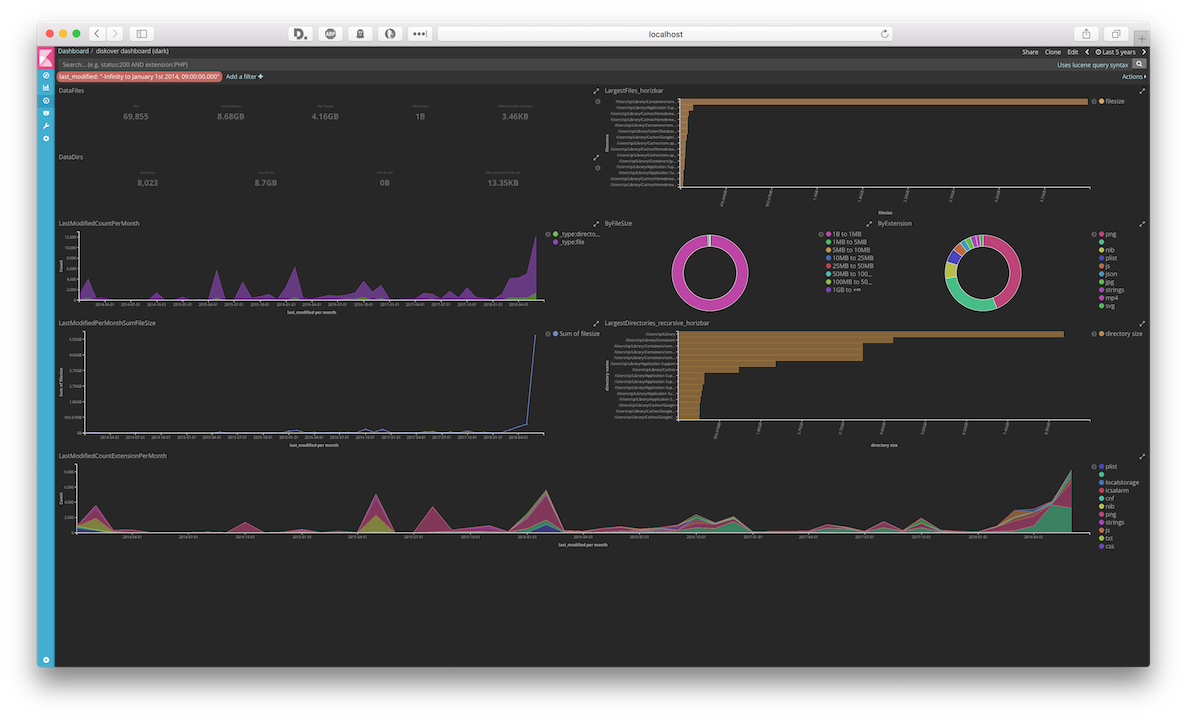

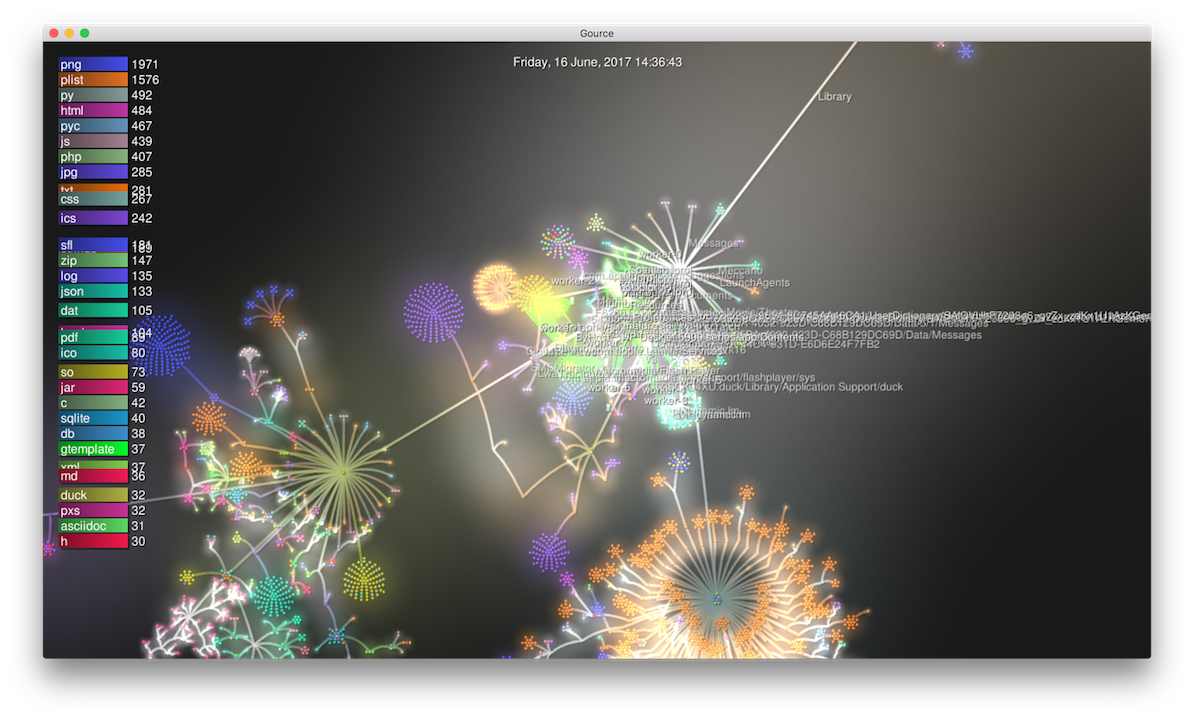

Kibana dashboards/saved searches/visualizations and support for Gource

If you are a fan of the project or you are using diskover and it's helping you save storage space, please consider supporting the project on Patreon or PayPal. Thank you so much to all the fans and supporters!

Requirements:

- Linux or OS X/macOS (tested on OS X 10.11.6, Ubuntu 16.04/18.04), or Windows 10 (using Windows Subsystem for Linux)

- Python 2.7 or Python 3.5/3.6 (tested on Python 2.7.15 and 3.6.5); Python 3 recommended

- Python elasticsearch client module

- Python requests module

- Python scandir module

- Python progressbar2 module

- Python redis module

- Python rq module

- Elasticsearch 5.6.x (local or AWS ES Service, tested on Elasticsearch 5.6.9); Elasticsearch 6 is not supported

- Redis 4.x (tested on 4.0.9)

See requirements.txt for specific Python module version numbers, since newer versions may not work with diskover.
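Before installing, a quick way to see whether the current interpreter and installed modules match the list above is a small Python check like the following. It is a sketch, not part of diskover: the function names are hypothetical, the import names are assumptions (progressbar2 is imported as `progressbar`), and the version check only compares against the tested versions listed above.

```python
import importlib
import sys

# Assumed import names for the Python modules listed above
# (the progressbar2 package installs a module named "progressbar").
REQUIRED_MODULES = ["elasticsearch", "requests", "scandir", "progressbar", "redis", "rq"]


def python_version_ok(version_info=sys.version_info):
    """Return True if (major, minor) is one of the listed versions: 2.7, 3.5 or 3.6."""
    major, minor = version_info[0], version_info[1]
    return (major, minor) in {(2, 7), (3, 5), (3, 6)}


def missing_modules(names=REQUIRED_MODULES):
    """Return the module names from `names` that cannot be imported."""
    missing = []
    for name in names:
        try:
            importlib.import_module(name)
        except ImportError:
            missing.append(name)
    return missing
```

After `pip install -r requirements.txt` succeeds, `missing_modules()` should return an empty list.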

Optional installs:

- diskover-web (diskover's web file manager and analytics app)

- Redis RQ Dashboard (for monitoring redis queue)

- netdata (for real-time monitoring of CPU/disk/memory/network/Elasticsearch/Redis and other metrics; a plugin for rq-dashboard is in the netdata directory)

- Kibana (for visualizing Elasticsearch data, tested on Kibana 5.6.9)

- X-Pack (Kibana plugin for graphs, reports, monitoring and HTTP auth)

- Grafana ES dashboard (Grafana dashboard for Elasticsearch)

- crontab-ui (web UI for managing cron jobs, for scheduling crawls)

- cronkeep (alternative web UI for managing cron jobs)

- Gource (for Gource visualizations of diskover Elasticsearch data)

- sharesniffer (for scanning your network for file shares and auto-mounting for crawls)

- Python qumulo-api module (for using the Qumulo storage API via the --qumulo CLI option; install with pip; Python 2.7 only)

$ git clone https://github.com/shirosaidev/diskover.git

$ cd diskover

Check that Elasticsearch and Redis are running and are the required versions (see requirements above).

$ curl -X GET http://localhost:9200/

$ redis-cli info

Install the Python dependencies using pip.

$ pip install -r requirements.txt

Copy the diskover config file diskover.cfg.sample to diskover.cfg and edit it for your environment.
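The Elasticsearch and Redis version checks can also be scripted. This hypothetical sketch parses the JSON returned by `GET http://localhost:9200/` (its `version.number` field) and the `redis_version:` line printed by `redis-cli info`, then compares them against the Elasticsearch 5.6.x and Redis 4.x requirements. The helper names are illustrative only; the fetch helpers assume the default local host and port and that `redis-cli` is on the PATH.

```python
import json
import subprocess
from urllib.request import urlopen


def fetch_es_root(url="http://localhost:9200/"):
    """Return the body of GET / on Elasticsearch (same request as the curl command)."""
    with urlopen(url) as resp:
        return resp.read().decode("utf-8")


def fetch_redis_info():
    """Return the output of `redis-cli info` (assumes redis-cli is on PATH)."""
    return subprocess.check_output(["redis-cli", "info"]).decode("utf-8")


def es_version(root_body):
    """Extract version.number from the Elasticsearch root endpoint JSON."""
    return json.loads(root_body)["version"]["number"]


def redis_version(info_text):
    """Extract the value of the redis_version field from INFO output."""
    for line in info_text.splitlines():
        if line.startswith("redis_version:"):
            return line.split(":", 1)[1].strip()
    raise ValueError("redis_version not found in INFO output")


def version_problems(es_ver, redis_ver):
    """Compare against the requirements: Elasticsearch 5.6.x and Redis 4.x."""
    problems = []
    if not es_ver.startswith("5.6."):
        problems.append("Elasticsearch %s found, 5.6.x required" % es_ver)
    if not redis_ver.startswith("4."):
        problems.append("Redis %s found, 4.x required" % redis_ver)
    return problems
```

On a correctly set up host, `version_problems(es_version(fetch_es_root()), redis_version(fetch_redis_info()))` should return an empty list.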

Start the diskover worker bots (a good number might be twice the number of CPU cores) with:

$ cd /path/with/diskover

$ python diskover_worker_bot.py

Worker bots can be added during a crawl to help work through the queue. To run a worker bot in burst mode (quit after all jobs are done), use the -b flag. Burst-mode bots exit when the queue is empty, so use rq info or rq-dashboard to check whether they are still running.
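The cores x 2 rule of thumb and the -b burst flag can be expressed in a few lines of Python. This is a hypothetical helper, not part of diskover (the bundled diskover-bot-launcher.sh is the supported way to start several bots); it assumes it is run with `cwd` set to /path/with/diskover so that diskover_worker_bot.py resolves.

```python
import os
import subprocess
import sys


def recommended_bot_count():
    """Rule of thumb from this README: about twice the number of CPU cores."""
    return (os.cpu_count() or 1) * 2


def bot_command(burst=False, script="diskover_worker_bot.py"):
    """Build the argv for one worker bot; burst=True adds -b (quit when the queue is empty)."""
    cmd = [sys.executable, script]
    if burst:
        cmd.append("-b")
    return cmd


def start_bots(count=None, burst=False, cwd="."):
    """Hypothetical helper: start `count` bots as background processes and return them."""
    if count is None:
        count = recommended_bot_count()
    return [subprocess.Popen(bot_command(burst), cwd=cwd) for _ in range(count)]
```

For example, `bots = start_bots(burst=True, cwd="/path/with/diskover")` followed by `for b in bots: b.wait()` starts burst-mode bots and blocks until they have all exited.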

To start up multiple bots, run:

$ cd /path/with/diskover

$ ./diskover-bot-launcher.sh

By default, this starts 8 bots. See -h for CLI options, including changing the number of bots to start. Bots can run on the same host as the diskover.py crawler or on multiple hosts in the network, as long as each host has the same NFS/CIFS mountpoint as rootdir (-d path) and can connect to ES and Redis (see the wiki for more info).
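When running bots on other hosts, a small preflight check can catch the conditions mentioned above before any bots are started: rootdir must exist at the same path, and Elasticsearch and Redis must be reachable. The sketch below is hypothetical; it assumes the default ports 9200 (Elasticsearch) and 6379 (Redis), and `mount_point` only reports which local mount contains rootdir, so you can compare it across hosts.

```python
import os
import socket


def can_connect(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def mount_point(path):
    """Return the mount point containing `path` (walks up until os.path.ismount)."""
    path = os.path.realpath(path)
    while not os.path.ismount(path):
        path = os.path.dirname(path)
    return path


def preflight(rootdir, es=("localhost", 9200), redis=("localhost", 6379)):
    """List problems that would stop a bot on this host from working on rootdir."""
    problems = []
    if not os.path.isdir(rootdir):
        problems.append("rootdir not found: %s" % rootdir)
    if not can_connect(*es):
        problems.append("cannot reach Elasticsearch at %s:%d" % es)
    if not can_connect(*redis):
        problems.append("cannot reach Redis at %s:%d" % redis)
    return problems
```

On each bot host, something like `preflight("/rootpath/to/crawl", es=("eshost", 9200), redis=("redishost", 6379))` (with your own hostnames in place of the placeholders `eshost` and `redishost`) should return an empty list.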

Start the diskover main job dispatcher and file tree crawler (here using the adaptive batch size and optimize index CLI flags) with:

$ python /path/to/diskover.py -d /rootpath/you/want/to/crawl -i diskover-indexname -a -O

With no flags, a crawl indexes from . (the current directory) and includes files >0 bytes with a 0-day modified-time filter. Empty files and directories are skipped (unless you use the -s 0 and -e flags). Symlinks are not followed; they are skipped. Use -h to see the CLI options.
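To make the default filters concrete, here is a rough Python illustration of a tree walk that skips symlinks and empty files. It is not diskover's crawler code; the `min_size` and `mtime_days` parameters are hypothetical stand-ins for the -s and -m flags, and their exact semantics (size of at least `min_size` bytes; +N meaning modified more than N days ago, -N meaning modified within the last N days) are assumptions inferred from the examples in this README. Empty-directory handling (-e) is not modeled.

```python
import os
import time

SECONDS_PER_DAY = 86400


def keep_file(st, min_size=1, mtime_days=0, now=None):
    """Assumed filter: size >= min_size; mtime_days 0 = no age filter,
    +N = modified more than N days ago, -N = modified within the last N days."""
    if st.st_size < min_size:
        return False
    if mtime_days == 0:
        return True
    now = time.time() if now is None else now
    age_days = (now - st.st_mtime) / SECONDS_PER_DAY
    if mtime_days > 0:
        return age_days > mtime_days
    return age_days <= -mtime_days


def walk_files(rootdir, min_size=1, mtime_days=0):
    """Yield (path, size) for regular files under rootdir, never following symlinks."""
    stack = [rootdir]
    while stack:
        path = stack.pop()
        with os.scandir(path) as it:
            for entry in it:
                if entry.is_symlink():
                    continue  # symlinks are skipped, not followed
                if entry.is_dir(follow_symlinks=False):
                    stack.append(entry.path)
                elif entry.is_file(follow_symlinks=False):
                    st = entry.stat(follow_symlinks=False)
                    if keep_file(st, min_size, mtime_days):
                        yield entry.path, st.st_size
```

With the defaults, `walk_files(".")` skips empty files and symlinks; `walk_files(".", min_size=0)` would also include empty files.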

Crawl down to a maximum tree depth of 3:

$ python diskover.py -i diskover-indexname -a -d /rootpath/to/crawl -M 3

Only index files with a modified time >90 days and a file size >1 KB:

$ python diskover.py -i diskover-indexname -a -d /rootpath/to/crawl -m +90 -s 1024

Only index files that have been modified in the last 7 days, including empty files and directories:

$ python diskover.py -i diskover-indexname -a -d /rootpath/to/crawl -m -7 -s 0 -e

Store the cost per GB (from the diskover.cfg settings) in the ES index, and use size on disk (disk usage) instead of file size:

$ python diskover.py -i diskover-index -a -d /rootpath/to/crawl -G -S

Create an index with just the level 1 directories and files, then run background crawls in parallel for each directory in rootdir and merge the data into the same index. After all crawls have finished, calculate the rootdir doc's size/item counts:

$ python diskover.py -i diskover-indexname -a -d /rootpath/to/crawl --maxdepth 1

$ python diskover.py -i diskover-indexname -a -d /rootpath/to/crawl/dir1 --reindexrecurs -q &

$ python diskover.py -i diskover-indexname -a -d /rootpath/to/crawl/dir2 --reindexrecurs -q &

...

$ python diskover.py -i diskover-indexname -a -d /rootpath/to/crawl --dircalcsonly --maxdcdepth 0

diskover also has a TCP socket client that can walk the tree locally on the storage host and send the data to the diskover socket server, which acts as a proxy for enqueuing to Redis RQ.
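The parallel-crawl sequence shown earlier (a level-1 index, one background crawl per top-level directory, then a final --dircalcsonly pass) can also be driven from Python. This is a hypothetical sketch that uses only the diskover flags from those commands; the helper names and the choice to skip symlinked top-level directories are assumptions, and `run_parallel_crawl` waits for every per-directory crawl to exit before starting the final pass.

```python
import os
import subprocess
import sys


def level1_dirs(rootdir):
    """Top-level directories of rootdir (symlinked directories are skipped)."""
    with os.scandir(rootdir) as it:
        return sorted(e.path for e in it if e.is_dir(follow_symlinks=False))


def crawl_commands(diskover_py, index, rootdir):
    """Build the three stages of the parallel crawl as argv lists."""
    base = [sys.executable, diskover_py, "-i", index, "-a"]
    first = base + ["-d", rootdir, "--maxdepth", "1"]
    per_dir = [base + ["-d", d, "--reindexrecurs", "-q"] for d in level1_dirs(rootdir)]
    final = base + ["-d", rootdir, "--dircalcsonly", "--maxdcdepth", "0"]
    return first, per_dir, final


def run_parallel_crawl(diskover_py, index, rootdir):
    first, per_dir, final = crawl_commands(diskover_py, index, rootdir)
    subprocess.check_call(first)                        # level 1 dirs and files
    procs = [subprocess.Popen(cmd) for cmd in per_dir]  # one background crawl per dir
    failed = [p.args for p in procs if p.wait() != 0]   # wait for all crawls to finish
    if failed:
        raise RuntimeError("crawl(s) failed: %r" % failed)
    subprocess.check_call(final)                        # rootdir size/item counts
```

For example, `run_parallel_crawl("/path/to/diskover.py", "diskover-indexname", "/rootpath/to/crawl")` runs the same steps as the shell commands, without the manual `&` bookkeeping.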

Becoming a Patron gets you access to the OVA files running the latest version of diskover/diskover-web, which is the fastest way to get diskover up and running. Check out the Patreon page to learn more about how to get access to the OVA downloads.

You can also set up diskover and diskover-web in Docker; there are a few options for easily running diskover in Docker using pre-built images and compose files.

linuxserver.io Docker hub image: https://hub.docker.com/r/linuxserver/diskover/

diskover-web has a Dockerfile with instructions for docker-compose.

Read the wiki for more documentation on how to use diskover.

For discussions or questions about diskover, please ask in the Google Group.

To report bugs in diskover, please use the issues page.

For questions or support with diskover you can also contact me on linuxserver.io Discord @shirosai.

See the license file.