ControlVideo

Official PyTorch implementation of "ControlVideo: Training-free Controllable Text-to-Video Generation"

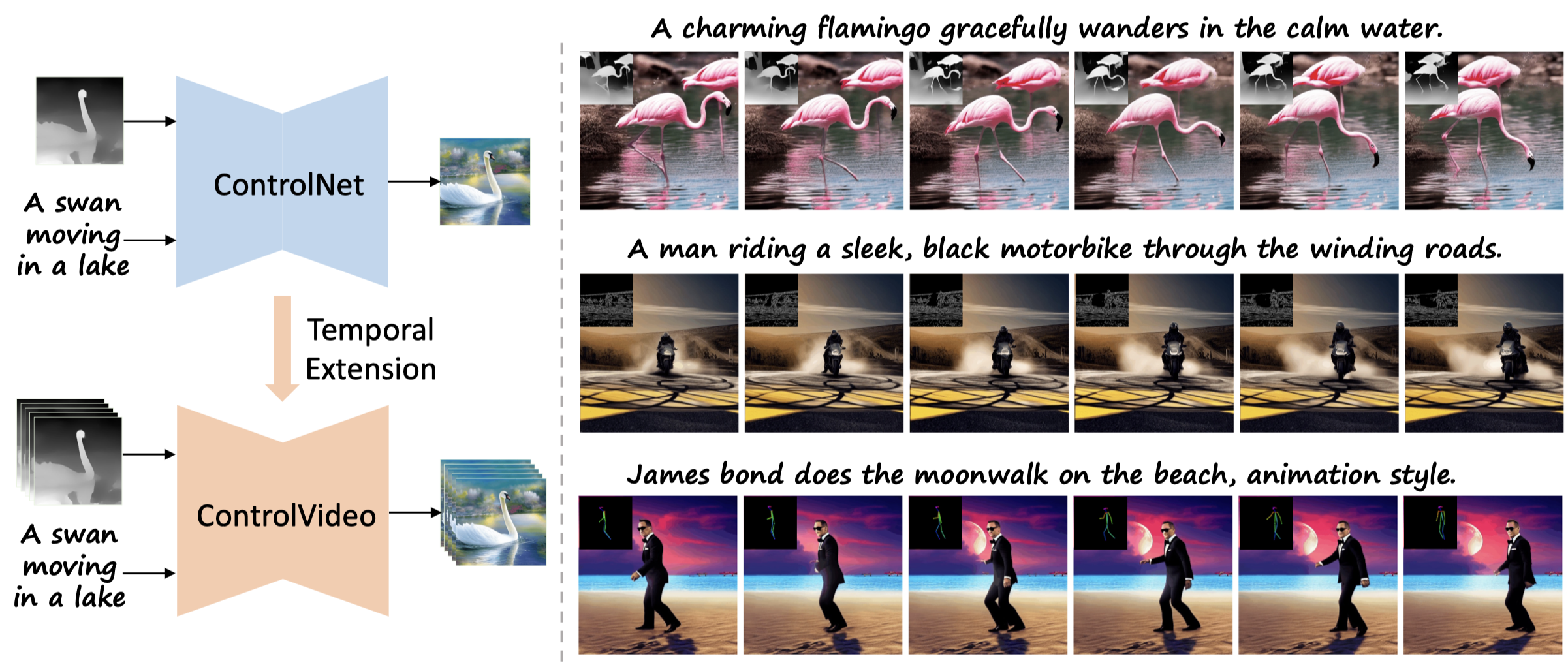

ControlVideo adapts ControlNet to video generation without any finetuning, aiming to directly inherit its high-quality and consistent generation.

News

- [05/28/2023] Thanks to chenxwh for adding a Replicate demo!

- [05/25/2023] ControlVideo code released!

- [05/23/2023] ControlVideo paper released!

Setup

1. Download Weights

Download all pre-trained weights to the checkpoints/ directory. This includes the pre-trained weights of Stable Diffusion v1.5 and of the ControlNet models conditioned on canny edges, depth maps, and human poses.

flownet.pkl contains the weights of RIFE.

The final file tree looks like this:

checkpoints
├── stable-diffusion-v1-5
├── sd-controlnet-canny
├── sd-controlnet-depth
├── sd-controlnet-openpose
└── flownet.pkl
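Before running inference, it can help to confirm that the checkpoints/ directory matches the tree above. The following is a minimal sketch, not part of the repository: the helper name `missing_checkpoints` is hypothetical, and it only checks that each listed entry exists, not that its contents are complete.

```python
from pathlib import Path

# Entries taken from the file tree in this README.
EXPECTED_ENTRIES = [
    "stable-diffusion-v1-5",
    "sd-controlnet-canny",
    "sd-controlnet-depth",
    "sd-controlnet-openpose",
    "flownet.pkl",
]


def missing_checkpoints(root="checkpoints"):
    """Return the expected entries that do not exist under `root` (hypothetical helper)."""
    root = Path(root)
    return [name for name in EXPECTED_ENTRIES if not (root / name).exists()]


if __name__ == "__main__":
    missing = missing_checkpoints()
    if missing:
        print("Missing from checkpoints/:", ", ".join(missing))
    else:
        print("All expected checkpoint entries are present.")
```

An empty list means every entry from the tree was found.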

2. Requirements

conda create -n controlvideo python=3.10

conda activate controlvideo

pip install -r requirements.txt

xformers is recommended to save memory and running time.
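Because xformers is an optional, recommended package, a quick check of whether it is importable in the active environment can be useful. This is a small sketch using only the standard library; the function name `xformers_available` is hypothetical, and the check says nothing about how ControlVideo itself enables xformers.

```python
import importlib.util


def xformers_available():
    """Return True if the optional `xformers` package can be found (hypothetical helper)."""
    return importlib.util.find_spec("xformers") is not None


if __name__ == "__main__":
    if xformers_available():
        print("xformers found.")
    else:
        print("xformers not found; installing it is recommended to save memory and running time.")
```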

Inference

To perform text-to-video generation, run the command in inference.sh:

python inference.py \
    --prompt "A striking mallard floats effortlessly on the sparkling pond." \
    --condition "depth" \
    --video_path "data/mallard-water.mp4" \
    --output_path "outputs/" \
    --video_length 15 \
    --smoother_steps 19 20 \
    --width 512 \
    --height 512 \
    # --is_long_video

Here --video_length is the length of the synthesized video, --condition is the type of structure sequence, --smoother_steps determines at which timesteps to perform smoothing, and --is_long_video enables efficient long-video synthesis.
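To launch several runs from a script, the same command can be assembled in Python. The sketch below only uses the flags shown in this README; the helper name `build_inference_command` is hypothetical, the defaults mirror the example values, and treating --is_long_video as a value-less switch is an assumption based on how it appears in the command.

```python
import shlex
import sys


def build_inference_command(prompt, condition, video_path, output_path="outputs/",
                            video_length=15, smoother_steps=(19, 20),
                            width=512, height=512, is_long_video=False):
    """Assemble the argument list for inference.py (hypothetical helper)."""
    cmd = [
        sys.executable, "inference.py",
        "--prompt", prompt,
        "--condition", condition,
        "--video_path", video_path,
        "--output_path", output_path,
        "--video_length", str(video_length),
        "--smoother_steps", *[str(step) for step in smoother_steps],
        "--width", str(width),
        "--height", str(height),
    ]
    if is_long_video:
        # Assumption: the flag is a boolean switch that takes no value.
        cmd.append("--is_long_video")
    return cmd


if __name__ == "__main__":
    cmd = build_inference_command(
        prompt="A striking mallard floats effortlessly on the sparkling pond.",
        condition="depth",
        video_path="data/mallard-water.mp4",
    )
    # Print the command for inspection; pass `cmd` to subprocess.run(cmd, check=True)
    # from the repository root to actually launch it.
    print(shlex.join(cmd))
```

Building the command as a list avoids shell quoting problems with prompts that contain spaces or punctuation.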

Visualizations

Panels: ControlVideo on depth maps, ControlVideo on canny edges, ControlVideo on human poses.

Example prompts:

- "James bond moonwalk on the beach, animation style."

- "Goku in a mountain range, surreal style."

- "Hulk is jumping on the street, cartoon style."

- "A robot dances on a road, animation style."

Long video generation

Example prompts:

- "A steamship on the ocean, at sunset, sketch style."

- "Hulk is dancing on the beach, cartoon style."

Citation

If you make use of our work, please cite our paper.

@article{zhang2023controlvideo,
  title={ControlVideo: Training-free Controllable Text-to-Video Generation},
  author={Zhang, Yabo and Wei, Yuxiang and Jiang, Dongsheng and Zhang, Xiaopeng and Zuo, Wangmeng and Tian, Qi},
  journal={arXiv preprint arXiv:2305.13077},
  year={2023}
}

Acknowledgement

This repository borrows heavily from Diffusers, ControlNet, Tune-A-Video, and RIFE.

There are also many other interesting works on video generation, including Tune-A-Video, Text2Video-Zero, Follow-Your-Pose, and Control-A-Video.