Warning

This sample is under active development to showcase new features and evolve with the Azure AI Studio (preview) platform. Keep in mind that the latest build may not be rigorously tested for all environments (local development, GitHub Codespaces, Skillable VM).

Instead, refer to the table below, identify the right commit version for your context, then launch it in GitHub Codespaces.

| Build Version | Description |
|---|---|
| Stable : #cc2e808 | Version tested & used in Microsoft AI Tour (works on Skillable) |
| Active : main | Version under active development (breaking changes possible) |

Table Of Contents

- Learning Objectives

- Pre-Requisites

- Setup Development Environment

- Provision Azure Resources

- Populate With Your Data

- Build Your Prompt Flow

- Evaluate Your Prompt Flow

- Deploy Using Azure AI SDK

- Deploy with GitHub Actions

If you find this sample useful, consider giving us a star on GitHub! If you have any questions or comments, consider filing an Issue on the source repo.

Learn to build a Large Language Model (LLM) application with a RAG (Retrieval Augmented Generation) architecture using Azure AI Studio and Prompt Flow. By the end of this workshop you should be able to:

- Describe what Azure AI Studio and Prompt Flow provide

- Explain the RAG Architecture for building LLM Apps

- Build, run, evaluate, and deploy a RAG-based LLM App to Azure.

- Azure Subscription - Sign up for a free account.

- Visual Studio Code - Download it for free.

- GitHub Account - Sign up for a free account.

- Access to Azure OpenAI Service - Learn about getting access.

- Ability to provision Azure AI Search (Paid) - Required for Semantic Ranker

The repository is instrumented with a devcontainer.json configuration that can provide you with a pre-built environment that can be launched locally, or in the cloud. You can also elect to do a manual environment setup locally, if desired. Here are the three options in increasing order of complexity and effort on your part. Pick one!

- Pre-built environment, in cloud with GitHub Codespaces

- Pre-built environment, on device with Docker Desktop

- Manual setup environment, on device with Anaconda or venv

The first approach is recommended for minimal user effort in startup and maintenance. The third approach will require you to manually update or maintain your local environment, to reflect any future updates to the repo.

To set up the development environment, you can use GitHub Codespaces, a local Python environment (using Anaconda or venv), or a VS Code Dev Container environment (using Docker).

This is the recommended option.

- Fork the repo into your personal profile.

- In your fork, click the green `Code` button on the repository

- Select the `Codespaces` tab and click `Create codespace...`

This should open a new browser tab with a Codespaces container setup process running. On completion, this will launch a Visual Studio Code editor in the browser, with all relevant dependencies already installed in the running development container beneath. Congratulations! Your cloud dev environment is ready!

This option uses the same devcontainer.json configuration, but launches the development container in your local device using Docker Desktop. To use this approach, you need to have the following tools pre-installed in your local device:

- Visual Studio Code (with Dev Containers Extension)

- Docker Desktop (community or free version is fine)

Make sure your Docker Desktop daemon is running on your local device. Then,

- Fork this repo to your personal profile

- Clone that fork to your local device

- Open the cloned repo using Visual Studio Code

If your Dev Containers extension is installed correctly, you will be prompted to "re-open the project in a container" - just confirm to launch the container locally. Alternatively, you may need to trigger this step manually. See the Dev Containers Extension for more information.

Once your project launches in the local Docker Desktop container, you should see the Visual Studio Code editor reflect that connection in the status bar (blue icon, bottom left). Congratulations! Your local dev environment is ready!

- Clone the repo

      git clone https://github.com/azure/contoso-chat

- Open the repo in VS Code

      cd contoso-chat
      code .

- Install the Prompt Flow Extension in VS Code

  - Open the VS Code Extensions tab
  - Search for "Prompt Flow"
  - Install the extension

- Install the Azure CLI for your device OS

- Create a new local Python environment using either anaconda or venv for a managed environment.

  - Option 1: Using anaconda

        conda create -n contoso-chat python=3.11
        conda activate contoso-chat
        pip install -r requirements.txt

  - Option 2: Using venv

        python3 -m venv .venv
        source .venv/bin/activate
        pip install -r requirements.txt

We set up our development environment in the previous step. In this step, we'll provision the Azure resources for our project, ready to use for developing our LLM Application.

Start by connecting your Visual Studio Code environment to your Azure account:

- Open the terminal in VS Code and use command `az login`.

- Complete the authentication flow.

If you are running within a dev container, use these instructions to login instead:

1. Open the terminal in VS Code and use command `az login --use-device-code`

2. The console message will give you an alphanumeric code

3. Navigate to https://microsoft.com/devicelogin in a new tab

4. Enter the code from step 2 and complete the flow.

In either case, verify that the console shows a message indicating a successful authentication. Congratulations! Your VS Code session is now connected to your Azure subscription!

The project requires a number of Azure resources to be set up, in a specified order. To simplify this, an auto-provisioning script has been provided. (NOTE: It will use the current active subscription to create the resource. If you have multiple subscriptions, use az account set --subscription "<SUBSCRIPTION-NAME>" first to set the desired active subscription.)

Run the provisioning script as follows:

    ./provision.sh

The script should set up a dedicated resource group with the following resources:

- Azure AI services resource

- Azure Machine Learning workspace (Azure AI Project) resource

- Search service (Azure AI Search) resource

- Azure Cosmos DB account resource

The script will set up an Azure AI Studio project with the following model deployments created by default, in a relevant region that supports them. Your Azure subscription must be enabled for Azure OpenAI access.

- gpt-3.5-turbo

- text-embedding-ada-002

- gpt-4

The Azure AI Search resource will have Semantic Ranker enabled for this project, which requires the use of a paid tier of that service. It may also be created in a different region, based on availability of that feature.

The script should automatically create a config.json in your root directory, with the relevant Azure subscription, resource group, and AI workspace properties defined. The Azure AI SDK uses these later for API interactions with the Azure AI platform.

If the config.json file is not created, simply download it from your Azure portal by visiting the Azure AI project resource created, and looking at its Overview page.
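Before moving on, it can help to confirm that config.json actually contains the three properties mentioned above. The following is a minimal sketch, not part of the repo: the key names `subscription_id`, `resource_group`, and `workspace_name` are assumptions based on the usual Azure ML workspace config format, so compare them with the file you actually have.

```python
import json
from pathlib import Path

# Assumed key names; compare them with the config.json in your own repo root.
REQUIRED_KEYS = ("subscription_id", "resource_group", "workspace_name")


def missing_config_keys(config: dict) -> list[str]:
    """Return the required keys that are absent or empty in the parsed config."""
    return [key for key in REQUIRED_KEYS if not config.get(key)]


def load_config(path: str = "config.json") -> dict:
    """Read and parse the config file written by the provisioning script."""
    return json.loads(Path(path).read_text(encoding="utf-8"))
```

Calling `missing_config_keys(load_config())` from the repo root should return an empty list when the file is complete.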

The default sample has an .env.sample file that shows the relevant environment variables that need to be configured in this project. The script should create a .env file that has these same variables but populated with the right values for your Azure resources.

If the file is not created, simply copy .env.sample to .env, then populate those values manually from the respective Azure resource pages using the Azure Portal (for Azure Cosmos DB and Azure AI Search) and Azure AI Studio (for the Azure OpenAI values).
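One way to spot values that were left blank is to compare .env against .env.sample. The sketch below is a hypothetical helper, assuming both files use plain `KEY=VALUE` lines; it does not handle multi-line values or `export` prefixes.

```python
def parse_env(text: str) -> dict[str, str]:
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop optional surrounding quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def unset_variables(sample_text: str, env_text: str) -> list[str]:
    """List variables named in .env.sample that are missing or empty in .env."""
    env = parse_env(env_text)
    return [name for name in parse_env(sample_text) if not env.get(name)]
```

Pass the contents of the two files, for example `unset_variables(open(".env.sample").read(), open(".env").read())`, and fill in any names it reports.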

You will need to have your local Prompt Flow extension configured to have the following connection objects set up:

- `contoso-cosmos` to Azure Cosmos DB endpoint
- `contoso-search` to Azure AI Search endpoint
- `aoai-connection` to Azure OpenAI endpoint

Verify if these were created by using the pf tool from the VS Code terminal as follows:

    pf connection list

If the connections are not visible, create them by running the `connections/create-connections.ipynb` notebook. Then run the above command again to verify they were created correctly.
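The same check can be scripted. This is a rough sketch rather than an official utility: `local_connection_names` assumes that `pf connection list` prints a JSON array of objects with a `name` field, which you should confirm against the output of your installed version.

```python
import json
import subprocess

# The three connection names this project expects.
REQUIRED_CONNECTIONS = ("contoso-cosmos", "contoso-search", "aoai-connection")


def missing_connections(found_names) -> list[str]:
    """Return the required connection names that are not in found_names."""
    found = set(found_names)
    return [name for name in REQUIRED_CONNECTIONS if name not in found]


def local_connection_names() -> list[str]:
    """Assumption: `pf connection list` prints a JSON array with "name" fields."""
    result = subprocess.run(
        ["pf", "connection", "list"], capture_output=True, text=True, check=True
    )
    return [item["name"] for item in json.loads(result.stdout)]
```

`missing_connections(local_connection_names())` should come back empty once all three connections exist.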

The auto-provisioning will have set up 2 of the 3 connections for you by default. First, verify this by:

- going to Azure AI Studio

- signing in with your Azure account, then clicking "Build"

- selecting the Azure AI project for this repo, from that list

- clicking "Settings" in the sidebar for the project

- clicking "View All" in the Connections panel in Settings

You should see contoso-search and aoai-connection pre-configured, else create them from the Azure AI Studio interface using the Create Connection workflow (and using the relevant values from your .env file).

You will however need to create contoso-cosmos manually from Azure ML Studio. This is a temporary measure for custom connections and may be automated in future. For now, do this:

- Visit https://ai.azure.com and sign in if necessary

- Under Recent Projects, click your Azure AI project (e.g., contoso-chat-aiproj)

- Select Settings (on sidebar), scroll down to the Connections pane, and click "View All"

- Click "+ New connection", modify the Service field, and select Custom from dropdown

- Enter "Connection Name": contoso-cosmos, "Access": Project.

- Click "+ Add key value pairs" four times. Fill in the following details found in the `.env` file:

  - key=key, value=.env value for COSMOS_KEY, is-secret=checked
  - key=endpoint, value=.env value for COSMOS_ENDPOINT
  - key=containerId, value=customers
  - key=databaseId, value=contoso-outdoor

- Click "Save" to finish setup.

Refresh the main Connections list screen to verify that you now have all three required connections listed.
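To avoid copy-paste mistakes, the four key-value pairs for `contoso-cosmos` can be assembled from your .env values before you type them into the form. This is only an illustrative sketch: the `is_secret` field name and the helper itself are hypothetical, and the function does not create the connection.

```python
def cosmos_connection_pairs(env: dict) -> list[dict]:
    """Build the four key-value pairs described above from parsed .env values."""
    return [
        # Only the account key is marked as a secret.
        {"key": "key", "value": env["COSMOS_KEY"], "is_secret": True},
        {"key": "endpoint", "value": env["COSMOS_ENDPOINT"], "is_secret": False},
        {"key": "containerId", "value": "customers", "is_secret": False},
        {"key": "databaseId", "value": "contoso-outdoor", "is_secret": False},
    ]
```

A missing `COSMOS_KEY` or `COSMOS_ENDPOINT` raises a `KeyError`, which is a quick signal that .env is incomplete.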

In this step we want to populate the required data for our application use case.

- Populate Search Index in Azure AI Search

  - Run the code in the `data/product_info/create-azure-search.ipynb` notebook.
  - Visit the Azure AI Search resource in the Azure Portal
  - Click on "Indexes" and verify that a new index was created

- Populate Customer Data in Azure Cosmos DB

  - Run the code in the `data/customer_info/create-cosmos-db.ipynb` notebook.
  - Visit the Azure Cosmos DB resource in the Azure Portal
  - Click on "Data Explorer" and verify that the container and database were created!

We are now ready to begin building our prompt flow! The repository comes with a number of pre-written flows that provide the starting points for this project. In the following section, we'll explore what these are and how they work.

A prompt flow is a DAG (directed acyclic graph) that is made up of nodes that are connected together to form a flow. Each node in the flow is a python function tool that can be edited and customized to fit your needs.

-

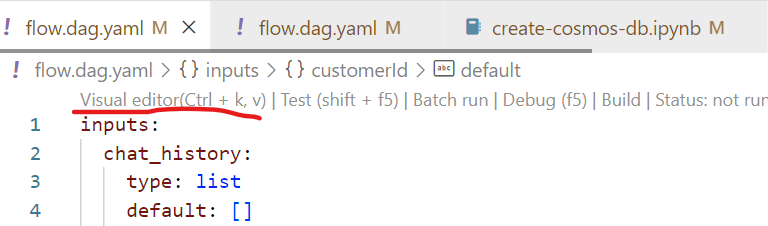

Click on the

contoso-chat/flow.dag.yamlfile in the Visual Studio Code file explorer. -

You should get a view similar to what is shown below.

-

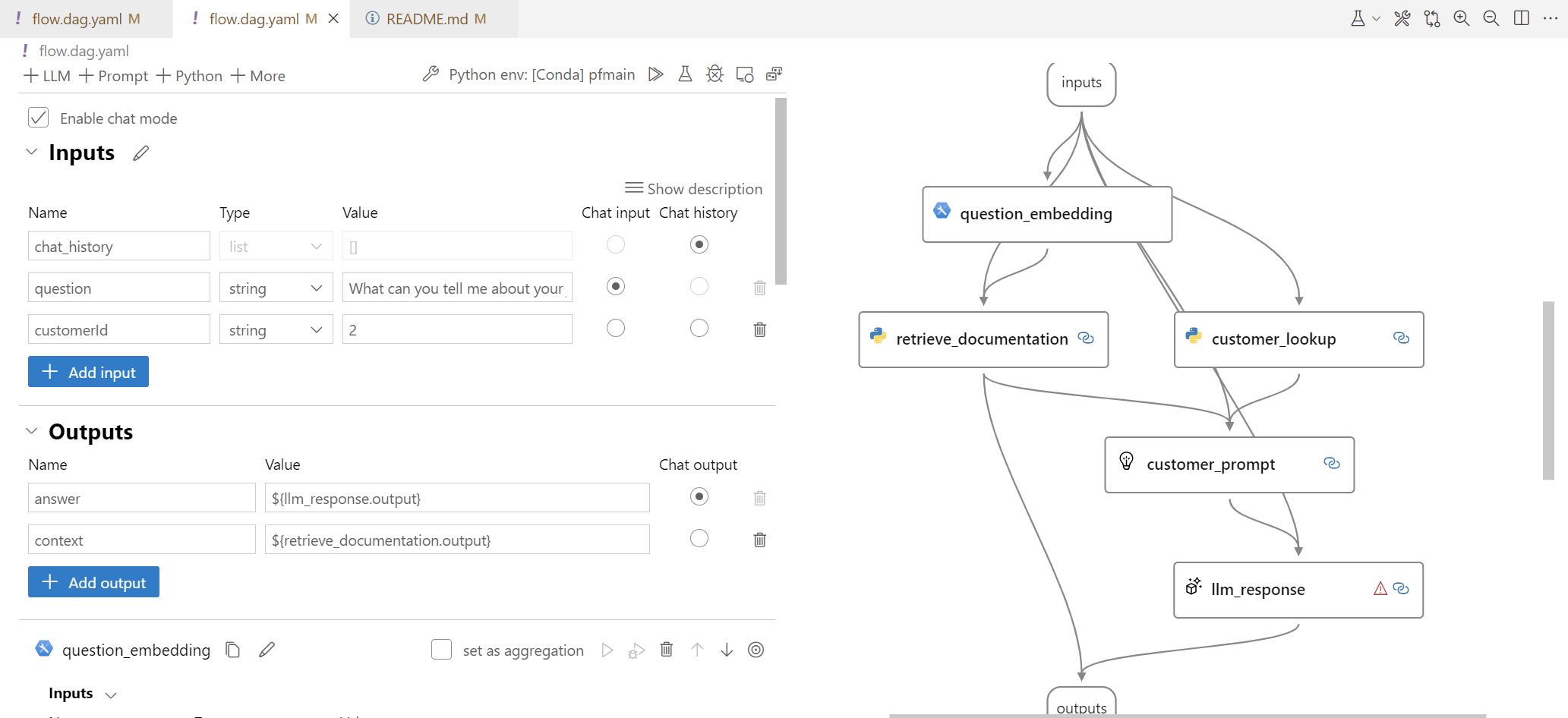

This will open up the prompt flow in the visual editor as shown: -

The prompt flow is a directed acyclic graph (DAG) of nodes, with a starting node (input), a terminating node (output), and an intermediate sub-graph of connected nodes as follows:

| Node | Description |
|---|---|
| inputs | This node is used to start the flow and is the entry point for the flow. It has the input parameters customer_id, question, and chat_history. The customer_id is used to look up the customer information in the Cosmos DB. The question is the question the customer is asking. The chat_history is the chat history of the conversation with the customer. |
| question_embedding | This node is used to embed the question text using the text-embedding-ada-002 model. The embedding is used to find the most relevant documents from the AI Search index. |
| retrieve_documents | This node is used to retrieve the most relevant documents from the AI Search index with the question vector. |
| customer_lookup | This node is used to get the customer information from the Cosmos DB. |
| customer_prompt | This node is used to generate the prompt with the information retrieved and added to the customer_prompt.jinja2 template. |
| llm_response | This node is used to generate the response to the customer using the GPT-35-Turbo model. |
| outputs | This node is used to end the flow and return the response to the customer. |
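To make the DAG idea concrete, the nodes in the table can be written as a dependency map and sorted into a valid execution order. This is a sketch under stated assumptions: the edges are inferred from the node descriptions, not read from `flow.dag.yaml`, so the real file may wire the nodes differently.

```python
from graphlib import TopologicalSorter

# Each node maps to the nodes it depends on.
# Assumption: edges are inferred from the table, not taken from flow.dag.yaml.
FLOW = {
    "inputs": set(),
    "question_embedding": {"inputs"},
    "retrieve_documents": {"question_embedding"},
    "customer_lookup": {"inputs"},
    "customer_prompt": {"inputs", "retrieve_documents", "customer_lookup"},
    "llm_response": {"customer_prompt"},
    "outputs": {"llm_response"},
}


def execution_order(flow: dict) -> list[str]:
    """Return one order in which every node runs after its dependencies."""
    return list(TopologicalSorter(flow).static_order())
```

Because the graph is acyclic, an order always exists. If an edge were added that created a cycle, `static_order()` would raise `graphlib.CycleError` instead.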

Let's run the flow to see what happens. Note that the input node is pre-configured with a question. By running the flow, we anticipate that the output node should now provide the result obtained from the LLM when presented with the customer prompt that was created from the initial question with enhanced customer data and retrieved product context.

- To run the flow, click the `Run All` (play icon) at the top. When prompted, select "Run it with standard mode".

- Watch the console output for execution progress updates

- On completion, the visual graph nodes should light up (green=success, red=failure).

- Click any node to open the declarative version showing details of execution

- Click the `Prompt Flow` tab in the Visual Studio Code terminal window for execution times

For more details on running the prompt flow, follow the instructions here.

Congratulations!! You ran the prompt flow and verified it works!

If you like, you can try out other possible customer inputs to see what the output of the Prompt Flow might be. (This step is optional, and you can skip it if you like.)

- As before, run the flow by clicking the `Run All` (play icon) at the top. This time when prompted, select "Run it with interactive mode (text only)."

- Watch the console output, and when the "User: " prompt appears, enter a question of your choice. The "Bot" response (from the output node) will then appear.

Here are some questions you can try:

- What have I purchased before?

- What is a good sleeping bag for summer use?

- How do you clean the CozyNights Sleeping Bag?
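If you would rather try all three questions in one go, they can be written out as JSON Lines, one flow input per line. The sketch below only builds the text. The `customer_id` value is a hypothetical placeholder, and representing `chat_history` as an empty list is an assumption about the flow's input type.

```python
import json

QUESTIONS = [
    "What have I purchased before?",
    "What is a good sleeping bag for summer use?",
    "How do you clean the CozyNights Sleeping Bag?",
]


def to_jsonl(questions, customer_id: str) -> str:
    """Serialize one flow input per line, using the three input names from the flow."""
    rows = (
        {"customer_id": customer_id, "question": q, "chat_history": []}
        for q in questions
    )
    return "\n".join(json.dumps(row) for row in rows)
```

Writing `to_jsonl(QUESTIONS, "1")` to a file gives you a small data set you could feed to a batch run of the flow.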

Now, we need to understand how well our prompt flow performs using defined metrics like groundedness, coherence etc. To evaluate the prompt flow, we need to be able to compare it to what we see as "good results" in order to understand how well it aligns with our expectations.

We may be able to evaluate the flow manually (e.g., using Azure AI Studio) but for now, we'll evaluate this by running the prompt flow using gpt-4 and comparing our performance to the results obtained there. To do this, follow the instructions and steps in the notebook evaluate-chat-prompt-flow.ipynb under the eval folder.
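Once per-question scores are available, a simple average per metric gives a first summary of flow quality. This is a sketch only: it assumes each row is a dict of numeric scores keyed by metric name, which may not match the notebook's actual output format.

```python
from statistics import mean


def summarize(rows: list[dict], metrics=("groundedness", "coherence")) -> dict:
    """Average each metric over the rows that have a score for it."""
    summary = {}
    for metric in metrics:
        # Skip rows where the metric is missing or was not scored.
        scores = [row[metric] for row in rows if row.get(metric) is not None]
        summary[metric] = mean(scores) if scores else None
    return summary
```

A metric with no scores at all is reported as `None` rather than zero, so a missing evaluation is not mistaken for a bad one.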

At this point, we've built, run, and evaluated the prompt flow locally in our Visual Studio Code environment. We are now ready to deploy the prompt flow to a hosted endpoint on Azure, allowing others to use that endpoint to send user questions and receive relevant responses.

This process consists of the following steps:

- We push the prompt flow to Azure (effectively uploading flow assets to Azure AI Studio)

- We activate an automatic runtime and run the uploaded flow once, to verify it works.

- We deploy the flow, triggering a series of actions that results in a hosted endpoint.

- We can now use built-in tests on Azure AI Studio to validate the endpoint works as desired.

Just follow the instructions and steps in the notebook push_and_deploy_pf.ipynb under the deployment folder. Once this is done, the deployment endpoint and key can be used in any third-party application to integrate with the deployed flow for real user experiences.
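As an illustration of what a third-party integration might look like, the sketch below builds an HTTP request for the deployed endpoint using only the standard library. The endpoint URL and key are placeholders you would copy from your deployment. The payload field names follow the flow inputs described earlier, and the bearer-token header is an assumption about how the endpoint authenticates.

```python
import json
import urllib.request


def build_request(endpoint_url, api_key, customer_id, question, chat_history=None):
    """Build (but do not send) a POST request carrying the three flow inputs."""
    payload = {
        "customer_id": customer_id,
        "question": question,
        "chat_history": chat_history or [],
    }
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: the endpoint accepts its key as a bearer token.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
```

To send it, pass the result to `urllib.request.urlopen(...)` and decode the JSON response body.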

- Log in to Azure Shell

- Follow the instructions to create a service principal here

- Follow the instructions in steps 1 - 8 here to create and add the user-assigned managed identity to the subscription and workspace.

- Assign `Data Science Role` and the `Azure Machine Learning Workspace Connection Secrets Reader` to the service principal. Complete this step in the portal under IAM.

- Set up authentication with GitHub here

      {
      "clientId": <GUID>,
      "clientSecret": <GUID>,
      "subscriptionId": <GUID>,
      "tenantId": <GUID>
      }

- Add `SUBSCRIPTION` (this is the subscription), `GROUP` (this is the resource group name), `WORKSPACE` (this is the project name), and `KEY_VAULT_NAME` to GitHub.

- Follow the instructions to create a custom env with the packages needed here

  - Select the `upload existing docker` option
  - Upload from the folder `runtime\docker`

- Update the deployment.yml image to the newly created environment. You can find the name under `Azure container registry` in the environment details page.
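The credentials JSON shown in the authentication step uses `<GUID>` placeholders. Once they are replaced, each value has to be a quoted string for the document to parse. A small, hypothetical check such as the following can catch a missing field or a malformed paste before you store the secret in GitHub.

```python
import json

# The four fields shown in the credentials JSON above.
REQUIRED_FIELDS = ("clientId", "clientSecret", "subscriptionId", "tenantId")


def missing_credential_fields(raw_json: str) -> list[str]:
    """Parse the credentials text and return any required field that is absent or empty."""
    data = json.loads(raw_json)  # raises json.JSONDecodeError on malformed input
    return [field for field in REQUIRED_FIELDS if not data.get(field)]
```

An empty list means all four fields are present. A `JSONDecodeError` usually points to an unquoted value or a stray comma.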

This project welcomes contributions and suggestions. Most contributions require you to agree to a Contributor License Agreement (CLA) declaring that you have the right to, and actually do, grant us the rights to use your contribution. For details, visit https://cla.opensource.microsoft.com.

When you submit a pull request, a CLA bot will automatically determine whether you need to provide a CLA and decorate the PR appropriately (e.g., status check, comment). Simply follow the instructions provided by the bot. You will only need to do this once across all repos using our CLA.

This project has adopted the Microsoft Open Source Code of Conduct. For more information see the Code of Conduct FAQ or contact opencode@microsoft.com with any additional questions or comments.

This project may contain trademarks or logos for projects, products, or services. Authorized use of Microsoft trademarks or logos is subject to and must follow Microsoft's Trademark & Brand Guidelines. Use of Microsoft trademarks or logos in modified versions of this project must not cause confusion or imply Microsoft sponsorship. Any use of third-party trademarks or logos is subject to those third parties' policies.