Terraform scripts for deploying log export to Splunk per Google Cloud reference guide:

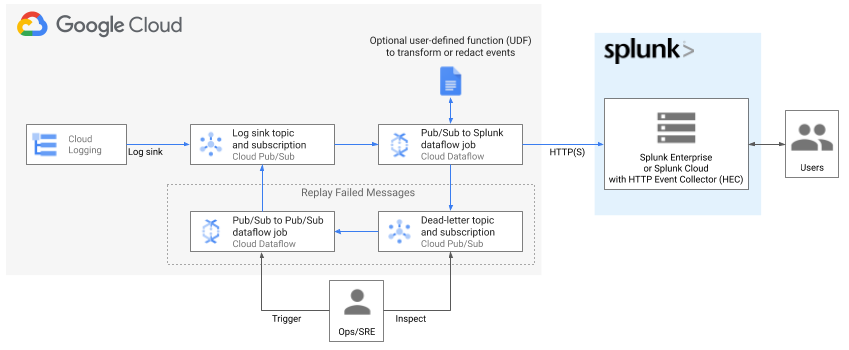

Deploying production-ready log exports to Splunk using Dataflow

.

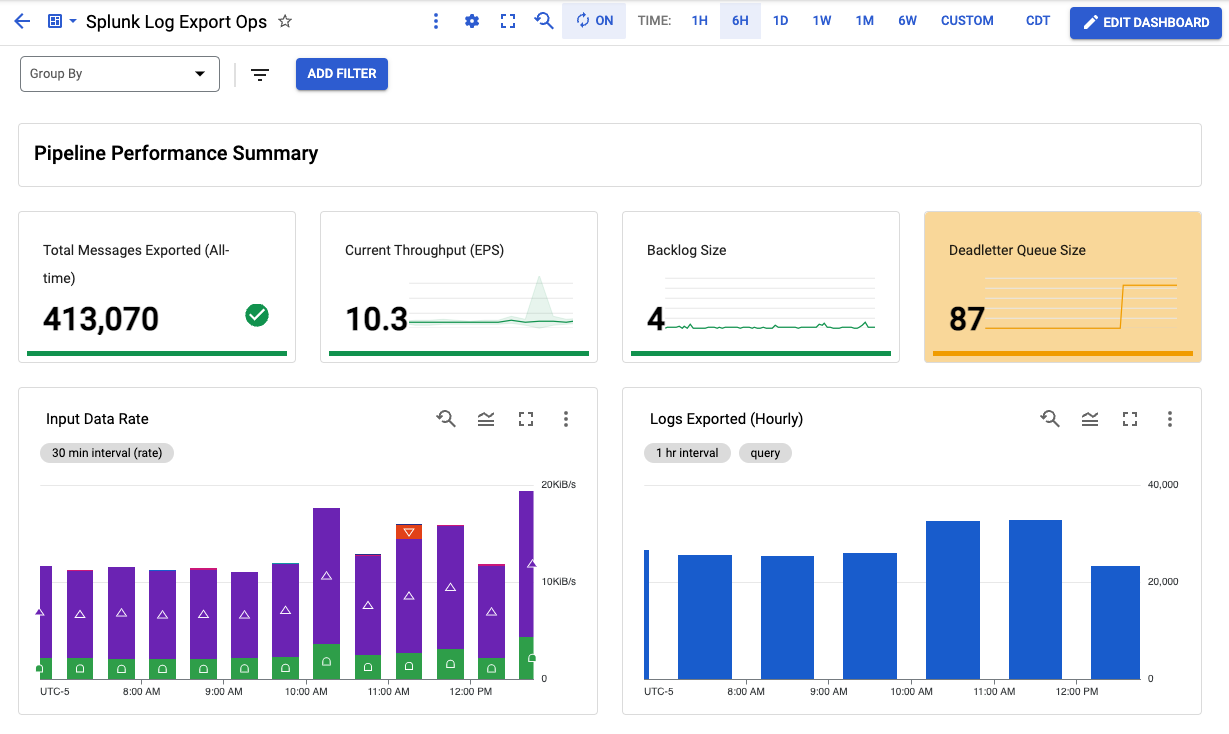

Resources created include an optional Cloud Monitoring custom dashboard to monitor your log export operations. For more details on custom metrics in the Splunk Dataflow template, see New observability features for your Splunk Dataflow streaming pipelines.

These deployment templates are provided as is, without warranty. See Copyright & License below.

| Name | Description | Type |
|---|---|---|
| dataflow_job_name | Dataflow job name. No spaces | string |
| log_filter | Log filter to use when exporting logs | string |
| network | Network to deploy into | string |
| project | Project ID to deploy resources in | string |
| region | Region to deploy regional resources into. This must match the subnet's region if deploying into an existing network (e.g. Shared VPC). See subnet parameter below | string |
| splunk_hec_url | Splunk HEC URL to write data to. Example: https://[MY_SPLUNK_IP_OR_FQDN]:8088 | string |
| create_network | Boolean value specifying if a new network needs to be created. | bool |
| dataflow_job_batch_count | (Optional) Batch count of messages in single request to Splunk (default 50) | number |
| dataflow_job_disable_certificate_validation | (Optional) Boolean to disable SSL certificate validation (default false) | bool |
| dataflow_job_machine_count | (Optional) Dataflow job max worker count (default 2) | number |
| dataflow_job_machine_type | (Optional) Dataflow job worker machine type (default 'n1-standard-4') | string |
| dataflow_job_parallelism | (Optional) Maximum parallel requests to Splunk (default 8) | number |
| dataflow_job_udf_function_name | (Optional) Name of JavaScript function to be called (default No UDF used) | string |
| dataflow_job_udf_gcs_path | (Optional) GCS path for JavaScript file (default No UDF used) | string |
| dataflow_template_version | (Optional) Dataflow template release version (default 'latest'). Override this for version pinning e.g. '2021-08-02-00_RC00'. Must specify version only since template GCS path will be deduced automatically: 'gs://dataflow-templates/version/Cloud_PubSub_to_Splunk' | string |
| dataflow_worker_service_account | (Optional) Name of Dataflow worker service account to be created and used to execute job operations. In the default case of creating a new service account (use_externally_managed_dataflow_sa=false), this parameter must be 6-30 characters long, and match the regular expression a-z. If the parameter is empty, worker service account defaults to project's Compute Engine default service account. If using external service account (use_externally_managed_dataflow_sa=true), this parameter must be the full email address of the external service account. | string |
| deploy_replay_job | (Optional) Determines if replay pipeline should be deployed or not (default: false) | bool |
| primary_subnet_cidr | The CIDR Range of the primary subnet | string |
| scoping_project | Cloud Monitoring scoping project ID to create dashboard under. This assumes a pre-existing scoping project whose metrics scope contains the project where the Dataflow job is to be deployed. See Cloud Monitoring settings for more details on scoping project. If parameter is empty, scoping project defaults to value of project parameter above. | string |
| splunk_hec_token | (Optional) Splunk HEC token. Must be defined if splunk_hec_token_source is of type PLAINTEXT or KMS. | string |
| splunk_hec_token_kms_encryption_key | (Optional) The Cloud KMS key to decrypt the HEC token string. Required if splunk_hec_token_source is of type KMS (default: '') | string |
| splunk_hec_token_secret_id | (Optional) Id of the Secret for Splunk HEC token. Required if splunk_hec_token_source is of type SECRET_MANAGER (default: '') | string |
| splunk_hec_token_source | (Optional) Define in which type HEC token is provided. Possible options: [PLAINTEXT, KMS, SECRET_MANAGER]. Default: PLAINTEXT | string |
| subnet | Subnet to deploy into. This is required when deploying into existing network (create_network=false) (e.g. Shared VPC) | string |
| use_externally_managed_dataflow_sa | (Optional) Determines if the worker service account provided by dataflow_worker_service_account variable should be created by this module (default) or is managed outside of the module. In the latter case, user is expected to apply and manage the service account IAM permissions over external resources (e.g. Cloud KMS key or Secret version) before running this module. | bool |
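
As an illustration of how the input parameters above fit together, here is a minimal sketch of a `terraform.tfvars` file for deploying into an existing network. Every value is a hypothetical placeholder, and the exact form expected for `network` and `subnet` (short name or full resource path) is an assumption to verify against your environment.

```hcl
# Inputs without a documented default (all values are hypothetical placeholders).
project           = "my-project-id"
region            = "us-central1"
network           = "my-network"
dataflow_job_name = "splunk-log-export" # no spaces allowed
log_filter        = "severity>=WARNING" # any Cloud Logging filter expression
splunk_hec_url    = "https://splunk.example.com:8088"

# Deploying into an existing network, so a subnet in the same region is required.
create_network = false
subnet         = "my-subnet"

# HEC token passed as plain text (the documented default token source).
splunk_hec_token_source = "PLAINTEXT"
splunk_hec_token        = "REPLACE_WITH_HEC_TOKEN"

# Optional tuning. These restate the documented defaults, except for the
# template version, which is pinned instead of using 'latest'.
dataflow_job_machine_type  = "n1-standard-4"
dataflow_job_machine_count = 2
dataflow_job_batch_count   = 50
dataflow_job_parallelism   = 8
dataflow_template_version  = "2022-04-25-00_RC00"
```

For anything beyond a quick test, switching `splunk_hec_token_source` to `KMS` or `SECRET_MANAGER` keeps the token out of the vars file.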

| Name | Description |
|---|---|
| dataflow_input_topic | n/a |
| dataflow_job_id | n/a |
| dataflow_log_export_dashboard | n/a |
| dataflow_output_deadletter_subscription | n/a |

Deployment templates include an optional Cloud Monitoring custom dashboard to monitor your log export operations.

At a minimum, you must have the following roles before you deploy the resources in this Terraform:

- Logs Configuration Writer (`roles/logging.configWriter`) at the project and/or organization level
- Compute Network Admin (`roles/compute.networkAdmin`) at the project level
- Compute Security Admin (`roles/compute.securityAdmin`) at the project level
- Dataflow Admin (`roles/dataflow.admin`) at the project level
- Pub/Sub Admin (`roles/pubsub.admin`) at the project level
- Storage Admin (`roles/storage.admin`) at the project level

To ensure proper pipeline operation, Terraform creates the necessary IAM bindings at the resource level as part of this deployment to grant access between newly created resources. For example, the log sink writer is granted the Pub/Sub Publisher role over the input topic, which collects all the logs, and the Dataflow worker service account is granted both the Pub/Sub Subscriber role over the input subscription and the Pub/Sub Publisher role over the deadletter topic.

The Dataflow worker service account is the identity used by the Dataflow worker VMs. This module offers three options in terms of which worker service account to use and how to manage its IAM permissions:

1. Module uses your project's Compute Engine default service account as the Dataflow worker service account, and manages any required IAM permissions. The module grants that service account necessary IAM roles such as `roles/dataflow.worker` and IAM permissions over Google Cloud resources required by the job such as Pub/Sub, Cloud Storage, and secret or KMS key if applicable. This is the default behavior.

2. Module creates a dedicated service account to be used as the Dataflow worker service account, and manages any required IAM permissions. The module grants that service account necessary IAM roles such as `roles/dataflow.worker` and IAM permissions over Google Cloud resources required by the job such as Pub/Sub, Cloud Storage, and secret or KMS key if applicable. To use this option, set `dataflow_worker_service_account` to the name of this new service account.

3. Module uses a service account managed outside of the module. The module grants that service account necessary IAM permissions over Google Cloud resources created by the module such as Pub/Sub and Cloud Storage. You must grant this service account the required IAM roles (`roles/dataflow.worker`) and IAM permissions over external resources such as any provided secret or KMS key (more below), before running this module. To use this option, set `use_externally_managed_dataflow_sa` to `true` and set `dataflow_worker_service_account` to the email address of this external service account.
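
As a rough sketch of how the three options map onto the input variables, the `terraform.tfvars` fragment below shows the settings for each. The service account name and email are hypothetical placeholders.

```hcl
# Option 1 (default): leave both variables unset so the module falls back to
# the project's Compute Engine default service account.

# Option 2: the module creates and manages a dedicated service account.
# "splunk-dataflow-worker" is a hypothetical name (must be 6-30 characters).
dataflow_worker_service_account = "splunk-dataflow-worker"

# Option 3: use a service account managed outside the module. Uncomment these
# two lines and remove the option 2 line above. The email is hypothetical.
# use_externally_managed_dataflow_sa = true
# dataflow_worker_service_account    = "dataflow-worker@my-project-id.iam.gserviceaccount.com"
```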

For production workloads, as a security best practice, it's recommended to use option 2 or 3, both of which rely on a user-managed worker service account, instead of the Compute Engine default service account. This ensures a minimally scoped service account dedicated to this pipeline.

For option 3, make sure to grant:

- The provided Dataflow service account the following roles:
  - `roles/dataflow.worker`
  - `roles/secretmanager.secretAccessor` on the secret, if the `SECRET_MANAGER` HEC token source is used
  - `roles/cloudkms.cryptoKeyDecrypter` on the KMS key, if the `KMS` HEC token source is used
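
One possible way to apply the option 3 grants is with the Google provider's IAM member resources, kept in a separate Terraform configuration that is applied before this module. This is only a sketch: the project, service account email, secret name, and key path are all hypothetical, and you would keep only the secret or KMS binding that matches your HEC token source.

```hcl
# Hypothetical values; replace with your own project and service account.
locals {
  project       = "my-project-id"
  worker_member = "serviceAccount:dataflow-worker@my-project-id.iam.gserviceaccount.com"
}

# Dataflow worker role for the externally managed service account.
resource "google_project_iam_member" "dataflow_worker" {
  project = local.project
  role    = "roles/dataflow.worker"
  member  = local.worker_member
}

# Only needed when splunk_hec_token_source is SECRET_MANAGER.
resource "google_secret_manager_secret_iam_member" "hec_token_accessor" {
  project   = local.project
  secret_id = "splunk-hec-token" # hypothetical secret name
  role      = "roles/secretmanager.secretAccessor"
  member    = local.worker_member
}

# Only needed when splunk_hec_token_source is KMS.
resource "google_kms_crypto_key_iam_member" "hec_token_decrypter" {
  # Hypothetical key path.
  crypto_key_id = "projects/my-project-id/locations/us-central1/keyRings/my-keyring/cryptoKeys/my-key"
  role          = "roles/cloudkms.cryptoKeyDecrypter"
  member        = local.worker_member
}
```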

For option 1 & 3, make sure to grant:

- Your user account or service account used to run Terraform the following role:
  - `roles/iam.serviceAccountUser` on the Dataflow service account in order to impersonate the service account. See following note for more details.

Note about Dataflow worker service account impersonation: To run this Terraform module, you must have permission to impersonate the Dataflow worker service account in order to attach that service account to Dataflow worker VMs. In the case of the default Dataflow worker service account (Option 1), ensure you have the `iam.serviceAccounts.actAs` permission over the Compute Engine default service account in your project. For security purposes, this Terraform does not modify access to your existing Compute Engine default service account due to the risk of granting broad permissions. On the other hand, if you choose to create and use a user-managed worker service account (Option 2) by setting `dataflow_worker_service_account` (and keeping `use_externally_managed_dataflow_sa = false`), this Terraform will add the necessary impersonation permission over the new service account.
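
For options 1 and 3, the impersonation grant described above could be expressed as a service-account-level IAM binding, managed outside this module. The sketch below assumes a hypothetical worker service account and a hypothetical user identity that runs Terraform; `roles/iam.serviceAccountUser` is the role that carries the `iam.serviceAccounts.actAs` permission.

```hcl
resource "google_service_account_iam_member" "terraform_can_act_as_worker" {
  # Full resource name of the Dataflow worker service account (hypothetical).
  service_account_id = "projects/my-project-id/serviceAccounts/dataflow-worker@my-project-id.iam.gserviceaccount.com"
  role               = "roles/iam.serviceAccountUser"

  # Identity used to run Terraform (hypothetical).
  member = "user:terraform-operator@example.com"
}
```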

See Security and permissions for pipelines to learn more about Dataflow service accounts and their permissions.

- Terraform 0.13+

- Splunk Dataflow template 2022-04-25-00_RC00 or later

Before deploying the Terraform in a Google Cloud Platform Project, the following APIs must be enabled:

- Compute Engine API

- Dataflow API

For information on enabling Google Cloud Platform APIs, please see Getting Started: Enabling APIs.
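
If you prefer to manage the API prerequisites with Terraform as well, a sketch along these lines could enable both APIs from a separate configuration. The project ID is a hypothetical placeholder, and setting `disable_on_destroy = false` is a design choice here so that a later destroy does not switch the APIs off for other workloads.

```hcl
resource "google_project_service" "required" {
  # Compute Engine API and Dataflow API, as listed above.
  for_each = toset([
    "compute.googleapis.com",
    "dataflow.googleapis.com",
  ])

  project            = "my-project-id" # hypothetical
  service            = each.value
  disable_on_destroy = false
}
```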

1. Copy the placeholder vars file `variables.yaml` into a new `terraform.tfvars` to hold your own settings.
2. Update the placeholder values in `terraform.tfvars` to correspond to your GCP environment and desired settings. See the list of input parameters above.
3. Initialize the Terraform working directory and download plugins by running:

       $ terraform init

Authenticate with GCP by running the command below. Note: You can skip this authentication step if this module is inheriting the Terraform Google provider (e.g. from a parent module) with pre-configured credentials.

    $ gcloud auth application-default login --project <ENTER_YOUR_PROJECT_ID>

This assumes you are running Terraform on your workstation with your own identity. For other methods to authenticate such as using a Terraform-specific service account, see Google Provider authentication docs.

Deploy the log export pipeline:

    $ terraform plan
    $ terraform apply

To view the log export monitoring dashboard:

1. Retrieve the dashboard ID from the Terraform output:

       $ terraform output dataflow_log_export_dashboard

   The output is of the form "projects/{project_id_or_number}/dashboards/{dashboard_id}". Take note of the dashboard_id value.
2. Visit the newly created Monitoring Dashboard in Cloud Console by replacing dashboard_id in the following URL: https://console.cloud.google.com/monitoring/dashboards/builder/{dashboard_id}
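
The manual copy step for the dashboard ID can also be sketched in Terraform itself, by splitting the output value on `/` and building the console URL. The `dashboard_name` value below is a hypothetical sample; in practice it would come from the `dataflow_log_export_dashboard` output.

```hcl
locals {
  # Hypothetical sample of the dataflow_log_export_dashboard output value.
  dashboard_name = "projects/my-project-id/dashboards/0123456789"

  # The value has the form projects/{project}/dashboards/{dashboard_id},
  # so the dashboard ID is the fourth "/"-separated segment (index 3).
  dashboard_id = split("/", local.dashboard_name)[3]
}

output "dashboard_url" {
  value = "https://console.cloud.google.com/monitoring/dashboards/builder/${local.dashboard_id}"
}
```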

The replay pipeline is not deployed by default; instead it is only used to move failed messages from the Pub/Sub deadletter subscription back to the input topic, in order to be redelivered by the main log export pipeline (as depicted in the diagram above). Refer to Handling delivery failures for more detail.

Caution: Make sure to deploy the replay pipeline only after the root cause of the delivery failure has been fixed. Otherwise, the replay pipeline will cause an infinite loop where failed messages are sent back for re-delivery, only to fail again, wasting resources. For that same reason, make sure to tear down the replay pipeline once the failed messages from the deadletter subscription are all processed or replayed.

1. To deploy the replay pipeline, set the `deploy_replay_job` variable to `true`, then follow the sequence of `terraform plan` and `terraform apply`.
2. Once the replay pipeline is no longer needed (i.e. the number of messages in the Pub/Sub deadletter subscription is 0), set the `deploy_replay_job` variable to `false`, then follow the sequence of `terraform plan` and `terraform apply`.
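
In `terraform.tfvars`, the toggle described above amounts to a single line, sketched here:

```hcl
# Set to true only after the root cause of the delivery failure is fixed,
# then run terraform plan and terraform apply.
deploy_replay_job = true

# Once the deadletter subscription is drained, flip this back to false
# (the default) and run terraform plan and terraform apply again.
```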

To delete resources created by Terraform, run the following then confirm:

    $ terraform destroy

- Expose logging level knob
- Support KMS-encrypted HEC token
- Create replay pipeline
- Create secure network for self-contained setup if existing network is not provided
- Add Cloud Monitoring dashboard

- Roy Arsan - rarsan

- Nick Predey - npredey

- Igor Lakhtenkov - Lakhtenkov-iv

Copyright 2021 Google LLC

Terraform templates for Google Cloud Log Export to Splunk are licensed under the Apache license, v2.0. Details can be found in LICENSE file.