This is the official PyTorch implementation of FocalNets:

"Focal Modulation Networks" by Jianwei Yang, Chunyuan Li, Xiyang Dai, Lu Yuan and Jianfeng Gao.

- [11/07/2023] Researchers showed that Focal-UNet beats previous methods on several earth system analysis benchmarks. Check out their code, paper, and project!

- [06/30/2023] 💥 FocalNet-DINO checkpoints are now available on Hugging Face. The old links are deprecated.

- [04/26/2023] By combining it with the FocalNet-Huge backbone, Focal-Stable-DINO achieves 64.8 AP on COCO test-dev without any test-time augmentation! Check out our Technical Report for more details!

- [02/13/2023] FocalNet has been integrated into Keras. Check out the tutorial!

- [01/18/2023] Check out a curated paper list that introduces networks beyond attention, based on modern convolution and modulation!

- [01/01/2023] Researchers showed that Focal-UNet beats Swin-UNet on several medical image segmentation benchmarks. Check out their code and paper, and happy new year!

- [12/16/2022] 💥 We are pleased to release our FocalNet-Large-DINO checkpoint pretrained on Objects365 and finetuned on COCO, which achieves 63.5 mAP on COCO minival without TTA! Check it out!

- [11/14/2022] We created a new repo, FocalNet-DINO, to hold the code for reproducing the object detection performance with DINO. We will release the object detection code and checkpoints there. Stay tuned!

- [11/13/2022] 💥 We release our large, xlarge and huge models pretrained on ImageNet-22K, including the one we used to achieve the SoTA on COCO object detection leaderboard!

- [11/02/2022] We wrote a blog post that introduces the insights and techniques behind our FocalNets in plain language. Check it out!

- [10/31/2022] 💥 We achieved a new SoTA with 64.2 box mAP on COCO minival and ~~64.3~~ 64.4 box mAP on COCO test-dev based on the powerful OD method DINO! We used a huge model (700M parameters), beating much larger attention-based models like SwinV2-G and BEiT-3. Check out our new version and stay tuned!

- [09/20/2022] Our FocalNet has been accepted by NeurIPS 2022!

- [04/02/2022] We created a Gradio demo in a Hugging Face Space to visualize the modulation mechanism. Check it out!

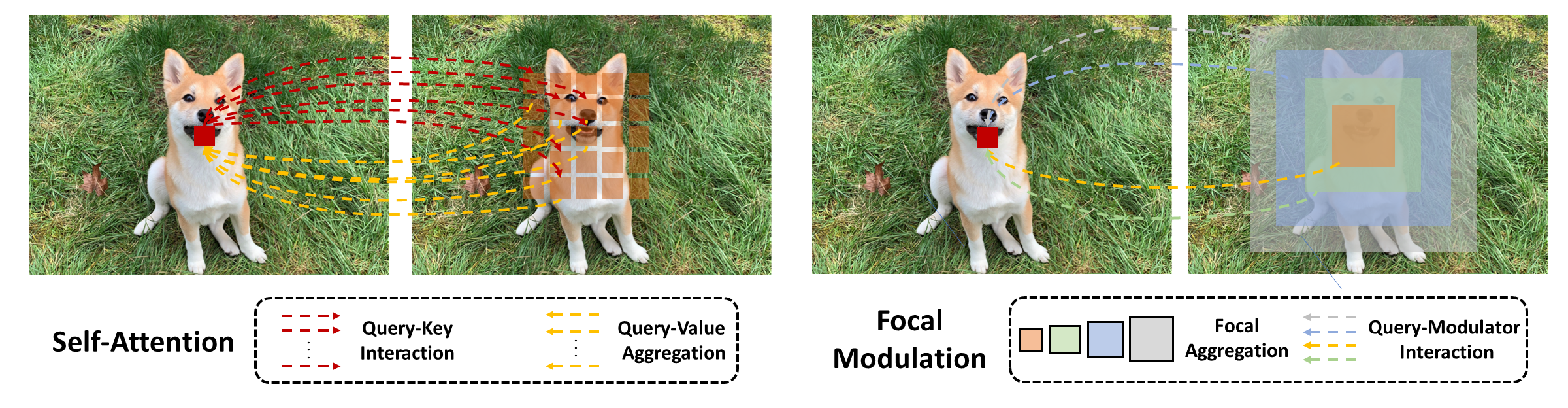

We propose FocalNets: Focal Modulation Networks, an attention-free architecture that outperforms SoTA self-attention (SA) methods across various vision benchmarks. SA is a first-interaction, last-aggregation (FILA) process, as shown above. Our Focal Modulation inverts the process by aggregating first and interacting last (FALI). This inversion brings several merits:

- Translation-Invariance: It is performed for each target token with the context centered around it.

- Explicit input-dependency: The modulator is computed by aggregating the short- and long-range context from the input and is then applied to the target token.

- Spatial- and channel-specific: It first aggregates the context spatial-wise and then channel-wise, followed by an element-wise modulation.

- Decoupled feature granularity: The query token preserves individual information at the finest level, while coarser context is extracted from its surroundings. The two are decoupled but connected through the modulation operation.

- Easy to implement: Both context aggregation and interaction can be implemented in a very simple and lightweight way. No softmax, multiple attention heads, feature map rolling or unfolding, etc. is needed.
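To make the FILA/FALI contrast concrete, here is a toy NumPy sketch on a 1-D sequence of tokens. It is not the repo's implementation: `self_attention` and `toy_focal_modulation` are hypothetical helpers, the weights are random, and the context aggregation is a fixed local average standing in for the learned depth-wise convolutions and gates of a real FocalNet.

```python
import numpy as np


def self_attention(x, wq, wk, wv):
    """FILA: interact first (query-key scores), aggregate last (weighted sum of values)."""
    q, k, v = x @ wq, x @ wk, x @ wv               # (N, C) each
    scores = q @ k.T / np.sqrt(q.shape[-1])        # interaction: every query with every key
    scores -= scores.max(axis=-1, keepdims=True)   # numerical stability for the softmax
    attn = np.exp(scores)
    attn /= attn.sum(axis=-1, keepdims=True)       # softmax over keys, (N, N)
    return attn @ v                                # aggregation, (N, C)


def toy_focal_modulation(x, wq, wh, window=3):
    """FALI (toy): aggregate context first without the query, interact last element-wise."""
    n = x.shape[0]
    pad = window // 2
    xp = np.pad(x, ((pad, pad), (0, 0)))           # zero-pad both ends of the sequence
    # Aggregation: local window average, a stand-in for learned depth-wise convs + gates.
    ctx = np.stack([xp[i:i + window].mean(axis=0) for i in range(n)])
    modulator = ctx @ wh                           # (N, C) modulator from the pooled context
    return (x @ wq) * modulator                    # interaction: element-wise with the query


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.standard_normal((8, 4))
    wq, wk, wv, wh = (0.1 * rng.standard_normal((4, 4)) for _ in range(4))
    print(self_attention(x, wq, wk, wv).shape, toy_focal_modulation(x, wq, wh).shape)
```

In the attention version, the output for a token depends on every other token through the query-key scores. In the toy modulation, context is pooled before the query is involved, and the query only enters through the final element-wise product, so a token far outside the window leaves the output unchanged.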

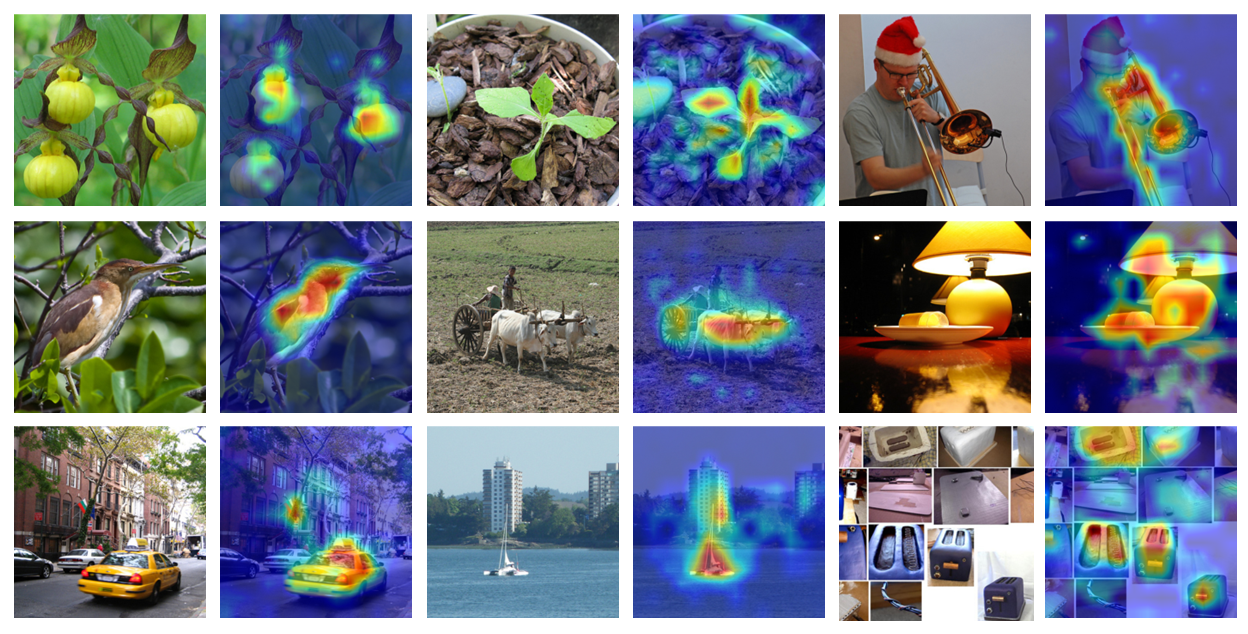

Before getting started, see how our FocalNets have learned to perceive images and where they modulate!

Finally, FocalNets are built with convolutional and linear layers, but go beyond them by proposing a new modulation mechanism that is simple, generic, effective, and efficient. We hereby recommend:

Focal-Modulation May be What We Need for Visual Modeling!

- Please follow get_started_for_image_classification to get started with image classification.

- Please follow get_started_for_object_detection to get started with object detection.

- Please follow get_started_for_semantic_segmentation to get started with semantic segmentation.

Image Classification on ImageNet-1K

- Strict comparison with multi-scale Swin and Focal Transformers:

| Model | Depth | Dim | Kernels | #Params. (M) | FLOPs (G) | Throughput (imgs/s) | Top-1 (%) | Download |
|---|---|---|---|---|---|---|---|---|
| FocalNet-T | [2,2,6,2] | 96 | [3,5] | 28.4 | 4.4 | 743 | 82.1 | ckpt/config/log |
| FocalNet-T | [2,2,6,2] | 96 | [3,5,7] | 28.6 | 4.5 | 696 | 82.3 | ckpt/config/log |
| FocalNet-S | [2,2,18,2] | 96 | [3,5] | 49.9 | 8.6 | 434 | 83.4 | ckpt/config/log |
| FocalNet-S | [2,2,18,2] | 96 | [3,5,7] | 50.3 | 8.7 | 406 | 83.5 | ckpt/config/log |
| FocalNet-B | [2,2,18,2] | 128 | [3,5] | 88.1 | 15.3 | 280 | 83.7 | ckpt/config/log |
| FocalNet-B | [2,2,18,2] | 128 | [3,5,7] | 88.7 | 15.4 | 269 | 83.9 | ckpt/config/log |
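The Kernels column lists the depth-wise kernel size used at each focal level. Because the levels are stacked, each one convolving the previous level's output, the receptive field at level ℓ grows as 1 + Σ(k_i - 1). The sketch below computes this. `focal_receptive_fields` and `approx_extent_in_pixels` are hypothetical helpers, and the per-stage strides of 4, 8, 16 and 32 are an assumption (Swin-style hierarchical stages), used only to give a rough extent in input pixels that ignores the receptive field already accumulated in earlier stages.

```python
from itertools import accumulate

# Assumption: Swin-style stages with overall strides 4, 8, 16, 32 w.r.t. the input image.
STAGE_STRIDES = (4, 8, 16, 32)


def focal_receptive_fields(kernels):
    """Receptive field (in feature-map tokens) after each stacked depth-wise conv level."""
    # r_0 = 1 (a single token); each level with kernel k widens the field by k - 1.
    return list(accumulate(kernels, lambda r, k: r + k - 1, initial=1))[1:]


def approx_extent_in_pixels(kernels, stride):
    """Rough input-pixel extent: receptive field in tokens times the stage stride."""
    return [r * stride for r in focal_receptive_fields(kernels)]


if __name__ == "__main__":
    print(focal_receptive_fields([3, 5, 7]))  # [3, 7, 13]
    for stride in STAGE_STRIDES:
        print(stride, approx_extent_in_pixels([3, 5, 7], stride))
```

For kernels [3,5,7] this gives receptive fields of 3, 7 and 13 tokens; the extra global-context level, obtained by average pooling, covers the whole feature map and is not counted here.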

- Strict comparison with isotropic ViT models:

| Model | Depth | Dim | Kernels | #Params. (M) | FLOPs (G) | Throughput (imgs/s) | Top-1 (%) | Download |
|---|---|---|---|---|---|---|---|---|
| FocalNet-T | 12 | 192 | [3,5,7] | 5.9 | 1.1 | 2334 | 74.1 | ckpt/config/log |
| FocalNet-S | 12 | 384 | [3,5,7] | 22.4 | 4.3 | 920 | 80.9 | ckpt/config/log |
| FocalNet-B | 12 | 768 | [3,5,7] | 87.2 | 16.9 | 300 | 82.4 | ckpt/config/log |

- ImageNet-22K pretrained models:

| Model | Depth | Dim | Kernels | #Params. (M) | Download |
|---|---|---|---|---|---|
| FocalNet-L | [2,2,18,2] | 192 | [5,7,9] | 207 | ckpt/config |
| FocalNet-L | [2,2,18,2] | 192 | [3,5,7,9] | 207 | ckpt/config |
| FocalNet-XL | [2,2,18,2] | 256 | [5,7,9] | 366 | ckpt/config |
| FocalNet-XL | [2,2,18,2] | 256 | [3,5,7,9] | 366 | ckpt/config |
| FocalNet-H | [2,2,18,2] | 352 | [3,5,7] | 687 | ckpt/config |
| FocalNet-H | [2,2,18,2] | 352 | [3,5,7,9] | 689 | ckpt/config |

NOTE: We reorder the class names in ImageNet-22K so that the first 1k logits can be used directly for evaluation on ImageNet-1K. Be aware that the 851st class (label=850) of ImageNet-1K is missing from ImageNet-22K. Please refer to this labelmap. More discussion can be found in this issue.
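Following the note above, evaluating a 22K-pretrained checkpoint on ImageNet-1K amounts to slicing its logits. The sketch below uses a hypothetical helper, `in1k_logits`, rather than code from this repo. The optional masking of label 850 is our own assumption about how to handle the missing class, since the note does not say what the reordered 22K head holds at that position.

```python
import numpy as np

NUM_IN1K_CLASSES = 1000
MISSING_IN1K_LABEL = 850  # per the note: ImageNet-1K label 850 is absent from ImageNet-22K


def in1k_logits(logits_22k, mask_missing=False):
    """Take the first 1k logits of a 22k classifier head (relies on the reordered labelmap).

    With mask_missing=True (an assumption, not something the repo prescribes), the logit at
    index 850 is set to -inf so that class can never be the top-1 prediction.
    """
    logits = np.array(logits_22k[..., :NUM_IN1K_CLASSES], dtype=np.float64)  # copy as float
    if mask_missing:
        logits[..., MISSING_IN1K_LABEL] = -np.inf
    return logits
```

Top-1 predictions are then `in1k_logits(logits).argmax(axis=-1)`, compared against the ImageNet-1K labels.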

Object Detection on COCO

| Backbone | Kernels | Lr Schd | #Params. (M) | FLOPs (G) | box mAP | mask mAP | Download |
|---|---|---|---|---|---|---|---|
| FocalNet-T | [9,11] | 1x | 48.6 | 267 | 45.9 | 41.3 | ckpt/config/log |
| FocalNet-T | [9,11] | 3x | 48.6 | 267 | 47.6 | 42.6 | ckpt/config/log |
| FocalNet-T | [9,11,13] | 1x | 48.8 | 268 | 46.1 | 41.5 | ckpt/config/log |
| FocalNet-T | [9,11,13] | 3x | 48.8 | 268 | 48.0 | 42.9 | ckpt/config/log |
| FocalNet-S | [9,11] | 1x | 70.8 | 356 | 48.0 | 42.7 | ckpt/config/log |
| FocalNet-S | [9,11] | 3x | 70.8 | 356 | 48.9 | 43.6 | ckpt/config/log |
| FocalNet-S | [9,11,13] | 1x | 72.3 | 365 | 48.3 | 43.1 | ckpt/config/log |
| FocalNet-S | [9,11,13] | 3x | 72.3 | 365 | 49.3 | 43.8 | ckpt/config/log |
| FocalNet-B | [9,11] | 1x | 109.4 | 496 | 48.8 | 43.3 | ckpt/config/log |
| FocalNet-B | [9,11] | 3x | 109.4 | 496 | 49.6 | 44.1 | ckpt/config/log |
| FocalNet-B | [9,11,13] | 1x | 111.4 | 507 | 49.0 | 43.5 | ckpt/config/log |
| FocalNet-B | [9,11,13] | 3x | 111.4 | 507 | 49.8 | 44.1 | ckpt/config/log |

- Other detection methods

| Backbone | Kernels | Method | Lr Schd | #Params. (M) | FLOPs (G) | box mAP | Download |
|---|---|---|---|---|---|---|---|
| FocalNet-T | [11,9,9,7] | Cascade Mask R-CNN | 3x | 87.1 | 751 | 51.5 | ckpt/config/log |
| FocalNet-T | [11,9,9,7] | ATSS | 3x | 37.2 | 220 | 49.6 | ckpt/config/log |
| FocalNet-T | [11,9,9,7] | Sparse R-CNN | 3x | 111.2 | 178 | 49.9 | ckpt/config/log |

Semantic Segmentation on ADE20K

- Resolution 512x512 and Iters 160k

| Backbone | Kernels | Method | #Params. (M) | FLOPs (G) | mIoU | mIoU (MS) | Download |
|---|---|---|---|---|---|---|---|
| FocalNet-T | [9,11] | UPerNet | 61 | 944 | 46.5 | 47.2 | ckpt/config/log |
| FocalNet-T | [9,11,13] | UPerNet | 61 | 949 | 46.8 | 47.8 | ckpt/config/log |
| FocalNet-S | [9,11] | UPerNet | 83 | 1035 | 49.3 | 50.1 | ckpt/config/log |
| FocalNet-S | [9,11,13] | UPerNet | 84 | 1044 | 49.1 | 50.1 | ckpt/config/log |
| FocalNet-B | [9,11] | UPerNet | 124 | 1180 | 50.2 | 51.1 | ckpt/config/log |
| FocalNet-B | [9,11,13] | UPerNet | 126 | 1192 | 50.5 | 51.4 | ckpt/config/log |

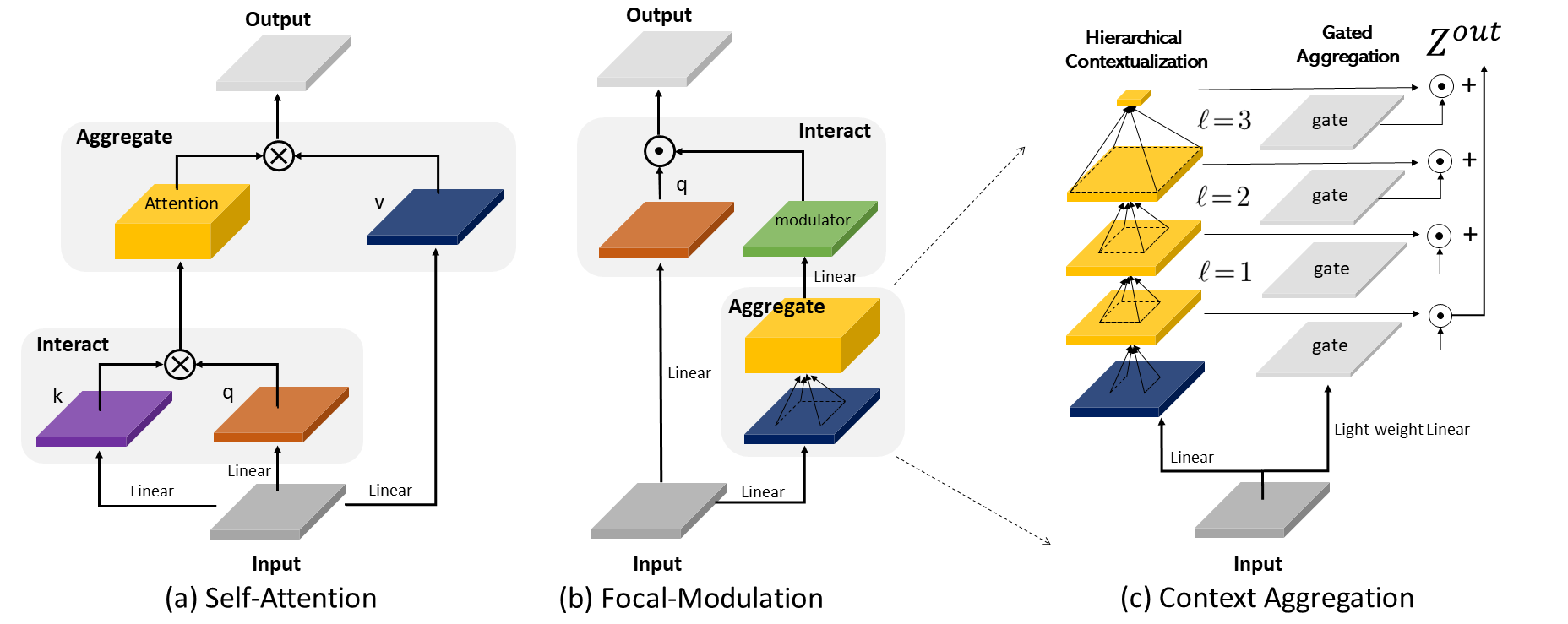

There are three steps in our FocalNets:

- Contextualization with depth-wise conv;

- Multi-scale aggregation with gating mechanism;

- Modulator derived from context aggregation and projection.
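The three steps can be sketched in NumPy for a single (H, W, C) feature map. This is a simplified illustration written for this README, not the repo's module: the weights are passed in as plain arrays, GELU uses the tanh approximation, and we assume the gates come from a linear projection of the input with one gate per focal level plus one for the global context. Details such as normalization, dropout and the surrounding block structure are omitted.

```python
import numpy as np


def gelu(x):
    """GELU, tanh approximation."""
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x ** 3)))


def depthwise_conv2d(x, kernel):
    """Zero-padded 'same' depth-wise conv (cross-correlation): x (H, W, C), kernel (k, k, C), odd k."""
    k = kernel.shape[0]
    p = k // 2
    h, w, _ = x.shape
    xp = np.pad(x, ((p, p), (p, p), (0, 0)))
    out = np.zeros_like(x, dtype=np.float64)
    for i in range(k):
        for j in range(k):
            out += xp[i:i + h, j:j + w, :] * kernel[i, j, :]  # one kernel tap per channel
    return out


def focal_modulation(x, w_in, dw_kernels, w_h, w_out):
    """x: (H, W, C). w_in: (C, 2C + L + 1). dw_kernels: L arrays of shape (k_l, k_l, C).
    w_h, w_out: (C, C). Returns an (H, W, C) array."""
    c = x.shape[-1]
    num_levels = len(dw_kernels)
    proj = x @ w_in
    q, ctx, gates = proj[..., :c], proj[..., c:2 * c], proj[..., 2 * c:]
    if gates.shape[-1] != num_levels + 1:
        raise ValueError("w_in must produce one gate per focal level plus one global gate")

    ctx_all = np.zeros_like(ctx)
    for level, kernel in enumerate(dw_kernels):
        # Step 1: hierarchical contextualization, each level convolves the previous one.
        ctx = gelu(depthwise_conv2d(ctx, kernel))
        # Step 2: gated aggregation, a per-location scalar gate weights each level.
        ctx_all += ctx * gates[..., level:level + 1]
    # Global context: average-pool the last level and broadcast it to every location.
    ctx_global = gelu(ctx.mean(axis=(0, 1), keepdims=True))
    ctx_all += ctx_global * gates[..., num_levels:]

    # Step 3: modulator from a 1x1 (per-location linear) projection, applied to the query.
    modulator = ctx_all @ w_h
    return (q * modulator) @ w_out


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    h, w, c, kernels = 8, 8, 16, [3, 5, 7]
    x = rng.standard_normal((h, w, c))
    w_in = 0.1 * rng.standard_normal((c, 2 * c + len(kernels) + 1))
    dw = [0.1 * rng.standard_normal((k, k, c)) for k in kernels]
    w_h, w_out = (0.1 * rng.standard_normal((c, c)) for _ in range(2))
    print(focal_modulation(x, w_in, dw, w_h, w_out).shape)  # (8, 8, 16)
```

Setting all gates to zero makes the modulator, and hence the output, vanish, which illustrates that the gates decide how much each context level contributes at every location.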

We visualize them one by one.

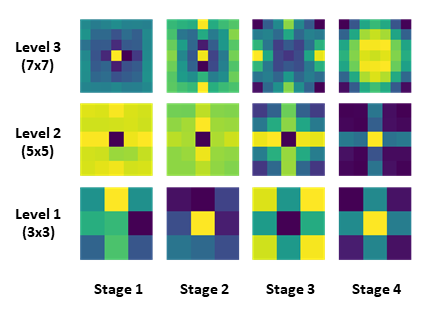

- Depth-wise convolution kernels learned in FocalNets:

Yellow indicates higher values. As the kernels show, FocalNets learn to gather more local context at earlier stages and more global context at later stages.

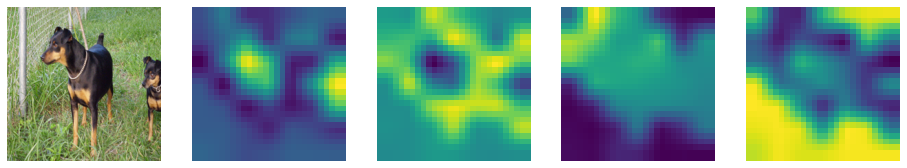

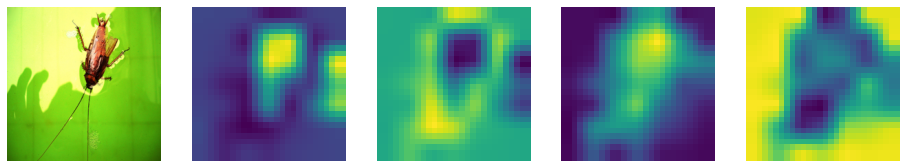

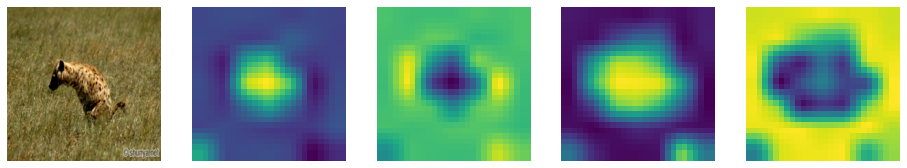

- Gating maps at the last layer of FocalNets for different input images:

From left to right: the input image, the gating maps for focal levels 1, 2 and 3, and the gating map for the global context. Our model has clearly learned where to gather context depending on the visual content at each location.

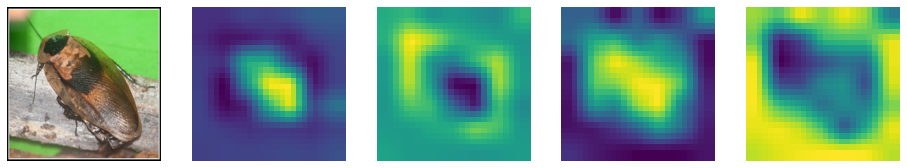

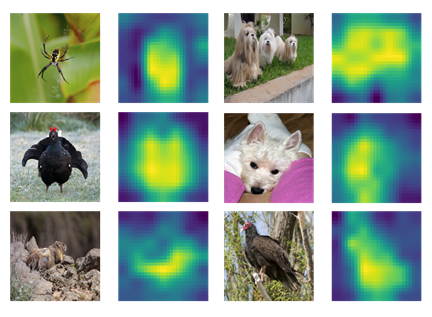

- Modulator learned in FocalNets for different input images:

The modulator derived from our model automatically learns to focus on the foreground regions.

To produce these visualizations yourself, please refer to the visualization notebook.
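For a quick look outside the notebook, gating maps and modulators can be turned into heatmaps. The sketch below is one simple option and an assumption on our part, not necessarily what the visualization notebook does: `modulator_magnitude` reduces a modulator of shape (h, w, C) to its per-location L2 norm, and `to_heatmap` min-max normalizes a 2-D map to [0, 1] and upsamples it with nearest-neighbour indexing to the image size so it can be overlaid on the input. Both helpers are hypothetical.

```python
import numpy as np


def modulator_magnitude(modulator):
    """Reduce a modulator of shape (h, w, C) to a (h, w) map of per-location L2 norms."""
    return np.linalg.norm(modulator, axis=-1)


def to_heatmap(values, out_h, out_w):
    """Min-max normalize a (h, w) map to [0, 1], then nearest-neighbour upsample to (out_h, out_w)."""
    v = np.asarray(values, dtype=np.float64)
    span = v.max() - v.min()
    v = (v - v.min()) / span if span > 0 else np.zeros_like(v)  # constant maps become all zeros
    rows = np.arange(out_h) * v.shape[0] // out_h  # source row for each output row
    cols = np.arange(out_w) * v.shape[1] // out_w  # source column for each output column
    return v[np.ix_(rows, cols)]
```

A gating map is already a (h, w) array per focal level, so it can be passed to `to_heatmap` directly; a modulator goes through `modulator_magnitude` first.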

If you find this repo useful for your project, please consider citing it with the following BibTeX entry:

@inproceedings{yang2022focal,
  title={Focal Modulation Networks},
  author={Jianwei Yang and Chunyuan Li and Xiyang Dai and Lu Yuan and Jianfeng Gao},
  booktitle={Advances in Neural Information Processing Systems (NeurIPS)},
  year={2022}
}

Our codebase is built on Swin Transformer and Focal Transformer. To achieve SoTA object detection performance, we rely heavily on the advanced detection method DINO and on advice from its authors. We thank the authors for their nicely organized code!

This project welcomes contributions and suggestions. Most contributions require you to agree to a Contributor License Agreement (CLA) declaring that you have the right to, and actually do, grant us the rights to use your contribution. For details, visit https://cla.opensource.microsoft.com.

When you submit a pull request, a CLA bot will automatically determine whether you need to provide a CLA and decorate the PR appropriately (e.g., status check, comment). Simply follow the instructions provided by the bot. You will only need to do this once across all repos using our CLA.

This project has adopted the Microsoft Open Source Code of Conduct. For more information see the Code of Conduct FAQ or contact opencode@microsoft.com with any additional questions or comments.

This project may contain trademarks or logos for projects, products, or services. Authorized use of Microsoft trademarks or logos is subject to and must follow Microsoft's Trademark & Brand Guidelines. Use of Microsoft trademarks or logos in modified versions of this project must not cause confusion or imply Microsoft sponsorship. Any use of third-party trademarks or logos is subject to those third parties' policies.