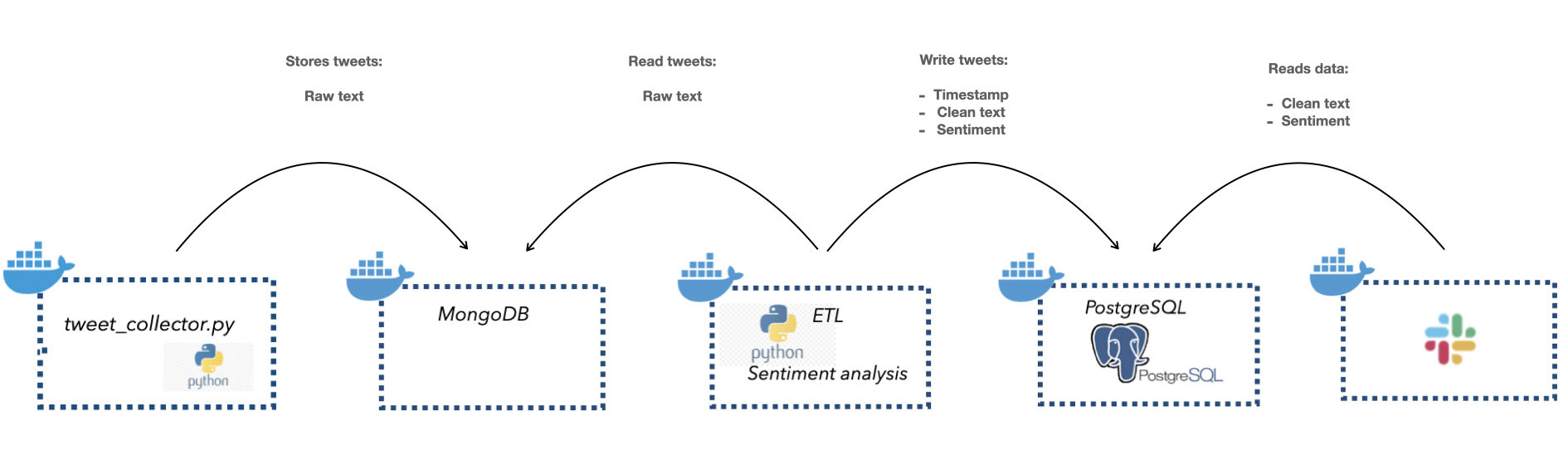

There are 5 steps in the data pipeline:

- Extract tweets with the Tweepy API

- Load the tweets into a MongoDB database

- Extract the tweets from MongoDB, perform sentiment analysis on them, and load the transformed data into a Postgres database (ETL job)

- Store the tweets and their corresponding sentiment assessments in the Postgres database

- Extract the data from the Postgres database and post it in a Slack channel with a Slack bot
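The ETL job in the middle of the pipeline can be illustrated with a minimal, self-contained sketch. This is not the repository's code: a plain list of dicts stands in for the MongoDB collection, an in-memory SQLite database stands in for Postgres, and a toy word-list scorer stands in for whatever sentiment library the project actually uses. All function, table, and column names here are hypothetical.

```python
import sqlite3

# Toy lexicon: an assumption standing in for a real sentiment library.
POSITIVE = {"good", "great", "love", "happy"}
NEGATIVE = {"bad", "awful", "hate", "sad"}


def score_sentiment(text: str) -> float:
    """Return (positive - negative) / matched words, or 0.0 if no word matches."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    total = pos + neg
    return 0.0 if total == 0 else (pos - neg) / total


def extract(collection):
    """Yield raw tweet documents (a list of dicts stands in for MongoDB)."""
    yield from collection


def transform(tweet: dict) -> dict:
    """Attach a sentiment score to a single tweet."""
    return {"text": tweet["text"], "sentiment": score_sentiment(tweet["text"])}


def load(conn: sqlite3.Connection, rows: list) -> None:
    """Write transformed rows to a SQL table (SQLite stands in for Postgres)."""
    conn.execute("CREATE TABLE IF NOT EXISTS tweets (text TEXT, sentiment REAL)")
    conn.executemany(
        "INSERT INTO tweets (text, sentiment) VALUES (:text, :sentiment)", rows
    )
    conn.commit()


def run_etl(collection, conn: sqlite3.Connection) -> None:
    """Run extract, transform, and load in sequence."""
    load(conn, [transform(tweet) for tweet in extract(collection)])


raw_tweets = [
    {"text": "I love Docker, it is great!"},
    {"text": "This bug is awful."},
    {"text": "Deploying today."},
]
conn = sqlite3.connect(":memory:")
run_etl(raw_tweets, conn)
rows = conn.execute("SELECT text, sentiment FROM tweets ORDER BY rowid").fetchall()
```

Keeping `extract`, `transform`, and `load` as separate functions means each stand-in can be swapped for a real client without touching the other two stages.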

To run the pipeline:

- Install Docker on your machine

- Clone the repository: `git clone https://github.com/miladbehrooz/Dockerized_Data_Pipeline.git`

- Get credentials for the Twitter API and insert them in `tweet_collector/credentials.py`

- Get credentials for the Slack bot and insert them in `slack_bot/credentials.py`

- Run `docker-compose build`, then `docker-compose up` in a terminal
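As a rough illustration of the credentials step, a `credentials.py` file might look like the sketch below. The variable names are assumptions, not taken from the repository; check which names the collector and the Slack bot actually import before filling them in. The small helper is also hypothetical and only shows one way to fail fast when a placeholder was left unchanged.

```python
# Hypothetical credentials.py layout; the real variable names may differ.
PLACEHOLDER = "<fill-me-in>"

# Assumed names: replace the placeholders with your own secrets and
# keep the filled-in file out of version control.
TWITTER_BEARER_TOKEN = PLACEHOLDER
SLACK_WEBHOOK_URL = PLACEHOLDER


def missing_credentials(values: dict) -> list:
    """Return the sorted names of credentials that are empty or still placeholders."""
    return sorted(
        name for name, value in values.items() if not value or value == PLACEHOLDER
    )


# Example check; with the placeholders above, both names are reported.
unset = missing_credentials(
    {
        "TWITTER_BEARER_TOKEN": TWITTER_BEARER_TOKEN,
        "SLACK_WEBHOOK_URL": SLACK_WEBHOOK_URL,
    }
)
```

Running a check like this before `docker-compose up` can surface a forgotten credential earlier than a failed API call inside a container would.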