This is the repo of Stable Diffusion Segmentation for Biomedical Images with Single-step Reverse Process.

By Tianyu Lin, Zhiguang Chen, Zhonghao Yan, Weijiang Yu, Fudan Zheng

- 06/27: The paper of SDSeg has been pre-released on

- 06/17: 🎉🥳 SDSeg has been accepted by MICCAI2024! Our paper will be available soon.

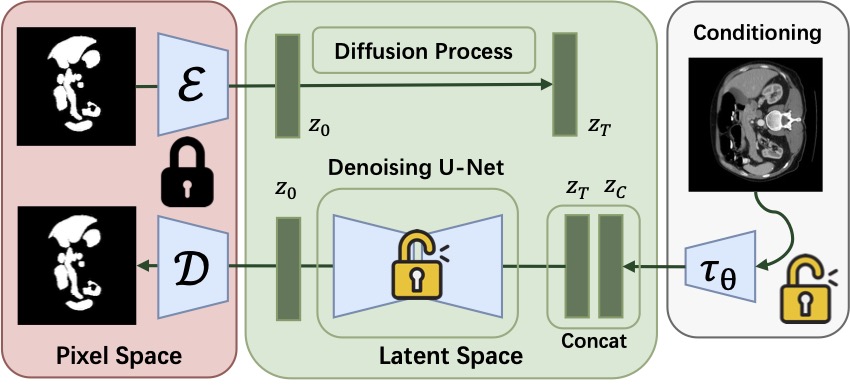

SDSeg is built on Stable Diffusion (V1), with a downsampling-factor 8 autoencoder, a denoising UNet, and a trainable vision encoder (with the same architecture as the encoder in the f=8 autoencoder).
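To make the f=8 latent space and the single-step reverse process more concrete, here is a minimal NumPy sketch. It assumes a 4-channel latent (as in the SD V1 autoencoder), a 256x256 input, and an illustrative `alpha_bar_t` value, none of which are stated in this README. The `estimate_z0` helper is the standard DDPM identity for recovering a clean latent from a noisy one and a noise prediction; it is an illustration, not the SDSeg implementation, which lives in `./ldm/models/diffusion/ddpm.py`.

```python
import numpy as np


def latent_shape(height, width, factor=8, channels=4):
    """Latent shape for an image of size (height, width).

    factor=8 matches the f=8 autoencoder; channels=4 is an assumption.
    """
    if height % factor or width % factor:
        raise ValueError("image size must be divisible by the downsampling factor")
    return (channels, height // factor, width // factor)


def estimate_z0(z_t, eps_pred, alpha_bar_t):
    """One-step estimate of the clean latent (standard DDPM identity)."""
    return (z_t - np.sqrt(1.0 - alpha_bar_t) * eps_pred) / np.sqrt(alpha_bar_t)


rng = np.random.default_rng(0)
shape = latent_shape(256, 256)           # 256x256 image -> (4, 32, 32) latent
z0 = rng.standard_normal(shape)          # stand-in for a clean segmentation latent
eps = rng.standard_normal(shape)         # noise added by the forward process
alpha_bar_t = 0.3                        # illustrative cumulative noise level

# Forward process: mix the clean latent with noise.
z_t = np.sqrt(alpha_bar_t) * z0 + np.sqrt(1.0 - alpha_bar_t) * eps

# If the UNet predicted the noise perfectly, one step recovers z0 exactly.
z0_hat = estimate_z0(z_t, eps, alpha_bar_t)
print(shape, np.allclose(z0_hat, z0))
```

In practice the noise prediction comes from the denoising UNet, so the estimate is only as good as that prediction.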

A suitable conda environment named `sdseg` can be created and activated with:

conda env create -f environment.yaml

conda activate sdseg

Then, install some dependencies by:

pip install -e git+https://github.com/CompVis/taming-transformers.git@master#egg=taming-transformers

pip install -e git+https://github.com/openai/CLIP.git@main#egg=clip

pip install -e .

Solve GitHub connection issues when downloading taming-transformers or clip

After creating and entering the `sdseg` environment:

- create a `src` folder and enter it:

  mkdir src

  cd src

- download the following codebases as `*.zip` files and upload them to `src/`:
  - https://github.com/CompVis/taming-transformers, `taming-transformers-master.zip`
  - https://github.com/openai/CLIP, `CLIP-main.zip`

- unzip and install taming-transformers:

  unzip taming-transformers-master.zip

  cd taming-transformers-master

  pip install -e .

  cd ..

- unzip and install clip:

  unzip CLIP-main.zip

  cd CLIP-main

  pip install -e .

  cd ..

- install latent-diffusion:

  cd ..

  pip install -e .

Then you're good to go!
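A quick way to confirm that the editable installs succeeded is to check that the packages can be found by Python. The sketch below assumes the import names are `taming`, `clip`, and `ldm`; adjust the tuple if your checkout exposes different names.

```python
import importlib.util


def check_packages(names=("taming", "clip", "ldm")):
    """Map each top-level package name to True if Python can locate it."""
    return {name: importlib.util.find_spec(name) is not None for name in names}


if __name__ == "__main__":
    for name, found in check_packages().items():
        print(f"{name}: {'ok' if found else 'MISSING'}")
```

Run it inside the activated `sdseg` environment; any `MISSING` entry points to the install step that needs to be repeated.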

The image data should be placed in `./data/`, while the dataloaders are in `./ldm/data/`.

We evaluate SDSeg on the following medical image datasets:

| Dataset | URL | Preprocess |
|---|---|---|
| BTCV | This URL, download the Abdomen/RawData.zip. | Use the code in ./data/synapse/nii2format.py |
| STS-3D | This URL, download the labelled.zip. | Use the code in ./data/sts3d/sts3d_preprocess.py |
| REFUGE2 | This URL | Following this repo |
| CVC-ClinicDB | This URL | None |
| Kvasir-SEG | This URL | None |

SDSeg uses pre-trained weights from SD to initialize before training.

For pre-trained weights of the autoencoder and conditioning model, run

bash scripts/download_first_stages_f8.sh

For pre-trained weights of the denoising UNet, run

bash scripts/download_models_lsun_churches.sh

The model weights trained on medical image datasets will be available soon.

Take the REFUGE2 dataset as an example, run

nohup python -u main.py --base configs/latent-diffusion/refuge2-ldm-kl-8.yaml -t --gpus 3, --name refuge_training > nohup/refuge_training.log 2>&1 &

You can check the training log by

tail -f nohup/experiment_name.log

Also, TensorBoard logging is enabled automatically. You can start a TensorBoard session with `--logdir=./logs/`.
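A note on `--gpus 3,` in the training command: the trailing comma matters. In the PyTorch Lightning convention that the upstream latent-diffusion code relies on, a comma-separated string is read as a list of device indices, so `3,` most likely means "use GPU number 3" and not "use three GPUs". The sketch below is a simplified stand-in that illustrates this reading; `parse_gpus` is a hypothetical helper, not the parser used by `main.py` or Lightning.

```python
def parse_gpus(gpus):
    """Return the device indices named by a comma-separated --gpus string.

    Hypothetical helper for illustration only.
    """
    return [int(tok) for tok in gpus.strip(",").split(",") if tok.strip()]


print(parse_gpus("3,"))     # [3]
print(parse_gpus("0,1,"))   # [0, 1]
```

To train on several devices under this reading, list each index, for example `--gpus 0,1,`.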

STORAGE WARNING: A single SDSeg model checkpoint is around 5GB. By default, only the last model and the model with the highest Dice score are saved. If you have tons of storage space, feel free to save more models by increasing the `save_top_k` parameter in `main.py`.
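Since checkpoint selection depends on the Dice score, here is a minimal sketch of the binary Dice coefficient for reference. The smoothing term `eps` and the binary-mask input are assumptions; the metric actually used during training is computed in the `log_dice` method of `LatentDiffusion` and may differ in details such as thresholding.

```python
import numpy as np


def dice_score(pred, target, eps=1e-7):
    """Dice coefficient 2|A∩B| / (|A| + |B|) for two binary masks."""
    pred = np.asarray(pred).astype(bool)
    target = np.asarray(target).astype(bool)
    intersection = np.logical_and(pred, target).sum()
    # eps keeps the ratio defined when both masks are empty.
    return (2.0 * intersection + eps) / (pred.sum() + target.sum() + eps)


pred = np.array([[1, 1, 0],
                 [0, 1, 0]])
target = np.array([[1, 0, 0],
                   [0, 1, 1]])
# Two overlapping pixels, three foreground pixels in each mask: 4 / 6.
print(round(dice_score(pred, target), 3))
```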

After training an SDSeg model, you should manually modify the run paths in `scripts/slice2seg.py`, and begin an inference process like

python -u scripts/slice2seg.py --dataset refuge2

To conduct the stability evaluation mentioned in the paper, you can start the test by

python -u scripts/slice2seg.py --dataset refuge2 --times 10 --save_results

This will save the results of 10 inference runs in the `./outputs/` folder. To run the stability evaluation, open `scripts/stability_evaluation.ipynb`, and modify the path for the segmentation results. Then, click Run All and enjoy.
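As a rough idea of what comparing repeated inference runs can look like, the sketch below measures how much a set of predicted masks agree with each other using pairwise Dice. This is an assumption for illustration: the metrics in `scripts/stability_evaluation.ipynb` are not described in this README and may be different, and the masks here are synthetic stand-ins for the files saved in `./outputs/`.

```python
from itertools import combinations

import numpy as np


def pairwise_dice(masks, eps=1e-7):
    """Mean and standard deviation of Dice over all pairs of binary masks."""
    masks = [np.asarray(m).astype(bool) for m in masks]
    scores = []
    for a, b in combinations(masks, 2):
        inter = np.logical_and(a, b).sum()
        scores.append((2.0 * inter + eps) / (a.sum() + b.sum() + eps))
    return float(np.mean(scores)), float(np.std(scores))


rng = np.random.default_rng(0)
base = rng.random((64, 64)) > 0.5          # synthetic "prediction"
runs = [base.copy() for _ in range(10)]    # ten repeated runs
runs[0][0, 0] = ~runs[0][0, 0]             # one run differs by a single pixel

mean_dice, std_dice = pairwise_dice(runs)
print(f"mean pairwise Dice: {mean_dice:.5f}, std: {std_dice:.5f}")
```

A mean close to 1 with a small standard deviation indicates that repeated runs produce nearly identical segmentations.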

Training related:

- SDSeg model: `./ldm/models/diffusion/ddpm.py`, in the class `LatentDiffusion`.
- Experiment configurations: `./configs/latent-diffusion`

Inference related:

- Inference starting script: `./scripts/slice2seg.py`
- Inference implementation: `./ldm/models/diffusion/ddpm.py`, under the `log_dice` method of `LatentDiffusion`.

Dataset related:

- Dataset storage: `./data/`
- Dataloader files: `./ldm/data/`

If you find our work useful, please cite:

@inproceedings{lin2024stable,

title={Stable Diffusion Segmentation for Biomedical Images with Single-step Reverse Process},

author={Lin, Tianyu and Chen, Zhiguang and Yan, Zhonghao and Yu, Weijiang and Zheng, Fudan},

booktitle={International Conference on Medical Image Computing and Computer-Assisted Intervention},

year={2024},

organization={Springer}

}

- Organizing the inference code. (Toooo redundant right now.)

- Reimplement SDSeg in OOP. (Elegance is the key!)

- Add README for multi-class segmentation.

- Release model weights.

- Reimplement using diffusers.